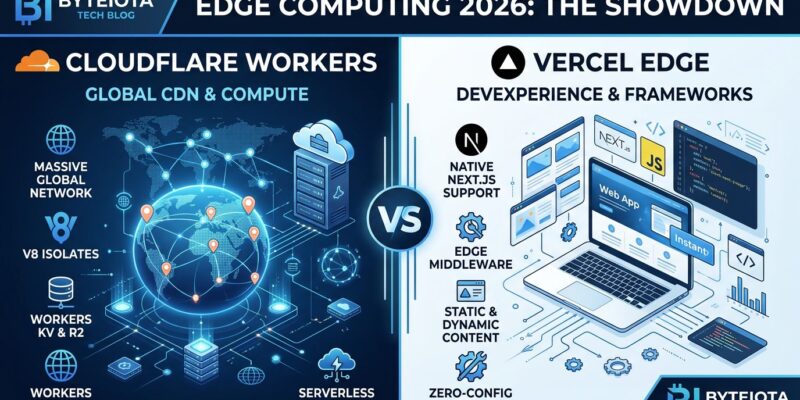

Edge computing in 2026 isn’t about choosing a single platform—it’s about knowing when to use each. Cloudflare Workers dominates global distribution with sub-5ms cold starts across 330+ cities, while Vercel Edge Functions leverages Fluid Compute to deliver 1.2x to 5x faster server-side rendering for React applications. The performance gap between these platforms comes down to architecture: Workers uses V8 isolates for 100x faster cold starts than containers, while Vercel optimizes for compute-intensive SSR throughput with 2 vCPU and 4GB RAM configurations.

This isn’t a minor difference. Choosing the wrong platform can mean 150ms+ latency gaps, failed Core Web Vitals targets, and cost inefficiencies at scale. With 78% of engineering teams now running hybrid architectures combining edge, containers, and serverless, understanding each platform’s strengths is critical for production architecture decisions.

Cloudflare Workers: Global Distribution Champion

Cloudflare Workers runs V8 isolates across 330+ cities compared to Vercel’s 19 regions, achieving sub-5ms cold starts—100x faster than container-based serverless platforms like AWS Lambda that suffer 100-1000ms cold start penalties. This architectural advantage comes from eliminating OS initialization layers entirely: Workers creates lightweight 10KB isolates with 2MB base memory overhead, while containers require 30-50MB minimum with full operating system baggage.

The result is tenant density that containers can’t match: one edge node hosts 10-20x more tenants with isolates than with containers. Lightweight sandboxes also hold up at production scale. Fermyon, which runs WebAssembly sandboxes rather than V8 isolates, handles 75 million requests per second on its edge platform, and Cloudflare Workers reaches 95% of users within 50ms globally. The cost structure reflects this efficiency: $0.30 per additional million requests beyond the plan’s included allotment, a separate charge for CPU time, zero egress fees, and no per-seat charges.

For authentication, API routing, and rate limiting—where global distribution and latency matter more than raw compute power—Workers is the clear choice. Here’s JWT validation at the edge:

```javascript
// Cloudflare Workers: JWT validation at the edge (ES module syntax)
export default {
  async fetch(request, env) {
    // Expect "Authorization: Bearer <token>"; a missing header yields null
    const auth = request.headers.get('Authorization') ?? ''
    const token = auth.startsWith('Bearer ') ? auth.slice('Bearer '.length) : null

    // Reject at the nearest edge location before the request reaches origin.
    // validateJWT is a hypothetical helper (not shown); JWT_SECRET is an assumed secret binding.
    if (!token || !(await validateJWT(token, env.JWT_SECRET))) {
      return new Response('Unauthorized', { status: 401 })
    }

    // Forward the authenticated request to origin
    return fetch(request)
  },
}
```

This pattern works because authentication is stateless, latency-sensitive, and benefits from global distribution. But it’s not the right tool for every workload.
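The Worker leaves `validateJWT` undefined. One way to fill it in, assuming HS256-signed tokens and a shared secret, is the Web Crypto API (`crypto.subtle`), which both Workers and recent Node.js versions expose. `verifyHs256` and its parameters are hypothetical names, and the sketch checks only the `alg` header, the signature, and `exp`; claims such as `aud`, `iss`, and `nbf` would need their own checks, and a production Worker may be better served by a maintained library such as `jose`.

```typescript
// Minimal HS256 JWT verification with the Web Crypto API (crypto.subtle).
// Assumptions: HS256 tokens signed with a shared secret; only `alg`, the signature,
// and `exp` are checked (aud/iss/nbf would need their own checks).

// Decode base64url (JWT alphabet, padding stripped) into raw bytes.
function base64UrlToBytes(input: string): Uint8Array {
  const b64 = input.replace(/-/g, "+").replace(/_/g, "/");
  const padded = b64 + "=".repeat((4 - (b64.length % 4)) % 4);
  return Uint8Array.from(atob(padded), (c) => c.charCodeAt(0));
}

// Hypothetical helper: resolves true only for a well-formed, correctly signed, unexpired token.
async function verifyHs256(
  token: string,
  secret: string,
  nowSeconds: number = Math.floor(Date.now() / 1000),
): Promise<boolean> {
  const parts = token.split(".");
  if (parts.length !== 3) return false;
  const [headerB64, payloadB64, signatureB64] = parts;

  let header: { alg?: string } | null;
  let payload: { exp?: number } | null;
  let signature: Uint8Array;
  try {
    const decoder = new TextDecoder();
    header = JSON.parse(decoder.decode(base64UrlToBytes(headerB64)));
    payload = JSON.parse(decoder.decode(base64UrlToBytes(payloadB64)));
    signature = base64UrlToBytes(signatureB64);
  } catch {
    return false; // malformed base64url or JSON
  }

  // Pin the algorithm so a token claiming "alg": "none" (or anything else) is rejected.
  if (header?.alg !== "HS256") return false;

  const encoder = new TextEncoder();
  const key = await crypto.subtle.importKey(
    "raw",
    encoder.encode(secret),
    { name: "HMAC", hash: "SHA-256" },
    false,
    ["verify"],
  );
  // HMAC-SHA-256 over "<header>.<payload>", checked by crypto.subtle.verify.
  const signatureOk = await crypto.subtle.verify(
    "HMAC",
    key,
    signature,
    encoder.encode(`${headerB64}.${payloadB64}`),
  );
  if (!signatureOk) return false;

  // A missing or past `exp` claim counts as expired.
  return typeof payload?.exp === "number" && payload.exp > nowSeconds;
}
```

In a Worker, a function like this could serve as `validateJWT`, with the secret supplied through a Worker secret rather than hard-coded in source.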

Vercel Edge: SSR Throughput Leader

Vercel’s Fluid Compute outperforms Cloudflare Workers by 1.2x to 5x for server-side rendering workloads, averaging 2.55x faster across typical SSR benchmarks. This advantage comes from resource allocation: Vercel configures 2 vCPU and 4GB RAM for SSR-intensive tasks, while Workers uses shared CPU and 128MB RAM. Fluid Compute also keeps execution contexts warm across requests, achieving near-zero cold starts for active functions.
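Warm execution contexts pay off most when a function does expensive setup once and reuses it across requests. The sketch below shows that general pattern, assuming the platform reuses an instance’s module scope between requests (true for a warm instance, never guaranteed, so initialization stays lazy). `loadTemplates`, `getTemplates`, and `handleRequest` are hypothetical names rather than a Vercel API, and the template stands in for real setup work such as compiling schemas or creating an HTTP client.

```typescript
// Module-scope state: created once per instance, reused while the instance stays warm.
// Assumption: the platform keeps this module loaded between requests; a cold start resets it.
let templateCache: Promise<Map<string, string>> | null = null;
let initCount = 0; // counts initializations, for illustration only

// Hypothetical expensive setup step.
async function loadTemplates(): Promise<Map<string, string>> {
  initCount += 1;
  return new Map([["home", "<h1>Hello</h1>"]]);
}

// Lazily initialize once; concurrent requests share the same in-flight promise.
function getTemplates(): Promise<Map<string, string>> {
  if (templateCache === null) {
    templateCache = loadTemplates().catch((err) => {
      templateCache = null; // allow a retry on the next request if setup failed
      throw err;
    });
  }
  return templateCache;
}

// Hypothetical handler using the Web-standard Request/Response types.
async function handleRequest(_req: Request): Promise<Response> {
  const templates = await getTemplates();
  return new Response(templates.get("home") ?? "", {
    headers: { "content-type": "text/html; charset=utf-8" },
  });
}
```

Caching the in-flight promise, rather than the resolved value, matters when one instance serves concurrent requests: both callers await the same setup instead of running it twice.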

The difference matters for React-heavy applications. Guillermo Rauch, Vercel’s CEO, explains the platform’s evolution: “We gave a very, very earnest try to Workers when we explored the edge runtime / world. We had to migrate off for technical reasons”—referring specifically to SSR throughput needs. Vercel’s framework integration with Next.js includes per-route runtime selection, letting developers choose edge for speed-critical routes and serverless for capability-intensive operations.

Here’s a Vercel Edge function optimized for SSR:

```tsx
// Vercel Edge: React SSR in a Next.js API route (Pages Router)
import { renderToString } from 'react-dom/server'
import MyComponent from '../../components/MyComponent' // hypothetical component path

export const config = {
  runtime: 'edge', // run this route on Vercel's Edge runtime
}

export default async function handler(req: Request) {
  // Server-side render the React component to an HTML string
  const html = renderToString(<MyComponent />)

  return new Response(html, {
    headers: { 'content-type': 'text/html; charset=utf-8' },
  })
}
```

If your app is Next.js with compute-intensive server rendering, Vercel Edge wins despite having fewer global locations. The SSR throughput advantage justifies the premium for these specific workloads.

The Hybrid Architecture Reality

Here’s the truth most platform evangelists won’t tell you: 78% of engineering teams in 2026 run hybrid architectures, not single-platform deployments. They combine edge, containers, and serverless to optimize for specific workloads. The winning strategy deploys authentication and routing to edge, business logic to containers, and background jobs to serverless. This approach achieves 30-48% cost reduction compared to forcing everything onto a single platform.

Workload placement matters because CPU time changes the cost equation dramatically. Ten billion monthly requests requiring 15ms of CPU time each cost $5,969 on Cloudflare Workers versus $6,557 on AWS Lambda, so Workers wins. But bump that CPU time to 80ms and Lambda becomes cheaper. The question isn’t “which platform is better?” but “which workloads go where?”
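The cost claim is easier to reason about with the pricing formulas written out. The sketch below assumes list prices at the time of writing (Workers Standard: a $5 monthly base that includes 10 million requests and 30 million CPU-ms, then $0.30 per million requests and $0.02 per million CPU-ms; Lambda x86: $0.20 per million requests and $0.0000166667 per GB-second) and ignores free tiers, Lambda volume discounts, and egress. Treat every constant as an assumption to check against the providers’ current pricing pages. The structural point is that Workers bills CPU time while Lambda bills wall-clock duration multiplied by configured memory, so the crossover depends heavily on Lambda memory size and on how much of each request is spent waiting on I/O.

```typescript
// Rough monthly cost model. Every price below is an assumption taken from public
// list prices at the time of writing; verify against current pricing pages.
interface Workload {
  requestsPerMonth: number;
  cpuMsPerRequest: number; // Workers bills CPU time
  durationMsPerRequest: number; // Lambda bills wall-clock duration
  lambdaMemoryGb: number; // Lambda duration is priced per GB-second
}

const WORKERS = {
  baseUsd: 5,
  includedRequests: 10_000_000,
  includedCpuMs: 30_000_000,
  usdPerMillionRequests: 0.3,
  usdPerMillionCpuMs: 0.02,
};

const LAMBDA = {
  usdPerMillionRequests: 0.2,
  usdPerGbSecond: 0.0000166667,
};

function workersCostUsd(w: Workload): number {
  const extraRequests = Math.max(0, w.requestsPerMonth - WORKERS.includedRequests);
  const extraCpuMs = Math.max(0, w.requestsPerMonth * w.cpuMsPerRequest - WORKERS.includedCpuMs);
  return (
    WORKERS.baseUsd +
    (extraRequests / 1e6) * WORKERS.usdPerMillionRequests +
    (extraCpuMs / 1e6) * WORKERS.usdPerMillionCpuMs
  );
}

function lambdaCostUsd(w: Workload): number {
  const gbSeconds = w.requestsPerMonth * (w.durationMsPerRequest / 1000) * w.lambdaMemoryGb;
  return (w.requestsPerMonth / 1e6) * LAMBDA.usdPerMillionRequests + gbSeconds * LAMBDA.usdPerGbSecond;
}
```

With these assumed prices, ten billion requests at 15ms of CPU each come to roughly $6,001 on Workers, in the same range as the figure quoted above. The Lambda side swings widely with `lambdaMemoryGb` and `durationMsPerRequest`, which the comparison above does not state, so plug in your own measured duration and memory setting before drawing conclusions.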

Next.js supports this reality with per-route runtime selection. Deploy your authentication routes to edge for sub-5ms validation, your SSR routes to Fluid Compute for throughput, and your image processing to serverless where CPU time doesn’t kill your budget. The hybrid pattern is the winner, not the platforms themselves.

Decision Framework: When to Use Each

Platform choice depends on use case and performance profile. Choose Cloudflare Workers for global user bases with API-heavy, latency-sensitive workloads: authentication, routing, rate limiting, and high-volume edge logic. The 330+ city distribution and sub-5ms cold starts make Workers ideal when every millisecond counts. Durable Objects enable stateful coordination for WebSocket connections and global rate limiting—capabilities Vercel doesn’t offer.
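Global rate limiting is where Durable Objects help: every request addressed to the same object ID reaches a single instance, so a counter can live there without cross-region races. The sketch below is only the counting logic such an object might hold, written as a plain TypeScript class. `FixedWindowLimiter` is a hypothetical name, and the Durable Object class, its persistent storage, and the Worker binding are left out, so this state is in-memory only.

```typescript
// Fixed-window rate limiter: the kind of per-key state a single Durable Object
// instance could own. Hypothetical class; Durable Object wiring, persistent
// storage, and bindings are intentionally omitted (state lives in memory only).
class FixedWindowLimiter {
  private readonly limit: number; // max requests allowed per window
  private readonly windowMs: number; // window length in milliseconds
  private windowStartMs = -Infinity; // forces a fresh window on the first call
  private count = 0;

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns whether this request is allowed and how many remain in the current window.
  check(nowMs: number): { allowed: boolean; remaining: number } {
    // Start a new window once the current one has elapsed.
    if (nowMs - this.windowStartMs >= this.windowMs) {
      this.windowStartMs = nowMs;
      this.count = 0;
    }
    if (this.count >= this.limit) {
      return { allowed: false, remaining: 0 };
    }
    this.count += 1;
    return { allowed: true, remaining: this.limit - this.count };
  }
}
```

In a Worker, one would typically derive the object ID from the client key (for example `env.LIMITER.idFromName(ip)`, where `LIMITER` is an assumed binding name) and run `check` inside that object; since a Durable Object handles events for one ID on a single thread, no extra locking is needed. A fixed window is the simplest choice but allows short bursts at window boundaries; a sliding window or token bucket smooths that out.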

Choose Vercel Edge for Next.js or React applications with compute-intensive server-side rendering. The 1.2x to 5x SSR performance advantage, framework integration, and developer experience justify the premium when rendering is your bottleneck. If your team is invested in the Vercel ecosystem and prioritizes developer velocity, the platform choice is clear.

But avoid edge functions entirely for certain workloads. Both platforms are a poor fit for stateful database operations that need long-lived connections, heavy computations exceeding 80ms of CPU time, operations that need filesystem access, and workloads that depend on third-party SDKs relying on Node.js-specific APIs. Use containers or serverless for these instead: edge runtimes have architectural constraints that rule out these use cases.
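This framework, combined with the hybrid split from the previous section, can be condensed into a rough placement function. It is a hypothetical sketch: `WorkloadProfile`, `placeWorkload`, and the tier names are illustrative, and the 80ms CPU threshold is the article’s rule of thumb rather than a platform limit.

```typescript
// Hypothetical placement helper encoding the decision framework above.
type Tier = "cloudflare-workers" | "vercel-ssr" | "container" | "serverless";

interface WorkloadProfile {
  cpuMsPerRequest: number;
  needsFilesystem: boolean;
  needsLongLivedDbConnection: boolean;
  needsNodeOnlySdk: boolean; // SDK that relies on Node.js-specific APIs
  isBackgroundJob: boolean;
  isReactSsr: boolean;
  latencySensitive: boolean; // e.g. auth, routing, rate limiting
}

const EDGE_CPU_BUDGET_MS = 80; // rule of thumb from the text, not a hard platform limit

function placeWorkload(w: WorkloadProfile): Tier {
  // Background jobs go to serverless, per the hybrid split.
  if (w.isBackgroundJob) return "serverless";
  // Compute-intensive React SSR goes to Vercel.
  if (w.isReactSsr) return "vercel-ssr";
  // Anything that hits an edge constraint stays off the edge.
  const edgeUnfit =
    w.needsFilesystem ||
    w.needsLongLivedDbConnection ||
    w.needsNodeOnlySdk ||
    w.cpuMsPerRequest > EDGE_CPU_BUDGET_MS;
  if (edgeUnfit) return "container";
  // Latency-sensitive, lightweight logic goes to the edge.
  if (w.latencySensitive) return "cloudflare-workers";
  // Remaining business logic defaults to containers.
  return "container";
}
```

The rule order is a design choice: hard constraints are checked before latency preferences, so a latency-sensitive route that also needs a long-lived database connection still lands in a container.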

Real-World Performance Impact

Edge computing reduces latency from 150-300ms for origin servers to under 50ms globally. Edge-side rendering typically cuts Time to First Byte by 60-80% compared to traditional server rendering. This isn’t theoretical: nothing can paint before the first byte arrives, so TTFB feeds directly into Core Web Vitals such as Largest Contentful Paint, which Google uses as a ranking signal, and it moves conversion and bounce rates as well. Production deployments prove viability: Cloudflare Workers handles millions of requests with sub-millisecond cold starts, Fastly Compute@Edge serves 10,000+ production users, and Vercel is the dominant host for Next.js deployments.
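To check TTFB gains on your own routes, a rough client-side measurement is to time a request until `fetch` resolves, which happens once response headers arrive and before the body is read. This approximates TTFB including DNS, TCP, and TLS setup; it is not identical to the browser’s Navigation Timing `responseStart` metric. `measureTtfb` and `medianTtfb` are hypothetical helpers, and the fetch function and clock are injectable only so the sketch can be tested offline.

```typescript
// Approximate TTFB: fetch() resolves when response headers arrive, before the body is read.
type FetchLike = (url: string) => Promise<Response>;

async function measureTtfb(
  url: string,
  fetchImpl: FetchLike = (u) => fetch(u),
  now: () => number = () => performance.now(),
): Promise<{ status: number; ttfbMs: number }> {
  const start = now();
  const res = await fetchImpl(url);
  const ttfbMs = now() - start;
  await res.body?.cancel(); // only the headers were needed; discard the body
  return { status: res.status, ttfbMs };
}

// Median of several runs smooths out network jitter.
async function medianTtfb(
  url: string,
  runs = 5,
  fetchImpl?: FetchLike,
  now?: () => number,
): Promise<number> {
  const samples: number[] = [];
  for (let i = 0; i < runs; i++) {
    samples.push((await measureTtfb(url, fetchImpl, now)).ttfbMs);
  }
  samples.sort((a, b) => a - b);
  const mid = Math.floor(samples.length / 2);
  return samples.length % 2 ? samples[mid] : (samples[mid - 1] + samples[mid]) / 2;
}
```

Running `medianTtfb` against the same route served from the edge and from the origin, from a few geographic locations, gives a like-for-like comparison instead of a single noisy sample.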

These latency improvements translate to business metrics that matter. Faster TTFB improves SEO rankings. Lower global latency reduces bounce rates. Better Core Web Vitals scores increase conversion rates. The 150ms to 50ms improvement isn’t just a benchmark—it’s a competitive advantage.

Common Pitfalls to Avoid

Edge computing projects fail when teams migrate the wrong workloads. Edge functions lack direct database connections, filesystem access, or support for long-lived connections. Deploying stateful database operations to edge causes connection limit issues and latency regressions. Keep database queries, third-party SDK calls, and image processing in containers or serverless.

The other critical mistake is treating connectivity loss as an exception. Edge locations have higher downtime rates than cloud regions—network partitions are expected, not exceptional. Design for intermittent connectivity with local caching and graceful degradation. The “move fast, fix in production” approach is dangerous at edge scale: failed deployments can brick entire fleets, making devices inoperable.
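Designing for intermittent connectivity usually means bounding how long you wait on an upstream and serving the last good response when it fails. A minimal sketch, assuming a per-instance in-memory cache (`lastGood`) and a hypothetical `fetchWithFallback` helper; a production edge function would more likely persist the fallback in the platform’s cache or key-value store, and should flag stale responses so clients can tell.

```typescript
// Last-known-good cache: per-instance memory only (assumption; a real deployment
// might back this with a platform cache or KV store instead).
const lastGood = new Map<string, { body: string; storedAt: number }>();

type FetchFn = (url: string, init?: { signal?: AbortSignal }) => Promise<Response>;

async function fetchWithFallback(
  url: string,
  timeoutMs: number,
  fetchImpl: FetchFn = (u, init) => fetch(u, init),
): Promise<{ body: string; stale: boolean }> {
  // Abort the upstream call if it takes longer than timeoutMs.
  const controller = new AbortController();
  const timer = setTimeout(() => controller.abort(), timeoutMs);
  try {
    const res = await fetchImpl(url, { signal: controller.signal });
    if (!res.ok) throw new Error(`upstream status ${res.status}`);
    const body = await res.text();
    lastGood.set(url, { body, storedAt: Date.now() });
    return { body, stale: false };
  } catch (err) {
    // Network partition, timeout, or upstream error: degrade to the cached copy if present.
    const cached = lastGood.get(url);
    if (cached) return { body: cached.body, stale: true };
    throw err; // nothing cached: let the caller render an explicit fallback
  } finally {
    clearTimeout(timer);
  }
}
```

Treating the failure path as a normal branch, rather than an exception to log and rethrow, is what makes a partition degrade the page instead of breaking it.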

Security parity gaps create vulnerabilities. Teams disable security features like Secure Boot and encryption in test environments for easier automation, and these relaxed configurations leak into production. Maintain strict security parity from day one. Also watch for cost surprises—workloads that look cheap at 1 million requests can explode at 50 million when CPU time usage patterns change the pricing equation.

Key Takeaways

- Edge computing matured in 2026, but platform choice depends on use case. Cloudflare Workers wins for global latency-sensitive workloads with its 330+ city network and sub-5ms cold starts. Vercel Edge dominates React SSR applications with 1.2x to 5x performance advantages from Fluid Compute. Neither platform is universally better—each excels at specific workloads.

- Hybrid architectures are the real winner. 78% of teams combine edge, containers, and serverless for 30-48% cost savings. Deploy authentication and routing to edge, business logic to containers, and background jobs to serverless. Workload placement strategy matters more than platform evangelism.

- V8 isolates deliver 100x faster cold starts, but constraints matter. Sub-5ms initialization beats containers by orders of magnitude, but edge functions lack filesystem access, direct database connections, and support for long-running operations. Understand the trade-offs before migrating workloads.

- Performance improvements are production-proven. Edge computing reduces global latency from 150-300ms to under 50ms, with 60-80% Time to First Byte improvements. This directly impacts Core Web Vitals, SEO rankings, conversion rates, and user experience. The business case is clear.

- Avoid common pitfalls: wrong workloads, connectivity assumptions, and security drift. Keep stateful database operations in containers. Design for edge downtime. Maintain security parity between test and production. Watch CPU time usage patterns that change cost equations at scale.