On April 27, 2026, Microsoft and OpenAI restructured their partnership, ending Microsoft’s exclusive access to OpenAI’s models. The very next day, AWS announced that GPT-5.5, Codex, and other OpenAI products would be available on Amazon Bedrock. This marks the end of nearly seven years of Azure being the only cloud provider legally permitted to host OpenAI’s flagship AI models.

For developers and enterprises, this isn’t just a business deal—it’s a fundamental shift in cloud AI architecture. You’re no longer locked into Azure if you want GPT-5.5 or Codex. Multi-cloud flexibility reduces vendor lock-in, gives enterprises negotiating leverage, and could finally drive the price competition cloud users have been waiting for.

What Changed in the Partnership

Microsoft’s license to OpenAI intellectual property is now non-exclusive, running through 2032. OpenAI can sell its products on any cloud provider—AWS, Google Cloud, whoever else signs up. Microsoft remains the “primary cloud partner” with first access to new models for a four-month exclusivity window, but that’s the extent of Azure’s advantage.
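The four-month window is easy to reason about as date arithmetic. This is a minimal sketch. It assumes the window runs four calendar months from a model’s Azure launch date. That is our assumption, since the contract’s exact counting rules aren’t public. The launch date in the example is hypothetical.

```python
from calendar import monthrange
from datetime import date

# Assumption: exclusivity lasts four calendar months from the Azure launch date.
# The real contractual counting rules have not been published.
EXCLUSIVITY_MONTHS = 4


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping the day to the target month's length."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    day = min(start.day, monthrange(year, month)[1])
    return date(year, month, day)


def earliest_non_azure_date(azure_launch: date) -> date:
    """Earliest date a new model could appear on AWS or Google Cloud."""
    return add_months(azure_launch, EXCLUSIVITY_MONTHS)


if __name__ == "__main__":
    # Hypothetical Azure launch date, for illustration only.
    print(earliest_non_azure_date(date(2026, 10, 31)))  # 2027-02-28
```

The day clamp matters at month ends. A model launched on Azure on October 31 cannot land elsewhere on "February 31", so the sketch rounds down to the last valid day.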

The financial terms shifted significantly. OpenAI will continue paying Microsoft a 20% revenue share through 2030, but with a total cap (amount undisclosed). Microsoft, meanwhile, stops paying any revenue share to OpenAI. The infamous AGI trigger clause is gone too—previously, achieving artificial general intelligence would have ended Microsoft’s license, but now the license runs to a fixed 2032 date regardless of what OpenAI builds.
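With a capped revenue share, each payment is the smaller of two amounts: 20% of that period’s revenue, or whatever room remains under the cap. The real cap is undisclosed, so the `cap` argument here is purely hypothetical, as are the revenue figures in the usage line.

```python
from typing import Iterable, List

REVENUE_SHARE_RATE = 0.20  # the reported 20% share


def capped_payments(revenues: Iterable[float], cap: float) -> List[float]:
    """Per-period revenue-share payments that stop once a cumulative cap is hit.

    `cap` is a hypothetical input: the actual cap has not been disclosed.
    """
    payments: List[float] = []
    paid = 0.0
    for revenue in revenues:
        owed = REVENUE_SHARE_RATE * revenue
        # Pay the full share until the remaining headroom under the cap runs out.
        payment = min(owed, max(cap - paid, 0.0))
        payments.append(payment)
        paid += payment
    return payments
```

For example, `capped_payments([100.0, 100.0, 100.0], cap=50.0)` returns `[20.0, 20.0, 10.0]`. The third period is cut short because only 10 units of headroom remain.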

Behind the scenes, the restructure was driven by AWS’s massive bet on OpenAI: a $38 billion compute commitment over seven years, a $50 billion Amazon investment in the company, and a $100 billion expansion of existing agreements. That kind of deal doesn’t work when Microsoft holds exclusivity. The April restructure cleared the legal roadblock.

AWS Bedrock Gets OpenAI Models

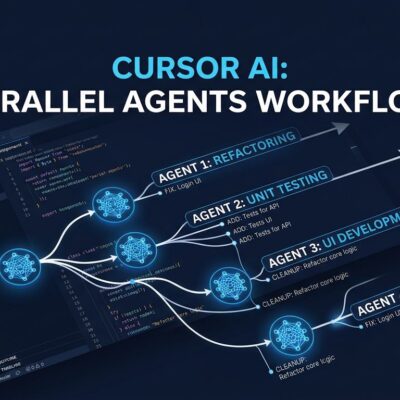

AWS didn’t wait. On April 28, Amazon Web Services launched OpenAI models on Bedrock: GPT-5.5 and GPT-5.4 in limited preview (general availability “within weeks”), Codex for code generation, Managed Agents for multi-step AI workflows, and two open-weight models (gpt-oss-20b and gpt-oss-120b). The infrastructure backing it is formidable—hundreds of thousands of NVIDIA GB200 and GB300 GPUs hosted in Amazon EC2 UltraServers.

For AWS-native shops, this is the most immediate change. You can now access OpenAI’s most advanced models without migrating to Azure or paying Azure’s 15-40% pricing premium over OpenAI direct. Integration goes through the standard Bedrock InvokeModel and Converse APIs, so no new SDK is required. OpenAI models slot into existing workflows built on Lambda, S3, and DynamoDB like any other Bedrock model.
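OpenAI models sit behind the same Converse API as other Bedrock models, so a request is an ordinary `bedrock-runtime` call. The sketch below builds the keyword arguments for boto3’s `converse` method and reads back the reply text. The model ID is an assumption; check the Bedrock model catalog for the exact identifier in your region. The client is passed in so the helpers can be exercised without AWS credentials.

```python
from typing import Any, Dict

# Assumed model ID for illustration; verify the identifier of the OpenAI model
# you have access to in the Bedrock console for your region.
MODEL_ID = "openai.gpt-oss-120b-1:0"


def build_converse_request(prompt: str, max_tokens: int = 512) -> Dict[str, Any]:
    """Build keyword arguments for the bedrock-runtime Converse API."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.2},
    }


def extract_text(response: Dict[str, Any]) -> str:
    """Join the text blocks of a Converse response, skipping non-text blocks."""
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


def ask(client: Any, prompt: str) -> str:
    """Send one prompt through a boto3 bedrock-runtime client."""
    return extract_text(client.converse(**build_converse_request(prompt)))
```

In practice, create the client with `boto3.client("bedrock-runtime", region_name="us-west-2")` and call `ask(client, "Summarize this support ticket")`; the region here is an arbitrary choice. Joining only `text` blocks skips any reasoning blocks a model may return alongside its answer.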

AWS CEO Matt Garman called it “a huge partnership,” noting that customers have been requesting OpenAI models on AWS “from the very early days.” Bedrock’s multi-model catalog (61+ models, not just OpenAI’s) lets enterprises mix providers behind a single API. Azure’s OpenAI-centric positioning never emphasized that kind of flexibility.

Multi-Cloud AI Becomes the Default

This isn’t just Microsoft and AWS trading cloud exclusivity for market share; it changes how cloud AI gets bought and built. Google Cloud is already certifying its infrastructure for OpenAI models, targeting Q4 2026 availability. Forrester analyst Mike Gualtieri summed it up: “This is the moment multi-cloud AI becomes the default architecture, not the exception.”

The procurement conversation has flipped. Before: “Which cloud has the model I need?” Now: “Which cloud has the best price, latency, and governance for the model I want?” Enterprises can negotiate better pricing by playing clouds against each other. Developers can optimize for latency (use different clouds in different regions), cost (compare pricing), or integration needs (use the cloud that matches existing infrastructure).
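The new procurement question can be framed as filter-then-rank. First, discard offers that fail your governance requirements. Then pick the cheapest remaining offer after a latency penalty. Everything here is a hypothetical illustration, not published pricing: the `Offer` fields, the certification labels, the weighting, and any prices you feed in.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class Offer:
    cloud: str
    usd_per_million_tokens: float  # hypothetical blended price
    p50_latency_ms: float          # latency you measured from your own region
    certifications: FrozenSet[str] = frozenset()  # governance controls met


def pick_offer(offers: List[Offer], required: FrozenSet[str],
               latency_weight: float = 0.01) -> Optional[Offer]:
    """Return the lowest-scoring offer that satisfies every required control.

    Score = price + latency_weight * latency, so at the default weight each
    100 ms of latency counts as much as $1 per million tokens.
    """
    eligible = [o for o in offers if required <= o.certifications]
    if not eligible:
        return None  # no cloud meets the governance bar
    return min(eligible,
               key=lambda o: o.usd_per_million_tokens + latency_weight * o.p50_latency_ms)
```

A single `latency_weight` converts milliseconds into price units. Teams with strict latency SLOs might instead filter on a hard latency ceiling before ranking on price.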

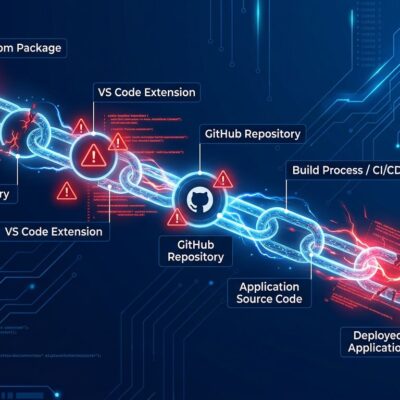

Developer tooling companies are already scrambling to add “multi-cloud LLM routing” as a first-class feature. Abstraction layers like LangChain and LlamaIndex gain importance when a production app might route requests to Azure in North America, AWS in Europe, and Google Cloud in Asia. Platform risk also drops when you’re not betting your business on one vendor’s pricing and availability.
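A routing layer like the one described above can be as small as a per-geography preference list with failover. In this sketch, the provider names, the `ROUTES` table, and the provider callables are all hypothetical stand-ins. In a real system, each callable would wrap that cloud’s SDK or an abstraction layer such as LangChain.

```python
from typing import Callable, Dict, List

Provider = Callable[[str], str]

# Hypothetical preference order per geography, mirroring the example above:
# Azure first in North America, AWS in Europe, Google Cloud in Asia.
ROUTES: Dict[str, List[str]] = {
    "na": ["azure", "aws", "gcp"],
    "eu": ["aws", "azure", "gcp"],
    "asia": ["gcp", "aws", "azure"],
}


def route(geo: str, prompt: str, providers: Dict[str, Provider]) -> str:
    """Send `prompt` to the first configured provider for `geo` that succeeds."""
    errors: List[str] = []
    for name in ROUTES[geo]:
        call = providers.get(name)
        if call is None:  # provider not configured in this deployment
            continue
        try:
            return call(prompt)
        except Exception as exc:  # demo only: treat every failure as retryable
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def simulated_outage(prompt: str) -> str:
    """Stand-in provider that always fails, for demonstrating failover."""
    raise TimeoutError("simulated outage")


if __name__ == "__main__":
    demo = {"aws": simulated_outage, "azure": lambda p: f"azure:{p}"}
    print(route("eu", "hello", demo))  # azure:hello
```

Catching every exception keeps the demo short. Production code would retry only on throttling, timeout, or availability errors, and surface validation errors immediately.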

What Developers Should Do Now

If you’re currently on Azure OpenAI and AWS is your primary cloud infrastructure, evaluate a migration to Bedrock. The pricing advantage alone (potentially 15-25% lower total cost of ownership when consolidating multi-model workloads) justifies the exercise. Be aware that the Azure OpenAI API and the AWS Bedrock API are not compatible, so migration requires code changes, not just configuration tweaks.
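Much of that migration work is reshaping request payloads. The sketch below translates an OpenAI-style chat request, as sent to Azure OpenAI, into keyword arguments for Bedrock’s Converse API. System messages move to the separate `system` field, message content becomes a list of text blocks, and sampling parameters are renamed. It assumes plain-string content and only the `system`, `user`, and `assistant` roles. Tool calls, images, and other parameters are out of scope and would need their own handling. Merging consecutive same-role turns is a precaution, since some Bedrock models reject back-to-back messages with the same role.

```python
from typing import Any, Dict, List

# OpenAI chat-completions parameter names -> Converse inferenceConfig keys.
PARAM_MAP = {"max_tokens": "maxTokens", "temperature": "temperature",
             "top_p": "topP", "stop": "stopSequences"}


def to_converse(model_id: str, messages: List[Dict[str, str]],
                **params: Any) -> Dict[str, Any]:
    """Translate an OpenAI-style chat request into bedrock-runtime converse kwargs."""
    system: List[Dict[str, str]] = []
    turns: List[Dict[str, Any]] = []
    for msg in messages:
        role, text = msg["role"], msg["content"]
        if role == "system":
            system.append({"text": text})  # Converse takes system prompts separately
        elif role in ("user", "assistant"):
            if turns and turns[-1]["role"] == role:
                # Merge consecutive same-role turns into one multi-block message.
                turns[-1]["content"].append({"text": text})
            else:
                turns.append({"role": role, "content": [{"text": text}]})
        else:
            raise ValueError(f"unsupported role for this sketch: {role}")

    request: Dict[str, Any] = {"modelId": model_id, "messages": turns}
    if system:
        request["system"] = system
    # Parameters without a mapping (e.g. seed) are silently dropped here.
    config = {PARAM_MAP[k]: v for k, v in params.items() if k in PARAM_MAP}
    if isinstance(config.get("stopSequences"), str):
        config["stopSequences"] = [config["stopSequences"]]  # Converse wants a list
    if config:
        request["inferenceConfig"] = config
    return request
```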

Starting a new project? Consider multi-cloud from day one, but don’t ignore the trade-offs. These include API incompatibility across clouds, the operational complexity of managing multiple providers, and version fragmentation: Azure gets new models first, while AWS and Google Cloud can lag by up to four months. Multi-cloud sounds great in theory. In practice, it adds overhead that small teams often underestimate.

Watch for pricing competition. None has materialized yet—Azure still charges its 15-40% premium, and AWS Bedrock pricing hasn’t undercut it significantly. But competitive pressure is real now. If one cloud drops pricing to gain market share, the others will follow. OpenAI’s path to independence—with a Q4 2026 or mid-2027 IPO anticipated—means the company is incentivized to keep clouds competing rather than letting one dominate.

The cloud AI wars just got more interesting. Developers win with choice, but only if they navigate the complexity that comes with it.