Anthropic’s newly released 2026 Agentic Coding Trends Report reveals a striking paradox in modern software development: developers now use AI assistance in roughly 60% of their work, yet they’re willing to fully delegate only 0-20% of tasks to autonomous agents. This gap isn’t a failure of AI capability. It reflects how effective human-AI collaboration works: developers stay actively involved through setup, prompting, supervision, and validation. The implication is clear: productivity gains in 2026 come from making human oversight more efficient, not from eliminating it.

The Developer Role Has Already Transformed

More than 75% of developers now spend their time architecting, governing, and orchestrating rather than building applications directly. The shift happened fast: in early 2026, the average developer spends only 20% of the day writing code from scratch. The remaining 80% goes to reviewing AI-generated output, crafting prompts, making architectural decisions, and orchestrating workflows.

New roles have emerged to formalize this transformation. AI Agent Orchestration Specialists define each agent’s role, rules, tools, and collaboration patterns. Cognitive Architects design “blueprints of thought” that guide AI agents through complex system integrations. The common thread across these roles: developers are no longer implementers but strategic coordinators who own outcomes while delegating execution.

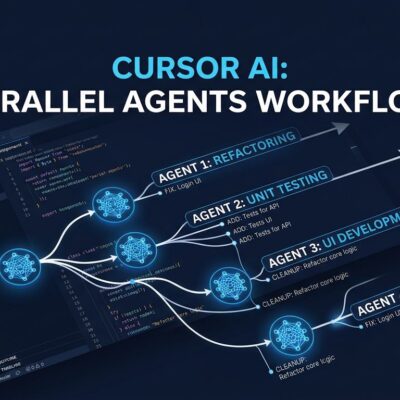

Multi-Agent Teams Replaced Solo Assistants

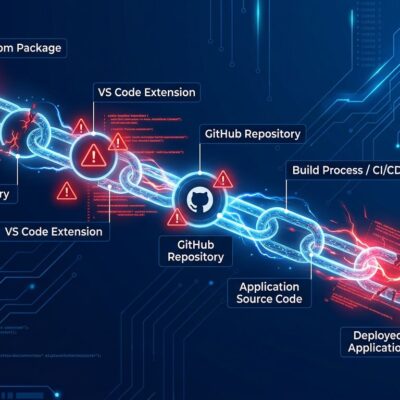

The era of the single AI coding assistant is over. Anthropic’s report identifies multi-agent coordination as a defining 2026 trend: hierarchical architectures in which an orchestrator coordinates specialized agents working in parallel, each in its own context window.

A high-profile demonstration arrived in January 2026, when Cursor claimed to have built a browser in a week: over 1 million lines of code across 1,000 files, produced with GPT-5.2 under hierarchical agent orchestration. The architecture used three roles. Planners continuously explored the codebase and created tasks; Workers executed assigned tasks independently and pushed their changes when done; and Judge agents decided at the end of each cycle whether to continue. Beyond showcase projects like this one, adoption has gone mainstream: 57% of companies now run AI agents in production.
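Cursor has not published its orchestration code, so the following is only a minimal Python sketch of the planner/worker/judge cycle described above, under loud assumptions: each "agent" is a plain hypothetical function rather than a model call, the "codebase" is just a set of completed task names, and workers run in parallel on a thread pool. It illustrates the control flow, not Cursor’s implementation.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Codebase:
    # Hypothetical stand-in for a repository: the set of finished task names.
    done: set[str] = field(default_factory=set)


def planner(codebase: Codebase, backlog: list[str], batch: int) -> list[str]:
    """Hypothetical Planner: inspect the codebase, emit up to `batch` open tasks."""
    return [t for t in backlog if t not in codebase.done][:batch]


def worker(task: str) -> str:
    """Hypothetical Worker: execute one task independently.
    A real worker would call a model here; this one just returns the task name
    as the 'change' it pushes."""
    return task


def judge(codebase: Codebase, backlog: list[str]) -> bool:
    """Hypothetical Judge: decide whether another cycle is needed."""
    return any(t not in codebase.done for t in backlog)


def run(backlog: list[str], batch: int = 2, max_cycles: int = 10) -> tuple[Codebase, int]:
    """Run plan -> parallel work -> judge cycles until the judge says stop."""
    if batch < 1:
        raise ValueError("batch must be at least 1")
    codebase = Codebase()
    cycles = 0
    # The judge is consulted before the first cycle and after every cycle.
    while cycles < max_cycles and judge(codebase, backlog):
        tasks = planner(codebase, backlog, batch)
        with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
            for change in pool.map(worker, tasks):
                codebase.done.add(change)  # workers "push" their changes
        cycles += 1
    return codebase, cycles
```

For example, `run(["parser", "layout", "renderer", "network", "js-engine"], batch=2)` finishes all five tasks in three cycles. Keeping the judge separate from the planner mirrors the description above: one role decides what to do next, another decides whether to keep going.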

Enterprise Adoption Crossed the Chasm in Q1 2026

The data confirms what engineering teams already feel: agentic AI went from experiment to standard practice in months. In Q1 2026, 80% of enterprise applications shipped or updated embedded at least one AI agent, up from just 33% in 2024. By January 2026, 74% of developers globally had adopted specialized AI tools.

Elite teams show what mature adoption looks like: 80%+ weekly active usage, 60-75% of code AI-assisted, and PR cycle times under 8 hours, all while keeping code turnover below 1.3x the human-only baseline. The ROI is real: after integrating GitHub Copilot, Duolingo saw a 25% speed increase for engineers new to a given repository, a 10% boost for experienced staff, and a 67% reduction in median code review time.

However, there’s a cost. Teams are spending $200-$2,000+ per engineer per month in token costs on top of seat licenses. The “which tool won’t torch my credits?” conversation is now standard in engineering planning meetings.

The Trust Gap Explains Why Delegation Stays Low

Here’s the uncomfortable truth behind the adoption statistics: 46% of developers actively distrust AI tool accuracy, and only 33% trust the output (with just 3% “highly trusting”). Furthermore, positive sentiment for AI tools declined from 70%+ in 2023-2024 to 60% in 2025.

The top frustration, cited by 66% of developers, is dealing with “AI solutions that are almost right, but not quite.” In second place, at 45%: “debugging AI-generated code is more time-consuming.” Google’s 2025 DORA Report quantified the quality tension: a 90% increase in AI adoption correlated with a 9% rise in bug rates, a 91% increase in code review time, and a 154% increase in PR size.

This explains the delegation gap. Developers use AI for assistance—research, boilerplate, exploration—but reserve final judgment for themselves. The 60%/20% split isn’t a problem to solve; it’s the equilibrium where AI augments human expertise without replacing the judgment that separates working code from production-ready systems.

Long-Running Agents Expand Time Horizons

The third major trend Anthropic identifies is long-running agents—AI systems that operate over hours, days, or weeks rather than minutes, pausing only for strategic human checkpoints. Notably, Google’s Gemini Enterprise Agent Platform already supports agents that “run autonomously for days at a time,” handling workflows like sales prospecting sequences that naturally take a week to complete. Task horizons are expanding from synchronous request-response cycles to asynchronous background processes that maintain persistent state across context windows.
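To make "persistent state across context windows" and "strategic human checkpoints" concrete, here is a minimal Python sketch under stated assumptions. It does not reflect any vendor’s agent platform: the function `run_long_task`, the JSON state file, and the `approve` callback are all hypothetical. The agent saves its progress after every step, so a fresh session (a new context window) can resume where the last one stopped, and it pauses at designated checkpoint steps until a human approves.

```python
import json
from pathlib import Path
from typing import Callable


def run_long_task(
    state_file: Path,
    steps: list[str],
    checkpoints: set[str],
    approve: Callable[[str, dict], bool],
) -> dict:
    """Hypothetical long-running agent loop: resume from disk, run the
    remaining steps, and pause at checkpoint steps without human approval."""
    if state_file.exists():
        state = json.loads(state_file.read_text())  # resume a previous session
    else:
        state = {"completed": [], "paused_at": None}

    for step in steps:
        if step in state["completed"]:
            continue  # finished in an earlier session / context window
        if step in checkpoints and not approve(step, state):
            state["paused_at"] = step  # stop and wait for a human decision
            state_file.write_text(json.dumps(state))
            return state
        # A real agent would perform the step's work here.
        state["completed"].append(step)
        state["paused_at"] = None
        state_file.write_text(json.dumps(state))  # persist after every step
    return state
```

Calling it once with an `approve` that returns `False` stops just before the checkpoint step; calling it again later with approval granted skips the already-completed steps and finishes. Persisting after every step, rather than only at the end, is what lets a multi-day task survive restarts.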

The Future Isn’t Replacement—It’s Orchestration

The 60%/20% delegation gap revealed in Anthropic’s report won’t close until the industry accepts a fundamental truth: AI needs human judgment, not just human prompts. The role transformation is already well advanced: developers increasingly act as strategic orchestrators who design systems, coordinate agents, and own quality outcomes. The companies and engineers who adapt fastest, investing in architecture skills, agent coordination, and quality evaluation over manual coding, will define what elite engineering looks like for the rest of the decade.