Google’s TurboQuant cuts the KV cache memory of LLM inference by 6x with near-zero accuracy loss, but developers can’t use it yet. Announced in March 2026 and presented at ICLR on April 23-25, the KV cache compression algorithm promises to run massive context windows on modest hardware using 3.5-bit quantization. The catch: Google’s official implementation isn’t expected until Q2 2026, and integration into major inference frameworks like vLLM and llama.cpp likely won’t arrive until Q3-Q4 2026 at the earliest.

Why 6x Memory Reduction Actually Matters

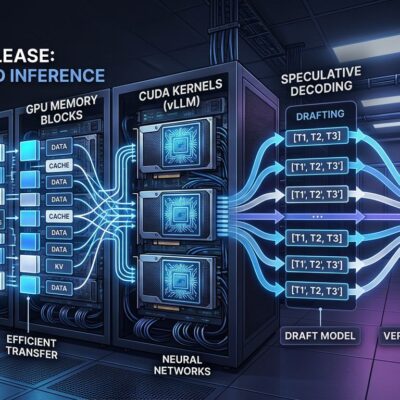

The KV cache is the primary memory bottleneck in LLM inference. A single Llama 3 70B request at 128K context consumes 42 GB of GPU memory just for the KV cache. On an 80 GB GPU, that is more than half the card for one request, leaving little room for model weights and none for concurrent users. TurboQuant’s 6x reduction would shrink that 42 GB to roughly 7 GB.
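The 42 GB figure follows directly from the model’s attention shape. Below is a minimal sketch of the arithmetic. It assumes Llama 3 70B’s attention shape (80 layers, 8 grouped-query KV heads, head dimension 128) and ignores per-vector metadata such as stored norms or scales. The 40 GB budget is a hypothetical number, not a measurement, and the function names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttentionShape:
    n_layers: int
    n_kv_heads: int
    head_dim: int


# Assumed Llama 3 70B attention shape: 80 layers, 8 KV heads (grouped-query attention), head_dim 128.
LLAMA3_70B = AttentionShape(n_layers=80, n_kv_heads=8, head_dim=128)


def kv_cache_bytes(shape: AttentionShape, seq_len: int, bits_per_value: float) -> float:
    """Bytes for keys and values across all layers and KV heads for one request.

    Ignores per-vector metadata (norms, scales) that real quantizers also store.
    """
    n_values = 2 * shape.n_layers * shape.n_kv_heads * shape.head_dim * seq_len  # 2 = keys + values
    return n_values * bits_per_value / 8


def requests_that_fit(budget_gb: float, shape: AttentionShape, seq_len: int, bits_per_value: float) -> int:
    """How many full-length requests' KV caches fit in a memory budget (decimal GB)."""
    return int(budget_gb * 1e9 // kv_cache_bytes(shape, seq_len, bits_per_value))


CONTEXT = 128 * 1024  # 128K tokens
BUDGET_GB = 40.0      # hypothetical memory left for the KV cache after weights and activations

for bits in (16, 3.5, 2.5):
    size_gb = kv_cache_bytes(LLAMA3_70B, CONTEXT, bits) / 1e9
    fit = requests_that_fit(BUDGET_GB, LLAMA3_70B, CONTEXT, bits)
    print(f"{bits:>4} bits/value: {size_gb:5.1f} GB per request, {fit} request(s) in {BUDGET_GB:.0f} GB")
```

This yields about 42.9 GB per request at 16 bits, 9.4 GB at 3.5 bits, and 6.7 GB at 2.5 bits. Pure bit-width arithmetic therefore gives roughly 4.6x at 3.5 bits and 6.4x at 2.5 bits. The exact ratio a real implementation achieves depends on what it stores alongside the quantized values.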

This isn’t just an academic optimization. It’s the difference between running long-context LLMs only on H100s and running them on accessible consumer GPUs. It’s also the difference between serving one user and serving six on the same hardware. LLM inference costs have already dropped 1,000x in the last three years, and TurboQuant adds another multiplier to infrastructure economics. When inference costs directly affect product margins and iteration speed, a 6x memory reduction translates into real developer leverage.

How Rotation Quantization Preserves What Matters

TurboQuant’s core insight: attention mechanisms rely on angular relationships between vectors, not exact numerical values. The algorithm uses rotation-based vector quantization, multiplying input vectors by a random orthogonal matrix to simplify their geometric structure. After rotation, each coordinate of a unit-norm vector follows a scaled Beta distribution that closely approximates a Gaussian N(0, 1/d) when the dimension d is large. Because that distribution is known in advance rather than learned from data, optimal Lloyd-Max scalar quantizers can compress each coordinate independently.
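Here is a minimal NumPy sketch of the rotate-then-scalar-quantize idea. It is not Google’s implementation, and several choices are assumptions:

- The rotation comes from a QR decomposition of a Gaussian matrix.
- The codebook is fitted with Lloyd’s algorithm on samples from the Gaussian approximation N(0, 1/d) rather than the exact Beta distribution.
- The vector norm is stored as a separate float.

The function names are illustrative, and the outlier channels in the usage lines are hypothetical.

```python
import numpy as np


def random_rotation(d: int, seed: int = 0) -> np.ndarray:
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix.

    It depends only on (d, seed), so no calibration data is involved.
    """
    rng = np.random.default_rng(seed)
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    return q * np.sign(np.diag(r))  # column sign fix makes the rotation uniformly (Haar) distributed


def lloyd_max_levels(d: int, bits: int, n: int = 100_000, iters: int = 50, seed: int = 1) -> np.ndarray:
    """Approximate Lloyd-Max levels for N(0, 1/d) by running Lloyd's algorithm on samples."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(n) / np.sqrt(d)
    k = 2 ** bits
    levels = np.quantile(x, (np.arange(k) + 0.5) / k)  # initialize at the (j + 0.5)/k quantiles
    for _ in range(iters):
        edges = (levels[:-1] + levels[1:]) / 2  # nearest-level decision boundaries
        cell = np.searchsorted(edges, x)
        levels = np.array([x[cell == j].mean() for j in range(k)])  # move each level to its cell centroid
    return levels


def quantize(x: np.ndarray, rot: np.ndarray, levels: np.ndarray):
    norm = float(np.linalg.norm(x))  # stored as one float (assumption)
    y = rot @ (x / norm)             # rotated unit vector: coordinates ~ N(0, 1/d)
    codes = np.searchsorted((levels[:-1] + levels[1:]) / 2, y).astype(np.uint8)
    return norm, codes


def dequantize(norm: float, codes: np.ndarray, rot: np.ndarray, levels: np.ndarray) -> np.ndarray:
    return norm * (rot.T @ levels[codes])  # look up levels, undo the rotation, rescale


d, bits = 128, 3
rot = random_rotation(d)
levels = lloyd_max_levels(d, bits)

rng = np.random.default_rng(42)
key = rng.standard_normal(d)
key[:4] *= 20.0  # hypothetical outlier channels; the rotation spreads their energy across all coordinates

norm, codes = quantize(key, rot, levels)
key_hat = dequantize(norm, codes, rot, levels)
cos = float(key @ key_hat / (np.linalg.norm(key) * np.linalg.norm(key_hat)))
print(f"{bits} bits per coordinate: cosine similarity {cos:.3f}")
```

The rotation and codebook depend only on d, the bit width, and a seed. Nothing is calibrated on model data, which is what makes this style of quantizer data-oblivious.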

PolarQuant, the key compression technique, decomposes each vector into a magnitude scalar and a direction vector on the unit hypersphere. A codebook of evenly spaced points preserves the angular relationships that attention depends on while achieving 3.5 bits per value. The two-stage architecture applies an optimal MSE quantizer followed by a 1-bit residual quantizer. The second stage matters because MSE-optimal quantizers bias inner-product estimates, and attention scores are inner products. The result is near-zero accuracy loss without any retraining.
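The sketch below illustrates the magnitude/direction split and shows why a 1-bit residual stage helps. It rests on several assumptions:

- Keys are already rotated, so direction coordinates look like N(0, 1/d).
- A 2-bit uniform quantizer with evenly spaced levels stands in for the MSE-optimal first stage.
- The 1-bit stage is implemented as the signs of a Gaussian random projection of the residual (a Johnson-Lindenstrauss-style sketch). This is our assumption about its form, not a confirmed detail.
- `M = 128` sign bits per vector is an arbitrary choice.

The sketch measures the case where shrinkage bias is easiest to see: a query equal to the key itself.

```python
import numpy as np

D = 128                  # head dimension
BITS = 2                 # stage-1 bits per coordinate (assumed)
M = 128                  # number of 1-bit residual signs per vector (assumed)
CLIP = 2.0 / np.sqrt(D)  # clip range: about 2 standard deviations of N(0, 1/D)


def stage1(u: np.ndarray) -> np.ndarray:
    """Stand-in for the MSE stage: uniform midpoint quantizer with 2**BITS evenly spaced levels."""
    k = 2 ** BITS
    step = 2 * CLIP / k
    idx = np.clip(np.floor((u + CLIP) / step), 0, k - 1)
    return -CLIP + (idx + 0.5) * step


def encode(x: np.ndarray, S: np.ndarray):
    norm = np.linalg.norm(x)  # magnitude, kept as one float
    u = x / norm              # direction on the unit hypersphere
    u_hat = stage1(u)         # kept as floats for brevity; a real encoder stores the codes
    r = u - u_hat             # residual left by stage 1
    return norm, u_hat, np.linalg.norm(r), np.sign(S @ r)  # each sign costs 1 bit


def estimate_dot(q: np.ndarray, norm, u_hat, r_norm, signs, S) -> float:
    """Estimate <q, x> as the stage-1 term plus a sign-sketch estimate of <q, r>.

    For Gaussian rows s, E[(s.q) * sign(s.r)] = sqrt(2/pi) * <q, r> / ||r||, so the residual term is unbiased.
    """
    residual = np.sqrt(np.pi / 2) * r_norm / S.shape[0] * ((S @ q) @ signs)
    return float(norm * (q @ u_hat + residual))


rng = np.random.default_rng(0)
S = rng.standard_normal((M, D))  # shared, data-oblivious projection
keys = rng.standard_normal((200, D)) * rng.uniform(0.5, 4.0, size=(200, 1))

err_stage1, err_two_stage = [], []
for x in keys:
    norm, u_hat, r_norm, signs = encode(x, S)
    true = float(x @ x)  # query == key: a large inner product, where shrinkage bias shows most
    err_stage1.append((norm * float(x @ u_hat) - true) / true)
    err_two_stage.append((estimate_dot(x, norm, u_hat, r_norm, signs, S) - true) / true)

print(f"stage 1 only:     mean relative error {np.mean(err_stage1):+.3f}")
print(f"plus 1-bit stage: mean relative error {np.mean(err_two_stage):+.3f}")
```

The first stage alone systematically underestimates large inner products, because quantization discards part of the vector’s energy. The sign sketch’s estimate of the residual term is unbiased over the random projection, so the two-stage error averages out near zero, at the cost of some extra variance per key.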

This is clever engineering, not magic. TurboQuant compresses what doesn’t matter and preserves what does.

Benchmarks Prove It Works

At 3.5 bits per value, TurboQuant scored 50.06 on LongBench, identical to the 16-bit baseline. Even at an aggressive 2.5 bits, the score only dropped to 49.44. On Needle-in-a-Haystack tests up to 104K tokens, TurboQuant achieved 0.997 at 4x compression, outperforming KIVI (0.981) and SnapKV (0.858). On NVIDIA H100 GPUs, 4-bit TurboQuant delivers up to an 8x speedup in attention computation compared with 32-bit unquantized keys, which suggests roughly 4x over the FP16 keys used in practice.

The results hold across Llama-3.1-8B-Instruct, Gemma, and Mistral models on five standard benchmarks: LongBench, Needle-in-a-Haystack, ZeroSCROLLS, RULER, and L-Eval. The algorithm is training-free and data-oblivious, making it a drop-in optimization once framework support ships.

The Gap Between Announcement and Production

Here’s where hype meets reality. As of May 1, 2026, Google’s official TurboQuant implementation hasn’t shipped. Community implementations exist—TheTom/turboquant_plus on GitHub has 6.4k stars, and 0xSero/turboquant provides Triton kernels with vLLM integration—but these are non-mainline builds not ready for production deployment.

The llama.cpp feature request (#20977) has 277+ upvotes and remains open. vLLM has a similar feature request without official integration. TensorRT-LLM hasn’t announced support. Google’s official implementation is expected in Q2 2026, with major framework integration likely arriving Q3-Q4 2026 at the earliest.

The timeline matters: announced March 2026, presented at ICLR April 23-25, official release June-July, framework adoption late 2026 or early 2027. Developers can experiment with community implementations today, but production deployment remains months away.

Efficiency as Competitive Advantage

TurboQuant fits a broader industry shift from scaling to efficiency. While previous AI progress followed a “bigger models, more compute, longer context” playbook, 2026 marks a pivot toward “same performance, less resources.” Economic drivers are clear: infrastructure costs keep rising despite H100 prices dropping 75%, and inference optimization has become a competitive necessity rather than an optional enhancement.

TurboQuant joins other efficiency innovations: DeepSeek V4’s 1M-token context at 90% less memory, traditional model quantization (int8/int4), semantic caching, and hybrid memory architectures. Gartner predicts that by 2030, inference on a 1-trillion-parameter LLM will cost over 90% less than in 2025. TurboQuant accelerates that trajectory by addressing the specific bottleneck of KV cache memory during inference.

The shift from “scale at any cost” to “efficiency as competitive advantage” isn’t ideological—it’s economic reality meeting technical maturity.

What Developers Should Do Now

Track Google’s Q2 2026 official release and monitor framework integration announcements from vLLM, llama.cpp, and TensorRT-LLM. Test community implementations for evaluation purposes, but don’t deploy to production yet. Plan infrastructure roadmaps with TurboQuant integration budgeted for late 2026 or early 2027. Understanding the technical foundations now—rotation quantization, KV cache bottlenecks, angular relationship preservation—prepares you for adoption when frameworks ship official support.
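When evaluating a community build, one quick check works regardless of the codebase. Pass K and V through the candidate’s quantize-then-dequantize path and compare attention outputs against a full-precision reference. The minimal NumPy sketch below assumes random Gaussian queries, keys, and values; real activations with outlier channels are harsher. It uses a hypothetical per-vector absmax quantizer as a placeholder, so swap in the round-trip function of whichever implementation you are testing. All names are illustrative.

```python
from typing import Callable

import numpy as np

Roundtrip = Callable[[np.ndarray], np.ndarray]


def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max(axis=-1, keepdims=True)  # subtract the row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    return softmax(q @ k.T / np.sqrt(q.shape[-1])) @ v


def attention_error(roundtrip: Roundtrip, n_tokens: int = 1024, d: int = 128, seed: int = 0) -> float:
    """Relative L2 error of the attention output when K and V go through `roundtrip`."""
    rng = np.random.default_rng(seed)
    q = rng.standard_normal((8, d))
    k = rng.standard_normal((n_tokens, d))
    v = rng.standard_normal((n_tokens, d))
    ref = attention(q, k, v)
    out = attention(q, roundtrip(k), roundtrip(v))
    return float(np.linalg.norm(out - ref) / np.linalg.norm(ref))


def absmax_roundtrip(bits: int) -> Roundtrip:
    """Hypothetical placeholder: symmetric per-vector absmax quantizer (not TurboQuant)."""
    def rt(x: np.ndarray) -> np.ndarray:
        qmax = 2 ** (bits - 1) - 1
        scale = np.abs(x).max(axis=-1, keepdims=True) / qmax
        return np.round(x / scale) * scale
    return rt


errors = {bits: attention_error(absmax_roundtrip(bits)) for bits in (8, 4, 2)}
for bits, err in errors.items():
    print(f"{bits}-bit round trip: relative attention error {err:.4f}")
```

Attention-output error is only a proxy. It complements, rather than replaces, the end-task benchmarks cited above.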

6x compression sounds great, but the real question is when you can actually use it. For TurboQuant, that answer is: not quite yet, but soon enough to matter.