Qualcomm just landed its first major data center AI chip customer. Saudi Arabia’s Humain will deploy 200 megawatts of Qualcomm AI200 chips in 2026, directly challenging Nvidia’s 80 percent market dominance. The contrarian bet: using smartphone memory (LPDDR) instead of Nvidia’s expensive HBM to undercut on cost. Given Qualcomm’s track record of data center failures, this is either a breakthrough or another billion-dollar mistake.

The LPDDR Gamble: Smartphone Tech vs. Data Center GPUs

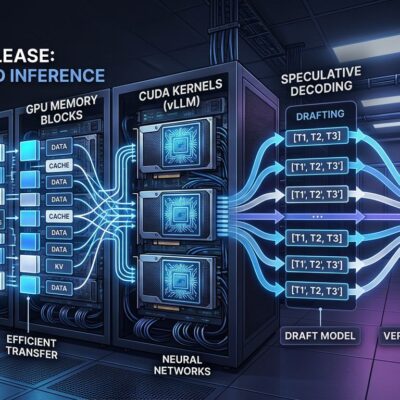

Qualcomm’s AI200 chips pack 768GB of LPDDR5X memory per card. That is the same memory technology that powers smartphones, not the high-bandwidth HBM3 and HBM3e that Nvidia’s H100 and H200 rely on. The cost gap is staggering. LPDDR runs $2 to $4 per gigabyte, which puts Qualcomm’s memory cost at roughly $1,500 to $3,000 per card. The HBM on a single Nvidia card costs an estimated $7,600 to $11,500.
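The per-card memory figure follows from the capacity and the per-gigabyte price. A minimal sketch of that arithmetic, using only the numbers quoted above (the constant and function names are illustrative, and the prices are rough estimates rather than vendor quotes):

```python
# Figures quoted in the article; rough estimates, not vendor pricing.
CARD_CAPACITY_GB = 768                    # LPDDR5X per AI200 card
LPDDR_PRICE_PER_GB = (2.0, 4.0)           # USD per GB, low and high
HBM_COST_PER_CARD = (7_600.0, 11_500.0)   # USD per Nvidia card, low and high


def lpddr_cost_per_card(capacity_gb, price_range):
    """Return the (low, high) memory cost in USD for one card."""
    low, high = price_range
    return capacity_gb * low, capacity_gb * high


lpddr_low, lpddr_high = lpddr_cost_per_card(CARD_CAPACITY_GB, LPDDR_PRICE_PER_GB)
print(f"LPDDR5X per card: ${lpddr_low:,.0f} to ${lpddr_high:,.0f}")
print(f"HBM per card:     ${HBM_COST_PER_CARD[0]:,.0f} to ${HBM_COST_PER_CARD[1]:,.0f}")
```

The exact product is $1,536 to $3,072, which the article rounds to $1,500 to $3,000.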

The trade-off is bandwidth. LPDDR5X delivers 68 GB/s. Nvidia’s HBM3e exceeds 1 TB/s, roughly a fifteen-fold gap. Qualcomm’s thesis: AI inference doesn’t need training-level bandwidth. Models load once, then run. For inference, which is projected to account for 75 percent of AI compute by 2030, high capacity matters more than peak throughput.
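To make the bandwidth gap concrete, the sketch below computes the ratio from the two quoted figures and the time each memory type would need to read a model's weights once. The 70 GB model size is a hypothetical chosen for illustration, not a figure from Qualcomm or Nvidia. The two bandwidth numbers may also describe different scopes (a single memory device versus a full stack or card), so treat the output as a rough comparison only.

```python
LPDDR5X_GB_PER_S = 68.0    # figure quoted above
HBM3E_GB_PER_S = 1000.0    # lower bound: "exceeds 1 TB/s"
MODEL_WEIGHTS_GB = 70.0    # hypothetical model size, for illustration only


def bandwidth_ratio(fast_gb_per_s, slow_gb_per_s):
    """How many times more data the faster memory moves per second."""
    return fast_gb_per_s / slow_gb_per_s


def seconds_to_stream(size_gb, bandwidth_gb_per_s):
    """Time to read size_gb of data once at the given bandwidth."""
    return size_gb / bandwidth_gb_per_s


ratio = bandwidth_ratio(HBM3E_GB_PER_S, LPDDR5X_GB_PER_S)
lpddr_pass = seconds_to_stream(MODEL_WEIGHTS_GB, LPDDR5X_GB_PER_S)
hbm_pass = seconds_to_stream(MODEL_WEIGHTS_GB, HBM3E_GB_PER_S)

print(f"Bandwidth gap: {ratio:.1f}x")
print(f"One pass over weights on LPDDR5X: {lpddr_pass:.2f} s")
print(f"One pass over weights on HBM3e:   {hbm_pass:.2f} s")
```

Whether that gap matters in practice depends on how often a workload has to re-read its weights, which is exactly what the Humain deployment would test.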

The thermal risk is real. Qualcomm announced the AI200 and AI250 specifications in October 2025, with 768GB of LPDDR5X and direct liquid cooling as key features. But LPDDR has never been tested at rack scale with 160kW of power consumption. Whether direct liquid cooling suffices or racks overheat under load is unproven. If thermal management fails, the cost savings evaporate.
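For a sense of scale, the sketch below estimates how many 160kW racks the 200MW Humain deployment could power. It is an upper bound under a loud assumption: the `pue` parameter (power usage effectiveness, the ratio of total facility power to IT power) is hypothetical, and neither Qualcomm nor Humain has published a value or a rack count.

```python
import math

DEPLOYMENT_MW = 200.0   # Humain deployment size quoted in the article
RACK_KW = 160.0         # per-rack power consumption quoted above


def racks_supported(total_mw, rack_kw, pue=1.0):
    """Estimate the number of full-power racks a site can feed.

    pue is a hypothetical overhead factor; 1.0 means every watt
    reaches the racks, which no real facility achieves.
    """
    it_power_kw = total_mw * 1000.0 / pue
    return math.floor(it_power_kw / rack_kw)


upper_bound = racks_supported(DEPLOYMENT_MW, RACK_KW)
with_overhead = racks_supported(DEPLOYMENT_MW, RACK_KW, pue=1.25)

print(f"Upper bound (no overhead): {upper_bound} racks")
print(f"With an assumed PUE of 1.25: {with_overhead} racks")
```

Either way, the cooling design has to hold across on the order of a thousand racks, not a single test unit.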

Qualcomm’s Data Center Graveyard

This is Qualcomm’s third data center attempt. The previous two failed spectacularly. Centriq server CPUs launched in late 2017, with Microsoft testing them. Five months later, cancellation rumors circulated. By 2019, the project was dead. Qualcomm laid off 950 employees.

AI100 chips launched in 2019. Six years later, they finally landed their first meaningful deployment: 1,024 systems to Humain in 2025. That’s not a product launch. That’s a zombie project.

In 2021, Qualcomm acquired Nuvia for $1.4 billion. Arm sued in 2022. Apple sued a Nuvia founder. Data center revenue is now projected for fiscal 2028, seven years after the acquisition. The pattern is consistent: announcements, legal battles, slipped timelines, failed products.

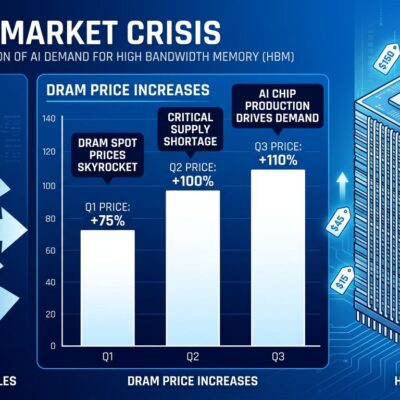

Why should this time differ? Market conditions favor challengers. Nvidia controls over 80 percent of data center AI accelerators. H100 80GB GPUs cost $31,000. HBM shortages persist through 2027. Hyperscalers spend over $200 billion annually on AI infrastructure but can’t secure enough Nvidia chips.

That desperation doesn’t guarantee Qualcomm wins. AMD’s MI325X delivers competitive hardware, but its software stack lags years behind Nvidia’s CUDA ecosystem. Intel’s Gaudi 3 trains models 1.5x faster than H100 but remains a rebuilding story. Google TPUs achieve 4x better cost-per-token for inference (Anthropic committed to up to 1 million, and Midjourney cut costs 65 percent by switching from GPUs), but TPUs are available only through Google. Qualcomm must prove LPDDR works at scale, build a software stack, and land customers beyond one state-backed buyer.

Saudi Arabia’s Vision 2030 Gamble

Humain launched in May 2025 under Saudi Arabia’s Public Investment Fund. The goal: build a full AI stack from data centers to cloud to models. Target: 100 gigawatts of AI compute by 2026. Saudi data center investment has expanded sixfold since Vision 2030, exceeding $4.26 billion.

Humain isn’t all-in on Qualcomm. They partnered with Nvidia for 500MW over five years and with AWS for an AI Zone. The 200MW Qualcomm deal hedges bets. If Qualcomm delivers cost savings, Saudi gains an edge. If it fails, they fall back to proven Nvidia infrastructure.

Saudi Arabia declared 2026 the Year of AI. Hosting a Qualcomm Design Center creates jobs and tech transfer. Betting on the challenger differentiates Saudi’s AI strategy. Whether that translates to capability or remains prestige-driven spending is unclear. State-backed tech initiatives can sustain doomed projects past rational cutoffs. Humain’s 200MW deployment signals either confidence or indifference to downside risk.

The Verdict Comes in 2026-2027

AI200 chips ship in 2026. The 200MW Saudi deployment is the validation test. If LPDDR works at rack scale, if thermal management holds, if performance suffices for inference, and if cost undercuts Nvidia, Qualcomm opens a new market. Inference shifts to LPDDR architectures. Nvidia’s pricing power weakens.

If thermal issues emerge, if performance disappoints, if software gaps make integration painful, or if no customers materialize beyond Humain, this joins Centriq and AI100 in the graveyard. Qualcomm stays dependent on mobile revenue.

For developers, cheaper AI inference matters. Cost per token determines which models scale. If Qualcomm delivers “good enough” performance at a fraction of Nvidia’s cost, the economics shift. If it fails, developers can wait for hyperscaler custom ASICs (growing 44.6 percent in 2026 versus 16.1 percent for GPUs) or accept Nvidia’s pricing.

Watch for real-world benchmarks, thermal stability, software maturity, and whether anyone beyond Saudi Arabia buys in. Bloomberg reported the announcement on April 30, making this one of the freshest challenges to Nvidia’s data center dominance. History says bet against Qualcomm in data centers. But Nvidia’s stranglehold, cost pressure, and HBM shortages mean this time might be different. Might.