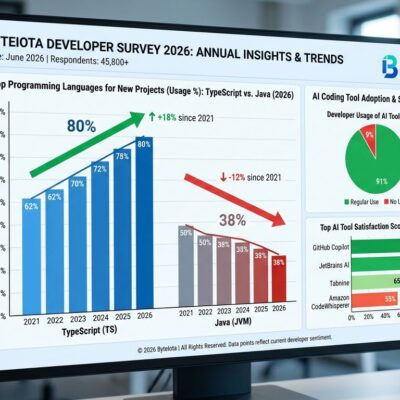

The 2025 DORA report made a stunning admission. It replaced the low/medium/high/elite performer tiers with seven team archetypes, added a fifth metric (rework rate), and introduced an eight-measure framework. Translation: even DORA’s own creators admit four metrics aren’t enough anymore. AI tools now write 41% of all code and boost developer throughput 30-40%, yet delivery stability dropped 7.2% according to Google’s 2024 DORA data. Code churn doubled from 3.1% to 5.7%, and 43% of AI-generated code requires manual debugging in production. DORA metrics capture deployment pipelines but ignore the 47% of developer time spent on coordination. Elite teams layer DORA with three additional dimensions (developer experience, AI attribution, and business outcomes) and achieve 40-50% productivity gains without burning out their people.

DORA’s Three Critical Blindspots

Traditional DORA metrics—deployment frequency, lead time, change failure rate, and recovery time—measure software delivery pipelines effectively. But they miss massive chunks of what actually determines team performance.
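For reference, the four traditional metrics can be computed from a plain list of deployment records. The following is a minimal sketch, not an official DORA implementation: the `Deployment` record, its field names, and the sample data are all assumptions made for illustration, and real pipelines usually pull these timestamps from CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape; field names are illustrative only.
    committed_at: datetime                   # first commit of the change
    deployed_at: datetime                    # when the change reached production
    failed: bool = False                     # did it cause a production failure?
    restored_at: Optional[datetime] = None   # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], window_days: int) -> dict[str, float]:
    """Summarize the four traditional DORA metrics over a reporting window."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    recoveries = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        # Reported as 0.0 when no failed deployment has a recorded recovery.
        "mean_recovery_hours": sum(recoveries) / len(recoveries) if recoveries else 0.0,
    }


# Made-up sample: four deployments over a two-day window, one of which failed.
t0 = datetime(2026, 1, 5, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=8), failed=True,
               restored_at=t0 + timedelta(hours=9)),
    Deployment(t0, t0 + timedelta(hours=12)),
    Deployment(t0, t0 + timedelta(hours=24)),
]
metrics = dora_metrics(sample, window_days=2)
```

Note that nothing in these records says anything about meetings, review wait time, or developer sentiment, which is the gap the blindspots below describe.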

Blindspot 1: Coordination Overhead (47% of Developer Time)

DORA tracks how fast you deploy code. It doesn’t track the 47% of developer time spent in meetings, Slack threads, code reviews, architecture discussions, and context switching between tools. Teams lose 6+ hours per week to tool fragmentation alone. High-maturity platform engineering teams reduce cognitive load by 40-50%—an improvement completely invisible to DORA. You can have blazing-fast deployment frequency while your developers drown in coordination overhead, burn out, and quietly update their LinkedIn profiles.

Blindspot 2: The AI Quality Collapse

Here’s the paradox that breaks DORA: AI writes 41% of all code and boosts developer throughput 30-40%. DORA sees faster velocity and celebrates. What it doesn’t see is the quality collapse happening underneath. Bug density is 23% higher in projects with unreviewed AI-generated code. Code churn—the proportion of new code reverted within two weeks—doubled from 3.1% to 5.7%. A staggering 43% of AI-generated code changes require manual debugging in production, even after passing QA and staging tests.
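Code churn as defined above (new code reverted within two weeks) is straightforward to compute once you know when each line was added and removed. Here is a rough sketch under that definition. The `AddedLine` record and the sample data are hypothetical; in practice the dates would have to be derived from version-control history.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

CHURN_WINDOW = timedelta(days=14)  # "within two weeks", per the definition above


@dataclass
class AddedLine:
    # Hypothetical record; real data would come from version-control history.
    added_on: date
    removed_on: Optional[date] = None  # None means the line is still in the codebase


def churn_rate(lines: list[AddedLine]) -> float:
    """Fraction of newly added lines removed again within the churn window."""
    if not lines:
        return 0.0
    churned = sum(
        1
        for ln in lines
        if ln.removed_on is not None and ln.removed_on - ln.added_on <= CHURN_WINDOW
    )
    return churned / len(lines)


# Made-up sample: 100 added lines, 6 removed after 3 days, 4 removed after 30 days.
start = date(2026, 1, 1)
sample_lines = (
    [AddedLine(start) for _ in range(90)]
    + [AddedLine(start, start + timedelta(days=3)) for _ in range(6)]
    + [AddedLine(start, start + timedelta(days=30)) for _ in range(4)]
)
rate = churn_rate(sample_lines)  # 6 of 100 lines fall inside the window
```

Lines removed after the window count as ordinary maintenance rather than churn, which is why only 6 of the 10 removed lines contribute to the rate.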

Developer trust tells the real story. Only 29% of developers trust AI output in 2026, down 11 percentage points from 2024. Fewer than half always check AI-generated code before committing it. The result? DORA metrics show “Elite” velocity while technical debt compounds exponentially and maintenance costs quadruple within two years.

Blindspot 3: Business Outcomes Disconnect

DORA measures deployment velocity, not value delivered. The traditional four metrics don’t track rework, planning reliability, team load, or business impact. Executives want answers about revenue capture, cost avoidance, and competitive advantage, and DORA can’t provide them. Example: reducing mean time to recovery from four hours to one hour avoids three hours of revenue loss per incident. If your platform generates $50,000 per hour, that single DORA improvement represents $150,000 in risk mitigation value per incident. But DORA just reports “MTTR improved”; the business translation is manual.
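That manual translation is simple arithmetic, and it can be scripted. The sketch below reproduces the example above; the function name and the `incidents` parameter are illustrative additions, and the model assumes revenue is lost at a constant hourly rate for the full duration of an outage.

```python
def avoided_revenue_loss(
    old_mttr_hours: float,
    new_mttr_hours: float,
    revenue_per_hour: float,
    incidents: int = 1,
) -> float:
    """Revenue loss avoided when recovery time shrinks, across a number of incidents."""
    hours_saved_per_incident = old_mttr_hours - new_mttr_hours
    return hours_saved_per_incident * revenue_per_hour * incidents


# The example from the text: MTTR drops from 4 hours to 1 hour at $50,000 per hour.
per_incident = avoided_revenue_loss(4, 1, 50_000)  # 150000
```

Multiplying by an expected incident count (the `incidents` argument) turns the per-incident figure into an annual estimate, which is usually the number executives ask for.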

You can achieve “DORA Elite” status while burning money on unused features, accumulating technical debt that will cripple you in 18 months, and shipping fast-but-wrong solutions that customers hate. Elite teams in 2026 refuse that trade-off.

What Elite Teams Track Beyond DORA

Elite engineering teams don’t abandon DORA in 2026. They layer it with four additional sets of measures spanning three dimensions: developer experience, AI attribution, and business outcomes.

Layer 1: DX Core 4 Framework

The DX Core 4 framework unifies DORA, SPACE, and DevEx into four dimensions: Speed (PRs per engineer), Effectiveness (Developer Experience Index measuring 14 factors of dev experience), Quality (change failure rate plus perceived quality), and Impact (business outcome alignment). The Effectiveness dimension is the game-changer. DXI measures 14 aspects of developer experience on a 5-point scale—things like tool quality, documentation clarity, and ease of collaboration. This captures the “why” behind DORA’s “what.” Example: a team tracking DXI identified coordination overhead as their primary bottleneck, something completely invisible in their “Good” DORA scores.
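To illustrate how survey data can surface a bottleneck like the one in that example, here is a small sketch that finds the lowest-scoring factor across 5-point responses. This is not DX’s actual DXI scoring method, which the text does not describe. The function, the factor keys, and the scores are assumptions, and only three of the fourteen factors are shown.

```python
def lowest_scoring_factor(responses: dict[str, list[int]]) -> tuple[str, float]:
    """Return the survey factor with the lowest mean score on a 1-5 scale."""
    for factor, scores in responses.items():
        if not scores or any(s < 1 or s > 5 for s in scores):
            raise ValueError(f"{factor}: scores must be non-empty and within 1-5")
    averages = {
        factor: sum(scores) / len(scores) for factor, scores in responses.items()
    }
    worst = min(averages, key=averages.get)
    return worst, averages[worst]


# Made-up responses from four developers; factor keys are illustrative.
survey = {
    "tool_quality": [4, 4, 5, 3],
    "documentation_clarity": [3, 4, 3, 4],
    "ease_of_collaboration": [2, 3, 2, 1],
}
factor, score = lowest_scoring_factor(survey)  # ("ease_of_collaboration", 2.0)
```

A plain mean hides disagreement within a team, so a fuller analysis would probably also look at the spread of scores per factor.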

Layer 2: SPACE Framework

SPACE adds five dimensions DORA ignores: Satisfaction and well-being (how fulfilled developers feel), Performance (outcomes, not just activities), Activity (with context, not raw counts), Communication and collaboration, and Efficiency and flow (preserving flow state, minimizing waste). The critical insight: teams can achieve “Elite” DORA status through burnout, skipped documentation, and technical debt accumulation. SPACE metrics catch this before it becomes a crisis. High psychological safety alone correlates with 19% higher productivity, a factor DORA never measures.

Layer 3: AI Attribution Metrics (New for 2026)

Without AI attribution metrics, you cannot explain why productivity increased 30% but stability decreased 7.2%. Elite teams track AI code share (41% of all code on average), quality comparison between AI-generated and human-written code, the trust gap (only 29% trust AI output), and the time-saved-versus-bugs-introduced trade-off (3 hours 45 minutes saved per week, offset by 41% more bugs).

The trust gap is the real metric in 2026, not raw AI adoption. 84% of developers use AI tools, but 96% don’t fully trust the output. That disconnect drives the quality collapse DORA can’t see.
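As a rough sketch of what tracking AI code share and the AI-versus-human quality comparison could look like, the snippet below aggregates per-change records. Everything here is an assumption for illustration: the `Change` record, the `ai_assisted` flag (which would need to come from something like commit metadata or editor telemetry), and the sample numbers.

```python
from dataclasses import dataclass


@dataclass
class Change:
    # Hypothetical record shape; how a change gets labeled AI-assisted is up to you.
    lines: int
    ai_assisted: bool
    bugs_traced: int  # defects later traced back to this change


def ai_attribution(changes: list[Change]) -> dict[str, float]:
    """Compare AI-assisted and human-written changes on code share and defect density."""

    def bugs_per_kloc(subset: list[Change]) -> float:
        total_lines = sum(c.lines for c in subset)
        if total_lines == 0:
            return 0.0
        return 1000 * sum(c.bugs_traced for c in subset) / total_lines

    ai = [c for c in changes if c.ai_assisted]
    human = [c for c in changes if not c.ai_assisted]
    all_lines = sum(c.lines for c in changes)
    return {
        "ai_code_share": sum(c.lines for c in ai) / all_lines if all_lines else 0.0,
        "ai_bugs_per_kloc": bugs_per_kloc(ai),
        "human_bugs_per_kloc": bugs_per_kloc(human),
    }


# Made-up sample: 500 AI-assisted lines with 3 bugs, 1,500 human lines with 3 bugs.
sample_changes = [
    Change(400, True, 2),
    Change(600, False, 2),
    Change(100, True, 1),
    Change(900, False, 1),
]
report = ai_attribution(sample_changes)
```

In this invented sample the AI-assisted code has a 25% share and three times the defect density of the human-written code. Real ratios depend entirely on your own data and on how reliably changes are labeled.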

Layer 4: Business Impact Metrics

Elite teams shifted from “how fast can we deploy?” to “what business value did we deliver?” They track cost per outcome (not percentage savings), feature adoption rates (not just deployment frequency), revenue impact and cost avoidance, and customer satisfaction tied to deployment quality. The result is a measurement framework that combines system metrics (DORA), developer experience (satisfaction, flow, burnout), and business impact (value delivered, not velocity).
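Two of these measures reduce to simple ratios. The sketch below shows one plausible way to compute them. What counts as an “outcome” or an “eligible user” is an assumption each team has to define for itself, and the figures are invented.

```python
def cost_per_outcome(total_cost: float, outcomes_delivered: int) -> float:
    """Total spend divided by the number of business outcomes actually delivered."""
    if outcomes_delivered <= 0:
        raise ValueError("no outcomes delivered; cost per outcome is undefined")
    return total_cost / outcomes_delivered


def adoption_rate(active_feature_users: int, eligible_users: int) -> float:
    """Share of eligible users who actually use a shipped feature."""
    if eligible_users <= 0:
        raise ValueError("eligible_users must be positive")
    return active_feature_users / eligible_users


# Made-up quarter: $120,000 spent on 8 delivered outcomes; 450 of 1,500 users adopted.
quarter_cost = cost_per_outcome(120_000, 8)   # 15000.0
feature_adoption = adoption_rate(450, 1_500)  # 0.3
```

Tracking adoption next to deployment frequency makes it visible when a team ships often but few users pick up what was shipped.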

The 2026 Benchmark Data: What “Good” Looks Like

Elite teams tracking DORA plus three dimensions achieve 40-50% productivity gains while improving team well-being. Mature platform engineering teams see 25-50% faster deployments. Organizations investing in developer experience report up to 53% efficiency gains. Well-being improves rather than degrades: 60-75% of developers feel more fulfilled, and teams with high psychological safety show 19% higher productivity.

The 2025 DORA report introduced seven team archetypes that make the pattern clear. “Harmonious High Achievers” excel across team well-being, product outcomes, and software delivery; they refuse to choose between velocity and sustainability. “Foundational Challenges” teams are trapped in survival mode with low performance, high system instability, and high burnout. The difference isn’t harder work or longer hours. It’s multidimensional measurement that catches problems before they metastasize.

Average teams using DORA alone can’t explain why performance varies. They have high DORA scores but burned-out teams. They ship fast but quality collapses. As one analysis put it: “DORA tells you what is happening, but not why it is happening.” Elite teams need both.

The AI reality check reinforces this. While 84% of developers use AI tools, only 29% trust the output. Real productivity boost after accounting for the 41% bug increase? Just 5-15%, not the 30-40% that raw throughput numbers suggest. The trust gap is the metric that predicts which teams will thrive and which will drown in technical debt.

Key Takeaways

- DORA is table stakes, not the complete picture: Don’t abandon it. Start by layering on one or two additional dimensions based on your biggest blindspot.

- Developer frustration and burnout: Add DX Core 4’s Developer Experience Index to surface the coordination overhead that consumes 47% of developer time.

- AI quality concerns: Add AI attribution metrics tracking code share, quality comparison, and the trust gap (29% trust rate).

- Business value questions: Add business outcome metrics like cost per outcome and feature adoption to answer “what value did this sprint deliver?”

- Unexplained performance variance: Add SPACE framework dimensions—especially Satisfaction and Flow—to understand the “why” behind the “what.”

- Minimum for balanced view: Measure at least three dimensions beyond DORA’s four to five metrics. Track both “what” (DORA) and “why” (DX, SPACE, outcomes).

- Balance velocity with well-being: Elite teams achieve 40-50% gains without burning out their people. Aim for the “Harmonious High Achievers” archetype.

In the AI era, the teams that thrive will be the ones measuring what actually matters, not just what’s easy to track.