Claude Mythos scores 99. You can’t use it. Gemini 3.1 Pro and GPT-5.4 both score 94. Which is better? The answer exposes 2026’s biggest AI benchmark crisis: the tests developers rely on to choose models are saturated, gamed, and increasingly meaningless. When frontier models all score above 95% on standard benchmarks, the question isn’t “which model is best?” anymore. It’s “which benchmarks still matter?”

When All Models Are Excellent, None Are

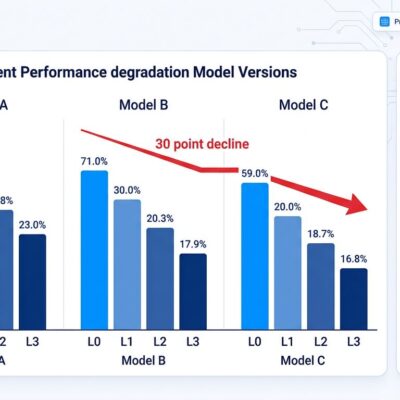

The benchmark collapse happened fast. MMLU, the knowledge test every AI lab cited in 2025, now shows frontier models scoring above 88%. GPT-5.3 Codex hits 93%. HellaSwag, once a reasoning differentiator, saturated at 95%+ across the board. HumanEval and GSM8K joined the graveyard. BenchLM’s April analysis put it plainly: “MMLU still matters historically, but it is much weaker than HLE, GPQA, and MMLU-Pro for separating current frontier rows.”

Score inflation tells the story. In 2025, hitting 80 on composite benchmarks meant you had something. In 2026, 94 is the new average for frontier models. The problem isn’t that models got too good—it’s that benchmarks got predictable. Training data contamination means models have seen the test questions. Optimization for benchmarks replaced optimization for real-world performance. LXT’s analysis confirms what developers suspect: “Models that dominate leaderboards often underperform in production.”

The industry needed harder tests. Fast.

Benchmarks That Still Have Signal

Not every benchmark died. The survivors share one trait: frontier models don’t all score 95%+. They show spread. BenchLM identified which tests still differentiate:

Knowledge & Reasoning: HLE (hard knowledge), GPQA, and MMLU-Pro replaced original MMLU. These aren’t saturated yet.

Coding: SWE-bench Pro, SWE-bench Verified, and LiveCodeBench maintain separation. Claude Opus 4.7 hits 87.6% on SWE-bench Verified. Claude Sonnet 4.5 scores 82%. That’s a gap of more than five points, unlike MMLU, where frontier models bunch together in the high 80s and low 90s.

Agentic Work: Terminal-Bench 2.0, BrowseComp, and OSWorld-Verified test real agent capabilities. Claude Mythos scored 79.6% on OSWorld-Verified: good, but not saturated.

Multimodal: MMMU-Pro and OfficeQA Pro avoid the visual reasoning saturation trap.

The breakout star? LiveBench. Fresh questions every month from new sources. Objective scoring. Top models still score under 70%. LXT calls it “probably the single best general benchmark right now.” When your top performers can’t crack 70%, you’ve got a test that still teaches something.

The Mythos Paradox

Claude Mythos scores 99—the highest composite score in the industry. It dominates SWE-bench Verified at 93.9%, crushes USAMO 2026 at 97.6%, and achieves a perfect 100% on Cybench’s cybersecurity challenges. By every benchmark measure, it’s the best model available.

Except it’s not available. At all.

Anthropic gated Mythos to “Project Glasswing partners”—a curated group of internet-critical companies and open-source maintainers. Defensive security research only. No public API. No general release planned. Vellum’s leaderboard ranks it #1, but that ranking means nothing to 99.9% of developers.

The Mythos situation exposes the benchmark game’s central flaw: optimizing for scores that don’t translate to access or utility. You can’t choose “the best model” if you can’t use it. The real competition starts at #2.

The Real Leaderboard

With Mythos out of reach, the accessible frontier clusters tight. Gemini 3.1 Pro and GPT-5.4 tie at 94. Claude Opus 4.6 and GPT-5.4 Pro both score 92. Cristhian Villegas notes that “for the first time, there is no absolute winner.” Different models dominate different domains:

Coding: Claude Opus 4.6 leads with 87.6% on SWE-bench Verified, integrated into Cursor, Windsurf, and Claude Code.

Mathematics: Gemini 3.1 Pro and GPT-5.2 tie at 100% on AIME 2025. Yes, 100%. That benchmark’s done.

Reasoning: Claude 3 Opus hits 95.4% on GPQA Diamond, the hardest knowledge test still showing spread.

Cost: DeepSeek V4 disrupts everything.

The 50x Cost Question

DeepSeek V4 costs $0.30 per million input tokens. Claude Opus 4.6 costs $5.00. That’s not a pricing difference; it’s a roughly 17x gap. On output tokens, DeepSeek charges $0.50 versus Claude’s $25.00. The gap widens to 50x.
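The ratios can be checked directly from the per-million-token prices quoted above. This is a minimal sketch; the `PRICES` table and the `price_ratio` helper are illustrative names, not part of any vendor SDK.

```python
# Prices in USD per million tokens, as quoted in this article.
PRICES = {
    "DeepSeek V4": {"input": 0.30, "output": 0.50},
    "Claude Opus 4.6": {"input": 5.00, "output": 25.00},
}


def price_ratio(expensive: str, cheap: str, kind: str) -> float:
    """Return how many times more `expensive` costs than `cheap` for one token kind."""
    return PRICES[expensive][kind] / PRICES[cheap][kind]


for kind in ("input", "output"):
    ratio = price_ratio("Claude Opus 4.6", "DeepSeek V4", kind)
    print(f"{kind}: {ratio:.1f}x")
# input: 16.7x
# output: 50.0x
```

The headline multiple depends on which token type dominates your workload, so output-heavy tasks sit closer to the 50x end.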

Performance claims are unverified as of April 11, but leaked benchmarks put DeepSeek V4 at 90% on HumanEval and 80%+ on SWE-bench Verified. If accurate, that approaches Claude Opus territory at a fraction of the cost (as little as 1/50th on output tokens). Open-weight GLM-5 scores 85 on composite benchmarks, just 9 points below the proprietary leaders.

The question developers now ask: Is Claude’s 92 worth up to 50 times DeepSeek’s price? For some use cases, yes. Claude’s SWE-bench lead matters if you’re building code agents. But for many workloads, DeepSeek’s reported 85-90 performance at $0.30 beats Claude’s 92 at $5.00.

Cost-performance is the new battleground. Benchmarks that ignore pricing tell half the story.

What Developers Should Actually Do

Composite scores mislead. MMLU is dead. Claude Mythos isn’t available. So what matters?

Use benchmarks with spread. If the top 5 models all score 95%+, that test doesn’t help you choose. Look for benchmarks where leaders score 70-90% and there’s distance between ranks. LiveBench, SWE-bench Verified, GPQA, and HLE still differentiate.
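The "spread" rule can be sketched as a small check. The `spread_report` function and the 95-point ceiling are assumptions that encode the rule of thumb above, and the score tables use only the handful of figures quoted in this article, so they are far from complete leaderboards.

```python
def spread_report(scores: dict[str, float], top_n: int = 5, ceiling: float = 95.0) -> dict:
    """Summarize how well a benchmark separates its top models.

    `saturated` is True when every one of the top `top_n` scores is at or
    above `ceiling`, which is the point where the test stops helping you choose.
    """
    if not scores:
        raise ValueError("need at least one score")
    top = sorted(scores.values(), reverse=True)[:top_n]
    return {
        "spread": round(top[0] - top[-1], 1),  # distance between best and worst of the top group
        "saturated": all(s >= ceiling for s in top),
    }


# Illustrative inputs: only the scores mentioned in this article.
swe_bench_verified = {"Claude Mythos": 93.9, "Claude Opus 4.7": 87.6, "Claude Sonnet 4.5": 82.0}
aime_2025 = {"Gemini 3.1 Pro": 100.0, "GPT 5.2": 100.0}

print("SWE-bench Verified:", spread_report(swe_bench_verified))
print("AIME 2025:", spread_report(aime_2025))
```

With these inputs, SWE-bench Verified shows an 11.9-point spread and is not flagged, while AIME 2025 shows zero spread and is flagged as saturated.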

Match benchmarks to your domain. Building a code assistant? SWE-bench matters. MMLU doesn’t. Scientific research? GPQA and HLE, since AIME 2025 is already maxed out. Generic knowledge? LiveBench.

Test with YOUR data. Villegas recommends testing “at least three models with your real data. Benchmarks are a guide, but performance on your specific domain can vary significantly.” LXT echoes this: create 100-200 test cases that represent your actual workload.
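One way to run that kind of test is a tiny harness like the sketch below. Everything here is hypothetical: each model is represented as a plain function from prompt to answer (in practice you would wrap whichever client you use), the grader is a simple case-insensitive exact match, and the three cases stand in for the 100-200 you would write from your own workload.

```python
from typing import Callable

# A "model" here is any function that takes a prompt and returns an answer.
Model = Callable[[str], str]


def evaluate(models: dict[str, Model], cases: list[tuple[str, str]]) -> dict[str, float]:
    """Return each model's pass rate on (prompt, expected_answer) cases."""
    if not cases:
        raise ValueError("need at least one test case")
    results = {}
    for name, ask in models.items():
        passed = sum(
            ask(prompt).strip().lower() == expected.strip().lower()
            for prompt, expected in cases
        )
        results[name] = passed / len(cases)
    return results


# Placeholder cases and stub models, purely for illustration.
cases = [("2+2?", "4"), ("Capital of France?", "Paris"), ("Opposite of hot?", "cold")]
models = {
    "model_a": lambda p: {"2+2?": "4", "Capital of France?": "paris"}.get(p, ""),
    "model_b": lambda p: "4",
    "model_c": lambda p: "",
}

for name, rate in evaluate(models, cases).items():
    print(f"{name}: {rate:.0%}")
```

Exact match is the crudest possible grader. For free-form output you would swap in a domain-specific check, but the structure stays the same: same cases, every candidate model, one pass rate each.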

Factor in cost. A model that’s 5% better but 50x more expensive might be the wrong choice. DeepSeek V4 democratizes access to near-frontier performance. For many teams, that’s the winner.
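A rough way to make that trade-off concrete is to set a quality bar and pick the cheapest model that clears it. In this sketch the prices are the ones quoted earlier in the article, while the pass rates and the monthly token volumes are placeholder assumptions; substitute numbers from your own evaluation and usage.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    input_price: float   # USD per million input tokens
    output_price: float  # USD per million output tokens
    pass_rate: float     # from your own eval set (placeholder values below)


def monthly_cost(c: Candidate, input_mtok: float, output_mtok: float) -> float:
    """Cost in USD for a month of traffic, with volumes in millions of tokens."""
    return c.input_price * input_mtok + c.output_price * output_mtok


def cheapest_good_enough(
    candidates: list[Candidate], min_pass_rate: float, input_mtok: float, output_mtok: float
) -> Optional[Candidate]:
    """Return the lowest-cost candidate that meets the quality bar, or None."""
    ok = [c for c in candidates if c.pass_rate >= min_pass_rate]
    if not ok:
        return None
    return min(ok, key=lambda c: monthly_cost(c, input_mtok, output_mtok))


candidates = [
    Candidate("DeepSeek V4", 0.30, 0.50, pass_rate=0.88),      # pass rate is hypothetical
    Candidate("Claude Opus 4.6", 5.00, 25.00, pass_rate=0.93),  # pass rate is hypothetical
]

# Assumed workload: 300M input tokens and 100M output tokens per month.
for bar in (0.85, 0.90):
    pick = cheapest_good_enough(candidates, bar, input_mtok=300, output_mtok=100)
    cost = monthly_cost(pick, 300, 100)
    print(f"bar {bar:.0%}: {pick.name} at ${cost:,.2f}/month")
```

Under these assumed numbers the cheaper model wins at an 85% bar and the more expensive one wins at 90%, which is the point: the right choice depends on where your own quality threshold sits.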

Ask the right question. Not “which model scores highest?” but “which model solves MY problem at MY budget?” The era of one dominant model is over. The era of choosing the right tool for the job has begun.