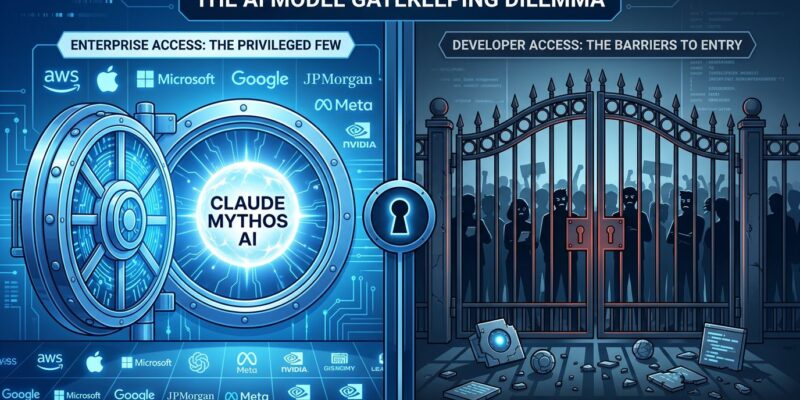

Anthropic announced Claude Mythos Preview on April 8, 2026, describing it as the most capable AI model the company has ever built, and said at the same time that it would not be publicly available. Only 50 organizations get access through “Project Glasswing,” an invite-only program whose members include Amazon Web Services, Apple, Microsoft, Google, JPMorgan Chase, and 45 others. Meanwhile, the public is limited to Claude Opus 4.7, which Anthropic conceded does not match Mythos when it released the model eight days later. Anthropic says safety concerns justify the restriction: Mythos’ cybersecurity capabilities are “too dangerous” for public release. Critics question whether this is a legitimate safety measure or enterprise monetization dressed up as responsibility.

Mythos Capabilities: Benchmark Dominance and Zero-Day Discovery

Claude Mythos Preview achieves state-of-the-art performance on 17 of 18 major AI benchmarks. It scores 93.9% on SWE-bench Verified (software engineering tasks), 94.6% on GPQA Diamond (graduate-level science reasoning), and 97.6% on USAMO (mathematical olympiad problems), and it saturates Cybench at 100%, meaning that benchmark can no longer distinguish stronger models. The only benchmark where Mythos doesn’t lead outright is MMMLU, where Gemini 3.1 Pro’s reported range of 92.6-93.6% overlaps Mythos’ 92.7%.

The cybersecurity capabilities are at the core of the controversy. The UK AI Security Institute independently evaluated Mythos and found it achieves a 73% success rate on expert Capture the Flag tasks “no model could complete before April 2025.” Mythos is the first AI to finish “The Last Ones,” a 32-step simulated corporate network attack, completing it in 3 of 10 attempts. Beyond benchmarks, Anthropic claims Mythos discovered thousands of zero-day vulnerabilities across every major operating system and web browser, including a 27-year-old memory corruption bug in OpenBSD.

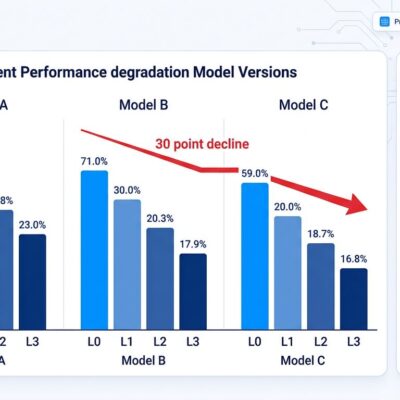

The performance gap between the gated model and public alternatives is measurable. Mythos scores 83.1% on CyberGym compared to Claude Opus 4.6’s 66.6%, a 16.5-point advantage for the model reserved for the 50 Glasswing organizations. For code generation, security research, and advanced reasoning, developers without Glasswing access get materially worse tools.
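The CyberGym comparison can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the two scores quoted in this article; the function and constant names are illustrative, not part of any benchmark tooling.

```python
def point_gap(gated: float, public: float) -> float:
    """Absolute gap in percentage points between two benchmark scores."""
    return round(gated - public, 1)


def relative_gap(gated: float, public: float) -> float:
    """Gap expressed as a percentage of the public model's score."""
    return round((gated - public) / public * 100, 1)


# Scores quoted in the article (CyberGym, percent)
MYTHOS_CYBERGYM = 83.1
OPUS_46_CYBERGYM = 66.6

if __name__ == "__main__":
    print(point_gap(MYTHOS_CYBERGYM, OPUS_46_CYBERGYM))     # percentage points
    print(relative_gap(MYTHOS_CYBERGYM, OPUS_46_CYBERGYM))  # percent of Opus 4.6's score
```

The distinction matters when reading gap claims: the same difference is 16.5 percentage points, but roughly a 24.8% relative improvement over Opus 4.6’s score.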

The Controversy: Safety, Compute Capacity, or Control?

Anthropic CEO Dario Amodei stated, “We do not plan to make Claude Mythos Preview generally available. We may release a similar model publicly when new safeguards are in place.” No timeline was provided. The official framing is safety: offensive cybersecurity capabilities in public hands would enable attackers to weaponize zero-day discoveries at scale. The problem with this narrative is that it assumes 50 corporations are more trustworthy than the broader developer community.

Venture capitalist Marc Andreessen offered an alternative explanation. He questioned whether Anthropic is “really holding back Mythos because of security concerns, or because they lack the compute to support a general rollout. They’ve had frequent outages and throttled users during peak times.” If compute capacity is the bottleneck, framing gatekeeping as “responsible scaling” becomes marketing rather than safety.

Security researcher Bruce Schneier was more blunt. CSO Online reported that Schneier called the Mythos announcement “an elaborate sales pitch,” noting that Project Glasswing has produced only one confirmed CVE so far despite claims of “thousands” of zero-days discovered. The skepticism centers on a fundamental question: if Mythos is too dangerous for public use, why is it safe for JPMorgan Chase, AWS, and Google? What makes mega-corporations more responsible than independent security researchers?

Two-Tier AI: Elite Access, Public Scraps

Anthropic released Claude Opus 4.7 publicly on April 16, eight days after the Claude Mythos announcement. Axios reported Anthropic “publicly conceded that the new Opus model does not match the performance of Mythos.” Developers who pay for Claude Pro subscriptions get the weaker model. Enterprise partners in Glasswing get the frontier model at $25 per million input tokens and $125 per million output tokens, compared to Opus 4.7’s $3/$15 pricing. The message is clear: if you’re not AWS, Apple, or JPMorgan, you get scraps.
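To put the two price points side by side, here is a rough sketch that applies the per-million-token prices quoted above to a workload. The workload size (2M input tokens, 0.5M output tokens) is a made-up example, and the names are illustrative.

```python
# Per-million-token prices quoted in the article (USD)
MYTHOS_PRICE = {"input": 25.0, "output": 125.0}
OPUS_47_PRICE = {"input": 3.0, "output": 15.0}


def workload_cost(price: dict[str, float], input_mtok: float, output_mtok: float) -> float:
    """Cost in USD for a workload measured in millions of tokens."""
    return price["input"] * input_mtok + price["output"] * output_mtok


# Hypothetical workload: 2M input tokens and 0.5M output tokens
mythos_cost = workload_cost(MYTHOS_PRICE, 2.0, 0.5)
opus_cost = workload_cost(OPUS_47_PRICE, 2.0, 0.5)
ratio = mythos_cost / opus_cost

if __name__ == "__main__":
    print(f"Mythos: ${mythos_cost:.2f}, Opus 4.7: ${opus_cost:.2f}, ratio: {ratio:.2f}x")
```

Because the input and output prices differ by the same factor (25/3 = 125/15), the multiple comes out to about 8.3x regardless of the input/output mix.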

OpenAI followed Anthropic’s lead. The company released GPT-5.4-Cyber with restricted access to “vetted security vendors, organizations, and researchers” and no public availability. The two-tier system is now the industry pattern: gated frontier models (Mythos, GPT-5.4-Cyber) for elite enterprises, hobbled public models (Opus 4.7, Gemini Pro) for everyone else. This isn’t a temporary staged rollout like GPT-4’s early access period. Anthropic has said it does not plan to make Mythos Preview generally available, leaving open only the possible release of “a similar model” once new safeguards are in place.

The irony is hard to miss. Meta, not a company usually praised for openness, releases Llama 3.3’s weights for anyone to download under its community license. Meanwhile, Anthropic, the “mission-driven” company built on AI safety principles, gatekeeps its most capable model to 50 corporations. Which approach is more democratic?

What Developers Need to Know

There is no public application process for Project Glasswing. Access is invite-only for “critical infrastructure” organizations. Anthropic provided $100 million in usage credits to cover Glasswing participants, but ordinary developers have no path to access. The choice for non-enterprise developers is stark: accept the weaker Opus 4.7 with its 17-34% capability gap on key benchmarks, or switch to open-source alternatives like Llama 3.3 and DeepSeek that lag 6-12 months behind frontier closed models.

The competitive disadvantage is real. If you’re building security research tools, advanced code generation systems, or complex reasoning applications, Glasswing partners have access to capabilities you don’t. Startups, nonprofits, academic researchers, and indie developers are locked out of the frontier.

The broader question is whether this gatekeeping makes AI safer. The Council on Foreign Relations called Mythos “an inflection point for AI and global security,” noting that restricting access “concentrates power” while adversaries like China, Russia, and North Korea develop equivalent offensive capabilities regardless. Gatekeeping makes models less auditable, not more secure. Open-source models like Llama haven’t caused AI catastrophes. The safety narrative assumes restricting access prevents misuse, but it also assumes 50 corporations are more trustworthy than independent verification.

Key Takeaways

- Anthropic’s Claude Mythos Preview achieves state-of-the-art performance on 17 of 18 benchmarks and can autonomously discover zero-day vulnerabilities across major operating systems and browsers, but it’s restricted to 50 organizations through Project Glasswing with no public release planned.

- The safety rationale is contested: critics question whether Anthropic is gatekeeping due to genuine cybersecurity risks or due to compute capacity limits and enterprise revenue opportunities, especially given frequent outages and only one confirmed CVE from Glasswing so far.

- A two-tier AI system is emerging where frontier models (Mythos, GPT-5.4-Cyber) go to a small set of vetted organizations while the public gets weaker alternatives (Opus 4.7, Gemini Pro), with measurable capability gaps of 17-34% on key benchmarks.

- Developers without Glasswing access must choose between accepting materially worse public models or switching to open-source alternatives that lag 6-12 months behind frontier capabilities, creating competitive disadvantages for startups, nonprofits, and independent researchers.

- The core question remains unanswered: If Mythos is too dangerous for public use, why is it safe for 50 corporations? Gatekeeping concentrates power in mega-corporations while making models less auditable, and it doesn’t prevent adversaries from developing equivalent offensive AI capabilities.

Anthropic positioned itself as the responsible AI company, but gatekeeping the most capable model to elite enterprises looks more like corporate control than AI safety. The precedent is set. OpenAI followed. Google and Meta are watching. Frontier AI is becoming a Fortune 50 exclusive, and developers should question whether “safety” justifies that concentration of power.