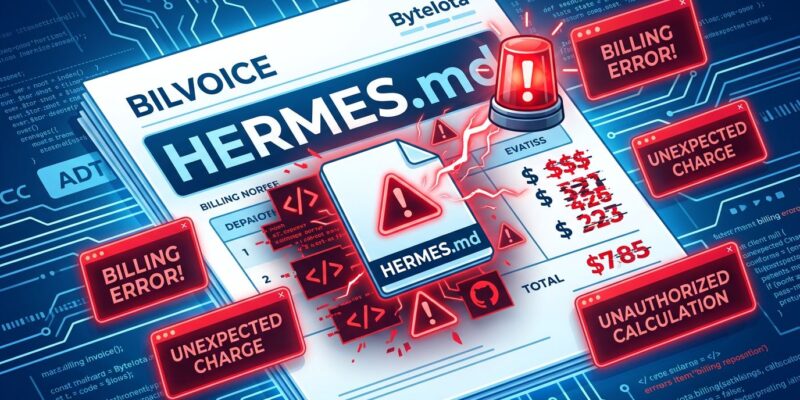

On April 25, 2026, Anthropic users discovered that a filename, the literal string “HERMES.md”, appearing anywhere in their Git commit history triggered a billing bug. Instead of drawing on their $200/month Claude Code Max subscriptions, affected requests were charged at pay-as-you-go API rates. One user was overcharged more than $200 while 86% of their prepaid subscription capacity sat unused. When they contacted support, Anthropic refused a refund, categorizing the bug as an “un-refundable technical error.” Only after the story reached the front page of Hacker News with 828 upvotes and drew outrage from developers like ThePrimeagen and Theo from t3.gg did Anthropic reverse course and promise refunds plus $200 in credits.

How a Filename Became a Billing Landmine

The technical absurdity is hard to overstate. Claude Code scans your repository context when you make requests. Anthropic’s content filter detected the string “HERMES.md” and flagged it as evidence of third-party tool usage. The system then rerouted the request from the user’s subscription tier to pay-as-you-go API billing, charging expensive per-token rates even though 86% of the user’s subscription capacity was still available.

The error message compounded the problem: “You’re out of extra usage.” That’s misleading. The user wasn’t out of quota. The billing system had silently switched them to a different pricing tier because a filename in their Git history matched Anthropic’s detection logic.
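The routing failure described above can be modeled in a few lines. The sketch below is a hypothetical illustration, not Anthropic’s code: the marker tuple, the tier names, and the balance parameters are all assumptions. It shows how a bare substring check over request context lets an old commit message decide the billing tier, and how a flagged request can end in an “out of extra usage” message while subscription quota sits untouched.

```python
# Hypothetical model of the failure mode described in the article.
# NOT Anthropic's implementation: the marker list, tier names, and
# balance parameters are assumptions chosen for illustration.

THIRD_PARTY_MARKERS = ("HERMES.md",)  # assumed detection string


def naive_route(context: str, subscription_left: float,
                extra_usage_left: float) -> tuple[str, str]:
    """Choose a billing tier from raw request text; return (tier, message)."""
    # Plain substring match: an old commit message counts the same as
    # an actively running third-party harness.
    flagged = any(marker in context for marker in THIRD_PARTY_MARKERS)
    if not flagged and subscription_left > 0:
        return "subscription", "ok"
    # Flagged requests skip the subscription check entirely, so unused
    # quota never enters the decision. (Unflagged requests that exhaust
    # the subscription also land here, which is the legitimate overflow path.)
    if extra_usage_left <= 0:
        # The message describes the extra-usage balance, not the
        # subscription, which is why it reads as "out of quota".
        return "rejected", "You're out of extra usage."
    return "pay_as_you_go", "ok"


# A commit message that merely mentions the file is enough to flip the tier.
git_log = "a1b2c3 docs: add HERMES.md\nd4e5f6 fix: typo"
print(naive_route(git_log, subscription_left=0.86, extra_usage_left=50.0))
# -> ('pay_as_you_go', 'ok')
print(naive_route(git_log, subscription_left=0.86, extra_usage_left=0.0))
# -> ('rejected', "You're out of extra usage.")
print(naive_route("d4e5f6 fix: typo", subscription_left=0.86, extra_usage_left=0.0))
# -> ('subscription', 'ok')
```

Because the check runs on free text, any repository that merely mentions the marker is treated exactly like one running the third-party tool.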

ThePrimeagen captured the developer sentiment: “Just another day of being convinced that Anthropic has no clue what the hell is going on in their codebase.” Theo from t3.gg put it more bluntly: “It is genuinely insane that Anthropic will bill you differently if you mention certain words in your prompt or have certain files in your codebase.”

April 4 Policy Creates the Trap

The bug didn’t appear in a vacuum. On April 4, 2026, Anthropic blocked third-party tools like OpenClaw and Hermes Agent from using Claude Pro and Max subscription quotas. Users who relied on these tools had to switch to pay-as-you-go API billing. Anthropic’s rationale: third-party harnesses bypass prompt cache optimizations and place “outsized strain” on infrastructure.

To enforce the policy, Anthropic deployed content filters designed to detect third-party tool usage. HERMES.md is collateral damage from that overly aggressive detection logic. The string likely matches a documentation filename from NousResearch’s Hermes Agent project, a self-improving AI agent unrelated to most developers’ work. The filter couldn’t distinguish between active third-party tool usage and an innocent filename reference in someone’s commit history.

No safeguards prevented the false positive from triggering financial harm. The system switched billing tiers without user knowledge or consent, ignored unused subscription credits, and provided no way to audit or challenge the decision in real time.
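For contrast, here is a hypothetical sketch of the three safeguards the paragraph above says were missing: no move to paid usage without explicit consent, unused quota recorded alongside the decision, and an audit trail for every tier choice. Everything in it (the `Account` fields, the `flag_reason` parameter, the `needs_confirmation` outcome) is an assumption for illustration; it does not describe any real Anthropic or Claude Code API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Account:
    # All fields are hypothetical; no real billing API is modeled here.
    subscription_left: float          # fraction of plan quota still unused
    api_billing_opt_in: bool = False  # explicit consent to pay-as-you-go
    audit_log: list[str] = field(default_factory=list)


def guarded_route(account: Account, flag_reason: Optional[str]) -> str:
    """Pick a billing tier, never moving to paid usage silently."""
    if flag_reason is None and account.subscription_left > 0:
        decision = "subscription"
    elif account.api_billing_opt_in:
        decision = "pay_as_you_go"
    else:
        # No consent on file: pause and ask the user instead of charging.
        decision = "needs_confirmation"
    # Record every decision with its trigger and the unused quota, so the
    # customer (or a human support agent) can audit and dispute it later.
    account.audit_log.append(
        f"{decision} | trigger={flag_reason or 'none'} | "
        f"subscription_left={account.subscription_left:.0%}"
    )
    return decision


acct = Account(subscription_left=0.86)
print(guarded_route(acct, flag_reason="matched 'HERMES.md' in git log"))
# -> needs_confirmation
print(acct.audit_log[-1])
# -> needs_confirmation | trigger=matched 'HERMES.md' in git log | subscription_left=86%
```

Under this sketch, the HERMES.md case would have paused with a reason the user could see and challenge, rather than billing per-token rates in the background.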

Refund Denial Until Social Media Forced Reversal

When the affected user contacted Anthropic support, they hit a wall. The company refused to refund the overcharges, categorizing them as an “un-refundable technical error.” Support is handled by Fin, an AI chatbot that users consistently describe as giving “unhelpful responses” and as never escalating to a human agent, even when explicitly asked. Multiple customers report the same experience: Fin acknowledges billing errors in writing, but no human ever follows up.

The refund policy reversal came only after public pressure. The bug report hit Hacker News on April 29 with 828 upvotes and 313 comments. ThePrimeagen and Theo amplified the story on social media. An Anthropic employee then announced the company would issue refunds plus $200 in credits to affected users.

That sequence reveals the accountability problem. An AI company charging $200/month offers no human support path for billing disputes. Customers shouldn’t need Twitter threads or Hacker News front-page posts to get refunds for documented bugs. The message: public embarrassment works better than support tickets.

A Pattern, Not an Anomaly

HERMES.md isn’t Anthropic’s first billing bug. In January 2026, one user was charged 26 times in a single day. In February, double billing was confirmed as a system bug. Between March 25 and April 20, at least six users across Taiwan, Poland, the UK, and the US reported unauthorized charges. Some were billed for “Gift Max 5X” or “Gift Pro” subscriptions they never purchased. Others were charged despite being on the free tier.

Trustpilot reviews give Anthropic a 3/10 rating, with billing complaints dominating feedback. In late 2025, the company deactivated 1.45 million accounts, adding to concerns about system stability. The pattern suggests systemic billing architecture problems, not isolated incidents.

AI Billing Is a Black Box—And That’s Untenable

Anthropic’s HERMES.md fiasco illustrates a broader industry problem: AI billing has become a black box. CFOs can’t forecast costs when invisible content filters secretly reroute charges from subscriptions to API tiers. Developers can’t audit why they’re being billed differently. There are no real-time dashboards showing which billing tier is active, and no spending alerts before overages hit.

The enterprise software industry spent decades establishing accountability standards: transparent pricing, human support for disputes, SLA-backed uptime guarantees, and predictable costs. AI companies want enterprise budgets but resist enterprise accountability. Traditional SaaS pricing was seat-based and predictable. AI pricing is usage-based, elastic, and—in Anthropic’s case—dependent on content filters scanning your Git history.

Industry analysts note the problem: “CFOs are scrambling as AI pricing breaks traditional SaaS billing models,” with companies facing “opaque, hard-to-forecast expenses with fragmented pricing and technical invoices that resemble utility bills.” The demand for transparency is clear: real-time dashboards, spending caps, and documentation explaining what triggers different billing tiers.

Anthropic’s competitors are already there. OpenAI provides credit-based pricing with real-time dashboards. Google’s Vertex AI offers transparent per-request pricing and usage monitoring. Microsoft’s Azure OpenAI includes consumption-based billing with alerts and spending caps. Anthropic’s content-filter-based routing is undocumented, unmonitored, and—as HERMES.md proves—error-prone.

Trust Once Lost

Anthropic charges $200 per month for Claude Code Max. At that price point, billing accuracy isn’t optional. Content-based routing that can be triggered by a filename in your Git history is architectural malpractice. Refusing refunds for acknowledged bugs until social media forces a reversal is a customer service failure.

The lesson extends beyond Anthropic. AI companies entering enterprise markets must accept enterprise accountability standards. Transparent billing. Human support for disputes. Real-time monitoring and spending controls. Documentation explaining what affects pricing. No more “un-refundable technical errors” when the error is yours, not the customer’s.

Developers will vote with their wallets. When one provider’s billing system is a black box and another’s is transparent, the choice is obvious. Trust once lost is hard to rebuild—and Anthropic is losing trust one billing bug at a time.