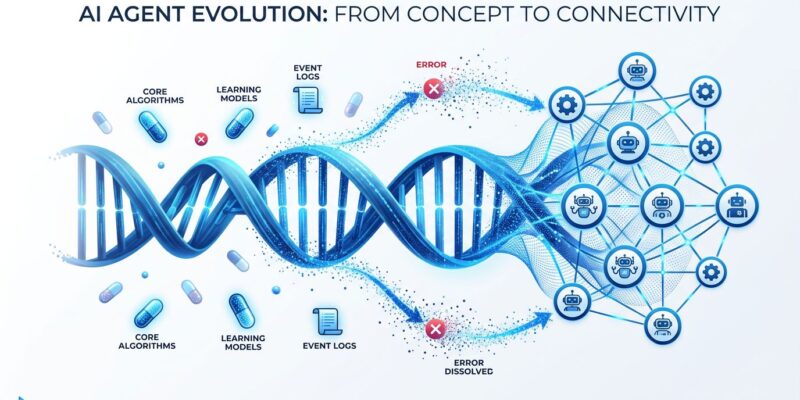

AI agents fail. A lot. They hallucinate tool parameters, ignore validation rules, and repeat the same mistakes across deployments. Traditionally, fixing this means developers spending weekends tweaking prompts, debugging edge cases, and manually updating configurations—only to watch agents fail differently next week. The Genome Evolution Protocol (GEP) flips this model: instead of humans debugging agents, agents debug themselves. Systematically. With complete audit trails.

GEP enables production AI agents to analyze their own failures, extract reusable improvement patterns (Genes), bundle validated fixes (Capsules), and maintain full evolution lineage (Events). Self-evolving agents are not theoretical: JPMorgan Chase uses them for fraud detection across all transaction streams, Siemens runs predictive orchestration agents that auto-optimize supply chains, and pharmaceutical companies deploy them for regulatory document drafting. Gartner predicts that 40% of enterprise applications will embed AI agents by the end of 2026, up from under 5% in 2025. Agent reliability is no longer optional.

From Manual Debugging to Systematic Evolution

GEP treats agent improvements like biological genetics: it captures proven solutions as “Genes” (reusable improvement patterns), shares them as “Capsules” (validated fixes), and maintains evolutionary lineage through “Events” (append-only audit logs). The Evolver framework, open-sourced in February 2026 and trending on GitHub (it gained 525 stars in a single day at the time of writing), turns ad hoc prompt tweaking into auditable, systematic evolution.

The six-phase evolution cycle works like this:

- SCAN reads agent transcripts and error logs.

- SIGNALS extracts typed failures (log_error, protocol_drift, high_tool_usage).

- SELECTION scores available Genes by signal match quality.

- MUTATION creates a risk-assessed change record with a blast radius calculation.

- PROMPT generates a GEP-constrained evolution directive.

- SOLIDIFY validates the output, executes whitelisted commands, and appends the Event to the audit log.
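To make the SIGNALS and SELECTION phases concrete, here is a minimal TypeScript sketch under a simplified data model. The three signal names and the default Gene IDs come from this article; the `Signal` and `Gene` shapes, the `matches` field, the regex heuristics, and the tool-call budget of 20 are hypothetical illustrations, not Evolver's actual implementation.

```typescript
// Hypothetical sketch of GEP's SIGNALS and SELECTION phases.
// Types, regexes, and the tool budget are illustrative assumptions.
type SignalType = "log_error" | "protocol_drift" | "high_tool_usage";

interface Signal {
  type: SignalType;
  detail: string;
}

interface Gene {
  id: string;
  category: "repair" | "optimize" | "innovate";
  matches: SignalType[]; // signal types this Gene addresses (assumed field)
}

// Default Gene IDs from the article, mapped to signals by assumption.
const defaultGenes: Gene[] = [
  { id: "gene_gep_repair_from_errors", category: "repair", matches: ["log_error"] },
  {
    id: "gene_gep_optimize_prompt_and_assets",
    category: "optimize",
    matches: ["protocol_drift", "high_tool_usage"],
  },
  // Driven by user feature requests, which this sketch does not model.
  { id: "gene_gep_innovate_from_opportunity", category: "innovate", matches: [] },
];

// SIGNALS: turn raw log lines and a tool-call count into typed signals.
function extractSignals(logLines: string[], toolCalls: number, toolBudget = 20): Signal[] {
  const signals: Signal[] = [];
  for (const line of logLines) {
    if (/\berror\b/i.test(line)) {
      signals.push({ type: "log_error", detail: line });
    } else if (/\bdrift\b/i.test(line)) {
      signals.push({ type: "protocol_drift", detail: line });
    }
  }
  if (toolCalls > toolBudget) {
    signals.push({ type: "high_tool_usage", detail: `${toolCalls} tool calls` });
  }
  return signals;
}

// SELECTION: score each Gene by the share of observed signals it matches.
// Ties keep the earlier Gene; no signals means nothing is selected.
function selectGene(genes: Gene[], signals: Signal[]): Gene | undefined {
  let best: Gene | undefined;
  let bestScore = 0;
  for (const gene of genes) {
    const hits = signals.filter((s) => gene.matches.includes(s.type)).length;
    const score = signals.length === 0 ? 0 : hits / signals.length;
    if (score > bestScore) {
      best = gene;
      bestScore = score;
    }
  }
  return best;
}
```

Scoring by the fraction of observed signals a Gene covers keeps selection deterministic and easy to explain, which matters when every choice ends up in the audit log.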

Compare this to traditional debugging: you manually notice failures, diagnose root causes, write fixes, test manually, and redeploy. Worse, the knowledge dies with you: tribal wisdom lost in Slack threads. GEP automates this entire loop while preserving institutional memory. Evolver case studies report a 60% error reduction after the initial evolution cycles. One agent learns. A million inherit.


The Three GEP Building Blocks

Genes are abstract improvement strategies, each categorized as repair, optimize, or innovate. Evolver ships with three defaults: gene_gep_repair_from_errors fixes bugs from log patterns, gene_gep_optimize_prompt_and_assets handles refactoring, and gene_gep_innovate_from_opportunity adds features from user requests. You tune the mix with evolution strategies, expressed as innovate/optimize/repair percentages: balanced (50/30/20), innovate (80/15/5, for rapid shipping), harden (20/40/40, for stability), or repair-only (80% repair, for emergencies).
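The strategy presets amount to weights over Gene categories. The minimal sketch below assumes each cycle picks a category at random in proportion to those weights; the article gives the percentages but not the sampling method. Repair-only is left out because only its 80% repair share is specified, and `STRATEGIES` and `pickCategory` are hypothetical names.

```typescript
// Hypothetical sketch: strategy presets as category weights
// (percentages from the article, ordered innovate/optimize/repair).
type Category = "innovate" | "optimize" | "repair";
type Weights = Record<Category, number>;

const STRATEGIES: Record<string, Weights> = {
  balanced: { innovate: 50, optimize: 30, repair: 20 },
  innovate: { innovate: 80, optimize: 15, repair: 5 },
  harden: { innovate: 20, optimize: 40, repair: 40 },
  // repair-only omitted: the article gives only its 80% repair share.
};

// Pick a category with probability proportional to its weight.
// `rand` must return a number in [0, 1); it is injectable for testing.
function pickCategory(weights: Weights, rand: () => number = Math.random): Category {
  const order: Category[] = ["innovate", "optimize", "repair"];
  const total = order.reduce((sum, c) => sum + weights[c], 0);
  let r = rand() * total;
  for (const c of order) {
    if (r < weights[c]) return c;
    r -= weights[c];
  }
  return order[order.length - 1]; // guard against floating-point leftovers
}
```

Under balanced, about half of all cycles would pick an innovate Gene, which is why harden or repair-only suits a stabilization period better.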

Capsules store concrete, validated solutions. Each includes a confidence score, blast radius data (how many files the fix affects), and an environment fingerprint. Reusing Capsules prevents repair loops: once you have solved a problem, the Capsule stores that pattern for good, so identical failures do not trigger redundant reasoning.
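A Capsule store can be sketched as a map keyed by failure signal plus environment fingerprint. This is a minimal illustration assuming a hypothetical `Capsule` shape built from the fields listed above and an arbitrary 0.8 confidence threshold for reuse; Evolver's real Capsule format may differ.

```typescript
// Hypothetical Capsule shape built from the fields the article lists.
interface Capsule {
  signalKey: string; // e.g. "log_error:invalid_param" (assumed key format)
  envFingerprint: string; // e.g. runtime and dependency versions (assumed)
  fix: string; // summary of the validated change
  confidence: number; // 0..1
  blastRadius: number; // number of files the fix touched
}

class CapsuleStore {
  private readonly capsules = new Map<string, Capsule>();

  private key(signalKey: string, envFingerprint: string): string {
    return `${signalKey}|${envFingerprint}`;
  }

  // Keep only the highest-confidence fix per failure and environment.
  save(capsule: Capsule): void {
    const k = this.key(capsule.signalKey, capsule.envFingerprint);
    const existing = this.capsules.get(k);
    if (!existing || capsule.confidence > existing.confidence) {
      this.capsules.set(k, capsule);
    }
  }

  // Return a reusable fix, or undefined if none is known or trusted enough,
  // in which case the agent falls back to a full evolution cycle.
  lookup(signalKey: string, envFingerprint: string, minConfidence = 0.8): Capsule | undefined {
    const capsule = this.capsules.get(this.key(signalKey, envFingerprint));
    if (!capsule || capsule.confidence < minConfidence) return undefined;
    return capsule;
  }
}
```

Keying on the environment fingerprint means a fix validated on one runtime is not blindly replayed on another.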

Events form the append-only audit log with parent-child traceability. Every evolution cycle creates an Event record. You get full lineage of any change—critical for compliance in pharmaceutical and financial deployments. Roll back when needed. Prove systematic improvement to regulators. Git for AI behavior.
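The append-only, parent-linked structure of Events is what makes lineage queries cheap. Here is a minimal in-memory sketch assuming hypothetical `EvolutionEvent` and `EventLog` types; Evolver persists Events in its own log format, which this does not reproduce.

```typescript
// Hypothetical in-memory model of GEP Events (not Evolver's storage format).
interface EvolutionEvent {
  readonly id: string;
  readonly parentId: string | null; // null for the first event in a lineage
  readonly geneId: string;
  readonly summary: string;
}

class EventLog {
  private readonly events: EvolutionEvent[] = [];
  private readonly byId = new Map<string, EvolutionEvent>();

  // Append-only: no update or delete method exists, and entries are frozen.
  append(event: EvolutionEvent): void {
    if (this.byId.has(event.id)) throw new Error(`duplicate event id: ${event.id}`);
    if (event.parentId !== null && !this.byId.has(event.parentId)) {
      throw new Error(`unknown parent: ${event.parentId}`);
    }
    const frozen = Object.freeze({ ...event });
    this.events.push(frozen);
    this.byId.set(frozen.id, frozen);
  }

  entries(): readonly EvolutionEvent[] {
    return this.events;
  }

  // Follow parent links back to the root, returning the lineage oldest-first.
  lineage(id: string): string[] {
    const chain: string[] = [];
    let current = this.byId.get(id);
    while (current) {
      chain.push(current.id);
      current = current.parentId === null ? undefined : this.byId.get(current.parentId);
    }
    return chain.reverse();
  }
}
```

Requiring the parent to exist before appending keeps every lineage unbroken; rolling back then means walking this chain to the Event you want to return to.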

```bash
# Evolution strategy presets
node index.js --loop                               # Balanced: daily operations
EVOLVE_STRATEGY=harden node index.js --loop        # Stability focus
EVOLVE_STRATEGY=repair-only node index.js --loop   # Emergency mode
```
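These presets are selected through the EVOLVE_STRATEGY environment variable. The minimal sketch below shows how a runner might resolve it, assuming that an unset variable means balanced (as the first command suggests) and that unknown values should fail loudly. `resolveStrategy` is a hypothetical helper, not part of Evolver.

```typescript
// Hypothetical resolver for EVOLVE_STRATEGY; not Evolver's own code.
const KNOWN_STRATEGIES = ["balanced", "innovate", "harden", "repair-only"] as const;
type StrategyName = (typeof KNOWN_STRATEGIES)[number];

// Assumption: an unset or empty variable means "balanced".
function resolveStrategy(raw: string | undefined): StrategyName {
  const value = (raw ?? "").trim().toLowerCase();
  if (value === "") return "balanced";
  const match = KNOWN_STRATEGIES.find((s) => s === value);
  if (!match) {
    // Fail loudly instead of silently falling back to a different mix.
    throw new Error(
      `Unknown EVOLVE_STRATEGY "${raw}"; expected one of: ${KNOWN_STRATEGIES.join(", ")}`,
    );
  }
  return match;
}
```

In Node you would call `resolveStrategy(process.env.EVOLVE_STRATEGY)`. Rejecting a typo such as `hardn` is deliberate: quietly falling back to balanced during an emergency would reintroduce a 50% innovation share.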

Production Deployment: The Three-Phase Approach

Don’t go autonomous on day one. That’s a footgun. Phase 1 (weeks 1-4): run a single manual evolution per week, always with the --review flag. Study the generated prompts and build confidence. Phase 2 (weeks 5-8): automate runs with cron under supervision, keeping review mode enabled, and add notification workflows. Phase 3 (month 3+): allow autonomous apply selectively, restricted to low-risk mutations that affect five or fewer files.
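The rollout phases reduce to a gate on each proposed mutation. This minimal sketch assumes a hypothetical `Mutation` record carrying a file count and a low/medium/high risk label; the five-file limit comes from the Phase 3 rule, while the cadence difference between Phases 1 and 2 (weekly manual runs versus cron) is not modeled.

```typescript
// Hypothetical gate mirroring the three-phase rollout.
type Phase = 1 | 2 | 3;
type Risk = "low" | "medium" | "high";
type Decision = "review" | "auto-apply";

interface Mutation {
  filesAffected: number; // blast radius
  risk: Risk; // assumed to come from MUTATION's risk assessment
}

const MAX_AUTO_APPLY_FILES = 5; // Phase 3 limit from the article

// Phases 1 and 2 always go through human review; Phase 3 auto-applies
// only low-risk mutations that touch at most five files.
function gate(phase: Phase, mutation: Mutation): Decision {
  if (phase < 3) return "review";
  const lowRisk = mutation.risk === "low";
  const smallBlastRadius = mutation.filesAffected <= MAX_AUTO_APPLY_FILES;
  return lowRisk && smallBlastRadius ? "auto-apply" : "review";
}
```

Defaulting to "review" on every branch except one makes the safe path the fallback: any new risk label or phase you add later is reviewed until you explicitly allow it.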

Critical gotchas that will break production: by default, Evolver runs in “mad dog mode” and applies changes immediately unless you pass the --review flag. Always use --review until you trust the patterns. Setting EVOLVE_ALLOW_SELF_MODIFY=true is catastrophic: it lets Evolver modify its own prompt logic, causing cascading failures. Never enable it. Repair loops inject innovation signals after three consecutive repairs, which can add unwanted features when you only need fixes. Use the repair-only strategy during stabilization.
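If you record the category of each evolution cycle, spotting an approaching repair loop is a simple scan of the tail of that history. This is a minimal monitoring sketch assuming you keep that record yourself; the threshold of three comes from the article, while `repairStreak` and `repairLoopWarning` are hypothetical helpers, not Evolver internals.

```typescript
// Hypothetical monitor over your own record of cycle categories.
type Category = "repair" | "optimize" | "innovate";

const REPAIR_LOOP_THRESHOLD = 3; // per the article: three consecutive repairs

// Count how many of the most recent cycles were repairs in a row.
function repairStreak(history: Category[]): number {
  let streak = 0;
  for (let i = history.length - 1; i >= 0 && history[i] === "repair"; i--) {
    streak++;
  }
  return streak;
}

// Flag the point at which, per the article, innovation signals get injected,
// so an operator can switch to repair-only before unwanted features appear.
function repairLoopWarning(history: Category[]): string | null {
  const streak = repairStreak(history);
  return streak >= REPAIR_LOOP_THRESHOLD
    ? `${streak} consecutive repairs: innovation signals may now be injected`
    : null;
}
```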

Safety gates protect you: command whitelisting allows only node, npm, and npx to execute. 180-second timeouts prevent runaway validation. Git integration enables instant rollback. Protected source files prevent Evolver from modifying itself. Strict cwd isolation is mandatory for multi-agent setups because it prevents log contamination across agents.
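The command whitelist and timeout can be illustrated with Node's `child_process.spawnSync`. This minimal sketch assumes validation commands arrive as a binary name plus an argument list; the whitelist (node, npm, npx) and the 180-second limit come from the article, while `runValidation` and `RunResult` are hypothetical names.

```typescript
import { spawnSync } from "node:child_process";

// Whitelist and timeout from the article; everything else is illustrative.
const ALLOWED_COMMANDS = new Set(["node", "npm", "npx"]);
const VALIDATION_TIMEOUT_MS = 180_000;

interface RunResult {
  ok: boolean;
  status: number | null;
  reason?: string;
}

// Hypothetical runner: execute a validation command only if whitelisted,
// without a shell, under a hard timeout, inside the agent's own directory.
function runValidation(command: string, args: string[], agentCwd: string): RunResult {
  if (!ALLOWED_COMMANDS.has(command)) {
    return { ok: false, status: null, reason: `command not whitelisted: ${command}` };
  }
  const result = spawnSync(command, args, {
    cwd: agentCwd, // per-agent cwd isolation
    timeout: VALIDATION_TIMEOUT_MS, // kill runaway validation
    shell: false, // arguments are passed directly, not re-parsed by a shell
    stdio: "ignore",
  });
  if (result.error) {
    // Set when the process fails to start or is killed by the timeout.
    return { ok: false, status: result.status, reason: result.error.message };
  }
  return { ok: result.status === 0, status: result.status };
}
```

Whitelisting binaries limits which programs run, not what they do: `node -e` can still execute arbitrary code, which is why Git rollback and protected files matter as well. On Windows, npm and npx are `.cmd` shims that `spawnSync` cannot launch without a shell, a case this sketch does not handle.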

For production deployment best practices, the BrightCoding self-evolving agents guide provides comprehensive safety checklists and phased rollout strategies validated across enterprise deployments.

```bash
# Install and first evolution run
npm install -g @evomap/evolver
cd your-agent-directory
git init                                # Required for rollback
evolver setup-hooks --platform=cursor   # Or claude-code
node index.js --review                  # Human-in-loop mode
```

When to Use the Genome Evolution Protocol

Three major self-evolving frameworks stand out. Evolver/GEP evolves behavior protocols via signal-based scoring, with command whitelists and audit trails, making it ideal for regulated environments. OpenAI’s Cookbook approach evolves prompts using parallel LLM graders with version rollback, which is simpler for teams that need prompt improvement without protocol overhead. Karpathy’s autoresearch evolves Python code against a single hard metric with Git revert, giving faster iteration for ML researchers automating hyperparameter tuning.
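For contrast, the single-metric approach described for autoresearch boils down to a keep-or-revert loop. The sketch below is a generic illustration of that technique in TypeScript, not autoresearch’s code (which targets Python and reverts via Git); `keepOrRevert` is a hypothetical name, and higher metric values are assumed to be better.

```typescript
// Generic keep-or-revert loop over a single scalar metric (illustrative only).
interface Outcome<T> {
  kept: T;
  score: number;
  accepted: number; // how many candidates were "committed"
}

function keepOrRevert<T>(
  initial: T,
  candidates: T[],
  metric: (config: T) => number, // assumption: higher is better
): Outcome<T> {
  let kept = initial;
  let score = metric(initial);
  let accepted = 0;
  for (const candidate of candidates) {
    const candidateScore = metric(candidate);
    if (candidateScore > score) {
      // Improvement: keep the change (the analogue of a commit).
      kept = candidate;
      score = candidateScore;
      accepted++;
    }
    // Otherwise discard the candidate (the analogue of a git revert).
  }
  return { kept, score, accepted };
}

// Example: tune a learning rate whose hypothetical optimum is 0.01.
const tuned = keepOrRevert(0.1, [0.05, 0.2, 0.01, 0.03], (lr) => -Math.abs(lr - 0.01));
```

Compared with GEP’s signal scoring, this loop needs no protocol or audit trail, only one number that captures what “better” means, which is what makes it fast for ML experiments.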

Choose GEP when you need audit trails for compliance, when cross-agent capability sharing via the EvoMap network adds value, when Node.js 18+ is acceptable, and when protocol constraints are tolerable overhead. Choose alternatives when you’re a Python-native team (OpenAI Cookbook is simpler), when you’re doing ML experimentation (autoresearch iterates faster), or when you’re running single-agent DevOps (less complexity needed).

Choosing the wrong framework wastes weeks. GEP’s protocol overhead isn’t justified for simple prompt tweaking. But for multi-agent enterprise deployments that need compliance documentation, GEP’s audit trails and Capsule sharing become killer features. The Softmax Data comparison provides a detailed technical analysis of when each framework excels.

Real-World Production Deployments

Self-evolving agents are in production now, not research prototypes. JPMorgan Chase deploys agents across all transaction streams that automatically update their fraud detection behavior as new patterns emerge. Siemens runs predictive orchestration agents that find alternative suppliers and re-route deliveries autonomously when disruptions hit. Pharmaceutical companies use self-evolving agents for regulatory document drafting, with repeatable retraining loops that capture reviewer feedback and promote improvements.

The market validates this approach: agentic AI is projected to grow from $7.8 billion in 2026 to $52 billion by 2030, nearly seven-fold growth. Real deployments have cut KYC onboarding at global banks from days to minutes. Evolver case studies show a 60% reduction in agent errors. Siemens’ supply chain agents adapt continuously without manual reconfiguration.

Early adopters gain a competitive advantage through systematic reliability improvement. Developers building agents today can implement the same patterns Fortune 500 companies are betting on. The EvoMap network amplifies this: publish your validated Capsules, fetch proven patterns from others, and skip redundant debugging. Institutional memory becomes shared infrastructure.

```bash
# Lifecycle management for production
node src/ops/lifecycle.js start   # Background daemon
node src/ops/lifecycle.js check   # Health check and auto-restart
node src/ops/lifecycle.js stop    # Graceful shutdown
```

Key Takeaways

- GEP automates agent debugging through Genes (improvement patterns), Capsules (validated fixes), and Events (audit trails)—stop weekend prompt tweaking

- Deploy in three phases: weeks 1-4 manual review only, weeks 5-8 supervised automation, month 3+ selective autonomous apply for low-risk changes

- Critical footguns: always use the --review flag until you trust the patterns, never enable EVOLVE_ALLOW_SELF_MODIFY, and watch for repair loops injecting unwanted innovation

- Choose GEP for regulated environments needing audit trails and cross-agent learning (pharmaceutical, financial), not for simple prompt evolution

- Production evidence: self-evolving agents run JPMorgan Chase fraud detection, Siemens supply chains, and pharma regulatory drafting; Evolver case studies report a 60% error reduction

Install Evolver via npm: npm install -g @evomap/evolver. It requires Node.js 18+ and Git for rollback. Start with --review mode and the balanced strategy. The EvoMap network awaits: one agent learns, a million inherit. Stop debugging. Start evolving.