OpenAI broke free from Microsoft’s cloud on Tuesday, launching GPT-5.4, Codex, and Managed Agents on Amazon Web Services’ Bedrock platform—one day after ending Azure’s exclusive access to its frontier AI models. The timing wasn’t coincidental. This was a calculated power shift that reshapes the AI cloud wars and eliminates the forced choice between OpenAI’s models and infrastructure independence.

The Monday-Tuesday Maneuver

On Monday, April 27, Microsoft and OpenAI announced a renegotiated partnership ending Azure’s exclusive cloud access. On Tuesday, April 28, OpenAI made GPT-5.4 immediately available on Amazon Bedrock, with GPT-5.5 coming within weeks. AWS CEO Matt Garman captured the customer frustration that drove this shift: “Their production applications run in AWS. Their data is in AWS. They trust the security of AWS, and we’ve forced them for the last couple of years, to get great OpenAI models, to go to other places.”

That “forcing” ends now. The announcement at AWS’s San Francisco event marks the beginning of multi-cloud AI—where enterprises choose infrastructure based on fit, not model availability.

What’s Available on Bedrock Now

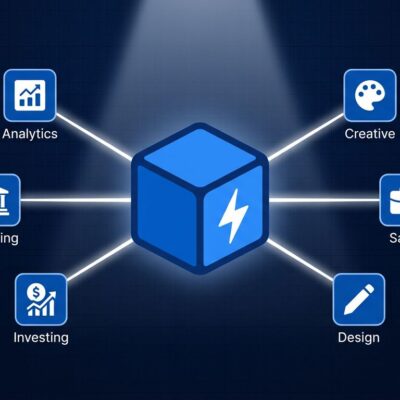

Three offerings launched in limited preview: OpenAI models (GPT-5.4 now, GPT-5.5 within weeks), the Codex coding agent, and Bedrock Managed Agents powered by OpenAI. All three integrate natively with AWS: customers authenticate with AWS credentials, inherit IAM access controls, connect privately through PrivateLink, and log activity through CloudTrail. No separate authentication systems. No forced multi-cloud complexity.
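Inheriting IAM controls means access to a model can be scoped like any other AWS resource. As a minimal sketch, the Python below builds an IAM policy document that allows invoking only an approved list of Bedrock foundation models in one region. It assumes the model is called directly by its foundation-model ARN rather than through an inference profile, and the OpenAI model ID is a hypothetical placeholder, since the real identifier is not given here.

```python
import json

# Hypothetical placeholder: substitute the actual GPT-5.4 model ID
# shown in your Bedrock console.
OPENAI_MODEL_ID = "openai.gpt-5-4-example"


def bedrock_invoke_policy(region: str, model_ids: list[str]) -> dict:
    """Build an IAM policy document that allows invoking only the
    listed Bedrock foundation models in a single region."""
    # Foundation-model ARNs leave the account field empty.
    resources = [
        f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
        for model_id in model_ids
    ]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeApprovedModelsOnly",
                "Effect": "Allow",
                # InvokeModel also governs the Converse API; streaming calls
                # (InvokeModelWithResponseStream, ConverseStream) need the second action.
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": resources,
            }
        ],
    }


if __name__ == "__main__":
    policy = bedrock_invoke_policy("us-east-1", [OPENAI_MODEL_ID])
    print(json.dumps(policy, indent=2))
```

The resulting JSON can be attached to a role or group like any other IAM policy, so model access follows the same review process a team already uses for S3 buckets or databases.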

Codex on Bedrock matters for the more than 4 million developers who use it weekly. Enterprise teams can now run OpenAI’s coding agent inside their existing AWS environments, with inference processed through Bedrock while developers keep working in the CLI, desktop app, or VS Code extension. Managed Agents bring OpenAI-powered agent workflows to AWS with built-in enterprise controls, supporting multi-step reasoning, tool use, and long-running tasks that keep context across complex business processes.

Who This Unblocks

AWS-native teams no longer face the Azure-or-GPT dilemma. Before Tuesday, accessing OpenAI’s models meant migrating workloads to Microsoft’s cloud, duplicating infrastructure, or accepting the operational overhead of running across two clouds. Now they can use GPT-5.4 where their data already lives, under security controls they already trust and within compliance frameworks they already maintain.

Multi-cloud enterprises gain negotiating leverage. When model access required Azure exclusivity, Microsoft set the terms. With OpenAI available on both Azure and AWS—and likely Google Cloud soon—enterprises choose based on infrastructure fit, pricing, and integration quality. OpenAI’s revenue chief told employees in an internal memo that while the Microsoft relationship had been “critical,” it “has also limited our ability to meet enterprises where they are—for many that’s Bedrock.”

What Changed in the Microsoft Deal

The renegotiated partnership flips the economics and removes the constraints. Under the old agreement, Microsoft held exclusive access to OpenAI’s products and IP, and OpenAI paid Microsoft a 20% share of its revenue with no cap. The new deal gives Microsoft a nonexclusive license through 2032, ends Microsoft’s revenue-share payments to OpenAI, and caps OpenAI’s payments to Microsoft through 2030. As CNBC reported, the shift removes “significant long-term uncertainty” while opening OpenAI to customers on AWS, Google Cloud, and beyond.

What This Means for Multi-Cloud AI

Amazon committed up to $50 billion to OpenAI in February: $15 billion immediately and another $35 billion contingent on undisclosed conditions. Microsoft has invested $13 billion since 2019 but no longer controls where OpenAI’s models run. That’s the power shift: Amazon is willing to commit more for access than Microsoft invested for exclusivity.

The competitive dynamics change. Hyperscalers can no longer differentiate on model exclusivity: OpenAI models run on both Azure and AWS, with Google Cloud likely next. Competition shifts to integration quality, enterprise tooling, pricing, and developer experience. Amazon Bedrock now offers one of the broadest model portfolios behind a single API, including OpenAI’s GPT-5.4, Anthropic Claude, Meta Llama, Cohere, and Amazon Titan.
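A single API means the request shape does not depend on the model provider. As a rough sketch, the Python below builds arguments for the Converse operation of boto3’s `bedrock-runtime` client; moving from one provider to another changes only `modelId`. The OpenAI model ID is a hypothetical placeholder, the Anthropic ID should be checked against what your account and region actually offer, and `ask` needs boto3 plus configured AWS credentials to run.

```python
# Model IDs for illustration only; confirm exact identifiers in the Bedrock console.
MODELS = {
    "openai": "openai.gpt-5-4-example",  # hypothetical placeholder, not a real ID
    "anthropic": "anthropic.claude-3-5-sonnet-20240620-v1:0",
}


def converse_request(model_id: str, prompt: str, max_tokens: int = 512) -> dict:
    """Build keyword arguments for bedrock-runtime's Converse API.
    The message format is identical for every provider; only modelId differs."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(model_id: str, prompt: str) -> str:
    """Send one request and return the reply text (needs boto3 and AWS credentials)."""
    import boto3  # imported lazily so converse_request works without boto3 installed

    client = boto3.client("bedrock-runtime")
    response = client.converse(**converse_request(model_id, prompt))
    blocks = response["output"]["message"]["content"]
    # Some models emit non-text blocks (e.g. reasoning) alongside the answer.
    return "".join(block["text"] for block in blocks if "text" in block)


if __name__ == "__main__":
    # Print the request for each provider without calling AWS.
    for provider, model_id in MODELS.items():
        print(provider, converse_request(model_id, "Summarize our Q3 incident log."))
```

Keeping the request builder separate from the network call is a design choice for testability: the same dictionary can be inspected, logged, or unit-tested without touching AWS.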

For developers and enterprises, the implication is clear: cloud choice should be based on where your infrastructure already operates, not where specific AI models are available. As Stratechery’s Ben Thompson noted, Azure’s exclusivity was “actively damaging Microsoft’s investment in OpenAI” because enterprises prioritized their existing cloud infrastructure over model access. OpenAI on Bedrock removes that friction.

The multi-cloud AI era begins not with redundancy for its own sake, but with eliminating vendor lock-in as a decision factor. Use OpenAI’s models on Azure if you’re Azure-native. Use them on AWS if you’re AWS-native. Soon, perhaps, use them on Google Cloud if that’s where your workloads run. The model stays the same. Your infrastructure doesn’t have to change.