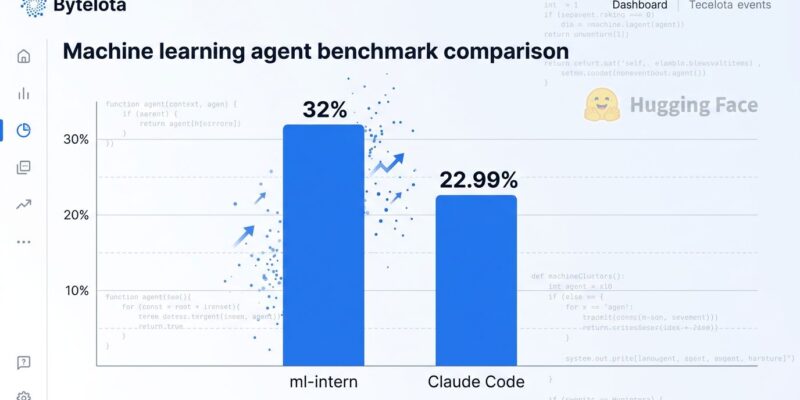

Hugging Face released ml-intern, an open-source AI agent that automates LLM post-training workflows, on April 21, 2026. In demonstrations, it improved Qwen3-1.7B from 10% to 32% accuracy on the GPQA benchmark in under 10 hours, ahead of Claude Code’s 22.99% on the same task. This marks a significant milestone: an open-source specialized agent beating a major proprietary general-purpose coding tool on a reasoning benchmark for the first time.

The timing matters. The 2026 State of Code survey revealed a verification bottleneck: 84% of developers use AI tools, but only 29% trust the output. ml-intern tackles this problem through iterative self-correction: it diagnoses failures, adjusts training strategies, and retrains until benchmark targets are met, for a reported 70% time saving with less manual review overhead.

How ml-intern Works: 5-Phase Autonomous Workflow

ml-intern operates as a virtual ML engineer through five interconnected phases. First, it conducts a literature review by browsing arXiv and Hugging Face Papers, reading methodology sections and traversing citation graphs to identify relevant training techniques. Next, it discovers datasets by searching the 200,000+ options on Hugging Face Hub, assessing their quality, and reformatting them for training.

The agent then generates Python training scripts using advanced techniques like Group Relative Policy Optimization (GRPO) or synthetic data generation for edge cases. It launches these jobs on cloud GPUs via Hugging Face Jobs, monitors progress, and uploads sessions for tracking. Finally, ml-intern evaluates results, diagnoses specific failures (like reward collapse in RLHF pipelines), and retrains with adjustments until performance improves.
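To make the GRPO reference concrete, here is a minimal sketch of the group-relative advantage step that gives the method its name: several completions are sampled for one prompt, and each reward is normalized against the mean and standard deviation of its own group. The function name, the `eps` constant, and the use of the population standard deviation are assumptions for illustration, not code taken from ml-intern.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the other samples drawn for the same prompt.

    Hypothetical helper: the name and eps are illustrative choices.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std of the group (an assumption)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled completions for one prompt, scored 1.0 if correct, else 0.0.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
# Correct completions get a positive advantage (about +1), wrong ones about -1.

# If every completion receives the same reward, all advantages are zero.
flat = group_relative_advantages([1.0, 1.0, 1.0, 1.0])
```

The second call shows one situation a diagnosis step might watch for: when all samples in a group score identically, the advantages vanish and that group contributes no learning signal.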

Built on Hugging Face’s smolagents framework, the system runs up to 300 iterations with automatic doom loop detection to prevent repetitive patterns. smolagents has the agent express its actions as code instead of JSON tool calls, an architectural choice that reduces steps by approximately 30% compared to traditional agent frameworks like LangChain or AutoGen.
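The iteration cap and doom loop detection can be pictured with a small sketch. It assumes that a "doom loop" means the agent proposing the same action several times in a row; the real detection logic in ml-intern may differ, and every name here (`run_agent`, `is_doom_loop`, `window`) is hypothetical.

```python
from collections import deque

MAX_ITERATIONS = 300  # iteration cap mentioned in the article


def is_doom_loop(history, window=3):
    """Return True when the last `window` actions are identical."""
    if len(history) < window:
        return False
    recent = list(history)[-window:]
    return all(action == recent[0] for action in recent)


def run_agent(next_action, max_iterations=MAX_ITERATIONS, window=3):
    """Call next_action(step) until it returns None, repeats itself, or hits the cap.

    Returns a (status, actions_taken) tuple.
    """
    history = deque(maxlen=window)
    for step in range(max_iterations):
        action = next_action(step)
        if action is None:  # the agent signals that it is finished
            return "done", step
        history.append(action)
        if is_doom_loop(history, window):
            return "doom_loop", step + 1
    return "max_iterations", max_iterations


# An agent that keeps proposing the same action is stopped after three tries.
status, steps = run_agent(lambda step: "relaunch identical training job")
```

Comparing raw action strings is the simplest possible check; a production agent would more plausibly compare normalized tool calls or error signatures.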

Benchmark Proof: Outperforms Claude Code on GPQA

The GPQA benchmark measures graduate-level science reasoning, making it a rigorous test of autonomous agent capabilities. ml-intern achieved 32% accuracy compared to Claude Code’s 22.99%, a margin of about 9 percentage points that suggests an advantage for specialization over general-purpose tools. The Qwen3-1.7B model started from a 10% baseline, crossed 27.5% in just 3 hours, and reached 32% in under 10 hours through 12 supervised fine-tuning passes.
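The figures above mix absolute and relative comparisons, so a quick calculation helps keep them apart. The snippet uses only the three accuracy numbers quoted in this section; the variable names are illustrative.

```python
baseline, ml_intern, claude_code = 10.0, 32.0, 22.99  # GPQA accuracy, in percent

margin_points = ml_intern - claude_code  # gap between the two agents
gain_points = ml_intern - baseline       # absolute gain over the base model
relative_gain = ml_intern / baseline     # final accuracy as a multiple of baseline

print(f"{margin_points:.2f} {gain_points:.0f} {relative_gain:.1f}x")
# prints: 9.01 22 3.2x
```

In other words, the margin over Claude Code is about 9 percentage points, while the gain over the untuned model is 22 points, or 3.2 times the baseline accuracy.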

Moreover, ml-intern delivered a 60% improvement on HealthBench by autonomously generating synthetic medical training examples for edge cases—including medical hedging language and multilingual emergency response scenarios—when it determined existing datasets were insufficient. This self-directed problem-solving mirrors how human ML researchers approach domain adaptation challenges.

The significance extends beyond benchmark scores. This represents the first instance of an open-source ML agent outperforming a major proprietary coding assistant on a reasoning benchmark, signaling a strategic shift in the open versus proprietary AI landscape.

Getting Started: Two Operational Modes

ml-intern offers interactive and headless modes for different workflows. Interactive mode launches a chat session where users guide the agent’s research and training decisions—ideal for novel research requiring human expertise. Headless mode executes single tasks with auto-approval, enabling CI/CD integration for automated fine-tuning pipelines.

Setup requires three API tokens: ANTHROPIC_API_KEY (powers the reasoning LLM), HF_TOKEN (access to Hugging Face Hub and Jobs), and GITHUB_TOKEN (code search and repository access). Hugging Face is provisioning $1,000 in GPU and Anthropic credits for early users, covering multiple experiments without upfront investment.
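Before launching the agent, it can help to confirm that all three tokens are present. The check below is a small sketch that uses only the variable names listed above; the helper `missing_tokens` is hypothetical and not part of ml-intern.

```python
import os

# The three environment variables named in the setup instructions.
REQUIRED_TOKENS = ("ANTHROPIC_API_KEY", "HF_TOKEN", "GITHUB_TOKEN")


def missing_tokens(env=None):
    """Return the required variable names that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_TOKENS if not env.get(name)]


# Example with a placeholder environment that lacks the GitHub token.
example_env = {"ANTHROPIC_API_KEY": "placeholder", "HF_TOKEN": "placeholder"}
print(missing_tokens(example_env))  # prints ['GITHUB_TOKEN']
```

Calling `missing_tokens()` with no argument checks the real process environment, which suits a pre-flight step in a script or CI job.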

Interactive example:

ml-intern

User: "Improve Qwen2-7B on GSM8K math benchmark"

ml-intern:

1. Reading GSM8K benchmark documentation...

2. Found 3 relevant papers on math reasoning improvement

3. Identified technique: Chain-of-thought fine-tuning + GRPO

4. Searching Hugging Face for math datasets...

5. Found: GSM8K (8,792 grade school math problems)

6. Writing training script with GRPO...

7. Launching job on A100 (estimated 4 hours)...

8. Training complete. Accuracy: 67% → 82% (+15 points)

Headless mode simplifies automation:

ml-intern "Fine-tune Llama-3-8B for medical Q&A using MedQA dataset"

A web app is also available for non-technical exploration at Hugging Face Spaces, requiring no local setup or command-line knowledge.
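For CI/CD use, the headless invocation could be wrapped in a short script. This sketch assumes only the form shown in this section, the `ml-intern` executable followed by a single quoted task; any additional flags, exit-code conventions, or output formats are unknown here, and the helper names are hypothetical.

```python
import shutil
import subprocess


def build_command(task):
    """Build the argument list for a headless run: the CLI name plus one task string."""
    return ["ml-intern", task]


def run_headless(task, timeout_s=None):
    """Run one headless task and raise if the CLI is missing or exits non-zero."""
    if shutil.which("ml-intern") is None:
        raise FileNotFoundError("ml-intern was not found on PATH")
    return subprocess.run(build_command(task), check=True, timeout=timeout_s)


command = build_command("Fine-tune Llama-3-8B for medical Q&A using MedQA dataset")
```

Passing the task as a separate list element, rather than building a shell string, avoids quoting problems when the task text itself contains quotes or ampersands.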

When to Use ml-intern vs Alternatives

ml-intern specializes in ML post-training and outperforms general tools in this domain, but it’s not suitable for all tasks. Use ml-intern for benchmark optimization, domain adaptation (medical, legal, finance), rapid prototyping of paper techniques, and synthetic data generation. These are the workflows where its 70% time savings and autonomous research capabilities deliver maximum value.

However, Claude Code remains the stronger choice for general refactoring and multi-file edits, scoring 80.8% on SWE-bench, a software engineering benchmark outside ml-intern’s ML focus. GitHub Copilot still leads for real-time autocomplete and moment-to-moment coding flow. The most productive engineering teams in 2026 use multiple tools strategically: Copilot for autocomplete, Claude Code for architecture exploration, and ml-intern for ML post-training.

Understanding when to use each tool maximizes productivity. ml-intern isn’t a replacement for all coding assistants. It is a specialized instrument for ML workflows, and specialists tend to outperform generalists in their own domains.

Open-Source Catches Up to Proprietary

ml-intern represents a broader trend: open-source specialized agents outperforming proprietary general-purpose tools. The GPQA results (32% vs 22.99%) aren’t just numbers—they signal that transparency and domain focus can beat closed ecosystems and feature breadth. This matters strategically for ML teams evaluating tooling investments.

The verification bottleneck identified in the State of Code survey—where 84% use AI tools but only 29% trust output—finds a partial solution in ml-intern’s iterative self-correction loops. Instead of generating code that requires extensive human review, the agent diagnoses its own failures and retrains until targets are met. Trust increases when the system demonstrates self-verification.

Additionally, ml-intern’s integration with the Hugging Face ecosystem (200,000+ datasets, model hub, compute jobs) exemplifies the 2026 developer tools trend where integration quality beats feature quantity. Context switching costs developers an estimated $78,000 annually in lost productivity, and tools that minimize this overhead win adoption battles.

The open-source advantage extends beyond cost. Transparency enables debugging, customization, and trust—critical factors when 71% of developers distrust AI-generated output. As specialized open-source agents continue outperforming general proprietary alternatives in specific domains, the strategic calculus for ML infrastructure shifts toward open, focused tools.

Key Takeaways

- ml-intern beats Claude Code 32% to 22.99% on GPQA, marking the first time an open-source ML agent has outperformed a major proprietary coding assistant on a reasoning benchmark

- A reported 70% time saving through autonomous workflows: literature review, dataset discovery, training script generation, execution, and iterative improvement can all run without human intervention

- Two operational modes serve different needs—interactive for novel research requiring human guidance, headless for CI/CD automation of standard fine-tuning pipelines

- Specialized tools outperform general-purpose alternatives in specific domains: use ml-intern for ML post-training, Claude Code for refactoring, GitHub Copilot for autocomplete

- The verification bottleneck has a partial solution in iterative self-correction: ml-intern diagnoses failures and retrains autonomously, reducing manual review overhead while improving trust

- Available now via GitHub repo, web app, or CLI with $1,000 in credits (GPU + Anthropic API) for early users