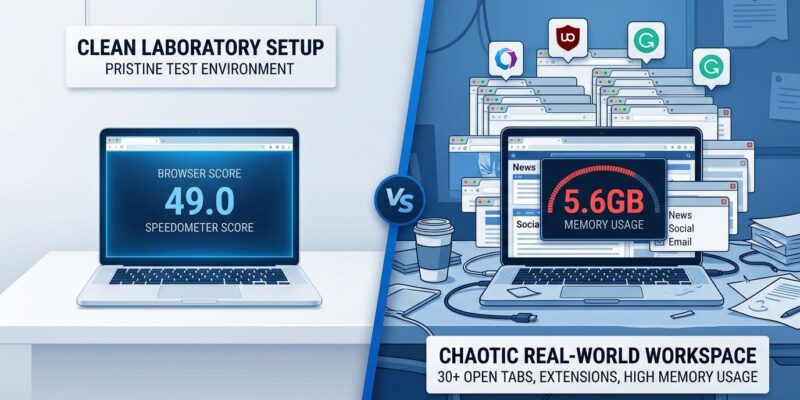

Browser speed tests put Chrome and Safari among 2026's fastest browsers, scoring 49.0 and 45.5 on Speedometer 3.1. Here's the problem: 43% of sites fail INP responsiveness thresholds, and the browsers dominating synthetic benchmarks often feel sluggish during actual multi-tab, extension-heavy sessions. Research from 2026 shows why lab winners become real-world losers, and it points to three factors that Speedometer and JetStream do not measure.

Why Lab Winners Feel Slow in Real Use

Synthetic benchmarks test the wrong things. Speedometer and JetStream simulate narrow, idealized workloads that don’t reflect how developers actually use browsers—30+ tabs, a dozen extensions, hours of sustained work. The gap between lab scores and daily performance comes down to three factors.

First, extension overhead. A typical browser extension consumes roughly 30-150MB of memory depending on complexity, so ten or more extensions can occupy over 1GB of RAM, none of which gets measured in clean lab environments. Lightweight extensions like uBlock Origin use 20-50MB, while heavier tools like Grammarly consume 100-200MB analyzing page content in real time. A reasonable rule of thumb is to keep total active extensions under 12.
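As a rough illustration of how those per-extension figures add up, the sketch below totals a low and high estimate for a mix of light and heavy extensions. The function name and the 6/4 split are hypothetical; the ranges are the ones quoted above, and real usage varies by page and workload.

```python
# Per-extension memory ranges in MB, taken from the figures quoted above.
LIGHT_MB = (20, 50)     # e.g. a content blocker
HEAVY_MB = (100, 200)   # e.g. a real-time writing assistant


def extension_memory_range(light: int, heavy: int) -> tuple[int, int]:
    """Return (low, high) total memory in MB for a mix of extensions."""
    if light < 0 or heavy < 0:
        raise ValueError("extension counts must be non-negative")
    low = light * LIGHT_MB[0] + heavy * HEAVY_MB[0]
    high = light * LIGHT_MB[1] + heavy * HEAVY_MB[1]
    return low, high


# Hypothetical setup: 6 light and 4 heavy extensions.
low, high = extension_memory_range(light=6, heavy=4)
print(f"10 extensions: {low}-{high} MB")
```

With this mix the upper estimate crosses 1GB, which is the kind of overhead a clean benchmark profile never sees.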

Second, RAM pressure from tab isolation. Chrome’s multi-process architecture—one renderer process per tab plus site isolation—trades memory for security. At 50 tabs, Chrome uses 5.6GB more RAM than Firefox. That’s not a bug, it’s a design choice. But when you’re actually working with 50 tabs open, those 5.6GB matter more than a Speedometer score.

Third, sustained workloads trigger thermal throttling, battery drain, and performance degradation that brief benchmark runs never capture. Real work happens over hours, not the seconds it takes to run JetStream. The complaint behind “Chrome slow many tabs” describes a real phenomenon, which is exactly why Chrome Memory Saver and Edge efficiency modes exist.

Memory Usage: The Missing Benchmark

Firefox uses the least RAM at high tab counts, where its shared-process model has the biggest advantage. At 50 tabs, Firefox saves approximately 5.6GB compared to Chrome. At idle or with 5 tabs on Windows, Firefox consumes around 450MB while Chrome sits at 600MB, Edge at 500MB, Opera at 550MB, and Vivaldi at 700MB.
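To put those numbers on a per-tab footing, here is a minimal arithmetic sketch. It assumes 1GB = 1000MB and treats the low-load figures as a 5-tab measurement; both are simplifying assumptions, and the helper name is hypothetical.

```python
def per_tab_gap_mb(total_gap_mb: float, tabs: int) -> float:
    """Average extra memory per tab, given a total gap between two browsers."""
    if tabs <= 0:
        raise ValueError("tabs must be positive")
    return total_gap_mb / tabs


# Chrome vs. Firefox, using the figures quoted above.
HIGH_LOAD_GAP_MB = 5600       # 5.6GB at 50 tabs (assuming 1GB = 1000MB)
LOW_LOAD_GAP_MB = 600 - 450   # Chrome 600MB vs. Firefox 450MB at 5 tabs

print(per_tab_gap_mb(HIGH_LOAD_GAP_MB, 50))
print(per_tab_gap_mb(LOW_LOAD_GAP_MB, 5))
```

On these figures the average gap per tab is larger at 50 tabs than at 5, which is consistent with the claim that Firefox's advantage grows with tab count.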

Safari’s story is different but equally interesting. On macOS, Safari uses 30-40% less RAM than any Chromium browser. That optimization matters for MacBook users who value battery life and thermal management over raw JavaScript execution speed.

Chrome’s architecture isn’t inefficient—it’s intentional. The multi-process model delivers better security and stability by isolating sites and tabs. Firefox’s shared-process approach prioritizes memory efficiency with different security trade-offs. Neither is wrong, but they optimize for different use cases. If you’re the type of developer who keeps 50+ tabs open across multiple projects, Firefox’s memory advantage outweighs Chrome’s Speedometer lead.

INP Metrics Contradict Benchmark Rankings

Core Web Vitals’ INP (Interaction to Next Paint) metric reveals what benchmarks hide. An INP of 200ms or less is good, 200-500ms needs improvement, and anything above 500ms is poor. In 2026, 43% of sites fail the 200ms threshold, making INP the most commonly failed Core Web Vital despite browsers bragging about their Speedometer scores.
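The thresholds can be expressed as a small classifier. This is a minimal sketch: the function name is hypothetical, and it assumes the boundary values (exactly 200ms and exactly 500ms) fall into the better bucket.

```python
GOOD_MAX_MS = 200
NEEDS_IMPROVEMENT_MAX_MS = 500


def classify_inp(inp_ms: float) -> str:
    """Map an INP value in milliseconds to its Core Web Vitals rating."""
    if inp_ms < 0:
        raise ValueError("INP cannot be negative")
    if inp_ms <= GOOD_MAX_MS:
        return "good"
    if inp_ms <= NEEDS_IMPROVEMENT_MAX_MS:
        return "needs improvement"
    return "poor"


for value in (120, 350, 800):
    print(value, classify_inp(value))
```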

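INP is a field metric: it is computed from real user interactions, so a standard Lighthouse page-load audit cannot report it. It needs real-user data, such as the Chrome User Experience Report or your own real-user monitoring. INP replaced First Input Delay in March 2024 and proved far more demanding. Where FID timed only the input delay of the first interaction, INP observes every interaction across the full page lifecycle, takes the slowest one for each page view (discounting one outlier per 50 interactions), and is assessed at the 75th percentile of page views. That makes it far harder to game and far more representative of actual user experience.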
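The aggregation can be sketched in two steps: pick the representative interaction for each page view, then take the 75th percentile across page views. The function names and sample latencies below are hypothetical, and the nearest-rank percentile is an assumption; the exact method used by any given field dataset may differ.

```python
import math


def page_inp(latencies_ms: list[float]) -> float:
    """INP for one page view: the slowest interaction, skipping one
    outlier for every 50 interactions recorded."""
    if not latencies_ms:
        raise ValueError("no interactions recorded")
    ranked = sorted(latencies_ms, reverse=True)
    skip = len(ranked) // 50
    return ranked[skip]


def percentile_75(values: list[float]) -> float:
    """Nearest-rank 75th percentile (an assumed, simple method)."""
    if not values:
        raise ValueError("no values")
    ranked = sorted(values)
    rank = math.ceil(0.75 * len(ranked))
    return ranked[rank - 1]


# Hypothetical interaction latencies (ms) for four page views.
page_views = [
    [40, 90, 100],
    [60, 150],
    [30, 180, 70],
    [220, 50],
]
site_inp = percentile_75([page_inp(view) for view in page_views])
print(site_inp)
```

Because every interaction counts, a single slow handler late in a session can set the page-level value, which a first-interaction metric would never see.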

Here’s the disconnect: browsers with top Speedometer scores often rank lower on INP. This matters most on mobile, which accounts for approximately 58% of all website visits in 2026, and Core Web Vitals are one of the signals Google uses in ranking. Optimize for INP, not synthetic JavaScript benchmarks.

The Benchmark Paradox

Speedometer 3.1 results from March 2026 on Apple Silicon show Chrome at 49.0, Edge at 48.5, Brave at 46.2, Safari at 45.5, and Firefox at 38.2. On these numbers Firefox reaches about 78% of Chrome's score, within the 60-80% of top speeds it typically achieves in synthetic tests. Yet in real-world task simulations, Firefox slightly outperformed Chromium browsers despite slower JavaScript execution.
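For a quick sense of how wide the gaps are, this sketch expresses each score quoted above as a percentage of the top score. The function name is hypothetical; the scores are the ones in this article.

```python
# Speedometer 3.1 scores quoted above (March 2026, Apple Silicon).
SCORES = {
    "Chrome": 49.0,
    "Edge": 48.5,
    "Brave": 46.2,
    "Safari": 45.5,
    "Firefox": 38.2,
}


def relative_to_top(scores: dict[str, float]) -> dict[str, float]:
    """Express each score as a percentage of the highest score."""
    top = max(scores.values())
    return {name: round(100 * score / top, 1) for name, score in scores.items()}


for name, pct in relative_to_top(SCORES).items():
    print(f"{name}: {pct}% of top score")
```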

The explanation is simple: benchmarks test narrow workloads over seconds, while real pages use small amounts of code executed within milliseconds. As one expert put it, “Most benchmarks feel like trivia now—interesting on their own, but not really how people use machines in the real world.” Raw JavaScript benchmarking doesn’t account for the reality of the web.

JetStream 3.0, released March 31, 2026, was modernized through more than 200 pull requests touching 500+ files across 70+ workloads. WebAssembly now makes up 15-20% of the suite, up from just 7% in version 2. The collective work drove measurable improvements, with Safari gaining roughly 10% from version 26.0 to 26.4. But even modernized benchmarks ignore thermal throttling, battery drain, and sustained performance degradation.

How to Choose Your Browser Based on Real Use

Test with your actual workflow. Monitor memory usage with your typical extension load, not vendor benchmark marketing. Track INP metrics, not just JavaScript speed. Consider your use case—5 tabs versus 50 tabs means different browsers win.

Hardware matters significantly. A high-end workstation with a modern multi-core processor and 32GB of RAM handles dozens of extensions with no perceptible lag. A budget-oriented device feels the impact of even three or four active add-ons. The perception of speed is heavily influenced by your hardware, not just your browser choice.

Additional real-world factors include thermal management and battery consumption during sustained use. Test browsers with 30+ tabs, your typical extensions, and hours of sustained use. Only testing with your workflow predicts your experience—synthetic benchmarks can’t.
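One way to run that kind of test is to sample browser memory while you work. The sketch below is a rough, assumption-heavy starting point: it shells out to POSIX `ps` (so it targets macOS and Linux, not Windows), matches processes by substring against a hypothetical name list, and sums resident set size, which overcounts memory shared between processes.

```python
import subprocess
from collections import defaultdict

# Hypothetical name fragments to look for in each command line.
BROWSERS = ("chrome", "firefox", "safari", "msedge", "brave", "vivaldi")


def sum_rss_by_browser(ps_output: str, browsers=BROWSERS) -> dict[str, float]:
    """Sum resident memory (MB) per browser from `ps -A -o rss= -o args=` output."""
    totals: dict[str, float] = defaultdict(float)
    for line in ps_output.splitlines():
        parts = line.strip().split(None, 1)
        if len(parts) != 2 or not parts[0].isdigit():
            continue  # skip blank or malformed lines
        rss_kib, command = int(parts[0]), parts[1].lower()
        for name in browsers:
            if name in command:  # approximate match, may catch unrelated apps
                totals[name] += rss_kib / 1024
                break
    return dict(totals)


def sample_once() -> dict[str, float]:
    """Take one memory sample of all running processes via ps."""
    result = subprocess.run(
        ["ps", "-A", "-o", "rss=", "-o", "args="],
        capture_output=True, text=True, check=True,
    )
    return sum_rss_by_browser(result.stdout)
```

Calling `sample_once()` every few minutes during a normal session, with your usual tabs and extensions loaded, gives a trend line for each browser that a benchmark score cannot.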

Key Takeaways

- Synthetic benchmarks optimize for tests, not daily developer workflows with 30+ tabs and multiple extensions

- Chrome uses 5.6GB more RAM than Firefox at 50 tabs—memory efficiency predicts real performance better than Speedometer scores

- 43% of sites fail the 200ms INP threshold in 2026; INP is a field metric built from real user interactions, which synthetic benchmarks do not capture

- Firefox scores 38.2 on Speedometer but outperforms Chromium browsers in real-world task simulations

- Test browsers with your actual workflow—extension count, tab count, sustained use—instead of trusting vendor benchmark claims