Amazon announced on April 20, 2026 that it will invest up to $25 billion in Anthropic, with Anthropic in turn committing to spend over $100 billion on AWS infrastructure over the next decade. This isn’t a traditional venture deal. It’s infrastructure lock-in disguised as investment. Amazon puts in $5 billion upfront (with up to $20 billion more tied to milestones), and Anthropic commits to spending it all back, and then some, on AWS compute capacity. The deal secures 5 gigawatts of Trainium capacity for Anthropic and brings Amazon’s total investment in the company to as much as $33 billion.

Infrastructure Lock-In, Not Just Capital

The math is simple: Amazon invests up to $25 billion, and Anthropic commits to spending $100+ billion on AWS over 10 years. The net effect? Amazon’s committed AWS revenue exceeds its investment by $75+ billion, and Claude’s infrastructure is tied to AWS for the decade. This is vendor lock-in disguised as partnership.

The deal structure reveals the real game. Anthropic receives $5 billion immediately, with up to $20 billion more tied to “commercial milestones”—spend targets, essentially. In return, Anthropic commits to spending over $100 billion on AWS infrastructure and secures 5 gigawatts of Trainium chip capacity (Trainium2, Trainium3, and Trainium4). For developers, this fundamentally changes the calculus. If you’re building on Claude, you’re building on AWS. Period. Multi-cloud strategies sound great in theory, but a $100 billion infrastructure commitment tells you where Claude’s optimization priorities lie.
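The arithmetic behind these figures can be sketched in a few lines of Python. This is a rough illustration only: the dollar amounts come from the article, while the function name `deal_summary`, the even 10-year split, and the simple netting are assumptions made for the example. Netting investment against spend also ignores that Amazon keeps an equity stake and that revenue is not profit.

```python
# All amounts in billions of USD, as quoted in the article.
UPFRONT = 5                # paid immediately
MILESTONE_MAX = 20         # upper bound on milestone-linked tranches
AWS_COMMITMENT_MIN = 100   # "over $100 billion", so this is a floor
TERM_YEARS = 10            # "over the next decade"


def deal_summary(upfront, milestone_max, commitment_min, years):
    """Hypothetical helper: summarize the deal using simple netting."""
    max_investment = upfront + milestone_max
    return {
        # Most Amazon can invest under this deal
        "max_investment": max_investment,
        # Floor on committed AWS spend in excess of the investment
        "net_commitment_floor": commitment_min - max_investment,
        # Assumes spend is spread evenly, which the article does not state
        "avg_annual_spend_floor": commitment_min / years,
        # Committed AWS dollars per dollar invested
        "spend_per_dollar_invested": commitment_min / max_investment,
    }


summary = deal_summary(UPFRONT, MILESTONE_MAX, AWS_COMMITMENT_MIN, TERM_YEARS)
for key, value in summary.items():
    print(f"{key}: {value}")
```

Under these assumptions, every dollar Amazon invests corresponds to at least four dollars of committed AWS spend, or roughly $10 billion a year if the commitment were spread evenly.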

The Compute Scarcity Behind the Deal

This deal wouldn’t exist without the AI chip crisis. Nvidia GPU lead times hit 36-52 weeks in 2026, DDR5 memory prices jumped about 167% (from $90 to $240), and custom AI accelerators like Trainium are sold out years in advance. The scarcity is so severe that Nvidia prioritized AI chips over gaming GPUs for the first time in 30 years, triggering backlash from gamers who feel abandoned.

Anthropic’s $100 billion commitment isn’t about negotiating discounts. It’s about securing guaranteed capacity. With Claude at $30 billion in annualized revenue, and with 100,000+ Amazon Bedrock customers running into throttling during peak hours, Anthropic needed infrastructure certainty. The alternative? Waiting 52 weeks for GPUs while competitors scale. Amazon bet correctly that infrastructure access trumps price in a supply-constrained market.

The numbers validate the strategy. Anthropic already runs Claude training and inference on over 1 million Trainium2 chips, including Project Rainier, one of the world’s largest AI compute clusters, which alone accounts for 500,000+ chips. By the end of 2026, Anthropic will bring nearly 1 gigawatt of combined Trainium2 and Trainium3 capacity online. The 5-gigawatt total commitment through 2030 solves Claude’s infrastructure problem for the next decade.

Amazon’s AI Hedge: $83 Billion Across Two Horses

Amazon announced a $50 billion OpenAI investment just two months before this Anthropic deal (February 2026). The strategy is transparent: bet on multiple AI leaders, lock them all into AWS infrastructure. Whoever wins the AI race, AWS wins.

Compare the cloud vendor consolidation. Microsoft invested $13 billion in OpenAI with a $250 billion Azure commitment through 2032. Google built Gemini internally, ensuring vertical integration on GCP. Amazon split $83 billion between OpenAI and Anthropic, hedging its bets while securing infrastructure revenue regardless of which model dominates.
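To make the comparison concrete, the sketch below computes how many dollars of cloud commitment each deal ties to a dollar of investment, using only the figures quoted in the article. The `commitment_multiple` helper and the dictionary layout are illustrative assumptions, and the deals differ in term length and structure, so the multiples are a rough yardstick rather than a like-for-like comparison.

```python
# (investment, cloud commitment) in billions of USD, as quoted in the article.
deals = {
    "Amazon-Anthropic": (25, 100),   # up to $25B invested, $100B+ AWS commitment
    "Microsoft-OpenAI": (13, 250),   # $13B invested, $250B Azure commitment
}


def commitment_multiple(invested, committed):
    """Hypothetical helper: committed cloud spend per dollar invested."""
    return committed / invested


multiples = {
    name: round(commitment_multiple(invested, committed), 1)
    for name, (invested, committed) in deals.items()
}

# Amazon's combined AI bets: $50B (OpenAI) + $33B (Anthropic, cumulative)
amazon_total = 50 + 33

for name, multiple in multiples.items():
    print(f"{name}: {multiple}x commitment per dollar invested")
print(f"Amazon total across both labs: ${amazon_total}B")
```

On these figures, Microsoft's deal ties far more committed cloud spend to each invested dollar, while Amazon spreads a larger total investment across two labs.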

For developers, this consolidation kills multi-cloud AI flexibility. The old model—pick the best AI model, deploy anywhere—is dead. The new reality: pick your cloud vendor first (AWS, Azure, GCP), then use their flagship AI. Claude equals AWS, OpenAI equals Azure, Gemini equals GCP. Multi-cloud strategies now mean compromising on performance, missing vendor-specific optimizations, and paying more for cross-cloud data transfer.

What This Means for Developers

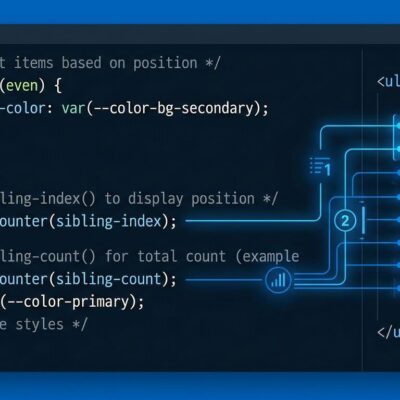

Anthropic is integrating Claude directly into the AWS console through Claude Platform (currently in private beta). Over 100,000 customers already run Claude on Amazon Bedrock with unified AWS billing, IAM, and monitoring. The $100 billion commitment guarantees Claude will be optimized for Trainium chips, not generic Nvidia GPUs.

The implications are concrete. If you’re building on Claude for production, assume AWS infrastructure. The API still works multi-cloud, but the optimized experience—Trainium acceleration, Bedrock integration, AWS-native monitoring—is AWS-only. Architectural decisions made today create decade-long lock-in. You can’t easily migrate a Claude-dependent application to GCP or Azure when the underlying infrastructure is Trainium-specific and Bedrock-integrated.

The smart play? Align AI platform choice with your existing cloud strategy upfront. If you’re AWS-native, Claude’s Bedrock integration and guaranteed capacity make sense. If you’re Azure-native, stick with OpenAI’s deep integration. If you’re GCP-native, Gemini on Vertex AI is the natural choice. Trying to maintain multi-cloud AI flexibility will cost more, perform worse, and limit access to vendor-specific optimizations that define competitive advantage.

Key Takeaways

- AI infrastructure is the new oil. Compute scarcity is so severe (36-52 week GPU lead times) that companies commit $100+ billion just to secure capacity, not negotiate discounts.

- Cloud vendors are using capital for lock-in, not just equity. Amazon invests up to $25 billion and gets $100+ billion back in committed AWS spend. This is a subscription deal disguised as venture investment.

- Multi-cloud AI is dead. Pick your cloud vendor first (AWS/Azure/GCP) and your AI model second (Claude/OpenAI/Gemini). Vendor-specific optimizations and decade-long commitments kill flexibility.

- Developer choice follows infrastructure. AWS-native shops should use Claude (Bedrock integration), Azure shops use OpenAI, GCP shops use Gemini. Architectural alignment trumps model quality.