Agentic AI has crossed into mainstream adoption: 64% of developers now use these tools, according to a SonarSource survey of 1,100+ developers globally. Twenty-five percent use them regularly, while 39% are actively experimenting. AI now accounts for 42% of all committed code. But beneath this adoption surge lies a critical paradox: 96% of developers don’t fully trust AI output, yet only 48% always verify it before committing. This 48-percentage-point verification gap, combined with stark variation across use cases (70% effectiveness for documentation, but only 28% adoption for security patching), suggests that agentic AI is reshaping development workflows faster than developers can adapt their quality controls.

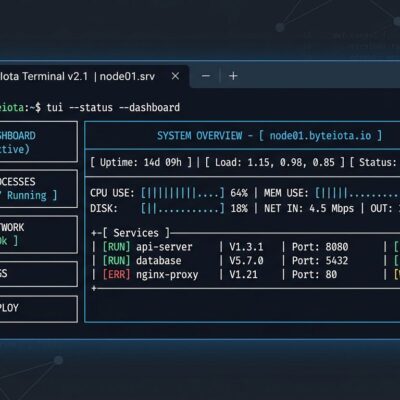

The Agentic AI Verification Gap Crisis

Here’s the math that should alarm every engineering leader: 42% of committed code is AI-generated, 96% of developers don’t fully trust it, yet only 48% always verify it before committing. This trust-action gap creates mounting technical debt and security risk as unverified AI code enters production systems. Developers are treating AI like a junior engineer they don’t trust, then skipping the code review they’d normally require.

The consequences compound. Seventy-two percent of developers who’ve tried AI tools now use them daily, creating high dependency without systematic verification practices. Worse, 38% report that reviewing AI-generated code requires MORE effort than reviewing human code. The promised productivity gains are offset by the verification burden: agents generate code 10x faster, but human verification capacity remains static.

The most insidious problem? Sixty-one percent of developers agree AI produces code that “looks correct but isn’t reliable.” These aren’t obvious syntax errors. AI generates plausible logic with hidden bugs (null pointer dereferences, race conditions, hallucinated dependencies) that compiles and passes basic tests but fails in production. Spot-check code review tends to miss these issues. Organizations racing to adopt agentic AI without automated verification tools (Sonar, Snyk, CodeQL) are accumulating invisible debt.

AI Coding Tools Effectiveness: 70% for Docs, 28% for Security

Not all agentic AI use cases deliver equal results. The SonarSource survey reveals a clear effectiveness hierarchy:

- Documentation generation: 70% effectiveness (68% adoption) – Agents excel at structured, formulaic content with clear success criteria

- Automated test generation: 59% effectiveness (61% adoption) – Strong performance analyzing code paths and generating comprehensive coverage

- Automated code review: 52% effectiveness (57% adoption) – Mediocre results requiring human oversight for context-dependent decisions

- Security vulnerability patching: 28% adoption – Critically low, suggesting developers recognize security is too high-stakes for current agent capabilities

The 42-point spread between documentation (70% effectiveness) and security patching (28% adoption) compares two different measures, but both point the same way: agents handle structured tasks well and struggle with nuanced, high-stakes work requiring deep context. Use agentic AI for documentation and test generation, where failure is low-risk and verification is straightforward. Avoid security and architectural work, where mistakes scale and compound.

Organizations need task-specific policies, not blanket approval or rejection. Treat agents like specialists: deploy them for what they’re demonstrably good at, and keep humans on critical decisions. The data provides a clear decision framework: auto-approve documentation (70% effective), require human review for code review (52% effective), and handle security manually (only 28% adoption).
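
The decision framework above can be written down as a small lookup table. This is a minimal sketch, not a recommended implementation: the task names, the `Policy` tiers, and the `policy_for` helper are all hypothetical, and placing test generation in the human-review tier is an assumption, since the survey figures don’t assign it one.

```python
from enum import Enum


class Policy(Enum):
    AUTO_APPROVE = "auto-approve"
    HUMAN_REVIEW = "human review required"
    MANUAL_ONLY = "handled manually by humans"


# Hypothetical task names mapped to the tiers described in the text.
TASK_POLICY = {
    "documentation": Policy.AUTO_APPROVE,      # 70% effectiveness
    "test_generation": Policy.HUMAN_REVIEW,    # 59% effectiveness (tier is an assumption)
    "code_review": Policy.HUMAN_REVIEW,        # 52% effectiveness
    "security_patching": Policy.MANUAL_ONLY,   # 28% adoption
}


def policy_for(task: str) -> Policy:
    """Return the policy for a task; unknown tasks get the strictest tier."""
    return TASK_POLICY.get(task, Policy.MANUAL_ONLY)


if __name__ == "__main__":
    for task in ("documentation", "code_review", "schema_migration"):
        print(f"{task}: {policy_for(task).value}")
```

Defaulting unknown tasks to the strictest tier is a deliberate choice in this sketch: a task nobody has classified yet is treated as high-stakes until someone decides otherwise.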

SMB Advantage and Enterprise Struggle

Organization size significantly impacts agentic AI effectiveness. Small-to-medium business developers report 67% effectiveness for “vibe coding” tasks (rapid prototyping, quick fixes, exploratory coding) compared to only 52% for enterprise developers. That is a 15-percentage-point gap, or roughly a 29% relative difference when measured against the enterprise figure. Smaller teams see agents as force multipliers while larger organizations wrestle with complexity and constraints.
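
Percentage points and relative difference are easy to conflate, so here is the arithmetic behind the two figures as a short sketch. The `gap_summary` helper is hypothetical; the relative difference is taken against the lower (enterprise) number.

```python
def gap_summary(a: float, b: float) -> tuple[float, float]:
    """Return (percentage-point gap, relative difference as a % of b)."""
    points = a - b
    relative = points / b * 100
    return points, relative


# SMB (67%) versus enterprise (52%) effectiveness from the survey.
points, relative = gap_summary(67, 52)
print(f"{points:.0f} points, {relative:.1f}% relative")
# prints "15 points, 28.8% relative"
```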

The explanation is architectural. SMBs operate with simpler codebases, fewer compliance requirements, faster iteration cycles, and less architectural rigidity. Agents thrive in this environment, compensating for limited headcount and enabling lean teams to maintain competitive output velocity. Enterprise environments present the opposite profile: complex legacy systems, strict compliance frameworks, extensive architectural patterns, and risk-averse cultures that slow agent adoption.

The scaling gap reinforces this divide. Deloitte reports that 64% of organizations are experimenting with agentic AI, but fewer than 25% have successfully scaled to production. Most large organizations are trying to automate existing human processes instead of redesigning workflows for autonomous agents. This fundamental misunderstanding, layering agents onto old workflows rather than reimagining operations, helps explain why enterprise lags SMB by 15 percentage points.

The strategic implication: smaller teams should adopt agentic AI aggressively (67% effectiveness provides competitive advantage), while enterprise organizations need workflow redesign before deployment (52% effectiveness indicates structural friction).

The Verification Bottleneck

Agentic AI creates a new bottleneck at verification. Agents can generate code 10x faster than humans, but verification capacity doesn’t scale proportionally. Thirty-eight percent of developers report AI code review takes MORE time than human review—effort increases, not decreases. This verification paradox threatens to negate the productivity gains agents promise.

Why verification is harder: AI-generated code “looks correct but isn’t reliable” (61% of developers agree). Subtle logic errors, security vulnerabilities, and architectural inconsistencies hide beneath surface correctness. Agents also make localized decisions that conflict with system-wide patterns—introducing REST endpoints when GraphQL is standard, or applying different authentication mechanisms than established conventions. These architectural drifts are invisible during isolated code review but create systemic problems at scale.

Security compounds the challenge. Only 28% of developers use agents for security tasks, leaving a massive gap as 42% of code becomes AI-generated. The OWASP Top 10 for Agentic Applications 2026 identifies emerging threats: prompt injection, memory poisoning, supply chain vulnerabilities. Barracuda Security found 43 agent framework components with embedded vulnerabilities. Organizations adopting agents without security review are introducing exploitable code into production systems.

The solution requires automated verification. Tools like Sonar, Snyk, and CodeQL can help scale verification capacity to match AI output volume. Manual review alone won’t suffice: if 38% already find AI review harder than human review, increasing agent usage without verification automation will create a backlog that keeps growing.
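
To make the bottleneck concrete, here is a toy model of a review queue. Only the 10x multiplier comes from the discussion above; the daily counts and the `backlog_over_time` function are hypothetical and assume constant rates, which real teams won’t have.

```python
def backlog_over_time(generated_per_day: int, verified_per_day: int, days: int) -> list[int]:
    """Track unverified changes when generation and verification rates are fixed."""
    backlog = 0
    history = []
    for _ in range(days):
        # New changes arrive, reviewers clear what they can; backlog never goes negative.
        backlog = max(0, backlog + generated_per_day - verified_per_day)
        history.append(backlog)
    return history


# Human-paced generation that reviewers can keep up with.
baseline = backlog_over_time(generated_per_day=10, verified_per_day=10, days=5)

# Same review capacity, 10x the generation rate.
with_agents = backlog_over_time(generated_per_day=100, verified_per_day=10, days=5)

print(baseline)     # [0, 0, 0, 0, 0]
print(with_agents)  # [90, 180, 270, 360, 450]
```

In this model the queue grows on every day that generation outpaces verification, so the only ways out are to raise verification capacity (for example with automated checks) or to throttle generation.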

Key Takeaways

- Close the verification gap immediately: 96% distrust plus 48% verification equals mounting risk. Verify ALL AI-generated code before production deployment. With 42% of committed code AI-generated and only 48% of developers always verifying it, unreviewed changes are a ticking time bomb.

- Implement task-specific policies: Use agents for documentation (70% effective) and test generation (59% effective). Require human oversight for code review (52%). Manually handle security (28% adoption indicates inadequate agent capabilities for critical work).

- SMBs should move fast, enterprises should redesign: Smaller teams (67% effectiveness) gain competitive advantage from aggressive adoption. Large organizations (52% effectiveness) need workflow reimagination, not automation of existing processes.

- Invest in automated verification: Thirty-eight percent report AI review takes MORE time than human review. Deploy Sonar, Snyk, CodeQL to scale verification capacity. Manual review won’t keep pace with 10x code generation velocity.

- Don’t ignore the security blind spot: Only 28% use agents for security while 42% of code is AI-generated. This mismatch creates vulnerabilities. Systematic security review must keep pace with AI adoption rates.

Agentic AI is production-ready for specific tasks, not blanket deployment. Organizations treating these tools as universal solutions will accumulate technical debt and security vulnerabilities. Those implementing evidence-based, task-specific policies will gain productivity without sacrificing quality. The survey data provides the roadmap—it’s time to stop treating all agentic AI use cases as equivalent and start matching agent capabilities to task requirements.