AI Tools Promise Productivity. They Deliver Burnout.

AI coding tools promised to make developers more productive with less effort. The reality, according to research published in February and March 2026 by Harvard Business Review, BCG, and UC Berkeley, inverts that promise entirely. Developers using AI tools work more hours, report higher burnout rates, and experience more cognitive fatigue than before AI adoption. This isn’t anecdotal frustration—it’s quantified across multiple independent studies with disturbing consistency.

The numbers tell a stark story. Sixty-seven percent of workers who adopted AI tools in 2025 reported working more hours by year-end, not fewer, according to UC Berkeley’s Labor Center. Twenty-two percent of developers are at critical burnout levels, per LeadDev’s survey of 617 engineering leaders. Fourteen percent of AI-using workers experience what BCG researchers call “AI brain fry”: mental fatigue from monitoring and correcting AI output beyond cognitive capacity. Seventy-six percent of professional developers now use these tools daily, which means roughly three out of four developers reading this are exposed to the same pressures.

How AI Tools Actually Intensify Work

Harvard Business Review’s eight-month ethnographic study at a 200-person U.S. tech company identified exactly how AI tools increase work intensity. Researchers observed employees twice weekly, tracked communication channels, and conducted over 40 in-depth interviews. They found three specific mechanisms that transform productivity gains into workload expansion.

First, task expansion. Workers take on responsibilities previously held by others because AI’s cognitive scaffolding makes unfamiliar tasks feel accessible. Product managers write code. Researchers handle engineering tasks. The same headcount covers wider responsibilities, and the organization adjusts by expecting that new baseline to continue.

Second, blurred work-life boundaries. AI prompting feels conversational and less formal than “work,” so it extends into breaks, lunch periods, even the seconds waiting for files to load. UC Berkeley measured the physiological impact: AI-using workers showed 23% higher sustained cortisol levels during workdays, took 31% fewer natural micro-breaks per hour, and experienced 40% more decision fatigue by day’s end. The natural recovery periods that prevented burnout in pre-AI workflows simply disappear.

Third, increased multitasking. Developers manage multiple concurrent tasks, constantly switching attention between AI outputs. One engineer from the HBR study captured the paradox perfectly: “You had thought that maybe…you can work less. But then really, you don’t work less. You just work the same amount or even more.”

The Ratchet Effect: Productivity Gains Become New Baselines

Here’s the organizational force driving the AI productivity paradox. AI productivity gains don’t reduce workload—they immediately become higher baseline expectations. Organizations recalibrate upward within weeks, not years, compressing a cycle that took decades with previous technology waves.

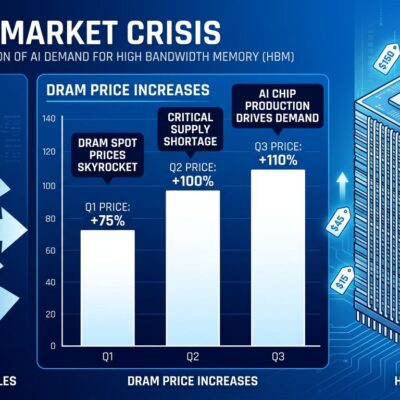

Teams adopting AI writing tools saw output expectations increase 35% within 90 days. Engineering teams using AI coding assistants had sprint velocity baselines recalibrated upward 40% within two quarters. Marketing departments using AI content generation were expected to produce 3.2 times more pieces per month compared to pre-AI baselines. The productivity gains are real, but they’re captured organizationally and redistributed as higher demands, not worker relief.

The consequences are measurable. A consulting firm saw analyst output increase 50% within six months after AI adoption. However, turnover among those analysts doubled. Exit interviews cited “unsustainable pace” as the primary reason for leaving. The firm got its productivity gains, but lost the people who generated them.

Only 8% of AI-generated time savings actually benefit workers, according to research tracking time reallocation. The remaining 92% gets absorbed by higher output expectations (42%), additional project assignments (27%), AI tool management overhead (18%), and meetings about AI transformation (5%). The time savings are real. They just don’t belong to the people who generated them.
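To make that split concrete, here is a minimal sketch that applies the reported shares to a block of saved time. The percentages come from the paragraph above. The 10 hours per week figure and the names `ALLOCATION` and `split_saved_hours` are hypothetical, chosen only for illustration.

```python
# Shares of AI-generated time savings, as reported in the text above (percent).
ALLOCATION = {
    "worker benefit": 8,
    "higher output expectations": 42,
    "additional project assignments": 27,
    "AI tool management overhead": 18,
    "meetings about AI transformation": 5,
}


def split_saved_hours(saved_hours: float) -> dict[str, float]:
    """Split a number of saved hours according to the reported shares."""
    return {label: saved_hours * pct / 100 for label, pct in ALLOCATION.items()}


# Sanity check: the five shares account for all of the saved time.
assert sum(ALLOCATION.values()) == 100

# Hypothetical input: a developer whose AI tools save 10 hours per week.
breakdown = split_saved_hours(10.0)
for label, hours in breakdown.items():
    print(f"{label}: {hours:.1f} h")
```

Under that assumed 10-hour saving, less than one hour per week would return to the worker, while more than four hours would be absorbed by higher output expectations alone.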

From Creator to Reviewer: The Role Reversal

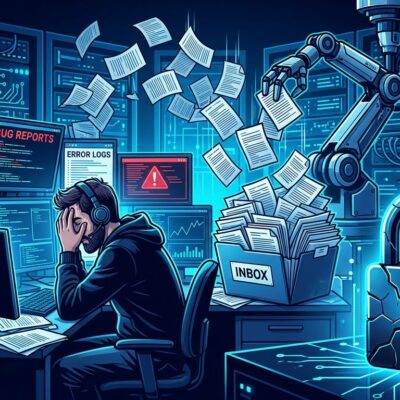

Developers using AI tools spend 11.4 hours per week reviewing AI-generated code versus 9.8 hours writing new code, a reversal of the traditional developer workflow as of 2026. Teams with high AI adoption complete 21% more tasks and merge 98% more pull requests, but pull request review time increases 91%. The bottleneck has shifted from code generation to code evaluation.

This role shift is cognitively expensive. Code review requires sustained critical evaluation without the creative satisfaction of building. Developers scan for subtle logic errors, security vulnerabilities, and edge case failures in code they didn’t write and don’t fully understand. The mental load compounds across dozens of reviews per week.

GitHub Copilot delivers legitimate gains: 55% faster task completion and a 75% reduction in pull request time, from 9.6 days to 2.4 days. Those gains, however, introduce new review-complexity costs that don’t show up in velocity metrics. Duolingo reported 25% faster developer speed and, initially, 67% faster code reviews, but the sustained review burden increased over time as AI-generated code accumulated technical debt that required human oversight.

AI Brain Fry: The Cognitive Toll Quantified

BCG’s study of 1,488 workers across industries quantified the cognitive cost. Fourteen percent of AI-using workers experience mental fatigue from monitoring AI tools beyond their cognitive capacity. Symptoms include a “buzzing” feeling, mental fog, difficulty focusing, slower decision-making, and headaches. Those experiencing brain fry show 33% more decision fatigue, 11% more minor errors, and 39% more major errors than workers without brain fry.

The study found productivity peaks at three AI tools. One or two tools produce real gains. Three is the performance ceiling. Four or more tools reverse the pattern—cognitive strain rises even as output declines. Most developers run three-tool stacks combining chatbots, IDE assistants, and terminal agents, putting them right at the cognitive limit.

The critical finding: it’s not delegation to AI that causes brain fry. It’s monitoring and correcting AI output. The tools marketed as “assistants that reduce cognitive load” actually increase cognitive load through constant oversight requirements. The promise inverts at the point of actual use.

Developer Burnout Isn’t Improving—It’s Accelerating

LeadDev’s survey of 617 engineering leaders found 22% of developers at critical burnout levels, with another 24% moderately burned out. Only 21% qualify as “healthy.” Sixty-five percent reported expanded responsibilities, and 40% manage more direct reports than before AI adoption. The correlation is hard to miss: 73% of engineering teams now use AI tools daily, up from 41% in 2025 and 18% in 2024. As AI adoption accelerates, burnout appears to be rising with it.

The data challenges the productivity narrative. AI tools work exactly as advertised—they accelerate task completion and increase output. But that acceleration creates organizational expectations that consume the gains and demand more. The faster developers work, the faster they’re expected to work, and the gap between capacity and expectation widens instead of closing.

What Developers Can Actually Do

The problem is organizational, not individual. Developers can’t solve it by working smarter or using better productivity techniques, because the root cause is structural: how organizations respond to productivity gains.

Harvard Business Review’s researchers propose an “AI Practice” framework with three interventions. First, intentional pauses—structured moments to assess whether accelerated work is aligned work, breaking the reflex toward continuous responsiveness. Second, sequencing—deliberately timing work advancement instead of immediately acting on every AI-enabled possibility. Third, human grounding—protected time for interpersonal connection and team dialogue without AI mediation.

Individual strategies help at the margins. Limit AI tools to three at most, to stay at or below the performance ceiling BCG identified. Block separate time for review work and creation work instead of mixing them. Protect natural breaks and resist AI prompting during lunch or downtime. Finally, recognize that productivity gains will be captured organizationally unless you actively defend boundaries.

But the real solution requires organizations to change how they redistribute productivity gains. When AI enables 40% faster output, there are two options: deliver the same output in roughly 29% less time (1 - 1/1.4), or demand 40% more output in the same time. Organizations consistently choose the latter. Until that pattern breaks, the AI productivity paradox will compound. Developers will keep working harder with tools designed to make work easier, and burnout will keep rising alongside adoption rates.
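The two options can be checked with a few lines of arithmetic. This is a sketch under one stated assumption: “40% faster output” is read as output per hour rising by a factor of 1.4. Under that reading, holding output constant frees about 29% of working time rather than a full 40%, while holding hours constant yields the full 40% more output. The function names are hypothetical.

```python
def time_saved_at_same_output(speedup: float) -> float:
    """Fraction of working time freed if output is held constant.

    Assumes `speedup` is the fractional gain in output per hour
    (0.40 means 1.4x the previous rate).
    """
    return 1 - 1 / (1 + speedup)


def extra_output_at_same_time(speedup: float) -> float:
    """Fractional gain in output if working time is held constant."""
    return speedup


speedup = 0.40  # the 40% figure used in the paragraph above

relief = time_saved_at_same_output(speedup)
demand = extra_output_at_same_time(speedup)

print(f"Same output, less time: {relief:.1%} of hours freed")
print(f"Same time, more output: {demand:.0%} more delivered")
```

Either way the gain is real. The difference lies in who receives it: the first option returns hours to the worker, and the second converts them into additional output.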

The tools aren’t broken. The system redistributing their benefits is.