Elon Musk admitted under oath last Thursday that xAI used distillation techniques on OpenAI models to train Grok. When OpenAI’s lawyer asked him directly in California federal court on April 30, 2026, Musk answered “Partly,” making him the first major U.S. AI leader to publicly confirm that American labs train on each other’s outputs. The admission came in the middle of Musk’s own lawsuit accusing OpenAI of abandoning its nonprofit mission, a contradiction so obvious it’s hard to ignore.

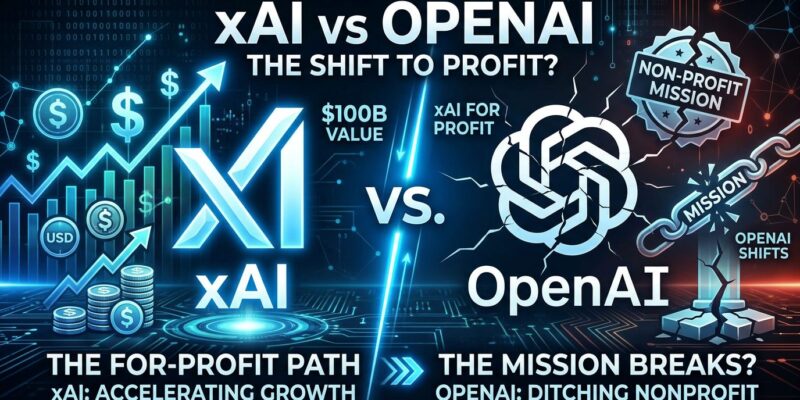

Suing for Nonprofit, Competing for Profit

Here’s the problem: Musk is suing OpenAI, CEO Sam Altman, and President Greg Brockman for converting the organization from a nonprofit to a for-profit structure. He claims they betrayed the charitable mission he funded with $38 million between 2015 and 2018. His legal claims? Breach of charitable trust and unjust enrichment.

Meanwhile, xAI is a for-profit company that has raised billions in funding and built Grok, a direct competitor to ChatGPT, partly by training on outputs from OpenAI’s models. When the judge asked Musk to explain the difference between his for-profit AI venture and OpenAI’s for-profit conversion, he struggled. OpenAI’s lawyers argue that Musk’s real goal isn’t to restore a nonprofit mission; it’s to dismantle a competitor while building his own empire on its foundation.

This isn’t a principled defense of a charitable organization. It’s competitive sabotage dressed up as moral outrage. You can’t claim the high ground while doing exactly what you’re suing to stop.

The Shortcut That Built Grok

Model distillation is how xAI partly bootstrapped Grok without spending years and billions on training from scratch. In its classic form, a smaller “student” model learns from a larger “teacher” model by mimicking the teacher’s output probabilities instead of learning only from raw data. When the teacher sits behind an API, the student is usually trained on the teacher’s generated responses instead. Either way, the student learns by copying the teacher, and the approach is fast, cheap, and surprisingly effective.
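To make “mimicking output probabilities” concrete, here is a minimal Python sketch of the classic soft-target setup popularized by Hinton et al. (2015). It assumes the student can see the teacher’s full probability distribution over a handful of candidate tokens, which API access generally does not provide. The logits, temperature, learning rate, and helper names (`softmax`, `distillation_loss`, `distill_step`) are hypothetical illustrations, not anything from xAI’s or OpenAI’s actual pipelines. The loss is the KL divergence between the temperature-softened teacher and student distributions, and each step nudges the student’s scores toward the teacher’s.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: Sequence[float],
                      teacher_logits: Sequence[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's current guess
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


def distill_step(student_logits: Sequence[float],
                 teacher_logits: Sequence[float],
                 temperature: float = 2.0,
                 lr: float = 0.5) -> List[float]:
    """One gradient-descent step moving the student toward the teacher.

    The gradient of the T^2-scaled KL w.r.t. each student logit is T * (q_i - p_i).
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return [z - lr * temperature * (qi - pi)
            for z, qi, pi in zip(student_logits, q, p)]


if __name__ == "__main__":
    # Hypothetical teacher scores over four candidate next tokens.
    teacher = [4.0, 1.5, 0.5, -1.0]
    student = [0.0, 0.0, 0.0, 0.0]  # untrained student: uniform guess
    print("initial loss:", round(distillation_loss(student, teacher), 4))
    for _ in range(50):
        student = distill_step(student, teacher)
    print("loss after 50 steps:", round(distillation_loss(student, teacher), 4))
```

Running it shows the loss shrinking as the student’s scores converge toward the teacher’s (up to a constant shift, which softmax ignores). Softening both distributions with a temperature above 1 lets the student learn which wrong answers the teacher considers nearly right, not just its top pick.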

Distillation matters because it undermines the moat that AI giants built through massive compute investments. Companies like OpenAI spent billions developing GPT-4; distillation lets competitors build “nearly as capable” models at a fraction of the cost. For xAI, that meant using OpenAI as the teacher to accelerate Grok’s development: a brilliant strategy, if you ignore the ethical implications.

The irony: Musk used outputs from the very “stolen charity” he’s suing to reclaim as free training data for his own commercial venture. That’s not mere validation. That’s competitive intelligence gathering.

Everyone Does It, Nobody Admits It

Musk defended the practice by calling it “standard practice to use other AIs to validate your AI.” He’s not entirely wrong. OpenAI, Google DeepMind, Anthropic, and Meta all use distillation, usually on their own models, to create smaller, specialized versions. The difference is doing it to a competitor’s model without permission.

OpenAI’s terms of service explicitly prohibit using API outputs to develop competing AI models. Anthropic, Mistral, and, ironically, xAI itself include similar “anti-competitive distillation” clauses. Meta’s LLaMA allows distillation but requires disclosure. The legal framework is messy and evolving, with no settled standard for what counts as IP theft versus fair use.

Musk’s testimony puts that practice on the record. When Chinese lab DeepSeek allegedly did the same thing, OpenAI called it theft. The admission sent ripples through the industry, not because it’s shocking, but because it confirms what everyone suspected and nobody wanted to say out loud.

The real question: If API outputs are fair game, how do AI companies protect their competitive advantage? And if outputs aren’t fair game, how do you enforce that when anyone with API access can distill?

The Trial That Could Change AI

The outcome of this trial matters beyond Musk and Altman’s personal drama. If courts side with Musk and rule that distillation is “standard practice,” the AI industry becomes more open: first-mover advantages erode, and smaller players can compete. If courts side with OpenAI and enforce terms-of-service restrictions instead, expect walled gardens and aggressive API lockdowns.

Musk’s attempted settlement two days before trial suggests he recognized the weakness of his position. Cross-examination exposed xAI’s use of OpenAI’s work, undermining his entire legal argument. And the fact that only two of his original 26 claims remain shows how much of the lawsuit has already collapsed.

Either way, the precedent affects everyone. The trial forces courts to confront hard questions. Who owns AI intelligence when the outputs are publicly accessible? Can you claim IP protection on responses to API calls? If every lab distills every other lab’s models, does that make the practice legal, or just widespread theft?

The Hypocrisy Is the Story

Strip away the legal arguments and here’s what’s left: Musk is suing OpenAI for becoming a for-profit company while running a for-profit company built partially on its technology. He claims OpenAI’s leaders betrayed a charitable mission while using that charitable work as free R&D for his own commercial AI lab. And he demands they restore a nonprofit structure while raising billions for xAI.

The distillation admission doesn’t just undermine his lawsuit. It exposes the contradiction at its core. This isn’t about defending a nonprofit mission. It’s about gaining competitive advantage through litigation while benefiting from the very practices he’s suing to punish. The AI industry has plenty of ethical gray zones worth debating. Musk’s hypocrisy isn’t one of them. It’s black and white.