Anthropic released its “2026 Agentic Coding Trends Report” on January 21, documenting a transformation that’s already reshaping enterprise software development: engineers are shifting from writing code to orchestrating AI agents that write code. The report identifies 8 key trends backed by enterprise case studies showing Rakuten reducing feature delivery time by 79%—from 24 days to 5 days—and TELUS accumulating 500,000 hours in time savings across 57,000 team members. But here’s the paradox driving the industry conversation: while 84% of developers now use AI tools that write 41% of all code, 96% don’t fully trust the output, and developers can only “fully delegate” 0-20% of their work despite using AI in 60% of tasks.

Engineers Don’t Write Code Anymore—They Orchestrate Agents

Engineering roles are fundamentally reorganizing around agent supervision, system design, and output review rather than hands-on implementation. Agents handle writing, testing, debugging, and documentation while engineers focus on architecture and decision-making. This isn’t a future prediction—it’s production reality at companies shipping measurable results.

Rakuten’s engineers now run 5 tasks in parallel, delegating 4 to Claude Code while focusing on the remaining one. The company’s “ambient agent” breaks complex tasks into 24 parallel agent sessions, handling work that would take over a month manually. TELUS created 13,000+ custom AI solutions, shipping code 30% faster while accumulating those 500,000 hours saved. Taken at face value, the math is stark: a developer supervising four agents while working a fifth task personally carries a workload that once required a 5-person team, provided the agents’ output holds up under review.

The skills that matter now are breaking down complex tasks for multi-agent coordination, reviewing AI output effectively, and designing system architecture. This isn’t about “AI replacing developers”—it’s about developers evolving to higher-value orchestration work or being left behind by those who do.

Anthropic’s 8 Agentic Coding Trends: Foundation, Capability, Impact

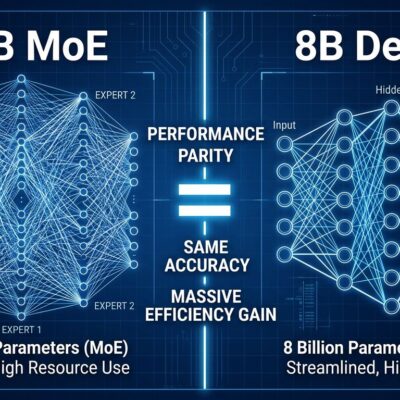

The report organizes the transformation into three categories spanning 8 specific trends. Foundation trends reshape how development happens: the software development lifecycle undergoes a tectonic shift, agents evolve from solo workers to coordinated teams, and execution extends from short tasks to 7-hour autonomous sessions. Capability trends expand what agents accomplish: they learn to request human input at critical decision points and spread beyond software engineers into security, operations, and design roles. Impact trends affect business outcomes: delivery timelines collapse, non-engineers embrace agentic coding, and agents help both defenders and attackers scale their capabilities.

Multi-agent coordination delivers the biggest ROI. Organizations transition from single agents to specialized groups working in parallel under an orchestrator. Rakuten’s 24-agent system coordinates different aspects of repository updates simultaneously—agents handling different subsystems, orchestrating dependencies, resolving conflicts. This parallelization drives that 79% time reduction.
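
The orchestrator pattern described here can be sketched in a few lines. This is a minimal illustration, not Rakuten’s or Anthropic’s implementation: `run_agent_session`, `SubtaskResult`, and the subsystem names are hypothetical stand-ins, and the agent call is simulated with a short sleep. The only number taken from the report is the cap of 24 parallel sessions.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SubtaskResult:
    subsystem: str
    status: str


async def run_agent_session(subsystem: str) -> SubtaskResult:
    # Hypothetical stand-in for one agent session. A real session would
    # call an agent API or CLI here instead of sleeping.
    await asyncio.sleep(0.01)
    return SubtaskResult(subsystem=subsystem, status="done")


async def orchestrate(subsystems: list[str], max_parallel: int = 24) -> list[SubtaskResult]:
    # Cap concurrency so at most `max_parallel` sessions run at once.
    limiter = asyncio.Semaphore(max_parallel)

    async def guarded(subsystem: str) -> SubtaskResult:
        async with limiter:
            return await run_agent_session(subsystem)

    # gather() returns results in the same order as the inputs,
    # regardless of which session finishes first.
    return await asyncio.gather(*(guarded(s) for s in subsystems))


if __name__ == "__main__":
    names = [f"subsystem-{i:02d}" for i in range(24)]
    results = asyncio.run(orchestrate(names))
    print(f"{len(results)} sessions finished")
```

The semaphore is the design choice worth noting: the orchestrator can accept any number of subtasks while keeping the number of live sessions bounded. Dependency ordering and conflict resolution, which the report also attributes to the orchestrator, are left out of this sketch.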

End-to-end execution represents the maturity shift. Agents progress from quick autocomplete to sustained autonomous work. Rakuten achieved 99.9% numerical accuracy implementing activation vector extraction across vLLM’s 12.5-million-line codebase in seven autonomous hours—work that would take weeks manually. However, Trend 8 carries a warning: agents improve defensive code review AND lower barriers for offensive exploit development, requiring early security controls and automated defense.

The Trust Gap Paradox: 96% Don’t Trust, 0-20% Delegation

While 84% of developers use AI tools and AI writes 41% of all code, 96% of developers don’t fully trust that AI-generated code is functionally correct. Developers use AI in roughly 60% of their work but can “fully delegate” only 0-20% of tasks. Only 48% always verify AI output before committing. This gap between usage and trust helps explain why aggregate productivity gains remain stuck at 10% despite 84% adoption.

The “almost right” problem drives developer frustration. 66% cite AI solutions that are “almost right, but not quite” as their biggest frustration. Reviewing AI code requires MORE effort than human code—38% of developers report this—because engineers must understand what the AI attempted, identify subtle errors, and correct hidden assumptions. It’s not wrong code that’s the problem; it’s code that looks correct but has bugs buried in logic the AI inferred incorrectly.

AI pull requests face acceptance challenges that quantify the trust issue. Only 32.7% of AI PRs get accepted versus 84.4% for human PRs. They wait 4.6x longer before review starts, though once review begins, they’re reviewed 2x faster. The bottleneck isn’t review speed—it’s the validation burden. The trust gap is the current constraint on productivity, not AI capability.
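
To put the acceptance figures in perspective, here is a back-of-envelope calculation. It uses only the two acceptance rates quoted above and assumes, purely for illustration, that each PR is accepted independently at its quoted rate; the variable names are not from any survey.

```python
# Acceptance rates quoted in the article.
ai_acceptance = 0.327
human_acceptance = 0.844

# How many times more likely a human PR is to be accepted.
acceptance_ratio = human_acceptance / ai_acceptance

# Expected PRs opened per accepted PR, under the independence assumption.
ai_prs_per_accept = 1 / ai_acceptance
human_prs_per_accept = 1 / human_acceptance

print(f"Human PRs are accepted {acceptance_ratio:.1f}x as often as AI PRs")
print(f"AI PRs opened per accepted PR: {ai_prs_per_accept:.1f}")
print(f"Human PRs opened per accepted PR: {human_prs_per_accept:.1f}")
```

Under that assumption, roughly three AI PRs are opened for each one accepted, versus a little over one for human PRs, which is one way to read the validation burden.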

Enterprise ROI: Rakuten’s 79%, TELUS’s 500K Hours

Real enterprise deployments show dramatic productivity gains with auditable metrics. Rakuten: 79% time-to-market reduction (24 days → 5 days), 99.9% accuracy on complex modifications, 7-hour autonomous coding sessions, 24 parallel agent sessions. TELUS: 500,000+ hours saved across 57,000+ members, 13,000+ custom AI solutions created, 30% faster code shipping, $90 million in benefits from 47 large-scale GenAI solutions.

Rakuten’s breakthrough demonstrates the capability ceiling: Claude Code achieved 99.9% numerical accuracy implementing activation vector extraction across vLLM’s 12.5-million-line codebase in seven autonomous hours. That’s work requiring weeks manually—identifying the right code paths across millions of lines, implementing changes consistently, testing the modifications, and documenting the approach. The ambient agent that breaks complex monorepo updates into 24 parallel sessions represents the coordination maturity: not just one agent working autonomously, but multiple specialized agents collaborating on interdependent tasks.

TELUS’s scale shows enterprise-wide adoption impact. With 57,000+ team members using AI-augmented workflows, they’ve created specialized solutions for nearly every use case. Average time savings of 40 minutes per AI interaction compound across tens of thousands of team members daily. Assuming roughly 2,080 working hours in a full-time year, 500,000 hours saved is the equivalent of about 240 full-time engineers for a year. The $90 million in benefits from 47 large-scale solutions demonstrates measurable business value, not vanity metrics.
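
The headline numbers are easy to sanity-check. The sketch below reproduces them from the figures quoted in this article; the 2,080-hour work year (40 hours times 52 weeks) is an assumption, and the implied interaction count is derived from the 40-minute average rather than reported by TELUS.

```python
HOURS_PER_FTE_YEAR = 40 * 52  # assumption: 2,080 working hours per full-time year


def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return (before - after) / before * 100


def fte_years(hours_saved: float, hours_per_year: float = HOURS_PER_FTE_YEAR) -> float:
    """Hours saved expressed as full-time-equivalent years."""
    return hours_saved / hours_per_year


# Rakuten: feature delivery time, 24 days down to 5 days.
rakuten_reduction = percent_reduction(24, 5)

# TELUS: 500,000 hours saved across 57,000 team members.
telus_fte = fte_years(500_000)
hours_per_member = 500_000 / 57_000

# Derived, not reported: interactions implied by a 40-minute average saving.
implied_interactions = 500_000 * 60 / 40

print(f"Rakuten reduction: {rakuten_reduction:.1f}%")
print(f"TELUS FTE-years: {telus_fte:.0f}")
print(f"Hours saved per team member: {hours_per_member:.1f}")
print(f"Implied AI interactions: {implied_interactions:,.0f}")
```

Spread across all 57,000 team members, the savings come to under nine hours each, so the total is driven by breadth of adoption more than by large savings per person.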

2030 Timeline: What Developers Need to Learn Now

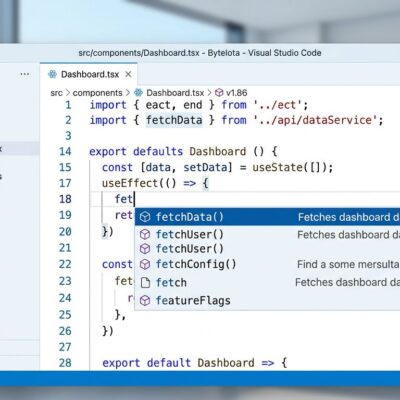

Gartner predicts that by 2030, 80% of organizations will evolve large software engineering teams into smaller, more nimble teams augmented by AI. This is a 4-year timeline, not a distant future. AI already writes 41% of code in 2026, a share projected to reach 65% by 2027. Model Context Protocol (MCP), introduced by Anthropic and adopted by OpenAI, now has 1,000+ community servers, making it the de facto standard for connecting agents to external tools and data.

The critical skills for 2026-2030 are orchestration-focused, not syntax-focused: breaking down complex tasks for multi-agent coordination; validating and reviewing AI-generated code effectively; system architecture and design, the higher-level thinking agents can’t do; security-first development, since agents help attackers too; and managing long-running autonomous workflows without losing context or control. Survey data shows 47% of developers rank “reviewing and validating AI-generated code for quality and security” as the most important skill in the AI era.

Tool selection matters for workflow fit. GitHub Copilot ($10-19/month) excels at file-focused tasks with tight GitHub integration. Cursor ($20/month) balances multi-file editing with developer control. Claude Code ($50-150/month during active sprints) offers maximum autonomy for long-running tasks. The learning curve is 6-12 months, not years—and many tools have free tiers. Developers who learn orchestration skills now will lead the restructured teams. Those who resist will find themselves obsolete—not because AI replaced them, but because other developers learned to leverage AI better.

Key Takeaways

- Engineering roles are shifting from coding to orchestration: Rakuten cuts feature delivery time from 24 days to 5 days with 24-agent parallel coordination; TELUS accumulates 500K hours saved with 13K custom AI solutions across 57K team members

- The trust gap is the productivity bottleneck: 96% of developers don’t fully trust AI code despite 84% adoption and 41% AI-generated code, with only 0-20% of work fully delegated despite 60% AI usage

- Enterprise ROI is measurable and dramatic: Rakuten achieves 99.9% numerical accuracy on a 7-hour autonomous modification within a 12.5M-line codebase; TELUS generates $90M in benefits from GenAI deployments

- Gartner’s 4-year timeline: 80% of organizations will restructure to AI-augmented teams by 2030, with AI-generated code reaching 65% by 2027 from 41% in 2026

- Learn orchestration skills now: Multi-agent coordination, effective code review, system architecture, and security-first development are the critical skills—not syntax or implementation details