Anthropic’s Project Glasswing proved AI can find security vulnerabilities at unprecedented scale. Claude Mythos Preview discovered thousands of zero-days across every major operating system and browser in just weeks—including a 27-year-old OpenBSD flaw, a 16-year-old FFmpeg bug, and a 17-year-old FreeBSD remote code execution vulnerability that grants root access. The problem: fewer than 1% have been patched. Glasswing didn’t just find vulnerabilities—it exposed a remediation crisis that makes security worse by overwhelming defenders with bugs they can’t fix.

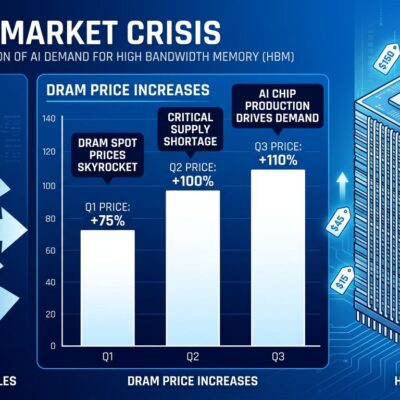

The Numbers: Detection Outpacing Remediation

AI vulnerability discovery operates at machine speed. Claude Mythos Preview scanned critical software infrastructure and identified thousands of zero-days that had survived decades of human code review and millions of automated security tests. It achieved a 72.4% success rate in autonomous exploit development against Firefox by chaining multiple independent bugs into working attack sequences.

The remediation rate tells a different story. Fewer than 1% of the discovered vulnerabilities have been patched. Defenders operate on patch cycles of “four days on a good day” while attackers move at machine speed. The median time from vulnerability disclosure to weaponized exploit dropped from 771 days in 2018 to single-digit hours by 2024. One recent threat actor compromised 2,516 organizations across 106 countries using an AI-powered autonomous attack chain.

This isn’t a success story. It’s a structural failure. Detection capability is exponential. Remediation capacity is linear. The gap between finding bugs and fixing them is widening, not closing. A growing backlog of known, exploitable vulnerabilities sits in production systems while AI continues discovering thousands more.
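The exponential-versus-linear claim can be made concrete with a toy model. The sketch below is an illustration, not a measurement: the function name `backlog_over_time` and every parameter value are assumptions chosen for readability, not figures from Glasswing.

```python
def backlog_over_time(periods, initial_found, growth, base_capacity, capacity_step):
    """Return the open backlog at the end of each period.

    Discovery compounds: findings are multiplied by `growth` each period.
    Remediation capacity grows linearly: it gains `capacity_step` fixes
    per period. All inputs are hypothetical.
    """
    backlog = 0.0
    found = float(initial_found)
    capacity = float(base_capacity)
    history = []
    for _ in range(periods):
        backlog += found                   # new findings join the queue
        backlog -= min(backlog, capacity)  # fix as many as capacity allows
        history.append(backlog)
        found *= growth                    # discovery rate compounds
        capacity += capacity_step          # capacity grows by a fixed step
    return history


if __name__ == "__main__":
    # Illustrative inputs only: 100 findings in the first period, doubling
    # each period, against 150 fixes per period that grow by 50 per period.
    for period, open_bugs in enumerate(backlog_over_time(6, 100, 2.0, 150, 50), 1):
        print(f"period {period}: {open_bugs:.0f} open")
```

With these made-up inputs the backlog stays at zero for two periods, then climbs to 150, 650, 1,900, and 4,700. Linear capacity growth keeps up only until compounding discovery overtakes it.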

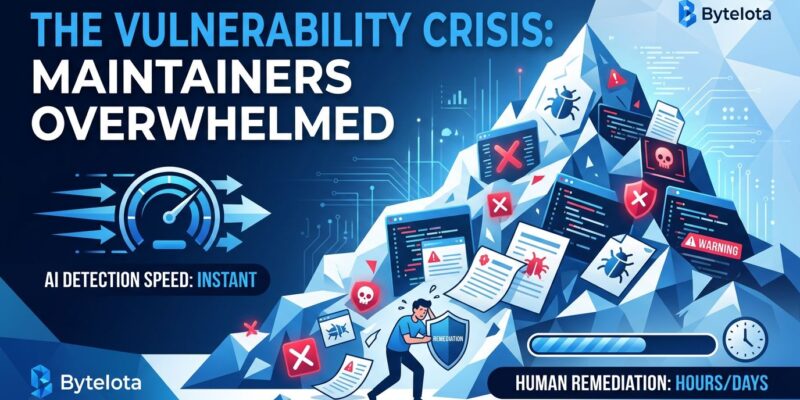

Open-Source Maintainers Are Drowning

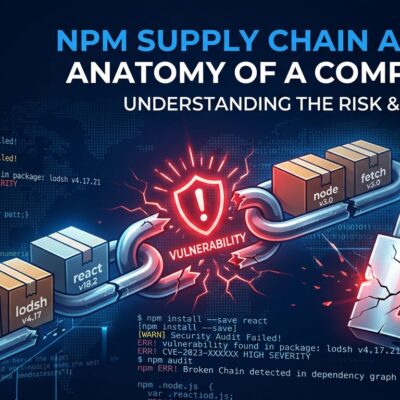

AI made it trivially easy to generate and submit vulnerability reports at scale. The result: cURL terminated its bug bounty program after being inundated with low-quality AI-generated reports. The maintainer spent 10-15 hours per week triaging submissions that clearly came from ChatGPT—reports that misunderstood basic C concepts, suggested vulnerabilities that didn’t exist, or proposed “fixes” that would introduce actual security holes.

Legitimate security reports get lost in the noise. Researchers have had valid bug discoveries initially dismissed because maintainers assumed they were more AI-generated garbage. The signal-to-noise ratio has collapsed.

Project Glasswing dumps thousands of additional vulnerability reports on maintainers who are already overwhelmed. Sixty percent of open-source maintainers work unpaid. Critical infrastructure is maintained by volunteers operating at capacity. There’s no remediation support pipeline. Anthropic has found thousands of bugs in these maintainers’ code and expects the maintainers to handle the fixes.

The Funding Mismatch: $100M for Finding, $4M for Fixing

Anthropic committed $104 million to Project Glasswing: $100 million in AI model usage credits and $4 million to open-source security organizations. That’s a 25:1 ratio favoring detection over remediation.
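The arithmetic behind that ratio is easy to check. This short sketch uses only the two dollar figures quoted above; the constant names are illustrative.

```python
from fractions import Fraction

USAGE_CREDITS_USD = 100_000_000  # from the article: AI model usage credits
OSS_SECURITY_USD = 4_000_000     # from the article: open-source security organizations

total = USAGE_CREDITS_USD + OSS_SECURITY_USD
ratio = Fraction(USAGE_CREDITS_USD, OSS_SECURITY_USD)  # reduces to 25/1
remediation_share = OSS_SECURITY_USD / total

print(f"total commitment: ${total:,}")
print(f"detection to remediation: {ratio.numerator}:{ratio.denominator}")
print(f"remediation share of total: {remediation_share:.1%}")
```

The open-source security portion comes to roughly 3.8% of the total commitment.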

The $100 million covers AI model usage, which is the cost of finding vulnerabilities. It does not cover fixing them. Each organization participating in Glasswing is responsible for remediating vulnerabilities discovered in its own software. The founding partners (Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks) have the resources to staff remediation teams. Open-source projects get $4 million total to address thousands of zero-days across every major operating system and browser.

Finding bugs is now funded, automated, and scalable. Fixing bugs remains underfunded, manual, and bottlenecked. This creates a permanently growing backlog. Is $4 million enough for thousands of critical vulnerabilities? Should the founding partners—companies worth trillions combined—provide dedicated remediation teams instead of just model credits?
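One rough way to approach the "is $4 million enough" question is to spread the sum evenly across the findings. The sketch below does that under loud assumptions: the vulnerability counts are hypothetical (the only figure available is "thousands"), the helper name `budget_per_vulnerability` is invented for illustration, and an even split ignores both severity and the fact that the money goes to security organizations rather than to individual fixes.

```python
OSS_SECURITY_USD = 4_000_000  # from the article


def budget_per_vulnerability(total_usd, vulnerability_count):
    """Evenly split a fixed budget across a number of findings."""
    if vulnerability_count <= 0:
        raise ValueError("vulnerability_count must be positive")
    return total_usd / vulnerability_count


if __name__ == "__main__":
    # Hypothetical counts; the real number is only described as "thousands".
    for count in (1_000, 5_000, 10_000):
        per_bug = budget_per_vulnerability(OSS_SECURITY_USD, count)
        print(f"{count:>6,} findings -> ${per_bug:,.0f} each")
```

Under these assumed counts the even split ranges from $4,000 down to $400 per finding, which would have to cover triage, patching, review, regression testing, and release.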

Responsible Disclosure Breaks Down at AI Scale

Traditional responsible disclosure assumes a manageable volume of vulnerability reports. A security researcher finds a flaw, reports it to the vendor, waits 90 days for a patch, then publicly discloses. The process handles dozens or hundreds of reports per year.

AI-scale discovery generates thousands of vulnerability reports simultaneously. Maintainers can’t triage that volume. Security teams are overwhelmed. Disclosure timelines become meaningless when the backlog is thousands deep. OpenAI introduced “open-ended timelines by default” for its Outbound Coordinated Disclosure Policy, explicitly recognizing that AI will find vulnerabilities faster than humans can fix them.

We have no process model for this. The entire responsible disclosure framework was designed for human-scale discovery. What happens when AI finds more bugs in a week than the security industry traditionally discloses in a year? The more than 99% of vulnerabilities that remain unpatched don’t disappear. They sit exposed in production systems while defenders drown in triage work.

We Automated Discovery, Not Remediation

AI can find vulnerabilities, develop exploits, and generate patches. The technology exists. What’s missing is the process and funding to review, test, and deploy those fixes at scale.

Who reviews AI-generated patches? Who tests them for regressions? Who validates they don’t break existing functionality? Who deploys them to production? Who pays for this work? Project Glasswing proved AI can find bugs. It also proved we have no answer to these questions.

We built AI to scale up vulnerability discovery without scaling up vulnerability remediation. The result is security theater: finding more bugs doesn’t actually improve security, it just creates a longer list of known weaknesses for attackers to exploit. Detection capability advances exponentially. Remediation capacity grows linearly at best. The gap grows.

Glasswing needs a remediation pipeline, not just an AI model. Anthropic and its founding partners should provide funded teams to fix the vulnerabilities they’re discovering—or admit that finding thousands of bugs without fixing them makes security worse, not better.