Platform Engineering’s 80% vs 10% Paradox

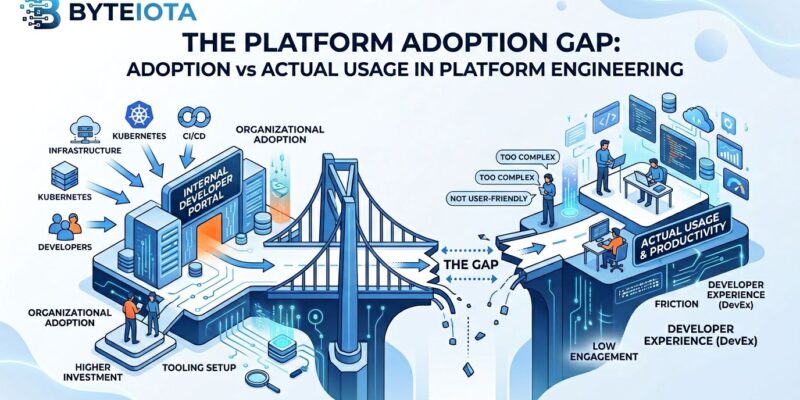

Platform engineering has hit a critical adoption crisis that nobody’s talking about. While Gartner predicts 80% of large software engineering organizations will have platform teams by the end of 2026, average Internal Developer Platform (IDP) adoption rates sit stubbornly around 10%. This creates a 70-percentage-point gap between organizational commitment and developer reality.

Companies are investing $900,000 to $10 million annually in platform engineering initiatives, with median budgets doubling in 2026. Yet 90% of developers reject these platforms. In one documented case, 64% of engineers actively circumvented their company’s platform, deploying with raw kubectl run commands instead.

The numbers expose a massive disconnect. Organizations are confusing team formation with value delivery, but having a platform team means nothing if developers don’t use the platform. This is the crisis: multi-million dollar investments with 10% adoption.

The Multi-Million Dollar Failure Pattern

70% of platform teams fail to deliver measurable impact, with nearly half disbanded or restructured within 18 months. Meanwhile, 29.6% of organizations don’t measure platform success at all. Organizations that track team formation instead of actual usage burn millions without being able to prove anything.

The investment is substantial. For scale-ups with 50-100 engineers, platform teams cost $900,000 to $1.8 million annually in fully loaded salaries, tooling, and infrastructure. Enterprise organizations with 200+ engineers spend $2 million to $4 million. Leading organizations are doubling down in 2026, investing $5-10 million to support comprehensive platform capabilities including AI, security, and observability integration.

The theoretical ROI when platforms succeed is compelling: 185-220% returns through reduced toil, shortened cycle times, and faster revenue realization. Nevertheless, theory collides with a 10% adoption reality. When 90% of developers reject your platform, ROI is negative regardless of team formation or budget allocation.
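
To make the arithmetic behind that claim concrete, here is a minimal sketch. It rests on an assumption the figures above do not state: that benefits scale linearly with the share of developers using the platform while costs stay fixed. The function names are hypothetical. Under that assumption, a platform promising 185% ROI at full adoption works out to roughly -71% at 10% adoption, and needs about 35% adoption just to break even.

```python
def realized_roi(full_adoption_roi: float, adoption_rate: float) -> float:
    """ROI as a fraction of cost at a given adoption rate.

    ASSUMPTION (not from the article): benefits scale linearly with
    adoption, and platform cost is fixed regardless of adoption.
    """
    if not 0.0 <= adoption_rate <= 1.0:
        raise ValueError("adoption_rate must be between 0 and 1")
    # At full adoption, benefit / cost = 1 + ROI. Scale benefit by adoption.
    benefit_per_unit_cost = (1.0 + full_adoption_roi) * adoption_rate
    return benefit_per_unit_cost - 1.0


def break_even_adoption(full_adoption_roi: float) -> float:
    """Adoption rate at which realized ROI is exactly zero (same assumption)."""
    return 1.0 / (1.0 + full_adoption_roi)


# The article's theoretical range: 185% to 220% ROI when platforms succeed.
for roi in (1.85, 2.20):
    print(
        f"full-adoption ROI {roi:.0%}: "
        f"ROI at 10% adoption = {realized_roi(roi, 0.10):.1%}, "
        f"break-even adoption = {break_even_adoption(roi):.1%}"
    )
```

Real benefits are unlikely to be perfectly linear in adoption, so treat the output as an order-of-magnitude illustration rather than a forecast.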

The measurement crisis compounds the problem. Organizations without usage metrics can’t answer basic questions like “What percentage of developers actually use the platform?” or “What’s the real ROI?” That makes platforms the first budget cut when economic conditions tighten.

Why Developers Reject Platforms: The Golden Cage

The “golden cage syndrome” explains the rejection pattern. IDPs designed primarily for operational control rather than developer consumption feel like constraints instead of enablers. When abstractions leak, and they always do eventually, developers find themselves trapped: unable to see or debug what’s happening under the hood, they wait for platform teams to fix issues.

When deployments fail with cryptic errors that developers can’t inspect, the “trapped in the cage” experience breeds circumvention. The data confirms this: 45.3% of organizations report developer resistance as the number one barrier to platform engineering success.

Top-down mandates without developer input accelerate failure. Platforms forced on developers who didn’t ask for them breed active rejection. The Backstage pattern illustrates this: adoption is high internally at Spotify, but stalls at less than 10% in other organizations despite 6-12 months of implementation time and 7-15 full-time engineers invested.

The maintenance burden compounds the problem. Platform teams burn out maintaining portals and fixing broken plugins before delivering the features developers actually want. The lesson is clear: platforms built by platform teams for platform teams fail. Conversely, platforms built with developer input and designed for developer productivity have a chance at success.

The DevOps Rebranding Question

Critics argue platform engineering is just “DevOps rebranded”—title drift without substance change. Some teams that were SysAdmins, then DevOps, are now platform teams without fundamentally changing what they actually do. Additionally, the best DevOps candidates now call themselves “Platform Engineers,” and companies change job postings overnight even when no one plans to build an actual platform.

The skepticism has merit. If platform engineering is DevOps rebranded, the 10% adoption crisis suggests we’re repeating the same mistakes—over-promising and under-delivering—with a new name and bigger budgets.

However, proponents argue platform engineering represents genuine structural evolution. Research from Spotify’s developer productivity team found that developers in DevOps-mature organizations spend 30-40% of their time on infrastructure tasks entirely unrelated to business logic. Platform engineering aims to reclaim this time by having specialists build self-service abstractions once, at scale.

The truth likely sits between hype and genuine innovation. Evidence for rebranding exists: some platform teams are just operations teams with new titles. Evidence for evolution exists too: product mindset, self-service golden paths, and measurable cognitive load reductions are genuinely new approaches. The question is whether organizations are chasing hype or solving real problems, and the 10% adoption rate suggests many are doing the former.

What Successful Platforms Do Differently

The few platforms that achieve high adoption share common patterns that separate success from the 70% failure rate. Successful platforms treat the IDP as a product, not a project, with product managers conducting user research, building roadmaps, measuring adoption metrics, and maintaining feedback loops with developer “customers.”

Developer-first design separates winners from failures. Platforms must be built for developer productivity, not operational control. Successful implementations report 40-50% reductions in developer cognitive load, 78% faster resource provisioning, and 92% reductions in support tickets. However, these gains only materialize when developers actually use the platform.

Transparent abstractions with escape hatches prevent golden cage syndrome. When abstractions leak, developers need visibility into underlying configurations and the ability to override when necessary. Platforms that provide “magic” one-click deployments without that visibility trap developers when things break.

Measurement is mandatory, not optional. Spotify’s approach provides a model: add instrumentation to your deployment, make metrics visible to contributing teams, and send weekly digest emails showing how usage metrics changed for individual plugins. Additionally, track actual usage—percentage of developers actively using the platform versus circumventing it—not just team formation or feature delivery.
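
As a rough illustration of tracking usage rather than team formation, the sketch below computes the share of developers who deployed through the platform versus around it. The `DeployEvent` record and its `via_platform` flag are assumptions for the example; in practice the signal would come from whatever deployment logs or audit trails an organization already has.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class DeployEvent:
    developer: str      # who triggered the deployment
    via_platform: bool  # True if it went through the IDP, False if it bypassed it


def adoption_summary(events: Iterable[DeployEvent], all_developers: Iterable[str]) -> dict:
    """Percentage of developers using the platform vs. circumventing it.

    A developer who does both in the period counts in both figures.
    """
    roster = set(all_developers)
    if not roster:
        raise ValueError("all_developers must not be empty")
    platform_users = set()
    bypassers = set()
    for event in events:
        if event.developer not in roster:
            continue  # ignore service accounts or people outside the roster
        (platform_users if event.via_platform else bypassers).add(event.developer)
    return {
        "active_platform_pct": 100 * len(platform_users) / len(roster),
        "circumventing_pct": 100 * len(bypassers) / len(roster),
    }


# Hypothetical week of deployments for a ten-person engineering group.
developers = [f"dev{i}" for i in range(10)]
events = [DeployEvent("dev0", True)] + [
    DeployEvent(f"dev{i}", False) for i in range(1, 7)
]
print(adoption_summary(events, developers))
```

Numbers like these are what a weekly digest could report, alongside the per-plugin usage changes described above.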

Start small and deliver value fast. Don’t build everything at once. If documentation sprawl is the pain point, tackle only TechDocs first. Partner with one or two friendly teams for a pilot. Organizations that try to build comprehensive platforms before proving value burn out their teams on maintenance before delivering anything developers want to use.

Key Takeaways

Organizational adoption doesn’t equal developer usage. The 80% versus 10% gap proves that having a platform team tells you nothing about whether developers actually use what you build. Measure actual usage or accept that you’re burning millions on infrastructure developers actively avoid.

Golden cages built for control drive developer rejection. Leaky abstractions without visibility or escape hatches create trapped experiences that breed circumvention. Design for developer consumption, not operational control, or watch your adoption rate stall in single digits.

The measurement crisis is the foundation of failure. 29.6% of organizations don’t measure platform success at all, making ROI unprovable and iteration impossible. Without usage metrics answering “What percentage of developers actively use this platform?”, you’re flying blind while burning millions.

Product thinking is required, not optional. Platforms need product managers, user research, adoption metrics, and feedback loops—treating developers as customers worthy of the same rigor applied to external products. Engineering-first approaches without product thinking join the 70% failure rate.

The billion-dollar question remains: Is platform engineering a genuine solution to DevOps scaling problems, or a rebranded hype cycle heading toward disillusionment? The 2026 data suggests we’re already in the disillusionment phase, with 80% organizational adoption masking a 10% developer usage crisis. Organizations doubling budgets to $5-10 million need to answer one question before writing checks: will developers actually use this, or are we just forming expensive teams to build platforms that join the 70% failure rate?