AI infrastructure spending is racing toward $600 billion in 2026, but while the industry obsesses over bigger models and more GPUs, a critical constraint is quietly strangling performance: memory bandwidth. Yesterday, Majestic Labs unveiled Prometheus, an AI server designed to tackle what engineers call the “memory wall.” The timing matters. As AI workloads shift from training to real-time inferencing, the gap between how fast processors can compute and how fast they can access data from memory is becoming the limiting factor, not raw processing power.

Understanding the Memory Wall

The memory wall refers to the performance gap between processor speed and memory access speed. Over the past two decades, server compute capacity has scaled at 3× every two years, while memory bandwidth has managed only 1.6× every two years. That widening gap means modern AI workloads spend an increasing proportion of time waiting for data instead of actually computing.
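To get a feel for how quickly those two rates diverge, the compounding can be worked out directly. The sketch below assumes both rates hold steadily across the full 20 years (ten two-year periods), which is a simplification, and the function name is purely illustrative.

```python
def compounded_gap(compute_growth: float, bandwidth_growth: float, periods: int) -> float:
    """Factor by which compute outgrows bandwidth after `periods` growth steps."""
    return (compute_growth / bandwidth_growth) ** periods


# Assumption: the 3x and 1.6x per-two-year rates apply uniformly for 20 years.
PERIODS = 20 // 2
gap = compounded_gap(3.0, 1.6, PERIODS)

print(f"Compute growth over 20 years:   {3.0 ** PERIODS:,.0f}x")
print(f"Bandwidth growth over 20 years: {1.6 ** PERIODS:,.0f}x")
print(f"Compute outgrew bandwidth by:   {gap:,.0f}x")
```

Under these assumptions the gap compounds to several hundred times, which is why a ratio that looks modest per period ends up dominating system design.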

For AI inference specifically, the problem is acute. During token generation, a 70-billion-parameter model at FP16 precision (2 bytes per parameter) must move approximately 140 GB of weight data for every token it generates. The GPU reads the model weights from VRAM for each output token, which makes single-stream inference fundamentally memory-bandwidth-bound. Adding more compute doesn’t reduce latency once you’re hitting memory limits: the compute units can process data far faster than memory can deliver it, so processing power sits idle while the memory bus becomes the constraint.
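The 140 GB figure, and the latency ceiling it implies, can be reproduced with a few lines of arithmetic. This is a rough sketch rather than a benchmark: it assumes batch size 1, ignores KV-cache traffic, and uses 4.8 TB/s (the published peak memory bandwidth of an H200) as an illustrative bandwidth. Real systems land below this ceiling, and a model this size would typically be quantized or split across GPUs.

```python
def weight_bytes(num_params: float, bytes_per_param: float) -> float:
    """Total bytes of model weights that must be read for one decoding step."""
    return num_params * bytes_per_param


def max_tokens_per_second(model_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed when weight reads dominate."""
    return bandwidth_bytes_per_s / model_bytes


# 70 billion parameters at FP16 (2 bytes each), as in the text.
model_bytes = weight_bytes(70e9, 2)

# Assumption: 4.8 TB/s peak bandwidth, used here only for illustration.
HBM_BANDWIDTH = 4.8e12

ceiling = max_tokens_per_second(model_bytes, HBM_BANDWIDTH)
print(f"Weights read per token: {model_bytes / 1e9:.0f} GB")
print(f"Bandwidth-bound ceiling: {ceiling:.1f} tokens/s")
```

Note that compute throughput never appears in the calculation: in this regime, only bandwidth and model size set the limit.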

This matters now because 2026 is shaping up to be the year of AI inference. Unlike batch training workloads, inferencing requires constant memory access for real-time responses, and at the scale of a $600 billion infrastructure buildout, idle GPU time translates directly into wasted capital.

Prometheus: A System-Level Solution

Majestic Labs’ approach differs from the industry’s incremental memory improvements. Prometheus isn’t just a faster chip—it’s a complete server architecture designed to connect vastly more memory to processors at high bandwidth. The specs are striking: up to 128 terabytes of memory capacity per standard-size server, delivering roughly 1000× more memory per processor than today’s leading GPUs.

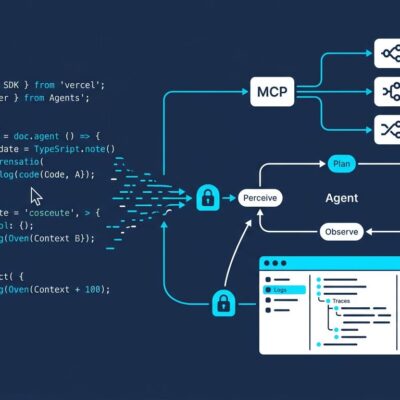

The architecture uses custom interconnects and proprietary AI Processing Units called “Ignite” to bridge the gap between compute and memory. Critically, the system supports existing frameworks including PyTorch, vLLM, and OpenAI Triton, meaning developers can deploy current code without rewrites. Majestic Labs claims the server achieves performance equivalent to multiple racks in a single unit, capable of running multi-trillion-parameter models and enabling context windows of hundreds of millions of tokens.
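The context-window claim is a capacity problem more than a bandwidth one, because the key-value (KV) cache grows linearly with context length. The sketch below estimates that growth for a hypothetical transformer with 80 layers, 8 KV heads, a head dimension of 128, and FP16 cache values. These numbers are assumptions chosen to resemble a 70B-class model with grouped-query attention; they are not Majestic Labs’ figures or any specific model’s published configuration.

```python
def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int,
                             head_dim: int, bytes_per_value: int) -> int:
    """Bytes of KV cache added per token of context."""
    # Factor of 2: one key vector and one value vector per layer per KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


# Hypothetical model configuration (assumed for illustration only).
per_token = kv_cache_bytes_per_token(num_layers=80, num_kv_heads=8,
                                     head_dim=128, bytes_per_value=2)

context_tokens = 100_000_000  # "hundreds of millions" starts around here
total_bytes = per_token * context_tokens

print(f"KV cache per token: {per_token / 1024:.0f} KiB")
print(f"KV cache for {context_tokens:,} tokens: {total_bytes / 1e12:.1f} TB")
```

Under these assumptions a single 100-million-token context needs tens of terabytes of cache, far beyond any single GPU’s HBM but within a 128 TB memory pool.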

The company has credibility behind the claims. The founding team built and shipped hundreds of millions of custom chips at Google and Meta, raised $100 million in November 2025, and is currently working with early customers ahead of wide availability expected in 2027.

Competing Approaches to the Memory Bottleneck

The industry has been attacking the memory wall from multiple angles. High Bandwidth Memory (HBM) remains the standard approach: HBM3E dominates the 2026 market, and HBM4 is entering production with per-pin transfer speeds of up to 11 Gbps. NVIDIA’s H200 packs 141 GB of HBM3E. The limitation is capacity: widely deployed HBM-based GPUs such as the H100 and H200 carry only 80 to 141 GB each.

AI chip startups have tried more radical approaches. Cerebras uses wafer-scale on-chip SRAM to keep all data on the processor, but SRAM is far less dense and more expensive than off-chip memory. Groq (recently acquired by NVIDIA in January 2026) built an SRAM-only architecture optimized for inference throughput, but the reliance on expensive SRAM limits capacity per chip.

There’s also Compute Express Link (CXL), an industry-standard protocol for memory pooling that enables dynamic allocation across devices. Reported CXL deployments show a 3.8× speedup compared to RDMA, and the technology is moving from technical exploration to commercial use.

Majestic Labs’ differentiation is clear: while others make memory faster or use expensive on-chip alternatives, Prometheus focuses on connecting massively more memory—128 TB versus under 200 GB—through custom system-level architecture while maintaining software compatibility.

Why Infrastructure Optimization Matters

While headlines chase the next frontier model release, infrastructure efficiency is quietly becoming the critical competitive advantage. The numbers back this up: 73% of organizations are actively moving AI inferencing to edge environments for energy efficiency, driving a shift from massive centralized data centers to distributed 5-20 MW facilities closer to end users.

For developers, understanding these infrastructure constraints shapes real deployment decisions: model selection, batch sizes, context window lengths, and cost-per-inference calculations all flow from memory bandwidth realities. For businesses, reducing inference costs isn’t an optimization—it’s what makes AI applications economically viable at scale.
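One way to see how batch size feeds into cost-per-inference is a simple bandwidth-bound model: each decoding step reads the weights once and produces one token for every sequence in the batch. The sketch below uses that model with a 70B FP16 model, an assumed 4.8 TB/s of memory bandwidth, and a placeholder hourly price of $4.00 that is not a real quote. It ignores KV-cache traffic and compute limits, so the linear scaling only holds for modest batch sizes.

```python
def tokens_per_second(weight_bytes: float, bandwidth: float, batch_size: int) -> float:
    """Bandwidth-bound decode throughput: one weight pass serves the whole batch."""
    step_seconds = weight_bytes / bandwidth
    return batch_size / step_seconds


def cost_per_million_tokens(hourly_cost: float, tok_per_s: float) -> float:
    """Dollar cost of generating one million tokens at a given throughput."""
    return hourly_cost / (tok_per_s * 3600) * 1_000_000


WEIGHT_BYTES = 70e9 * 2   # 70B parameters at FP16
BANDWIDTH = 4.8e12        # assumption: 4.8 TB/s memory bandwidth
HOURLY_COST = 4.00        # placeholder price in dollars, not a real quote

for batch_size in (1, 8, 32):
    rate = tokens_per_second(WEIGHT_BYTES, BANDWIDTH, batch_size)
    cost = cost_per_million_tokens(HOURLY_COST, rate)
    print(f"batch={batch_size:>2}  {rate:8.1f} tokens/s  ${cost:6.2f} per million tokens")
```

In this simplified model, cost per token falls in direct proportion to batch size, which is why serving stacks work so hard to keep batches full, and why longer contexts that crowd out batch capacity raise costs.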

What to Watch

Prometheus is unproven technology from a startup competing against NVIDIA’s entrenched ecosystem. The critical questions are straightforward: Can Majestic Labs deliver 128 TB capacity at the claimed bandwidth in production? How does total cost of ownership compare to multi-rack GPU deployments? Will the software ecosystem develop beyond framework support?

Execution risk is real, but the industry momentum is undeniable. Whether Prometheus succeeds or not, memory optimization is becoming the next infrastructure frontier. The memory wall isn’t going away, and solving it—whether through proprietary architectures like Majestic Labs’, standards-based approaches like CXL, or improved HBM implementations—will define which AI deployments scale and which hit a ceiling.

The $600 billion question isn’t just how much compute we can buy. It’s whether we can feed data to that compute fast enough to justify the investment.