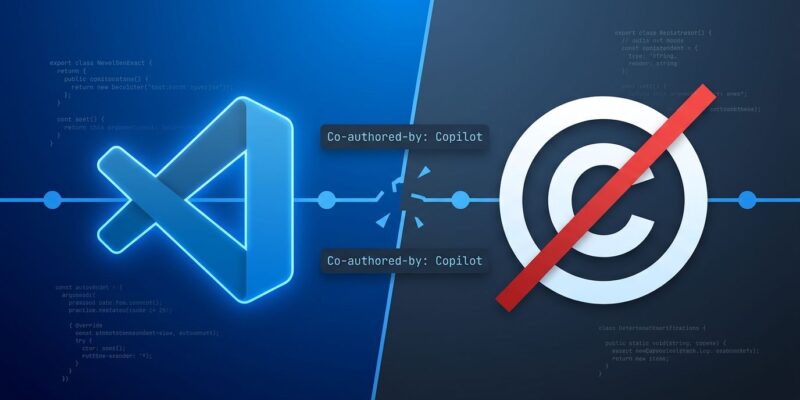

On April 29, 2026, Microsoft released VS Code 1.118 with a controversial new default: automatically adding “Co-authored-by: Copilot <copilot@github.com>” to git commits whenever AI makes any code contribution, even trivial autocomplete like fixing typos or adding commas. The feature is opt-out, not opt-in, and has already affected 4 million commits on GitHub. But here’s the problem: less than two months earlier, the U.S. Supreme Court denied certiorari in Thaler v. Perlmutter, leaving in place the appellate ruling that non-human authors cannot hold copyright. Listing a non-human as co-author on copyrightable code creates a legal minefield that Microsoft never addressed.

Non-Human Authors Can’t Hold Copyright—But VS Code Lists One Anyway

The timing is remarkable. On March 2, 2026—just 58 days before VS Code 1.118 shipped—the Supreme Court declined to hear Thaler v. Perlmutter, leaving intact the D.C. Circuit’s ruling that “the Copyright Act requires copyrightable works to be authored by a human being.” The Copyright Office was explicit: only works authored by humans are eligible for copyright protection.

This creates immediate problems. If copyright law says non-humans can’t be authors, what does it mean to list one as a co-author on copyrightable code? Open source licenses like GPL, MIT, and Apache all assume human authorship for copyright to apply. Corporations with strict IP requirements may inadvertently complicate ownership claims by listing AI as co-author. The legal question isn’t theoretical—4 million commits already carry this attribution.

Microsoft released a feature that sits in tension with a ruling the Supreme Court had just let stand, without addressing the copyright implications. That’s not thoughtful product design. It’s legal recklessness.

Developers Revolt: “Forced Advertising in My Git History”

The backlash was swift. Within 24 hours of discovery, GitHub Issue #313064 erupted with complaints. “GitHub injecting an authorship claim into my repository without consent,” wrote user matriac. “You don’t get to silently assert authorship on my work.” The thread quickly filled with similar frustrations.

Hacker News was equally brutal. One highly upvoted comment compared it to spam: “That is not coauthorship. It’s like ‘Sent from an iPhone’ levels of nuisance default advertising.” Another developer who doesn’t even use Copilot complained the tool was claiming ownership of their code anyway, calling Microsoft’s approach “desperate.”

The philosophical objection is clear: git history is permanent. Once you’ve pushed commits with “Co-authored-by: Copilot,” removing it requires rewriting history, which breaks forks and disrupts collaboration. Developers feel their commit history—a professional record of their work—is being used for Microsoft marketing without their consent.
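To make the cost of removal concrete, here is a minimal sketch of the message-cleaning step a history rewrite would need. The function name `strip_copilot_trailer` is hypothetical, and the sketch only edits a message string. Applying it to real commits still means running a history-rewriting tool (for example a message filter in `git filter-branch` or `git filter-repo`), which changes every affected commit hash.

```python
import re

# Matches the Copilot co-author trailer quoted in this article, case-insensitively.
COPILOT_TRAILER = re.compile(
    r"^co-authored-by:\s*copilot\s*<copilot@github\.com>\s*$",
    re.IGNORECASE,
)


def strip_copilot_trailer(message: str) -> str:
    """Return the commit message without Copilot co-author trailer lines."""
    kept = [line for line in message.splitlines() if not COPILOT_TRAILER.match(line)]
    # Drop blank lines left dangling at the end, then restore a single newline.
    while kept and not kept[-1].strip():
        kept.pop()
    return "\n".join(kept) + "\n" if kept else ""
```

Trailers naming human co-authors are left untouched, since the pattern only matches the Copilot name and address.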

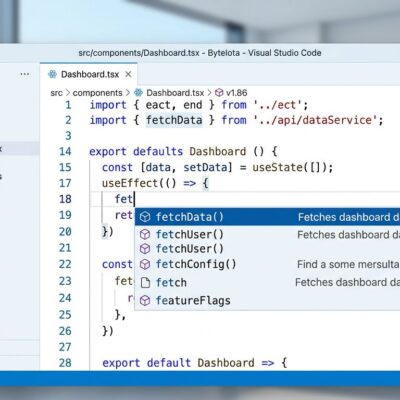

How VS Code’s Auto-Attribution Works (And How to Turn It Off)

VS Code 1.118 introduces the `git.addAICoAuthor` setting, defaulting to `chatAndAgent`. When you commit code, the editor detects AI-assisted content and automatically appends a co-author trailer. The trigger threshold is low: even accepting a comma suggestion or typo fix qualifies.

Here’s what happens silently:

```bash
# What you type:
git commit -m "Fix authentication bug"

# What VS Code 1.118 creates:
git commit -m "Fix authentication bug

Co-authored-by: Copilot <copilot@github.com>"
```

The modification happens unless you manually review the commit message before confirming. Many users discovered it only after seeing “Co-authored-by: Copilot” appear in git logs or CI output, weeks after the feature activated via auto-update. VS Code 1.118 documentation explains the feature, but most developers don’t read release notes.
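The message transformation described above can be sketched in a few lines. This is an illustration of the trailer format only, not VS Code’s actual implementation. The function name `add_coauthor_trailer` is hypothetical.

```python
def add_coauthor_trailer(message: str, name: str, email: str) -> str:
    """Append a Co-authored-by trailer to a non-empty commit message."""
    trailer = f"Co-authored-by: {name} <{email}>"
    body = message.rstrip("\n")
    lines = body.splitlines()
    if trailer in lines:
        return body + "\n"  # already present, do not duplicate
    # Trailers form the last paragraph of the message: start a new paragraph
    # unless the message already ends with a co-author trailer.
    ends_with_trailer = bool(lines) and lines[-1].lower().startswith("co-authored-by:")
    separator = "\n" if ends_with_trailer else "\n\n"
    return f"{body}{separator}{trailer}\n"
```

The blank line matters: the trailer has to sit in its own final paragraph, which is why the one-line message you typed turns into a three-line message.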

To disable it, add this to your settings.json:

```json
{
  "git.addAICoAuthor": "off"
}
```

Or search “Git Add AI Co Author” in VS Code settings and toggle it off. Check if you’re already affected by running: `git log --grep="Co-authored-by: Copilot"`. GitHub’s co-authoring documentation explains how the trailer format works.
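If you want commit hashes rather than a scrolling log, a small script can do the same check. The sketch below is one possible approach. The helper names `commits_with_trailer` and `scan_repo` are hypothetical, and the separator bytes are an arbitrary choice. Unlike `--grep`, which matches the text anywhere in a message, it only counts lines that start with the trailer.

```python
import subprocess

RECORD_SEP = "\x1e"  # separates commits in the git log output
FIELD_SEP = "\x1f"   # separates the hash from the message body


def commits_with_trailer(log_text: str, needle: str = "co-authored-by: copilot") -> list[str]:
    """Return hashes of commits whose message has a line starting with `needle`."""
    hits = []
    for record in log_text.split(RECORD_SEP):
        if FIELD_SEP not in record:
            continue  # skip the empty remainder after the last separator
        commit_hash, body = record.split(FIELD_SEP, 1)
        if any(line.strip().lower().startswith(needle) for line in body.splitlines()):
            hits.append(commit_hash.strip())
    return hits


def scan_repo(path: str = ".") -> list[str]:
    """Run git log in `path` and return the affected commit hashes."""
    out = subprocess.run(
        ["git", "-C", path, "log", "--format=%H%x1f%B%x1e"],
        capture_output=True, text=True, check=True,
    ).stdout
    return commits_with_trailer(out)
```

Calling `scan_repo()` from the root of a repository returns the list of affected hashes, newest first, following the order `git log` prints them.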

Other AI Tools Don’t Do This—Here’s How Cursor and Claude Code Handle It

Microsoft’s approach is an outlier. Other AI coding assistants make attribution opt-in, not opt-out. Cursor offers an optional PR footer in settings, disabled by default. Claude Code uses explicit co-author format (Co-Authored-By: Claude Sonnet 4.5 <noreply@anthropic.com>), but only when the user chooses. Sourcegraph Cody has no automatic commit attribution at all.

The pattern is clear: industry consensus respects user choice. Microsoft broke from that consensus by forcing attribution as the default, then requiring users to find and disable a buried setting. This isn’t about transparency—transparent tools ask permission. This is about branding.

What This Means for Open Source: GPL, MIT, and Copyright Ambiguity

Open source licenses rest on copyright law, which assumes human authorship. GPL requires derivative works remain freely available under the same license. MIT and Apache offer permissive terms but still assume the copyright holder is human. If AI is listed as co-author and non-humans can’t hold copyright, the legal foundation becomes uncertain.

There’s a deeper problem: GitHub Copilot was trained on open source code under various licenses. When it suggests code, you might be accepting GPL-licensed snippets without knowing it. Listing a non-human co-author on that code adds another layer of attribution ambiguity on top of existing concerns about AI training on copyleft-licensed code. Open source licensing guides don’t yet address this scenario because the law hasn’t caught up to the technology.

Some open source projects may start rejecting PRs with AI co-author markers to avoid copyright complications. That’s not paranoia. It’s prudent legal hygiene while the question remains unsettled.
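A project that went this route would need some way to spot the markers. Here is a minimal sketch of such a check, assuming a project-defined blocklist. The `AI_NAMES` tuple and the `ai_coauthors` function are hypothetical, and a real policy would have to decide which tools to list.

```python
import re

# Hypothetical blocklist: names a project might treat as AI tools.
AI_NAMES = ("copilot", "claude")

# A co-author trailer has the shape "Co-authored-by: Name <email>".
TRAILER = re.compile(
    r"^co-authored-by:\s*(?P<name>[^<]*?)\s*<(?P<email>[^>]*)>\s*$",
    re.IGNORECASE,
)


def ai_coauthors(message: str, blocked=AI_NAMES) -> list[str]:
    """Return co-author names in `message` that match the blocklist."""
    found = []
    for line in message.splitlines():
        match = TRAILER.match(line.strip())
        if match and any(b in match.group("name").lower() for b in blocked):
            found.append(match.group("name"))
    return found
```

Wired into a `commit-msg` hook or a CI job, a non-empty result would be the signal to reject the commit or ask the contributor to confirm who wrote the code.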

Key Takeaways

- Check if you’re affected: run `git log --grep="Co-authored-by: Copilot"` to see if your commits already include AI attribution

- Disable the feature immediately if you care about copyright clarity: set `"git.addAICoAuthor": "off"` in VS Code settings

- Understand the legal risk: the Supreme Court let stand a ruling that non-humans can’t be copyright authors (March 2026), yet Microsoft lists one as co-author by default (April 2026)

- This approach is an outlier: Cursor, Claude Code, and Cody all make AI attribution opt-in, not opt-out—Microsoft’s default-on model prioritizes branding over user control

- Open source implications are real: projects relying on GPL, MIT, or Apache licenses may reject AI co-author markers to avoid copyright ambiguity

Microsoft released a feature that sits in tension with a ruling the Supreme Court had just let stand, activated it by default without asking permission, and left millions of developers to discover “Co-authored-by: Copilot” in their git logs after the fact. The legal implications remain unresolved. The user consent question is clear: this was the wrong call.