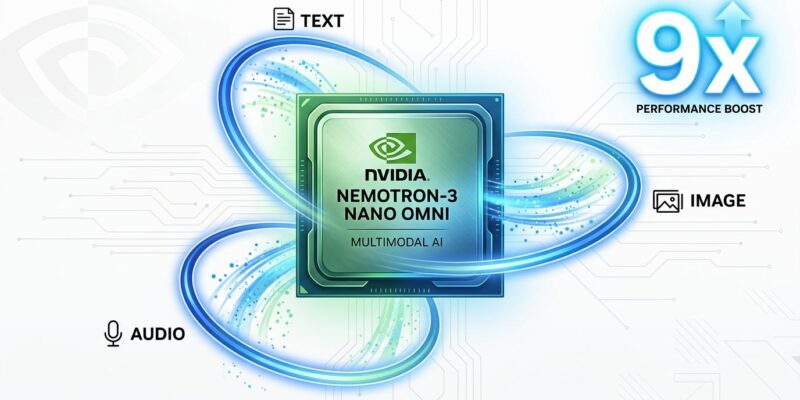

NVIDIA launched Nemotron 3 Nano Omni on April 28, 2026, claiming it’s the first open multimodal model with 9x higher throughput than comparable alternatives. The model processes text, images, audio, video, documents, charts, and GUIs simultaneously through a unified 30B-A3B architecture. This matters because it’s the first time an open model credibly challenges closed alternatives on throughput—changing the economics of multimodal AI from per-token API costs to self-hosted infrastructure.

The 9x Throughput Claim Deserves Scrutiny

NVIDIA claims Nemotron 3 Nano Omni delivers “up to 9x higher throughput than other open omni models.” The evidence: 9.2x greater system capacity for video reasoning, 7.4x for multi-document processing, and 2.9x faster single-stream speed. However, there’s a catch—this compares to “other open omni models,” not GPT-4V, Claude, or Gemini. The likely baseline is Qwen3-Omni 30B, the closest open competitor, measured under “fixed interactivity thresholds”.

The architecture enables this efficiency. Nemotron uses a hybrid mixture-of-experts design: 30 billion total parameters, but only 3 billion active per token. That’s a 4x improvement in memory and compute efficiency versus dense models. The open question is whether this advantage comes from architectural innovation or from NVIDIA’s hardware optimization for its own GPUs. Either way, it makes 24/7 document, audio, and video processing economically viable at scale, something per-token API pricing has blocked.
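To make the "30B total, 3B active" idea concrete, here is a minimal sketch of top-k expert routing, the general mechanism behind mixture-of-experts layers. The 128-expert count comes from the article; the value of `TOP_K`, the random router scores, and the function name `top_k_route` are illustrative assumptions, not details of NVIDIA's implementation.

```python
import math
import random


def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    # Softmax over the selected logits only, so the mixing weights sum to 1.
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


NUM_EXPERTS = 128  # from the article
TOP_K = 6          # assumption: the article does not state how many experts fire

random.seed(0)
# Stand-in router scores for one token; a real router computes these from the token.
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
routes = top_k_route(logits, TOP_K)

# Only the chosen experts run for this token; the rest stay idle.
TOTAL_PARAMS = 30e9   # from the article
ACTIVE_PARAMS = 3e9   # from the article
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

print(f"experts used for this token: {[i for i, _ in routes]}")
print(f"share of parameters active per token: {active_fraction:.0%}")
```

Per-token compute tracks the active parameters, but all 30B parameters still have to sit in memory, which is why the memory figures later in the article are quoted against the full model size.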

Unified Architecture Eliminates Pipeline Complexity

Traditional multimodal systems juggle separate models (a vision encoder, a speech transcriber, a language model), losing time and context as data passes between them. In contrast, Nemotron 3 Nano Omni combines all modalities in a single unified architecture: 23 Mamba layers for long-context efficiency, 23 MoE layers with 128 experts, and 6 attention layers for global reasoning. Vision processing uses the C-RADIOv4-H encoder with dynamic resolution (512×512 to 1840×1840), while audio runs through Parakeet-TDT-0.6B-v2, so the model processes the audio natively rather than working from a transcript.

The 256K token context window handles 100+ page documents or 5+ hours of audio. The real-world impact: analyze a financial report with mixed text, charts, and images in one pass; process customer service calls with audio and video together without stitching pipelines; build computer-use agents that understand screens and context simultaneously. The result is a simpler stack, lower latency, and native cross-modal reasoning.
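As a rough sanity check on those context figures, the arithmetic below works out the token budget per page and per second of audio that the 256K window implies. It assumes "256K" means 256,000 tokens (it could also mean 262,144) and treats the "100+ pages" and "5+ hours" figures as exact; the results are implied ceilings, not measured tokenization rates.

```python
CONTEXT_TOKENS = 256_000  # assumption: "256K" read as 256,000 tokens


def budget_per_unit(context_tokens, units):
    """Average tokens available per unit if the whole window is spent on them."""
    return context_tokens / units


# 100 pages filling the window leaves this many tokens per page on average.
tokens_per_page = budget_per_unit(CONTEXT_TOKENS, 100)

# 5 hours of audio filling the window leaves this many tokens per second.
audio_seconds = 5 * 60 * 60
tokens_per_audio_second = budget_per_unit(CONTEXT_TOKENS, audio_seconds)

print(f"tokens per page: {tokens_per_page:.0f}")
print(f"tokens per second of audio: {tokens_per_audio_second:.2f}")
```

Dense pages with charts or long calls with video would eat into these budgets faster, so real capacity depends on the content mix.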

Open Weights Change the Economics

Nemotron 3 Nano Omni ships with fully open weights, training recipes, and evaluation code. This enables self-hosting without per-token costs, unlike GPT-4V, Claude, or Gemini. The trade-off: closed APIs scale with usage (pay per token), while open models require upfront GPU investment but then run at fixed cost. Consequently, high-volume use cases—processing millions of documents, 24/7 monitoring, enterprise data pipelines—break even fast.
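The break-even point can be sketched with simple arithmetic. Both prices below are hypothetical placeholders, not quotes from any provider or from NVIDIA, and the model ignores engineering time and the throughput ceiling of a single self-hosted deployment.

```python
def monthly_api_cost(tokens_per_month, usd_per_million_tokens):
    """Usage-based cost: grows linearly with token volume."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def break_even_tokens(fixed_usd_per_month, usd_per_million_tokens):
    """Monthly token volume at which self-hosting costs the same as the API."""
    return fixed_usd_per_month / usd_per_million_tokens * 1_000_000


# Hypothetical inputs, chosen only to illustrate the calculation.
API_PRICE = 5.0     # USD per million tokens (assumption)
GPU_COST = 2_000.0  # USD per month for amortized hardware and power (assumption)

threshold = break_even_tokens(GPU_COST, API_PRICE)

for volume in (50_000_000, 400_000_000, 2_000_000_000):
    api = monthly_api_cost(volume, API_PRICE)
    cheaper = "self-hosting" if api > GPU_COST else "API"
    print(f"{volume:>13,} tokens/month: API ${api:,.0f} vs fixed ${GPU_COST:,.0f} -> {cheaper}")

print(f"break-even volume: {threshold:,.0f} tokens/month")
```

Below the threshold the API is cheaper; above it, every additional token widens the gap in favor of fixed-cost hardware, which is the dynamic behind the high-volume use cases listed above.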

Deployment is flexible. With NVFP4 quantization the model needs about 18GB of VRAM, which fits on consumer GPUs like the 24GB RTX 4090. Production deployments use FP8 (30GB) or BF16 (60GB) for higher accuracy. The model is available via Hugging Face, OpenRouter, and 25+ platforms, with early adopters including Palantir, Oracle, Foxconn, Dell, Docusign, and Infosys. That’s enterprise validation.
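The memory figures follow largely from parameter count times bits per parameter. The sketch below reproduces the BF16 and FP8 numbers and estimates the NVFP4 weight footprint, assuming 4-bit values with one 8-bit scale per 16-value block; that block layout is an assumption about the format, and the calculation covers weights only, not activations or cache.

```python
GB = 1e9  # decimal gigabytes, matching the article's round figures


def weight_footprint_gb(num_params, bits_per_param):
    """Approximate memory needed just to hold the weights."""
    return num_params * bits_per_param / 8 / GB


PARAMS = 30e9  # total parameters, from the article

bf16_gb = weight_footprint_gb(PARAMS, 16)
fp8_gb = weight_footprint_gb(PARAMS, 8)

# Assumption: 4-bit values plus one 8-bit scale shared by each block of 16 values.
NVFP4_BITS = 4 + 8 / 16
nvfp4_gb = weight_footprint_gb(PARAMS, NVFP4_BITS)

print(f"BF16:  {bf16_gb:.1f} GB")
print(f"FP8:   {fp8_gb:.1f} GB")
print(f"NVFP4: {nvfp4_gb:.1f} GB (weights only)")
```

The NVFP4 estimate lands a little under the 18GB quoted above. The gap may come from layers kept at higher precision or from runtime overhead, but the article does not say.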

Benchmarks show Nemotron leads open alternatives. It scores 57.5 on MMLongBench-Doc versus Qwen3-Omni’s 49.5, hits 72.2 on Video-MME versus 70.5, and reaches 89.4 on VoiceBench versus 88.8. It tops six leaderboards for document intelligence and audio-video understanding. Against closed models, it’s competitive but not universally better: GPT-4V still leads on some tasks. Still, the takeaway holds: open models are catching up.

NVIDIA’s Strategic Conflict

NVIDIA is a GPU hardware company. It sells chips to OpenAI, Anthropic, Meta, and Google—billions in revenue. Now it’s releasing AI models that compete with those customers. This is a strategic pivot: vertical integration to control the full stack, similar to Apple moving from Intel chips to custom silicon.

The open-source strategy is a moat. By releasing competitive open models, NVIDIA prevents closed AI providers from controlling the entire stack. However, it creates tension: will OpenAI and Anthropic keep buying GPUs from a competitor? Short-term, NVIDIA maintains hardware sales while building model credibility. Long-term, it’s positioning itself as a full-stack AI company: hardware plus software.

Key Takeaways

- NVIDIA’s Nemotron 3 Nano Omni launched April 28, 2026, as the first competitive open multimodal model

- 9x throughput claim compares to open alternatives (Qwen3-Omni), not closed models (GPT-4V)

- Unified architecture handles text, images, audio, video in 256K context without pipeline complexity

- Open weights enable self-hosting economics: viable for high-volume use cases versus per-token APIs

- NVIDIA’s strategic shift: GPU hardware company now competes in AI models against its own customers