Nine days ago, Sam Altman went on a podcast and criticized Anthropic for gatekeeping its Mythos cybersecurity model. He called it “fear-based marketing” and compared it to selling $100 million bomb shelters. On April 30, OpenAI announced that GPT-5.5 Cyber will roll out only to “critical cyber defenders” through a gated access program. Same playbook, different logo.

The Bomb Shelter Sales Pitch

During an April 21 appearance on the Core Memory podcast, Altman took direct shots at Anthropic’s restricted release strategy. His criticism was specific and biting:

“It is clearly incredible marketing to say: ‘We have built a bomb. We are about to drop it on your head. We will sell you a bomb shelter for $100 million. You need it to run across all your stuff, but only if we pick you as a customer.’”

He accused Anthropic of wanting to keep AI “in the hands of a smaller group of people” and using fear to justify gatekeeping. The implication was clear: OpenAI would do things differently.

Then OpenAI Did the Same Thing

Days later, OpenAI announced its Trusted Access for Cyber program. GPT-5.5 Cyber will roll out to governments, critical infrastructure operators, security vendors, cloud platforms, and financial institutions. Individual security researchers can apply at chatgpt.com/cyber after providing identification and professional use-case justification. Access is tiered based on trust level. The Register called it “checking IDs at the door.”

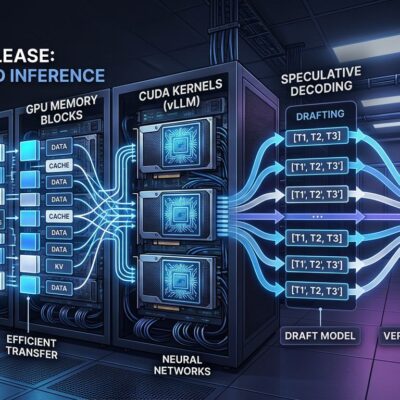

The tool is powerful. It handles penetration testing, vulnerability discovery, and malware reverse engineering. The UK AI Security Institute rated it “one of the strongest models” tested for cyber tasks. It completed complex network attack simulations that take human experts 20 hours. However, it cannot independently develop full-chain exploits against real-world targets, which places it at “High” capability rather than “Critical” under OpenAI’s framework.

Who Gets to Play and Who Doesn’t

OpenAI plans to distribute GPT-5.5 Cyber to “thousands of verified defenders and hundreds of teams.” That sounds broad until you consider who is likely to be left out in practice: independent security researchers who cannot clear the identification and use-case requirements, academics without institutional backing, smaller security firms, and developers who want to experiment with cutting-edge pentesting AI.

Anthropic restricted Mythos to roughly 50 organizations. OpenAI’s scale is larger, but the philosophy is identical. Both companies decide who’s trustworthy enough. Both cite security concerns. Both create tiered access based on organizational size and importance. The difference is degree, not kind.

Why Developers Should Care

When the most powerful security tools get locked behind institutional gates, innovation happens inside walled gardens. Researchers outside those gates can’t experiment. The feedback loop during early testing narrows to vetted players. Knowledge consolidates with governments and large enterprises that already have massive security teams.

Alex Stamos, chief product officer at Corridor, defended the cautious approach but acknowledged the window is closing: “we only have something like six months before the open-weight models catch up to the foundation models in bug finding.” If that timeline holds, these restrictions are temporary theater.

Gatekeeping Doesn’t Even Work

On April 23, a group leaked Anthropic’s Mythos model by guessing where it was located. One member was an Anthropic contractor. Restrictions created a black market that defeated the security theater within days of Mythos deployment. OpenAI’s broader TAC program might slow this, but determined actors will find ways around access controls. They always do.

The choice isn’t between safe restriction and dangerous openness. It’s between official channels and unofficial ones, between legitimate research and underground distribution.

The “Open” in OpenAI Fades Further

OpenAI released GPT-2 openly. With GPT-3, it began restricting access. GPT-4 tightened further. Now cybersecurity tools require identity verification, institutional backing, and multi-tier approval processes. With each model release, the company moves further from its founding premise.

Altman’s April 21 criticism of Anthropic reads differently now. He wasn’t objecting to gatekeeping. He was objecting to someone else controlling the gate. Corporate hypocrisy dressed up as principle.

What This Means Going Forward

Watch this pattern. Both leading AI companies now restrict their most capable security tools. They’ll frame it as responsible deployment. They’ll cite security risks. They’ll promise broader access later. And access to cutting-edge AI capabilities will stratify along institutional lines while companies that criticized gatekeeping implement it themselves.

The tools that promise to democratize security testing are being distributed through the least democratic channels possible. And the irony is apparently lost on no one except the people selling the bomb shelters.