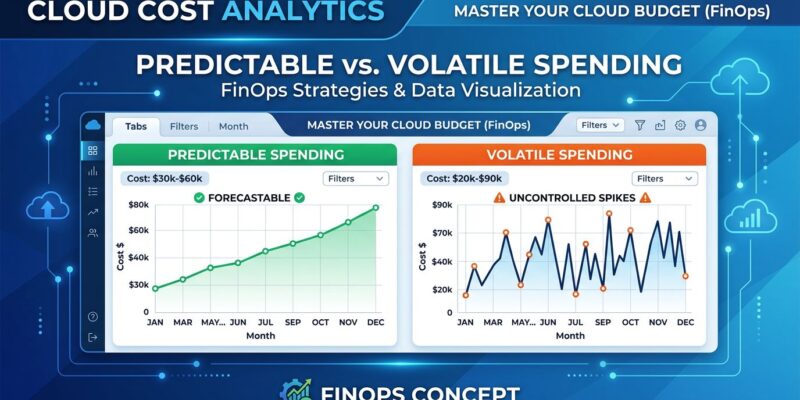

Companies pulling ahead in 2026 aren’t spending less on cloud infrastructure—they’re predicting better. The real issue isn’t how much infrastructure costs, but how reliably those costs behave over time. A volatile $50,000-per-month cloud bill is worse than a stable $60,000 bill because the former prevents planning. Finance teams need reliable forecasts for business planning, and cloud cost volatility breaks traditional budgeting.

Yet 74% of CFOs report monthly cloud forecast variance of 5-10% or higher, according to Flexera’s 2026 data. The strategic shift is underway: from “reduce spend” to “predict spend.” And the companies that figure this out first will widen the performance gap over the next 12-24 months.

The Waste That Won’t Go Away

Despite FinOps maturity nearly doubling—from 39% to 72% formal adoption between 2022 and 2025—cloud waste remains stubbornly high at 27-35%. In fact, CloudZero’s 2026 data shows efficiency actually dropped from 80% to 65%. More tools and dashboards haven’t solved the problem.

The waste breakdown reveals compute as the largest culprit, accounting for 35% of wasted cloud dollars, according to SpendArk’s 2026 benchmark report. Storage follows at 25%, data transfer at 20%, and unused resources at 15%. Those four categories add up to 95%; the figures cited here leave the remaining 5% unattributed. The paradox is clear: FinOps programs proliferated, but efficiency fell 15 points. Why? Traditional optimization playbooks don’t address modern cloud economics, particularly AI workload patterns.

The problem is solvable. Well-managed teams can reduce waste to 10-15%, and organizations at advanced FinOps maturity report waste levels of 14-18%, roughly half the sector average. Most organizations aren’t there yet, and the implementation gap is real: Hacker News discussions suggest that only about 5% of FinOps tool recommendations actually get executed. Pretty dashboards don’t equal cost reduction.

AI Makes Cloud Costs Unpredictable

AI and ML workloads now account for 22% of cloud costs, and they behave nothing like traditional infrastructure. Eighty percent of companies exceed AI cost forecasts by 25% or more, according to Finout’s 2026 statistics. The problem isn’t just overspending—it’s the inability to predict spending patterns.

GPU utilization has plummeted to just 5% across analyzed clusters, according to TechBriefly’s April 2026 report, yet GPU prices are rising for the first time in cloud history. AWS raised H200 Capacity Block prices by 15% in January 2026. AI and GPU workloads grew 62% year-over-year from 2025 to 2026, making GPU waste the fastest-growing category of cloud overspend. A GPU bills by the hour whether or not it is doing work, so a 5% utilization rate means roughly 95 cents of every GPU dollar pays for idle time.
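
To make the 5% figure concrete, here is a minimal sketch of what low utilization does to the price of a useful GPU-hour. The $4.00 hourly rate and the 8-GPU cluster are hypothetical numbers chosen for illustration, not figures from any of the reports cited here, and the function names are invented for this example.

```python
HOURS_PER_MONTH = 730.0  # average hours in a month; an assumption for this sketch


def effective_cost_per_useful_hour(hourly_rate: float, utilization: float) -> float:
    """Price of one hour of actual GPU work, given the fraction of paid time in use."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate / utilization


def monthly_idle_spend(hourly_rate: float, utilization: float, gpus: int) -> float:
    """Dollars per month paid for GPU hours that did no work."""
    if not 0 <= utilization <= 1:
        raise ValueError("utilization must be in [0, 1]")
    return hourly_rate * (1.0 - utilization) * gpus * HOURS_PER_MONTH


# Hypothetical inputs: $4.00 per GPU-hour, 8 GPUs reserved all month.
rate = 4.00
useful_hour_cost = effective_cost_per_useful_hour(rate, 0.05)
idle_spend = monthly_idle_spend(rate, 0.05, gpus=8)
print(f"cost per useful GPU-hour: ${useful_hour_cost:,.2f}")
print(f"monthly idle spend:       ${idle_spend:,.2f}")
```

Under these assumed numbers, the sticker price understates the real cost of useful work by a factor of 20, which is why utilization matters more than the hourly rate.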

The resulting cloud cost predictability gap is stark. FinOps matured in a world where costs were mostly predictable: spin up compute, run the workload, shut down. AI doesn’t behave like that. Training runs spike unpredictably, inference costs scale non-linearly, and GPU utilization patterns defy traditional capacity planning. This gap has led to a $400 million collective “leak” in unbudgeted cloud spend across the Fortune 500.

Hyperscalers are doubling down: Amazon, Alphabet, Microsoft, Meta, and Oracle are forecast to exceed $600 billion in capital expenditure in 2026, with $450 billion—75% of the total—directly tied to AI infrastructure. The AI cost crisis is structural, not temporary.

Why Cloud Cost Predictability Beats Absolute Savings

Organizations using FinOps frameworks with a predictability focus are 2.5 times more likely to meet ROI expectations. The real value isn’t saving 30%—it’s gaining the ability to forecast within 5% variance month-to-month. Yet only 26% of CFOs achieve that precision, according to Flexera.

Industry benchmarks reveal wide variance even within sectors. Media and streaming companies spend $750 per employee per month on average, but the range spans $300 to $2,000, roughly a 6.7x difference. E-commerce companies average $210 per employee, with a range of $80 to $500, a 6.25x spread. Fintech companies show a $620 average with a $250 to $1,200 range, a 4.8x spread. This variance isn’t just about waste; it reflects wildly different cost predictability across organizations.

For example, an energy operator reduced Azure costs by 30% through managed cloud services, according to SoftSysHosting’s 2026 case study. But the real win wasn’t the savings—it was improved forecast accuracy and governance that enabled leadership to trust cloud ROI justifications. Budget forecasting went from difficult to reliable, giving leadership a clear view of where money was spent and which workloads delivered business value.

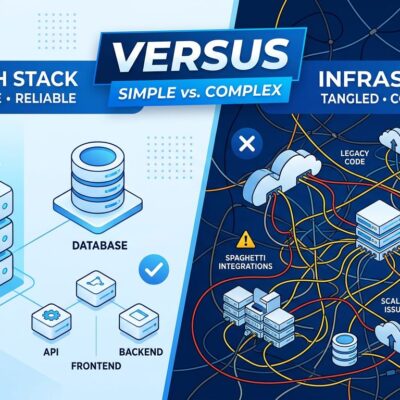

The demand for predictability is driving a surprising trend: dedicated servers are re-emerging not as a legacy option, but as a financial control mechanism. For workloads with sustained utilization of roughly 40-60% or more, dedicated infrastructure offers fixed pricing and cost predictability that cloud can’t match. Dedicated servers run $60 to $700 per month with no surprise scaling events or hidden egress charges. If your monthly cloud spend has crept past $5,000 and you’re running stable, predictable workloads, you’re likely overpaying.
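
The utilization threshold can be sanity-checked with a rough break-even calculation. The sketch below assumes a simplified model in which cloud capacity is billed only for the hours it runs and the dedicated server is a flat monthly fee; the $300 dedicated price sits inside the range quoted above, while the $0.80 hourly cloud rate is a hypothetical figure. Egress, storage, and staffing costs are ignored.

```python
HOURS_PER_MONTH = 730.0  # average hours in a month; an assumption for this sketch


def break_even_utilization(dedicated_monthly: float, cloud_hourly: float) -> float:
    """Fraction of the month a cloud instance must run before it costs as much as dedicated."""
    if cloud_hourly <= 0:
        raise ValueError("cloud_hourly must be positive")
    return dedicated_monthly / (cloud_hourly * HOURS_PER_MONTH)


def cheaper_option(utilization: float, dedicated_monthly: float, cloud_hourly: float) -> str:
    """Return 'dedicated' or 'cloud' for a workload running `utilization` of the month."""
    cloud_monthly = cloud_hourly * HOURS_PER_MONTH * utilization
    return "dedicated" if dedicated_monthly < cloud_monthly else "cloud"


# Hypothetical prices: $300/month dedicated vs. $0.80/hour comparable cloud instance.
threshold = break_even_utilization(300.0, 0.80)
print(f"break-even utilization: {threshold:.1%}")
print("70% utilized ->", cheaper_option(0.70, 300.0, 0.80))
print("30% utilized ->", cheaper_option(0.30, 300.0, 0.80))
```

With these assumed prices the break-even lands at about 51%, inside the 40-60% band. Real comparisons shift with reserved-instance discounts and operational overhead, so treat the output as a starting point for the conversation rather than a verdict.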

Hybrid cloud adoption is expected to reach 90% by 2027, according to Database Trends’ 2026 predictions. The pattern is clear: dedicated infrastructure for the predictable baseline, cloud for spikes and flexibility.

What Engineering Leaders Should Do

First, shift KPIs. The old metric was total monthly spend. The new metric is month-to-month variance percentage. Goal: less than 5% variance for predictable planning.
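
As a rough sketch of how that KPI could be tracked, the snippet below computes month-to-month variance as the absolute percent change from the previous bill and counts the months that miss the 5% target. That definition is an assumption, since teams also measure variance against forecast rather than against the prior month, and both bill series are made-up numbers echoing the volatile $50,000 versus stable $60,000 comparison.

```python
def month_over_month_variance(bills: list[float]) -> list[float]:
    """Absolute percent change of each bill relative to the previous month's bill."""
    return [abs(curr - prev) / prev * 100.0 for prev, curr in zip(bills, bills[1:])]


def months_over_target(bills: list[float], target_pct: float = 5.0) -> int:
    """Number of month-to-month transitions whose variance exceeds the target."""
    return sum(1 for v in month_over_month_variance(bills) if v > target_pct)


# Hypothetical monthly bills in dollars.
volatile = [50_000, 38_000, 61_000, 47_000]
stable = [60_000, 61_000, 59_500, 60_500]

for name, bills in (("volatile", volatile), ("stable", stable)):
    worst = max(month_over_month_variance(bills))
    print(f"{name}: worst swing {worst:.1f}%, months over target: {months_over_target(bills)}")
```

The volatile series averages less per month yet misses the target on every transition, while the more expensive stable series never does. That is the trade the new KPI is meant to surface.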

Second, attack GPU waste. With 5% utilization and 62% year-over-year growth, GPUs are the highest-impact optimization target. Switch training jobs to spot or preemptible GPU nodes for 60-70% savings, according to LeanOps’ 2026 guide. Apply GPU time-slicing for inference workloads to cut per-inference costs by 50-75%. Right-size GPU selection instead of defaulting to A100s or H100s.

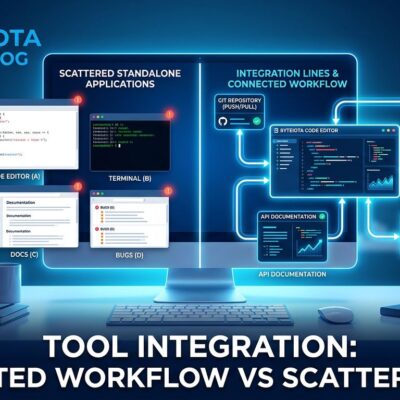

Third, fix visibility before optimization. CloudZero’s data shows 70% of companies can’t articulate exactly where their cloud budget goes, and only 43% track unit-level costs per product, customer, or feature. Fifty-nine percent use three or more tools, fragmenting visibility. Fix that first.
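
Unit-level cost tracking usually starts with grouping billing line items by a tag. The sketch below assumes a simplified line-item shape (`service`, `cost`, `tags`) and a `product` tag; both are hypothetical and do not match any specific provider's export format, and the order count is invented for the example.

```python
from collections import defaultdict


def cost_by_tag(line_items: list[dict], tag: str) -> dict[str, float]:
    """Sum cost per value of `tag`; items missing the tag land in 'untagged'."""
    totals: dict[str, float] = defaultdict(float)
    for item in line_items:
        key = item.get("tags", {}).get(tag, "untagged")
        totals[key] += item["cost"]
    return dict(totals)


def unit_cost(total_cost: float, units: int) -> float:
    """Cost per business unit (order, customer, request, and so on)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


# Hypothetical billing export for one month.
line_items = [
    {"service": "compute", "cost": 1200.0, "tags": {"product": "checkout"}},
    {"service": "storage", "cost": 300.0, "tags": {"product": "checkout"}},
    {"service": "compute", "cost": 800.0, "tags": {"product": "search"}},
    {"service": "data-transfer", "cost": 200.0, "tags": {}},
]

by_product = cost_by_tag(line_items, "product")
untagged_share = by_product.get("untagged", 0.0) / sum(by_product.values())
print(by_product)
print(f"untagged share of spend: {untagged_share:.0%}")
print(f"checkout cost per order: ${unit_cost(by_product['checkout'], 30_000):.3f}")
```

The untagged share is worth watching on its own: it is a direct measure of how much of the budget nobody can currently explain.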

Fourth, focus on implementation, not dashboards. The industry implementation rate on FinOps recommendations is about 5%. You need process and automation, not just reports. Teams conducting monthly waste reviews achieve advanced-maturity results faster than those relying on dashboards alone.

Finally, consider hybrid strategies. Ninety percent hybrid adoption by 2027 isn’t hype; it’s organizations learning that cloud isn’t always the answer. For sustained workloads, particularly AI and high-performance computing, dedicated infrastructure often provides lower total cost of ownership and, critically, predictable monthly bills that finance teams can trust.