AI coding tools promise to understand your entire codebase, but they hit a wall fast. Loading full directories into Claude Code or Cursor burns tokens like a gas guzzler burns fuel, and keyword search misses the semantically related code you actually need. Enter claude-context, an MCP server that just exploded to #2 on GitHub trending with 1,011 stars gained today. It makes your entire codebase searchable context using hybrid semantic search, cutting token usage by 40% while finding code your AI agent would otherwise miss.

What claude-context Actually Does

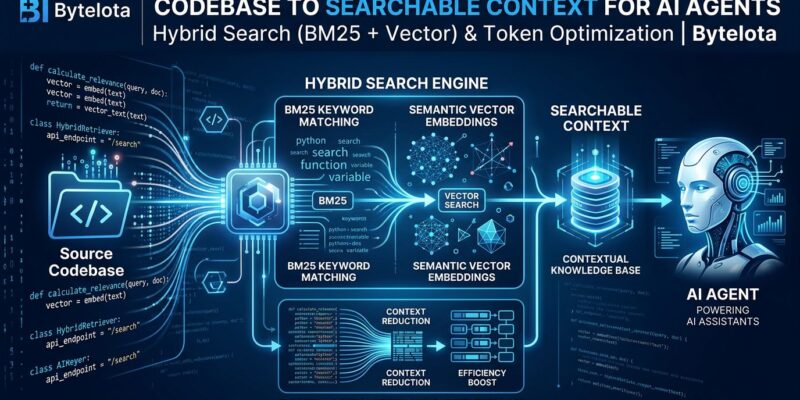

Claude-context is an MCP (Model Context Protocol) server that turns your repository into searchable, semantic context for AI coding agents. Created by Zilliz Tech—the team behind Milvus—it uses hybrid search combining BM25 keyword matching with vector embeddings to find exactly the code your AI needs.

The magic is in what it doesn’t do: it doesn’t dump entire folders into context. Instead, it indexes your codebase once, then retrieves only relevant snippets. That’s how it achieves 40% token reduction.
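To make the "index once, retrieve snippets" idea concrete, here is a minimal sketch of the chunking half of indexing, assuming fixed-size windows of lines with some overlap. It is an illustration only: this article does not describe how claude-context actually splits files, and `Chunk`, `chunk_lines`, and the default sizes are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    path: str
    start_line: int  # 1-indexed, inclusive
    end_line: int    # 1-indexed, inclusive
    text: str


def chunk_lines(path: str, source: str, size: int = 40, overlap: int = 10) -> list[Chunk]:
    """Split source text into overlapping fixed-size windows of lines."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    lines = source.splitlines()
    chunks = []
    step = size - overlap  # how far each window advances
    for start in range(0, len(lines), step):
        window = lines[start:start + size]
        chunks.append(Chunk(path, start + 1, start + len(window), "\n".join(window)))
        if start + size >= len(lines):
            break  # this window already reaches the end of the file
    return chunks
```

Each chunk keeps its file path and line range, so a retrieved snippet can be shown to the agent with enough location information to open the surrounding code if needed.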

Built on MCP—the open standard rapidly becoming the USB-C of AI tool integration—it works with any MCP-compatible assistant: Claude Code, Cursor, Cline, Windsurf, and others.

Why This Matters

Token costs add up. Using Claude Code daily on a 50,000-line codebase easily hits 100,000 tokens per session just loading context. That wastes your AI’s limited window on boilerplate you don’t need.

Keyword search betrays you. Search for “authentication” and miss validateUser. Search for “database queries” and skip ORM logic in models.ts. Semantic search fixes this by understanding meaning, not matching strings.
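A toy illustration of that gap, under loud assumptions: the three-dimensional vectors below are hand-picked stand-ins for real embeddings (dimensions loosely meaning auth, database, UI), and `renderNavbar` and `runMigration` are hypothetical names added for contrast. A real system would get its vectors from an embedding model.

```python
import math

# Toy "embeddings": hand-picked vectors over (auth, database, ui) concepts.
SNIPPETS = {
    "validateUser": [0.9, 0.1, 0.0],
    "renderNavbar": [0.0, 0.0, 1.0],
    "runMigration": [0.1, 0.9, 0.0],
}
QUERY_TEXT = "authentication"
QUERY_VECTOR = [1.0, 0.0, 0.0]  # assumed embedding of the query


def keyword_hits(query: str, names) -> list[str]:
    """Case-insensitive substring match, the way a plain keyword search works."""
    return [name for name in names if query.lower() in name.lower()]


def cosine(a, b) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def semantic_best(query_vec, snippets) -> str:
    """Return the snippet whose vector is closest to the query vector."""
    return max(snippets, key=lambda name: cosine(query_vec, snippets[name]))


print(keyword_hits(QUERY_TEXT, SNIPPETS))     # no name contains "authentication"
print(semantic_best(QUERY_VECTOR, SNIPPETS))  # closest vector belongs to validateUser
```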

MCP hit critical mass in 2026—OpenAI, Vercel, and major dev tools adopted it. Claude-context is riding that wave: 8,600 stars and climbing, with a production-ready MIT-licensed codebase.

Prerequisites

Claude Code or any MCP-compatible tool. Cursor, Cline, Windsurf, and others work fine.

Node.js 20.x through 23.x. Node.js 24.0.0+ breaks compatibility. Downgrade if needed.

OpenAI API key. For generating embeddings (default: text-embedding-3-small).

Zilliz Cloud account. Free tier works for personal projects. Sign up, create a cluster, grab your endpoint and API key.

Installation

One command for Claude Code:

    claude mcp add claude-context \
      -e OPENAI_API_KEY=sk-your-openai-api-key \
      -e MILVUS_ADDRESS=your-zilliz-cloud-public-endpoint \
      -e MILVUS_TOKEN=your-zilliz-cloud-api-key \
      -- npx @zilliz/claude-context-mcp@latest

Restart Claude Code. You now have four MCP tools: index_codebase, search_code, get_indexing_status, and clear_index.

Common issues: the OpenAI API key must start with sk-, the Zilliz endpoint must be the full URL including https://, and Node.js must be at least 20.0.0 and below 24.0.0.
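Those checks are easy to automate before running the install command. The following is a minimal sketch, not part of claude-context: `check_setup` is a hypothetical helper, and it only encodes the rules stated in this article.

```python
import re


def check_setup(openai_key: str, milvus_address: str, node_version: str) -> list[str]:
    """Return a list of human-readable problems; an empty list means all checks pass."""
    problems = []
    if not openai_key.startswith("sk-"):
        problems.append("OPENAI_API_KEY should start with 'sk-'")
    if not milvus_address.startswith("https://"):
        problems.append("MILVUS_ADDRESS should be a full URL starting with 'https://'")
    # Accept strings such as "v22.11.0" (the output of `node --version`) or "22.11.0".
    match = re.match(r"v?(\d+)", node_version.strip())
    major = int(match.group(1)) if match else None
    if major is None or not 20 <= major < 24:
        problems.append("Node.js should be >= 20 and < 24")
    return problems


# Placeholder values for illustration only.
print(check_setup("sk-your-openai-api-key", "https://your-endpoint.example", "v22.11.0"))
```

Pass in the values you plan to use, plus the output of `node --version`, and fix anything the function reports before indexing.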

How to Use It

Step 1: Index your codebase. Tell Claude Code: “Index my current project using claude-context.” It chunks code, generates embeddings, stores them in Zilliz Cloud. For a 50,000-line repo, this takes a few minutes once.

Step 2: Query semantically. Ask: “Find all functions that handle user authentication.” Claude Code runs hybrid search and returns relevant snippets.

Step 3: Watch token usage drop. Instead of loading entire directories (50,000+ tokens), you get targeted snippets (20,000 tokens). In this example that is a 60% reduction, above the 40% headline figure, plus better context quality.
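The percentage is easy to verify. A minimal sketch, using the token counts from Step 3 as inputs; the function name `token_reduction` is hypothetical, and the 30,000-token case is included only to show what a 40% reduction would look like from the same starting point.

```python
def token_reduction(before: int, after: int) -> float:
    """Percentage of tokens saved when context shrinks from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) * 100 / before


print(token_reduction(50_000, 20_000))  # 60.0
print(token_reduction(50_000, 30_000))  # 40.0
```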

Example: Ask “Where do we validate API keys?” Traditional search finds validateApiKey() in middleware/auth.ts. Claude-context also finds checkAuthToken() in utils/security.js and JWK parsing in lib/jwt.ts—semantically related code you didn’t know to look for.

Alternatives Comparison

GitHub MCP: Free, keyword-only. No semantic search.

WarpGrep: Fast (8 parallel searches, <6 seconds), but no semantic understanding.

Self-hosted (OpenGrok, Zoekt): Full control, no cloud deps, but no MCP or semantic search.

Claude-context wins on hybrid search: BM25 keyword matching plus vector embeddings. You’re not just using fewer tokens—you’re using better tokens.
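One common way to combine a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The sketch below shows that technique in isolation; this article does not say how claude-context merges its BM25 and embedding results, so treat RRF here as an assumption. The two input rankings are made up, and `parseConfig` and `parseJwk` are hypothetical names.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists; each item scores 1 / (k + rank) per list it appears in."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] = scores.get(item, 0.0) + 1.0 / (k + rank)
    # Best fused score first; ties are broken by name so the output is deterministic.
    return sorted(scores, key=lambda item: (-scores[item], item))


# Made-up rankings for the query "Where do we validate API keys?"
bm25_ranking = ["validateApiKey", "parseConfig", "checkAuthToken"]
vector_ranking = ["checkAuthToken", "validateApiKey", "parseJwk"]

fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(fused)
```

Items that appear near the top of both lists rise to the top of the fused list, while an item found by only one method still survives further down, which is the behavior hybrid search is after.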

When to Use It

Use if: Codebase is 10k+ lines, you use AI tools daily, token costs matter, or you work with microservices.

Skip if: Project is <1k lines, you have no budget for OpenAI embedding calls, or grep does the job.

The real ROI isn’t token savings—it’s code discovery quality. Semantic search surfaces patterns you wouldn’t find manually.

The MCP Advantage

MCP is the standard that’s winning. Anthropic released it in late 2024, and by 2026 it has been adopted by OpenAI, Vercel, and most major AI coding tools.

Building on MCP means claude-context works with any tool that speaks the protocol. Switch from Claude Code to Cursor? Your indexed codebase comes with you. That’s the USB-C analogy in action.
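Many MCP clients other than Claude Code are configured through a JSON file with a top-level `mcpServers` key rather than a CLI command. The sketch below generates such an entry from the same three environment variables used in the installation command. The exact file location and accepted fields vary by client, so treat the layout as an assumption and check your tool's documentation; `mcp_server_config` is a hypothetical helper.

```python
import json


def mcp_server_config(openai_key: str, milvus_address: str, milvus_token: str) -> dict:
    """Build an `mcpServers` entry mirroring the `claude mcp add` command."""
    return {
        "mcpServers": {
            "claude-context": {
                "command": "npx",
                "args": ["@zilliz/claude-context-mcp@latest"],
                "env": {
                    "OPENAI_API_KEY": openai_key,
                    "MILVUS_ADDRESS": milvus_address,
                    "MILVUS_TOKEN": milvus_token,
                },
            }
        }
    }


# Placeholder values, matching the ones in the installation command.
config = mcp_server_config(
    "sk-your-openai-api-key",
    "your-zilliz-cloud-public-endpoint",
    "your-zilliz-cloud-api-key",
)
print(json.dumps(config, indent=2))
```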

The ecosystem now has 100+ MCP servers. Claude-context is popular because it solves a universal problem: making large codebases tractable for AI agents.

Try It

Setup takes one command and three environment variables. Index once, benefits compound forever.

Check the official GitHub repo for docs. Sign up for Zilliz Cloud’s free tier to get your endpoint. For more on MCP, Anthropic offers a free course.

The 40% token reduction is nice. Semantic code discovery is nicer. But the real win? Making AI coding tools actually understand your codebase. That’s what claude-context delivers.