Google released Gemma 4 on April 2, 2026, under the Apache 2.0 license. The model family (2B, 4B, 26B MoE, 31B dense) runs frontier-class AI on phones, Raspberry Pi boards, and consumer GPUs with 5-34GB of RAM. NVIDIA co-optimized the models for near-zero latency and completely offline operation. The 31B variant ranks #3 globally on the Arena AI leaderboard, scoring 84.3% on GPQA Diamond (roughly double Gemma 3’s 42.4%) and 80.0% on LiveCodeBench for code generation. This removes the “hardware tax”: developers no longer need expensive cloud infrastructure to run powerful multimodal models.

Apache 2.0 License Shifts the Economics

Gemma 4’s Apache 2.0 license is the biggest differentiator: full commercial freedom, no MAU caps, no acceptable-use restrictions. Previous Gemma versions used a custom Google license that left room for usage restrictions. Apache 2.0 removes that uncertainty entirely.

“Apache 2.0 license means no strings attached for modification and deployment. This changes the commercial equation,” notes Sam Witteveen, Google Developer Expert. Nathan Lambert, AI researcher, emphasizes that “success hinges on integration friction rather than benchmark margins. Gemma 4 is strong enough, small enough, with the right license.”

The cost comparison is stark: zero per-token fees after a one-time hardware investment versus $15 per 1M tokens for Claude 3.5. For organizations processing high volumes of standard tasks (coding assistance, document processing, structured data extraction), the ROI crossover happens around 67-133M tokens, which at that rate corresponds to a hardware outlay of roughly $1,000 to $2,000. Beyond that threshold, local inference pays for itself.
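The break-even arithmetic behind that range can be sketched in a few lines. This is a minimal illustration, not a full cost model: the $15 per 1M tokens price is the article's figure, while the $1,000 and $2,000 hardware budgets are assumptions chosen because they reproduce the quoted 67-133M range. The function name `break_even_tokens` is hypothetical, and electricity, maintenance, and engineering time are ignored.

```python
def break_even_tokens(hardware_cost_usd: float, api_price_per_million_usd: float) -> float:
    """Return the token count at which cumulative API spend equals a one-time hardware cost."""
    if api_price_per_million_usd <= 0:
        raise ValueError("API price must be positive")
    return hardware_cost_usd / api_price_per_million_usd * 1_000_000


API_PRICE = 15.0  # USD per 1M tokens, the figure quoted in the article

# Assumed hardware budgets (not stated in the article).
for hardware_cost in (1_000, 2_000):
    tokens = break_even_tokens(hardware_cost, API_PRICE)
    print(f"${hardware_cost:,} hardware -> break-even at {tokens / 1e6:.0f}M tokens")
# prints:
# $1,000 hardware -> break-even at 67M tokens
# $2,000 hardware -> break-even at 133M tokens
```

Plugging in a real hardware quote and a blended input/output token price would give a more realistic threshold for a specific workload.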

Frontier AI Runs on Consumer Hardware

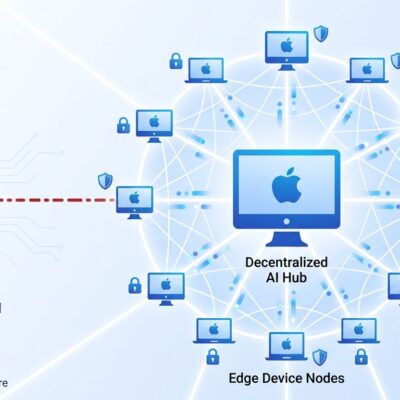

Gemma 4 eliminates the hardware tax that previously locked powerful AI behind expensive cloud infrastructure. The smallest variants, E2B and E4B, operate on phones and Raspberry Pi boards with as little as 5GB of RAM (4-bit quantization). The 31B model delivers GPT-4-class reasoning on consumer GPUs with 20-34GB of RAM. NVIDIA’s NVFP4 quantization maintains near-identical accuracy at 4-bit precision while increasing performance per watt.
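A rough way to sanity-check these RAM figures is to estimate the memory taken by the weights alone: parameter count times bits per weight, divided by eight. The sketch below does that for the 31B model. It is a back-of-the-envelope estimate under the assumption that every weight is stored at the same precision. It deliberately excludes the KV cache, activations, and runtime overhead, which is presumably why real-world requirements sit above the weights-only number. The function name is hypothetical.

```python
def weight_memory_gb(num_params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GB (10^9 bytes)."""
    total_bytes = num_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Weights-only footprint of a 31B-parameter model at common precisions.
for bits in (4, 8, 16):
    print(f"31B at {bits}-bit: ~{weight_memory_gb(31, bits):.1f} GB of weights")
# prints:
# 31B at 4-bit: ~15.5 GB of weights
# 31B at 8-bit: ~31.0 GB of weights
# 31B at 16-bit: ~62.0 GB of weights
```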

Reported performance metrics support the edge deployment claims. Raspberry Pi 5 (CPU only) achieves 133 prefill tokens/s and 7.6 decode tokens/s. Qualcomm Dragonwing IQ8 with NPU acceleration hits 3,700 prefill tokens/s and 31 decode tokens/s. Jetson Orin Nano is described as delivering near-zero latency while running completely offline.
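To translate those throughput numbers into something a user would feel, a simple estimate is prompt tokens divided by the prefill rate plus output tokens divided by the decode rate. The sketch below applies that to the two devices with published figures. The 500-token prompt and 200-token reply are hypothetical workload sizes, the class and function names are illustrative, and the article does not say which Gemma 4 variant the measurements refer to, so treat the results as order-of-magnitude only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceThroughput:
    name: str
    prefill_tps: float  # prompt tokens processed per second
    decode_tps: float   # output tokens generated per second


def estimated_seconds(device: DeviceThroughput, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate end-to-end generation time as prefill time plus decode time."""
    return prompt_tokens / device.prefill_tps + output_tokens / device.decode_tps


# Throughput figures as quoted in the article.
DEVICES = [
    DeviceThroughput("Raspberry Pi 5 (CPU)", prefill_tps=133, decode_tps=7.6),
    DeviceThroughput("Qualcomm Dragonwing IQ8 (NPU)", prefill_tps=3700, decode_tps=31),
]

# Hypothetical request size, chosen only for illustration.
PROMPT_TOKENS, OUTPUT_TOKENS = 500, 200

for device in DEVICES:
    seconds = estimated_seconds(device, PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{device.name}: ~{seconds:.1f} s for {PROMPT_TOKENS} in / {OUTPUT_TOKENS} out")
```

On both devices the decode rate dominates the total, so short replies matter more for perceived speed than short prompts.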

This hardware flexibility unlocks use cases that weren’t viable before. Privacy-first applications in healthcare and finance can run powerful models locally without data leaving the network. Offline-capable mobile apps for travel or remote work no longer depend on cloud connectivity. Industrial IoT deployments gain on-device intelligence for robotics and automation without latency bottlenecks.

The 70% Coverage Rule: Hybrid Wins

Developer consensus is pragmatic: Gemma 4 covers “70% of daily coding work at acceptable quality.” Benchmarks confirm strong but not state-of-the-art performance: 87.1% on MMLU, 82.7% on HumanEval, and 80.0% on LiveCodeBench. Claude 3.5 Sonnet leads on the shared benchmarks, with 89.5% on MMLU, 94.3% on HumanEval, and 73.5% on MATH versus Gemma 4’s 58.7%.

The performance gap is small enough to ignore for routine tasks but large enough to matter for deep architectural reasoning or complex multi-file refactoring. One developer reported that “Gemma 4 27B produced working code on the second attempt in my tests.” Another summarized the experience as “genuinely impressive in places, mildly annoying in others.”

The smart architecture isn’t edge-only or cloud-only—it’s hybrid. Run Gemma 4 locally for high-volume standard tasks: code completions, API documentation, test generation, structured data extraction. Switch to Claude or GPT for heavy lifting: complex system design, performance optimization, debugging elusive bugs. This balances cost efficiency with capability where it matters.
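The hybrid split described above could be expressed as a small routing function. This is a sketch under stated assumptions rather than a recommended design: the task labels, the `choose_backend` name, and the rule that sensitive data always stays local are all illustrative choices drawn from the article's themes, not from any Gemma or cloud SDK. Actual model calls are left out.

```python
from enum import Enum


class Backend(Enum):
    LOCAL = "local"   # e.g. a locally hosted Gemma 4 model
    CLOUD = "cloud"   # e.g. a hosted Claude or GPT API


# Hypothetical task labels mirroring the categories named in the article.
CLOUD_TASKS = {"system_design", "performance_optimization", "hard_debugging"}
LOCAL_TASKS = {"code_completion", "api_documentation", "test_generation", "structured_extraction"}


def choose_backend(task_type: str, contains_sensitive_data: bool = False) -> Backend:
    """Pick a backend for a task; sensitive data never leaves the local machine."""
    if contains_sensitive_data:
        return Backend.LOCAL
    if task_type in CLOUD_TASKS:
        return Backend.CLOUD
    # Assumption: unknown or routine tasks default to the cheaper local model.
    return Backend.LOCAL


print(choose_backend("code_completion"))                             # Backend.LOCAL
print(choose_backend("system_design"))                               # Backend.CLOUD
print(choose_backend("system_design", contains_sensitive_data=True)) # Backend.LOCAL
```

Defaulting unknown tasks to the local model favors cost; a team that cares more about quality on unfamiliar work might flip that default to the cloud backend.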

Real Issues: Early-Stage Maturity

Transparency matters. Community testing within 24 hours of release revealed genuine technical issues. The non-quantized 31B model can fall into infinite generation loops and fails to read text from images. Mac users report hard crashes when loading the 31B or 26B models in LM Studio. The model can be jailbroken with basic system prompts. Fine-tuning requires patches for the new Gemma4ClippableLinear layer type and the mm_token_type_ids field.

These aren’t show-stopping failures: quantized models work fine, patches emerged within hours, and workarounds exist for most issues. But this is a 17-day-old release with early-adopter friction, not the production-hardened stability of Claude or GPT. Developers need realistic expectations.

The vendor-independent assessment from the community cuts through marketing hype: Apache 2.0 changes the commercial calculus more than any benchmark number. Integration friction matters more than peak performance metrics. For cost-sensitive deployments processing high volumes of standard tasks, Gemma 4’s “strong enough, small enough, right license” equation makes sense. For mission-critical applications where 2-3% accuracy gaps compound, stick with cloud APIs.

Key Takeaways

- Apache 2.0 license eliminates commercial restrictions (no MAU caps, full modification rights)—the biggest differentiator over benchmark numbers

- Frontier AI runs on 5-34GB RAM (phones to consumer GPUs)—hardware tax eliminated, cloud dependency optional

- 70% daily work coverage makes hybrid architectures (local for volume, cloud for complexity) the practical standard

- Real technical issues exist (infinite loops, crashes, jailbreaking)—17-day-old release lacks production hardening

- Strategic shift underway: open models with commercial-friendly licenses challenge proprietary APIs on cost and privacy grounds

The verdict: Gemma 4 isn’t replacing Claude or GPT—it’s creating a new deployment tier where cost economics and privacy requirements outweigh marginal performance differences. For organizations balancing budget constraints with capability needs, that’s the equation that matters.