Within 48 hours of Claude Opus 4.7’s release, Hacker News erupted. Two threads totaling 920+ points and 700+ comments documented what Anthropic hadn’t: the new model’s tokenizer produces up to 35% more tokens for the same input text, with reports of as much as 45% for code-heavy prompts. At 35%, a production app that cost $500/day yesterday costs $675/day today – a silent price increase disguised as a technical improvement. Developers discovered this through their bills, not through Anthropic’s changelog.

This is what happens when pricing transparency becomes optional.

The Stealth Increase Nobody Announced

Opus 4.7 launched on April 16, 2026, with a headline promise: “Pricing unchanged at $5/$25 per million tokens.” What Anthropic didn’t highlight was the new tokenizer, which generates 1.0x to 1.35x as many tokens for identical input. Code-heavy prompts get hit hardest, with reports of up to 45% inflation. At the 1.35x figure, the same API call that consumed 1,000 tokens under Opus 4.6 now burns through 1,350 tokens under 4.7.

The math is brutal. At 35% inflation, a production workload consuming 100 million input tokens daily goes from $500/day to $675/day overnight. No usage increase, no new features – just 35% more expensive for the exact same work. Enterprises running $50,000/month budgets face overruns of up to $17,500 a month, or $210,000 annually. All because a tokenizer changed and nobody sent a memo.
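The arithmetic behind those figures can be checked with a few lines of Python. This is a minimal sketch: the function name `cost_usd` is made up for illustration, and it assumes the $5 per million input-token rate and a flat 35% inflation applied uniformly, which real workloads will not match exactly.

```python
def cost_usd(tokens: float, price_per_million: float, inflation: float = 0.0) -> float:
    """Cost of `tokens` baseline tokens after the count grows by `inflation`.

    inflation=0.35 means the new tokenizer emits 35% more tokens for the same text.
    """
    billed_tokens = tokens * (1.0 + inflation)
    return billed_tokens / 1_000_000 * price_per_million


# Figures from the article: 100M input tokens/day at $5 per million tokens.
daily_before = cost_usd(100_000_000, 5.00)
daily_after = cost_usd(100_000_000, 5.00, inflation=0.35)

# A $50,000/month budget absorbing the same 35% inflation (assumed uniform).
monthly_overrun = 50_000 * 0.35
annual_overrun = monthly_overrun * 12

print(f"Daily cost before: ${daily_before:,.2f}")
print(f"Daily cost after:  ${daily_after:,.2f}")
print(f"Monthly overrun:   ${monthly_overrun:,.2f}")
print(f"Annual overrun:    ${annual_overrun:,.2f}")
```

Swapping in your own daily volume and a measured inflation ratio gives a rough upper bound on the exposure; output tokens, billed at the higher $25 rate, would need a second call with that price.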

The detailed technical analysis from Finout.io confirms what developers measured in their dashboards: this isn’t a rounding error. It’s a structural cost increase deployed without transparency.

The Trust Tax: Pricing Uncertainty as Hidden Cost

Here’s the part that matters more than one model update: developers now pay a “trust tax” on top of actual tokens. Every vendor evaluation must now factor in the risk of surprise cost changes. Can you trust your $50K/month forecast when token counts can silently inflate by 35% between releases?

This isn’t unique to Anthropic. Trend Micro’s research on tokenizer drift across the industry documents a troubling pattern: “LLM providers advertise low headline rates, then optimize for revenue through usage pattern manipulation that’s difficult for customers to predict or control.” CPU usage per request can also spike 8-40% when tokenizers change. The trap is silent until your cost dashboards light up red.

The economics are simple: vendors control tokenization, billing, and disclosure. As a result, developers are “charged based on reported token usage, but have no means to confirm that these metrics reflect real or necessary work.” You’re paying for tokens you can’t independently verify, counted by rules that change without notice.

That’s the trust tax. It’s not paranoia when your February budget gets shredded by an April tokenizer update.

When “Better Models” Means Higher Bills

Defenders argue that improved models justify higher costs. Fair enough – better quality often costs more. However, there’s a difference between “we’re raising prices because performance improved” and “we changed how we count tokens, hope you didn’t notice.”

The Hacker News discussion on Claude 4.7’s tokenizer (674 points, 470 comments) captures developer frustration: “Opus 4.7 feels like the pre-nerf version of 4.6 dressed up as a new model, with a tokenizer change functioning as a stealth price increase.” Within 48 hours of launch, sentiment on Reddit, Discord, and HN turned negative. Not because the model got worse, but because the cost surprise eroded trust.

If quality improvements demand price increases, announce them. Give developers migration time. Offer opt-in endpoints so production apps aren’t forced onto new tokenizers mid-deployment. The technical change might be legitimate; the way it was rolled out was not.

The Exit Strategy: Self-Hosted Models Gain Leverage

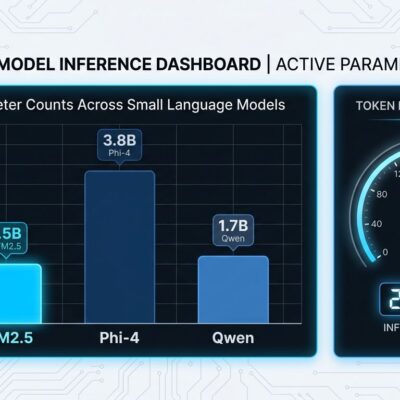

Token inflation incidents are accelerating exactly what LLM vendors should fear: migration to self-hosted open source models. The quality gap that once justified proprietary pricing is closing fast. Top open source models in BenchLM.ai’s rankings, such as GLM-5 (scoring 85/100), Qwen3.5 (81/100), and DeepSeek-V3.2, now rival proprietary alternatives on real-world benchmarks.

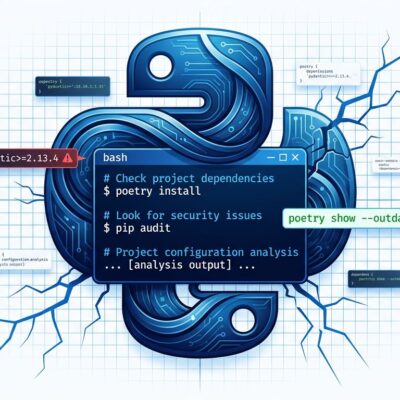

The cost model flips entirely. While proprietary APIs charge per token with volatile tokenization, self-hosted infrastructure costs $500-2,000/month flat, regardless of token counts. For high-volume workloads processing millions of tokens daily, PremAI’s cost analysis finds that self-hosting becomes more economical once the initial setup is done. More importantly: you pay for capacity, not per request. Zero vendor lock-in, zero surprise tokenizer changes.
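A rough break-even estimate makes the comparison concrete. The sketch below is illustrative only: `breakeven_tokens_per_month` is a hypothetical helper, it uses the $5 per million input rate and the $500-2,000/month range quoted above, and it ignores setup cost, engineering time, output-token pricing, and any quality difference between models.

```python
def breakeven_tokens_per_month(
    flat_monthly_usd: float,
    price_per_million: float,
    inflation: float = 0.0,
) -> float:
    """Baseline tokens per month at which flat-rate hosting matches API spend.

    `inflation` raises the effective API price per baseline token, because the
    same text is billed as (1 + inflation) times as many tokens.
    """
    effective_price = price_per_million * (1.0 + inflation)
    return flat_monthly_usd / effective_price * 1_000_000


for flat in (500, 2_000):
    before = breakeven_tokens_per_month(flat, 5.00)
    after = breakeven_tokens_per_month(flat, 5.00, inflation=0.35)
    print(
        f"${flat:,}/month flat: break-even at {before / 1e6:,.0f}M tokens/month, "
        f"{after / 1e6:,.0f}M after 35% inflation"
    )
```

Under these assumptions the break-even point sits at a few million tokens per day, and token inflation lowers it further, since each unit of real work costs more on the API side while the flat rate stays put.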

Tooling maturity (Ollama, vLLM, llama.cpp) has made self-hosting accessible to teams without PhD-level infrastructure expertise. Middle-ground options like Fireworks AI offer hosted open source with GPU-based pricing – predictable per-second costs instead of volatile per-token billing.

The credible exit threat matters even if you stay with proprietary APIs. Vendors who know developers can leave cheaply will think twice before deploying stealth price increases.

What Transparency Standards Look Like

The industry doesn’t need to accept opaque pricing as inevitable. Solutions exist:

Pricing locks for enterprise customers. Offer optional 6-12 month rate guarantees that freeze both per-token costs and tokenizer versions. Developers can forecast reliably while vendors get committed revenue. Everyone wins.

Opt-in tokenizer changes. Version your API endpoints: /v1/chat keeps the old tokenizer, /v2/chat uses the new one. Let developers migrate on their timeline, not yours. Default existing customers to stability while offering new customers improved tokenization with clear documentation.

Transparency mandates. Changelog every pricing-relevant change before launch. If a tokenizer update will inflate token counts by 10-35%, say so in the release notes with impact estimates. Bury it in migration docs instead and you’ll end up on Hacker News with 700 angry comments.

Independent auditing. Third-party verification of token counts, similar to how credit card processors audit payment systems. Developers shouldn’t have to trust vendor-controlled billing without validation.
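Until third-party audits exist, teams can at least track drift themselves by replaying a fixed set of prompts and comparing token counts against a recorded baseline. The sketch below assumes you supply your own `count_tokens` callable (for example, a wrapper around whatever usage figure your provider reports); `token_drift`, `flag_drift`, and the stand-in counter are hypothetical names used only for illustration, not any vendor’s API.

```python
from typing import Callable, Dict, List


def token_drift(
    prompts: Dict[str, str],
    baseline: Dict[str, int],
    count_tokens: Callable[[str], int],
) -> Dict[str, float]:
    """Ratio of current to baseline token count for each named prompt."""
    return {name: count_tokens(text) / baseline[name] for name, text in prompts.items()}


def flag_drift(ratios: Dict[str, float], threshold: float = 1.10) -> List[str]:
    """Names of prompts whose token count grew beyond `threshold` times baseline."""
    return sorted(name for name, ratio in ratios.items() if ratio > threshold)


# Stand-in counter for demonstration only: words plus a few punctuation marks.
# In practice this would return the token count your provider bills for.
def stand_in_counter(text: str) -> int:
    return len(text.split()) + sum(ch in "():" for ch in text)


prompts = {
    "prose": "the quick brown fox",
    "code": "for i in range(10): print(i)",
}
# Counts recorded under the previous model version (made-up numbers).
baseline = {"prose": 4, "code": 8}

ratios = token_drift(prompts, baseline, stand_in_counter)
for name in flag_drift(ratios):
    print(f"{name}: {ratios[name]:.2f}x baseline")
```

Running a check like this on every model upgrade will not verify the vendor’s billing, but it turns a surprise on the invoice into an alert on release day.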

The vendors who implement these standards will win enterprise deals. The ones who keep deploying surprise cost changes will lose developers to open source alternatives with zero trust tax.

Pricing Stability Beats Marginal Quality

Opus 4.7’s tokenizer change might be technically justified for improved model performance. The lack of transparency wasn’t. Developers building production applications care more about cost predictability than marginal quality improvements. A 5% quality boost doesn’t offset a 35% surprise cost increase.

The industry is at an inflection point. Pricing transparency is becoming a competitive advantage as open source narrows the quality gap. Vendors who offer stability – pricing locks, opt-in changes, clear communication – will retain trust. Conversely, those who treat tokenization as a silent profit lever will watch developers migrate to self-hosted models.

Trust isn’t optional in API pricing. It’s the product. And right now, the “trust tax” costs more than the tokens.