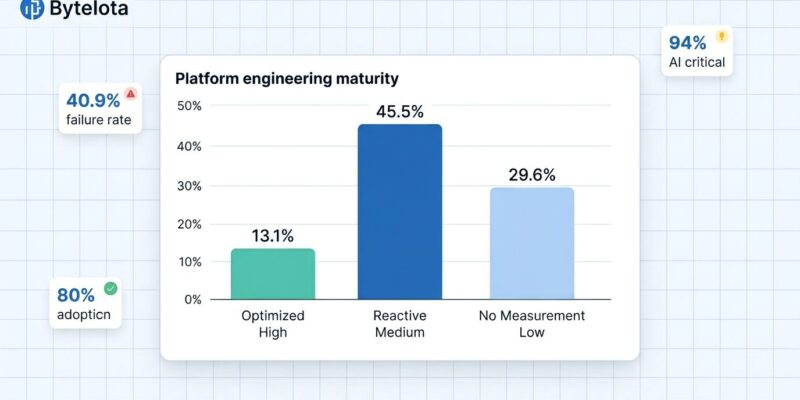

Platform engineering hit 80% adoption in 2026, making it standard infrastructure for software organizations. However, the CNCF State of Platform Engineering Report Volume 4 exposes a maturity crisis: 29.6% of platform teams don’t measure any success metrics, 45.5% run reactive teams rather than optimized ecosystems, and only 13.1% have achieved cross-functional maturity. In addition, 40.9% cannot demonstrate measurable value within twelve months of platform launch. Adoption doesn’t equal success: most organizations are building platform theater, not platform value.

The Measurement Crisis: 29.6% Building Blind

Nearly three out of ten platform teams don’t measure anything. No deployment frequency. No lead time. No MTTR. No developer satisfaction scores. They’re investing heavily in internal developer platforms without tracking whether anything improved. Among teams that do measure, 24.2% lack visibility into whether their metrics have actually improved over time. Measurement without trend analysis is just dashboard theater.

Organizations can’t improve what they don’t measure. The 29.6% cohort operating without metrics will struggle to prove ROI, secure continued investment, or identify the bottlenecks strangling their platforms. Currently, only 40.8% use DORA metrics, 31.0% track time-to-market, and 14.1% employ SPACE metrics. No single framework is used by a majority of teams, and even DORA, the most common, is absent from nearly 60% of them.
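As a rough illustration of how small a first measurement step can be, the sketch below computes three of the metrics named above (deployment frequency, lead time, and MTTR) from plain records. The `Deployment` and `Incident` types, the function names, and the sample timestamps are all hypothetical and made up for this example; a real platform would pull the same fields from its CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    committed_at: datetime  # when the change was committed
    deployed_at: datetime   # when it reached production


@dataclass
class Incident:
    started_at: datetime
    resolved_at: datetime


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def deployment_frequency(deploys: list[Deployment], window_days: int) -> float:
    """Average number of production deployments per day in the window."""
    return len(deploys) / window_days


def median_lead_time_hours(deploys: list[Deployment]) -> float:
    """Median commit-to-production time, in hours."""
    return median(_hours(d.deployed_at - d.committed_at) for d in deploys)


def mean_time_to_restore_hours(incidents: list[Incident]) -> float:
    """Mean incident duration (MTTR), in hours."""
    return sum(_hours(i.resolved_at - i.started_at) for i in incidents) / len(incidents)


# Made-up sample data covering one week.
t0 = datetime(2026, 1, 5, 9, 0)
deploys = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0 + timedelta(days=1), t0 + timedelta(days=1, hours=10)),
    Deployment(t0 + timedelta(days=3), t0 + timedelta(days=3, hours=6)),
]
incidents = [
    Incident(t0 + timedelta(days=2), t0 + timedelta(days=2, hours=2)),
    Incident(t0 + timedelta(days=4), t0 + timedelta(days=4, hours=4)),
]

print(f"deploys/day: {deployment_frequency(deploys, window_days=7):.2f}")
print(f"median lead time (h): {median_lead_time_hours(deploys):.1f}")
print(f"MTTR (h): {mean_time_to_restore_hours(incidents):.1f}")
```

Running the same functions over consecutive windows (this week versus last week, this quarter versus last) is one simple way to get the trend view that raw dashboards lack.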

The data shows progress: measurement blindness dropped from 45% in 2024 to 29.6% in 2025, a decline of 15.4 percentage points. If that pace continues linearly, the share would fall below 15% by the end of 2026. Even so, nearly three in ten teams today are pouring resources into platforms with no idea whether they’re delivering value. Measurement isn’t optional. It’s the foundation for everything else: justifying budgets, demonstrating wins, prioritizing features, and iterating based on evidence instead of gut feel.
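The projection in the paragraph above can be checked with a few lines of arithmetic. The sketch below is only an illustration: the two input percentages come from the text, while the function names and the choice of extrapolation methods are assumptions. It compares a linear extrapolation (the same absolute drop repeats) with a proportional one (the same relative drop repeats).

```python
def linear_projection(previous: float, current: float) -> float:
    """Next-period value if the same absolute change repeats."""
    return max(0.0, current + (current - previous))


def proportional_projection(previous: float, current: float) -> float:
    """Next-period value if the same relative change repeats."""
    return current * (current / previous)


share_2024 = 45.0  # % of teams measuring nothing, from the text
share_2025 = 29.6

linear_2026 = linear_projection(share_2024, share_2025)
proportional_2026 = proportional_projection(share_2024, share_2025)

print(f"linear: {linear_2026:.1f}%")              # linear: 14.2%
print(f"proportional: {proportional_2026:.1f}%")  # proportional: 19.5%
```

The "fewer than 15%" figure matches the linear assumption. Under a proportional decline the share would land closer to 19.5%, so the projection is sensitive to which model of improvement one assumes.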

Investment Reality: Sub-$1M Budgets Won’t Deliver Enterprise Platforms

Some 47.4% of platform teams operate on sub-$1M budgets while attempting to deliver enterprise-grade capabilities. This budget-capability mismatch sets teams up for failure. You can’t build a comprehensive platform (AI workload support, security compliance, multi-cloud orchestration, self-service provisioning, observability integration) on a shoestring budget. Leading organizations, by contrast, invest $5-10M for platforms that actually work. Median budgets are expected to double by 2026, but nearly half of teams remain chronically underfunded.

The investment gap explains the maturity chasm. Some 45.5% of teams are dedicated and budgeted but remain primarily reactive: firefighting developer tickets, patching infrastructure, and maintaining existing systems with no capacity to innovate or proactively improve the platform. In contrast, only 13.1% have achieved optimized cross-functional ecosystems that operate proactively, with product roadmaps, measurable productivity gains, and developers contributing improvements back.

The difference between reactive and optimized often comes down to investment commitment. Sub-$1M budgets guarantee mediocre platforms and developer frustration. Comprehensive capabilities require dedicated headcount (platform engineers, DevEx specialists, security engineers, product managers), tooling costs (Backstage/Port/Cortex, monitoring, orchestration), and infrastructure (cloud resources, testing environments, CI/CD pipelines). Expecting enterprise-grade results on startup budgets is organizational malpractice.

The 12-Month Failure Window

Of all platform teams, 40.9% cannot demonstrate measurable value within twelve months of platform launch, while 35.2% deliver value within six months. This bimodal split is widening: the gap between fast movers and slow movers grows every quarter. Platform teams that hit month 9 without measurable wins face serious defunding risk.

Executive patience has limits. Platforms require upfront investment before delivering returns, but if you can’t demonstrate concrete wins (faster deployments, reduced developer toil, improved satisfaction scores, cost savings, compliance improvements) within the first year, support evaporates. CFOs don’t fund infrastructure projects indefinitely without proof of ROI. In practice, the 12-month window is a hard deadline for proving value.

The 35.2% who deliver within six months share common patterns: they start with high-pain workflows (not vanity features like service catalogs), validate with developers before building, implement measurement from day one, and treat platforms as products with roadmaps and feedback loops. In contrast, the 40.9% who miss the window typically build toward a comprehensive vision without validating demand, skip measurement infrastructure, and optimize for completeness instead of time-to-first-value. If you’re approaching month 9 without wins, course-correct immediately or prepare for shutdown.

Adoption Type Determines Success

Platform adoption breaks into three patterns with dramatically different outcomes. Mandate-driven adoption, where IT forces platform usage regardless of developer sentiment, accounts for 36.6% of teams. Another 28.2% report adoption driven by intrinsic value: developers choose the platform because it solves genuine problems. Only 18.3% achieve participatory adoption, where developers contribute plugins, templates, and improvements back to the platform.

Mandate-driven adoption is declining in effectiveness. Forcing platforms on developers without demonstrating value creates resentment and shadow IT. Developers route around platforms they perceive as slowing them down, defeating the entire purpose. Therefore, the 36.6% mandate cohort will struggle as intrinsic value becomes the baseline expectation.

Product-centric teams with dedicated product managers report 2.3x higher adoption rates. Treating the platform as an internal product (gathering developer feedback, maintaining a roadmap, articulating a value proposition, measuring satisfaction) drives organic adoption. The 18.3% achieving participatory adoption represent a maturity standard most organizations haven’t reached. Moving from mandate to participation requires treating developers as customers, not subjects.

AI Integration: 94% Critical, 57% Lacking Skills

AI integration has become non-negotiable: 94% of organizations view AI as critical or important to platform engineering, 86% believe platforms are essential to realizing AI’s business value, and 75% already host or are preparing for AI workloads. However, 57% cite skill gaps as a barrier to AI integration. Platform teams need GPU provisioning, model serving infrastructure, vector databases, and MLOps pipelines, expertise most don’t have yet.

AI is forcing platform evolution beyond traditional DevOps capabilities. Security as a platform motivation jumped 25 percentage points, from 57% (2024) to 82% (2026), the only statistically significant change in the entire dataset. AI workloads introduce new compliance requirements, data governance challenges, and infrastructure complexity that platform teams must absorb.

This skill gap is driving role specialization. Seven distinct platform engineering roles are emerging: Head of Platform Engineering, Platform Product Manager, Infrastructure Platform Engineer, DevEx Platform Engineer, Security Platform Engineer, Observability Platform Engineer, and dedicated AI-focused platform engineers. Organizations that can’t integrate AI workloads into platforms will lose competitive advantage. Therefore, the 57% skill gap represents an urgent hiring and training priority.

Key Takeaways

- 80% adoption doesn’t mean 80% success—most organizations are building platform theater without measuring outcomes or achieving maturity

- Measurement crisis persists: 29.6% measure nothing, 24.2% can’t track improvement. Organizations without metrics will fail to prove ROI or iterate effectively

- Investment gap is real: 47.4% on sub-$1M budgets won’t deliver enterprise-grade platforms. Leading organizations invest $5-10M for capabilities that actually work

- 12-month window is critical: 40.9% can’t demonstrate value within a year and face defunding. Fast movers (35.2%) deliver within six months

- Adoption type determines outcomes: Mandate-driven adoption (36.6%) is declining in effectiveness; participatory adoption (18.3%) represents the maturity standard most haven’t reached

- AI integration is non-negotiable: 94% view it as critical or important, 75% host or are preparing for AI workloads, but 57% cite skill gaps, an urgent gap to close

- Maturity roadmap: Start with measurement (DORA metrics minimum), invest appropriately (double budgets if needed), treat platform as product, demonstrate value within six months, shift from mandate to intrinsic value