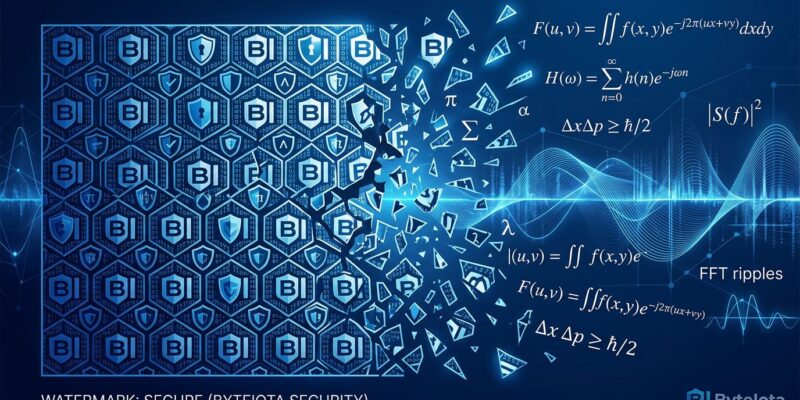

A developer reverse-engineered Google DeepMind’s SynthID watermarking system in March 2026 using nothing but mathematics and 123,000 Gemini-generated images, reporting 91% watermark removal (measured as the drop in phase coherence) while keeping image quality near-lossless. Independent researcher Alosh Denny published the full methodology on GitHub: spectral analysis and Fourier transforms, with no neural networks and no proprietary access. The repository has collected over 1,600 stars and sparked intense debate. The timing couldn’t be worse: the EU AI Act mandates watermarking for all AI-generated content by August 2, 2026, just over three months away.

This isn’t a minor exploit. It exposes a fundamental flaw in how regulators are approaching AI content verification. If a single independent developer can break Google’s watermarking system using public tools and signal processing, the entire regulatory compliance layer becomes security theater.

Pure Math Defeats SynthID Watermark Protection

The reverse-SynthID project reports a 75% carrier energy drop, a 91% phase coherence drop, and a PSNR above 43 dB. That last metric means the image quality is near-lossless, indistinguishable from the original to the human eye. The technique exploits a design constraint baked into SynthID’s architecture: it encodes fixed carrier frequencies at constant phase in the image’s spectral domain.
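To put the PSNR figure in concrete terms, here is a minimal sketch of the standard PSNR formula for 8-bit pixel values. The function names `psnr` and `rms_error_for_psnr` are illustrative and not taken from the reverse-SynthID code; images are represented as flat lists of pixel values to keep the example dependency-free.

```python
import math


def psnr(original, modified, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(original) != len(modified):
        raise ValueError("inputs must have the same number of pixels")
    # Mean squared error over all pixels.
    mse = sum((a - b) ** 2 for a, b in zip(original, modified)) / len(original)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def rms_error_for_psnr(psnr_db, max_value=255.0):
    """RMS per-pixel error implied by a given PSNR (inverse of the formula above)."""
    return max_value / (10.0 ** (psnr_db / 20.0))


# Every pixel off by one gray level gives MSE = 1.
print(round(psnr([100, 100, 100, 100], [101, 99, 101, 99]), 2))
# RMS error that corresponds to 43 dB on an 8-bit scale.
print(round(rms_error_for_psnr(43.0), 2))
```

By this formula, 43 dB on a 0 to 255 scale corresponds to an RMS error of about 1.8 gray levels per pixel, which is why the result is described as near-lossless.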

Here’s how it works. SynthID embeds watermarks by subtly manipulating pixel values in ways invisible to humans but detectable by machines, specifically by modifying frequency components in the Fourier domain. Because these carrier frequencies use consistent phase patterns, averaging enough Gemini-generated images reveals the watermark signature emerging from the noise: image content varies from picture to picture and tends to cancel out, while the fixed-phase carrier adds up. Denny collected 123,000 image pairs at different resolutions, ran them through two-dimensional Fourier transforms, and extracted the watermark fingerprint.
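The averaging idea can be illustrated with a toy example. This is a sketch on synthetic one-dimensional signals, not Denny’s code and not SynthID’s actual embedding: the carrier bin, phase, and amplitude below are made-up values, and Gaussian noise stands in for image content. It measures phase coherence as the length of the mean unit phasor at each frequency bin, which is close to 1 when the phase is the same in every sample and close to 0 when it is random.

```python
import cmath
import math
import random


def dft_bin(signal, k):
    """Single DFT coefficient X[k] of a real 1-D signal."""
    n = len(signal)
    return sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(signal))


def phase_coherence(signals, k):
    """Length of the mean unit phasor at bin k across all signals."""
    total = 0j
    for s in signals:
        c = dft_bin(s, k)
        if abs(c) > 0:
            total += c / abs(c)  # keep only the phase
    return abs(total) / len(signals)


def make_signals(count=200, n=32, carrier_bin=5, amplitude=0.8, seed=0):
    """Random 'content' plus a hypothetical fixed-phase carrier in every signal."""
    rng = random.Random(seed)
    return [
        [
            rng.gauss(0.0, 1.0)
            + amplitude * math.cos(2 * math.pi * carrier_bin * t / n + 0.7)
            for t in range(n)
        ]
        for _ in range(count)
    ]


signals = make_signals()
# Bins 1..15 cover the positive frequencies below Nyquist for n = 32.
coherence = {k: phase_coherence(signals, k) for k in range(1, 16)}
strongest = max(coherence, key=coherence.get)
print("most coherent bin:", strongest)
```

In this toy setup the carrier bin stands out clearly from the others, even though no single signal reveals it. Real images would need a two-dimensional transform and far more samples, since the article describes the actual watermark as far subtler than the exaggerated carrier used here.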

Once you have the fingerprint, removal is surgical. The reverse-SynthID tool uses a multi-resolution spectral codebook—essentially a database of per-resolution watermark signatures—to identify and neutralize the watermark at specific frequency bins. Unlike brute-force attacks (JPEG compression, noise injection) that destroy both watermark and image quality, this approach targets only the watermark signal while preserving the image.
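As a rough illustration of why a targeted edit differs from brute-force degradation, the following sketch works on synthetic one-dimensional signals only. It is not the reverse-SynthID implementation: `CARRIER_BIN`, `PHASE`, and `AMPLITUDE` are hypothetical, the "codebook" is a single averaged coefficient, and the carrier is deliberately exaggerated so the effect is visible in a small sample.

```python
import cmath
import math
import random

N = 32            # samples per toy signal
CARRIER_BIN = 5   # hypothetical carrier frequency
PHASE = 0.7       # hypothetical fixed carrier phase
AMPLITUDE = 0.8   # exaggerated so the toy needs few samples


def dft_bin(signal, k):
    """Single DFT coefficient X[k] of a real 1-D signal."""
    n = len(signal)
    return sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(signal))


def watermarked(rng):
    """Gaussian 'content' plus the fixed-phase carrier."""
    return [
        rng.gauss(0.0, 1.0)
        + AMPLITUDE * math.cos(2 * math.pi * CARRIER_BIN * t / N + PHASE)
        for t in range(N)
    ]


def mean_coefficient(signals, k):
    """Average complex coefficient at bin k; content averages out, the carrier remains."""
    return sum(dft_bin(s, k) for s in signals) / len(signals)


def estimate_codebook(signals, bins):
    return {k: mean_coefficient(signals, k) for k in bins}


def subtract_carriers(signal, codebook):
    """Remove only the estimated carrier, leaving every other frequency untouched."""
    n = len(signal)
    cleaned = list(signal)
    for k, coeff in codebook.items():
        for t in range(n):
            # Inverse DFT of the (k, n - k) coefficient pair of a real signal.
            cleaned[t] -= (2.0 / n) * (coeff * cmath.exp(2j * math.pi * k * t / n)).real
    return cleaned


rng = random.Random(1)
training = [watermarked(rng) for _ in range(400)]
codebook = estimate_codebook(training, [CARRIER_BIN])

fresh = [watermarked(rng) for _ in range(200)]
cleaned = [subtract_carriers(s, codebook) for s in fresh]
before = abs(mean_coefficient(fresh, CARRIER_BIN))
after = abs(mean_coefficient(cleaned, CARRIER_BIN))
print("carrier strength before:", round(before, 2), "after:", round(after, 2))
```

The point of the sketch is the shape of the operation: a known signature is subtracted at one bin, rather than noise or compression being applied to the whole signal. The quality and removal figures quoted in this article come from the project itself, not from this toy.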

The project is published under an open-source license for research purposes, with full code and methodology available on GitHub. It’s not theoretical: it’s working, reproducible, and accessible to anyone with signal processing knowledge.

EU AI Act Deadline: Regulations Obsolete Before Enforcement

The EU AI Act’s Article 50 transparency obligations become enforceable on August 2, 2026. The requirements are strict: all AI systems generating images must embed invisible watermarks that survive compression, cropping, and format conversion. The Code of Practice, expected to be finalized in June, mandates a multi-layered approach combining C2PA metadata, invisible watermarks, and digital fingerprints as fallback.

However, the watermark layer is already broken. Only 38% of AI image generators currently implement adequate watermarking, according to academic research published this year. India’s IT Amendment Rules went into force in February 2026 with similar watermark requirements, and both sets of rules now lean on a technology that has already been publicly defeated.

This creates a compliance problem. Companies will spend resources implementing watermarking systems to meet the August deadline, users will assume AI content is verifiable through watermarks, and adversarial actors will use publicly available techniques to strip those watermarks with minimal effort. The regulatory approach needs updating before enforcement begins, or we’re codifying security theater into European policy.

Information Asymmetry: Why Watermarks Can’t Win the Arms Race

The reverse-SynthID breakthrough isn’t an implementation bug Google can patch—it’s a fundamental mathematical problem with watermarking as a security mechanism. Invisible watermarks face a design constraint: they must be imperceptible (to preserve image quality) and robust (to survive compression and edits). Meeting both requirements forces watermarks to operate in the spectral domain by manipulating frequency components.

This constraint creates predictable vulnerabilities. If an attacker can collect enough model outputs, they can extract the watermark signature through statistical analysis, which is exactly what Denny demonstrated with 123,000 images. The effort is also lopsided: defenders need to protect every single output, while attackers only need to gather enough samples to reveal the pattern. Information asymmetry favors the attacker.
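A back-of-the-envelope model shows why sample count matters so much. Assume, as a simplification, that each image’s spectrum at frequency $f$ is a fixed carrier term $C(f)$ plus a content term $Z_i(f)$ that is zero-mean, independent across images, and has variance $\sigma^2(f)$. These symbols are introduced here for illustration and do not come from the project. Averaging $N$ spectra then gives:

$$
\bar{X}_N(f) = \frac{1}{N}\sum_{i=1}^{N}\bigl(C(f) + Z_i(f)\bigr) = C(f) + \frac{1}{N}\sum_{i=1}^{N} Z_i(f),
\qquad
\mathbb{E}\left|\frac{1}{N}\sum_{i=1}^{N} Z_i(f)\right|^2 = \frac{\sigma^2(f)}{N}
$$

The carrier survives averaging unchanged while the content power shrinks by a factor of $N$, so the amplitude signal-to-noise ratio grows as $\sqrt{N}$. Under these assumptions, $N = 123{,}000$ gives $\sqrt{N} \approx 351$, or a power gain of $10\log_{10} N \approx 50.9$ dB. Real image content is not perfectly independent or zero-mean, so the actual gain would be lower, but the scaling explains how a signal too faint to see in any one image becomes recoverable in aggregate.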

Academic research validates this concern. The University of Waterloo’s UnMarker tool can remove watermarks universally without knowing the algorithm, and the ICLR 2025 Workshop on GenAI Watermarking presented theoretical results on “strong watermark impossibility.” Security researchers are blunt: “Watermarks offer value in transparency efforts, but they do not provide absolute security against AI-generated manipulation.”

Watermarking is a communications technique for embedding and detecting information, not a cryptographic technique for securing against adversaries. Treating it as the latter creates false confidence in AI content verification.

Google Disputes Claims, But Principle Stands

Google has pushed back on the 91% removal claim, arguing the watermark isn’t “fully broken” and that removal rates are lower in practice. Denny’s reported numbers (a 91% phase coherence drop with PSNR held above 43 dB) suggest otherwise, and the GitHub repository’s 1,600+ stars indicate the technical community finds the methodology credible.

But the exact percentage isn’t the point. Even if removal is 75% instead of 91%, the principle holds: watermarks can be significantly degraded using publicly available signal processing techniques. Security experts recommend treating watermark detection as “a useful signal, not a reliable gate,” acknowledging that “no single lab can build a watermarking system that remains secure in isolation.”

The debate isn’t “can you completely remove watermarks?”—it’s “should regulatory compliance depend on a technology that independent researchers can defeat?” The answer from the security community is increasingly clear: no.

The Alternative: Cryptographic Provenance Over Watermarks

The Coalition for Content Provenance and Authenticity (C2PA) offers a different approach: cryptographically signed metadata that tracks content origin and edit history through public/private key infrastructure. Unlike watermarks, C2PA signatures can’t be forged without the private key—attackers can strip the metadata, but they can’t create fake signatures claiming Google generated an image they created.
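The asymmetry between stripping and forging can be shown with a deliberately simplified sketch. This is not the C2PA format, which uses certificate-based signatures and a structured manifest; it uses textbook RSA with a tiny hard-coded key purely so the example runs with the standard library. All names here (`sign`, `verify`, the `claims` fields) are hypothetical, and unpadded RSA at this key size is not secure.

```python
import hashlib
import json

# Toy RSA key built from two Mersenne primes. Textbook RSA without padding is
# NOT secure; it only illustrates the sign/verify asymmetry.
P = 2**61 - 1
Q = 2**89 - 1
MODULUS = P * Q
PUBLIC_EXPONENT = 65537
PRIVATE_EXPONENT = pow(PUBLIC_EXPONENT, -1, (P - 1) * (Q - 1))


def digest(image_bytes, claims):
    """Hash the image together with its provenance claims, reduced mod the toy modulus."""
    payload = image_bytes + json.dumps(claims, sort_keys=True).encode("utf-8")
    return int.from_bytes(hashlib.sha256(payload).digest(), "big") % MODULUS


def sign(image_bytes, claims):
    """Only the holder of PRIVATE_EXPONENT can produce a valid signature."""
    return pow(digest(image_bytes, claims), PRIVATE_EXPONENT, MODULUS)


def verify(image_bytes, manifest):
    """Check a manifest with the public key; None means provenance is unknown."""
    if manifest is None:
        return None  # metadata was stripped: nothing can be concluded
    expected = digest(image_bytes, manifest["claims"])
    return pow(manifest["signature"], PUBLIC_EXPONENT, MODULUS) == expected


image = b"toy image bytes"
claims = {"generator": "example-model", "ai_generated": True}
manifest = {"claims": claims, "signature": sign(image, claims)}

# An attacker edits the claims but cannot produce a matching signature.
tampered = {"claims": {**claims, "ai_generated": False},
            "signature": manifest["signature"]}

print(verify(image, manifest), verify(image, tampered), verify(image, None))
```

The three outcomes map onto the trade-off described above: an intact manifest verifies, an altered one fails, and a stripped one yields no answer at all rather than a false one.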

The Code of Practice under the EU AI Act calls for both C2PA and watermarking in a multi-layered defense strategy. If the watermark layer is broken, C2PA becomes the only reliable verification mechanism. The weakness is fragility: social media platforms and content distributors often strip metadata during processing. But at least the cryptography is sound when the metadata survives.

Industry guidance acknowledges this reality: “C2PA and watermarking work together—neither replaces the other.” The practical implication is that watermarks should be treated as transparency tools for legitimate use cases (helping users identify AI content) rather than security mechanisms for adversarial scenarios (preventing bad actors from hiding AI content origins).

Realistic expectations about each layer’s limitations are critical. Developers implementing AI content verification should prioritize C2PA for provenance, use watermarks as supporting evidence, and invest in behavioral detection methods that don’t rely on embedded signals at all.

Key Takeaways

Watermarks are transparency tools, not security mechanisms. The EU AI Act’s August 2026 enforcement deadline is approaching with outdated technical requirements. Regulators need to shift focus toward cryptographic provenance (C2PA) while maintaining realistic expectations about watermark limitations. The arms race between AI generation and detection continues, but betting regulatory compliance on a broken technology isn’t the answer.