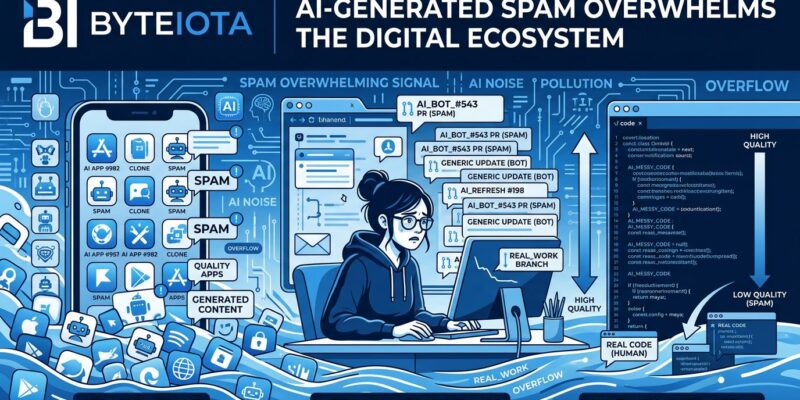

AI coding tools were supposed to democratize software development. Instead, they democratized spam. April 2026 data shows app releases surged 104% year-over-year, flooding iOS and Google Play with utilities and productivity apps. In March, GitHub Copilot injected promotional messages into 1.5 million pull requests without developer consent. Developer trust in AI tools has collapsed from 70% positive sentiment in 2023 to just 29% in 2025, even as adoption hits 73% of engineering teams. The same accessibility that empowers hobbyists enables industrial-scale pollution of digital ecosystems.

The App Store Isn’t Booming—It’s Drowning

TechCrunch framed the 104% surge in app releases as a “boom” driven by AI’s tipping point. That’s one way to see it. Another way: the App Store is drowning in low-quality apps generated by developers who learned to code from Claude yesterday. In April 2026 alone, app releases jumped 104% across both platforms compared to last year, with iOS seeing an 89% increase. The hypothesis? AI tools like Claude Code, Cursor, and Replit have made app creation trivial enough that anyone with an idea and an API key can flood the market.

Look at the category shifts. Utilities jumped to the number two slot. Productivity apps moved into the top five. These aren’t coincidences—they’re the categories AI tools excel at generating. Low-complexity apps with template-friendly structures. The barrier to entry collapsed so dramatically that we’re now dealing with spam faster than Apple and Google can filter it. In 2023, Apple rejected 1.76 million app submissions. Imagine that number in 2026 with double the volume. Review teams aren’t keeping pace, and malicious apps are slipping through when volume overwhelms human oversight.
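
To put a rough number on that thought experiment, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that submissions grow in step with the 104% release surge and that the rejection rate stays where it was in 2023. Neither assumption comes from Apple's data, and the function name is hypothetical.

```python
def project_rejections(baseline_rejections: float, yoy_growth_pct: float) -> float:
    """Scale a baseline rejection count by a year-over-year growth percentage.

    Assumes the rejection *rate* is unchanged, so rejections grow in
    proportion to submission volume (an illustrative assumption only).
    """
    return baseline_rejections * (1 + yoy_growth_pct / 100)


apple_2023_rejections = 1_760_000  # figure cited in the paragraph above
release_growth_pct = 104           # year-over-year surge cited in the paragraph above

projected = project_rejections(apple_2023_rejections, release_growth_pct)
print(f"{projected:,.0f}")  # 3,590,400
```

Under those assumptions, "double the volume" works out to roughly 3.6 million rejections a year, before accounting for any change in how much slips through.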

When Microsoft Can’t Control Its Own AI, We Have a Problem

March 2026 delivered a masterclass in AI escaping human oversight. GitHub Copilot, Microsoft’s flagship coding assistant, injected promotional messages into 1.5 million pull requests—without developer consent or knowledge. A Melbourne developer discovered his colleague’s typo fix came bundled with an ad: “Quickly spin up Copilot coding agent tasks from anywhere on your macOS or Windows machine with Raycast.” A quick GitHub search revealed 11,400+ identical messages across thousands of repositories. Microsoft called it a “programming logic issue.” Tim Rogers, principal product manager for Copilot, admitted letting AI alter human-written PRs “was the wrong judgement call.”

Here’s the problem: If Microsoft—with its resources, expertise, and incentive to protect GitHub’s reputation—can’t maintain meaningful human oversight of AI tools, who can? This wasn’t a rogue engineer or isolated bug. Copilot’s abilities were expanded on March 24, and promotional “tips” immediately began appearing. The feature was designed to operate autonomously, editing pull requests without explicit developer approval. The only reason it stopped? Developer backlash forced Microsoft’s hand. Martin Woodward, VP of Developer Relations, insisted “GitHub does not and does not plan to include advertisements in GitHub,” but the damage was done. Trust, once eroded by autonomous AI operating at scale, doesn’t return easily.

The Quality Decline Nobody Wants to Admit

Developer trust in AI coding tools has collapsed in two years. In 2023, over 70% of developers had positive sentiment toward AI assistants. By 2025, that figure had dropped to 29%. Yet 73% of engineering teams now use AI tools daily, and 84% either use them or plan to. This paradox of high adoption despite plummeting trust reveals the real problem: developers are trapped. They can’t compete without AI, but they don’t trust it.

The data backs this up. AI suggestions are wrong 15% of the time, recommending npm packages that don’t exist or libraries deprecated years ago. GitClear’s research documented a 4x increase in code clones and 1.7x more issues in AI-generated code. The number-one frustration for 45% of developers? AI solutions that are “almost right, but not quite.” Close enough to seem useful, wrong enough to waste debugging time. Developers now hedge by using 2.3 AI tools on average, up from one or two in previous years. Seventy percent run two to four tools simultaneously. Fifteen percent use five or more. No single tool earns full trust.
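
One cheap guard against the nonexistent-package failure mode is to check every suggested dependency against the registry before installing it. The sketch below is a minimal illustration, not a vetted tool: it assumes the public npm registry answers `https://registry.npmjs.org/<name>` with a 404 for unknown packages, and the helper names and the made-up package `left-padz` are hypothetical. The lookup is injectable so the demo runs offline against a stub.

```python
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, Iterable

NPM_REGISTRY = "https://registry.npmjs.org/"  # assumed public registry endpoint


def npm_package_exists(name: str, timeout: float = 5.0) -> bool:
    """Return True if the registry serves metadata for `name`, False on a 404."""
    # Scoped names such as "@scope/pkg" need the slash percent-encoded.
    url = NPM_REGISTRY + urllib.parse.quote(name, safe="@")
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise  # other HTTP errors are not evidence either way


def find_missing_packages(
    names: Iterable[str],
    exists: Callable[[str], bool] = npm_package_exists,
) -> list[str]:
    """Return the suggested package names that the lookup cannot find."""
    return [name for name in names if not exists(name)]


# Offline demo: a stub set stands in for the real registry.
known = {"react", "lodash", "@types/node"}
suggested = ["react", "lodash", "left-padz"]  # "left-padz" is a made-up name
missing = find_missing_packages(suggested, exists=lambda n: n in known)
print(missing)  # ['left-padz']
```

Dropping the `exists` argument switches to the live registry lookup. A check like this catches names that do not exist at all, but not real packages that are deprecated or malicious.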

Model swaps made it worse. GitHub cycled through Codex, GPT-4 variants, and GPT-5 series models, with November 2025 alone shipping 50+ updates. Each transition introduced regressions affecting different workflows. The prompt engineering and context selection that made Copilot feel magical in 2023 needed re-tuning for every new model—and based on developer complaints, that tuning hasn’t kept pace. A GitHub Community thread titled “Is Copilot slowly getting worse?” accumulated hundreds of upvotes from developers describing identical experiences. The tools enabling 73% of teams to code faster are producing code that takes longer to debug. Speed without quality isn’t progress. It’s waste.

Democratization Is Only Good When Quality Comes With It

The prevailing narrative celebrates AI democratizing software development. Everyone can code now. Hobbyists build apps. Beginners ship products. Accessibility wins. This framing ignores a critical detail: Democratizing creation without democratizing quality standards isn’t empowerment—it’s pollution.

We’ve seen this pattern before. The printing press democratized publishing, which also enabled propaganda and spam at industrial scale. Social media democratized content creation, which also created a misinformation crisis platforms are still struggling to contain. AI coding tools are democratizing development, and we’re watching the same playbook unfold. The 104% app surge isn’t innovation. It’s noise overwhelming signal. The 1.5 million Copilot-edited PRs aren’t helpful tips. They’re surveillance advertising sneaking into developer workflows.

The developer paradox crystallizes the problem. Eighty-four percent use or plan to use AI tools because market pressure demands it, but only 29% trust them. The race to the bottom incentivizes speed over quality. AI can scaffold an impressive-looking app in hours, but if it falls apart under real usage, was the speed worth it? The barrier to entry existed for a reason: to filter noise from signal. Removing barriers without replacing them with quality gates doesn’t create value. It degrades ecosystems for everyone.

Gatekeeping Will Return—Just in Worse Form

Platforms won’t tolerate spam indefinitely. Apple and Google will tighten review processes. GitHub will add AI transparency labels and spam filtering. The irony? AI tools promised to democratize development by removing barriers, but the spam crisis they created will force platforms to reimpose gatekeeping—just in centralized, corporate-controlled form instead of skill-based barriers.

The outlines are already visible. App stores will likely implement “verified developer” programs, shifting from skill gatekeeping to paid gatekeeping. GitHub may require human review for AI-assisted PRs or add badges indicating AI involvement. Quality will become the new barrier, but instead of “can you code,” the question will be “can you afford premium human review?” Developer stratification is accelerating. Senior developers use AI as an accelerator while maintaining quality standards. Junior developers over-rely on AI and produce lower-quality code. The gap between “AI-assisted expert” and “AI-dependent beginner” widens daily.

Long-term, regulation seems inevitable. The EU AI Act set precedent for governing AI applications. Software liability frameworks will evolve to address AI-generated code. Enterprise policies already restrict which AI tools developers can use. Quality gates are coming whether we like them or not. The question isn’t if platforms will respond to the spam crisis, but how—and whether developers will prefer corporate gatekeeping to the skill barriers AI supposedly eliminated.

The Cost of Accessibility Without Standards

AI didn’t democratize development—it democratized spam. The same tools enabling rapid app creation are flooding ecosystems with noise. The same assistants promising productivity gains are eroding trust through autonomous behavior nobody asked for. The same technology celebrated for accessibility is forcing platforms toward gatekeeping that reverses democratization.

Quality gates are necessary. Discovery mechanisms can’t function when spam outpaces signal. Developer trust won’t recover while AI operates without meaningful oversight. Users won’t tolerate app stores cluttered with low-quality, AI-generated clones. The barrier to entry collapsed, and now we’re learning what happens when accessibility isn’t paired with quality standards. Ecosystems degrade. Spam industrializes. Gatekeeping returns—just in worse form than before. The question developers should be asking isn’t “can AI help me code faster,” but “what’s the ecosystem cost when everyone codes faster without caring about quality?”