WebAssembly hit a critical adoption milestone in 2026: 5.5% of websites now use it, the fastest runtimes reach 95% of native speed on benchmarks, and small-model AI inference completes in 15 milliseconds. What started as a niche tool for Figma and Photoshop has become mainstream infrastructure, powering video editors, running client-side AI models, and delivering 10-40x faster serverless cold starts. The performance claims are no longer theoretical. They’re measured benchmarks from production deployments.

The Numbers That Matter

Wasmer 6.0 achieves 95% of native speed on Coremark benchmarks. That’s the “good enough” threshold where WebAssembly stops being experimental and becomes essential. For CPU-bound tasks like image processing, cryptography, and compression, WebAssembly runs 5-15x faster than optimized JavaScript. These aren’t edge cases. They’re the workloads developers actually run.

AI inference shows the most dramatic improvement. Wasmer completes small model tasks in 15 milliseconds—8x faster than TensorFlow.js. This makes client-side AI viable for applications that previously required server round-trips.

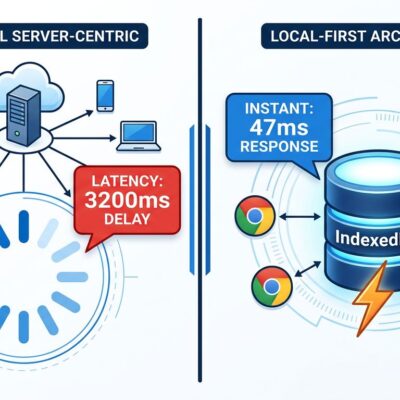

Serverless cold starts improved 10-40x over container-based functions in AWS Lambda benchmarks. Because a WebAssembly module ships as a compact, precompiled binary, it starts in near-zero time and uses about one-tenth the memory of a Node.js process. Edge providers like Cloudflare, Fastly, and Vercel have adopted it for server-side logic, cutting latency dramatically.

Runtime performance varies by use case. Wasmtime leads on cold starts, Wasmer excels at steady-state execution, and WasmEdge has the smallest memory footprint at 8MB with 1.5ms cold starts on edge devices. The competition drives performance improvements across the ecosystem.

Real Companies, Real Results

Figma made load times 3x faster when it migrated its C++ rendering engine to WebAssembly in 2017. By 2026, Figma had expanded its WebAssembly usage to “code layers” in Figma Sites, using esbuild and Tailwind v4 compiled to Wasm and executed in Web Workers for real-time bundling. Figma plugins run in a Wasm sandbox that can’t access your filesystem or other tabs: security through isolation.

Shopify runs WebAssembly in production for Shopify Functions, handling pricing rules, shipping calculations, and product recommendations at the edge. Processing happens in under 5ms with no central server round-trip. This architecture scales to millions of storefronts without the latency penalty of centralized logic.

Adobe’s Photoshop Web edition uses WebAssembly to handle 4K image processing and advanced filters at near-native performance in a browser. Google Earth relies on WebAssembly for complex geographical rendering. These aren’t proof-of-concept demos. They’re production systems serving millions of users.

The adoption trend is clear. Google’s Chrome Platform Status shows 5.5% of websites now use WebAssembly, up from 4.5% a year earlier. Most of this growth happens “behind the scenes”: developers use tools powered by WebAssembly without realizing it.

When to Use WebAssembly (and When Not To)

WebAssembly isn’t a JavaScript replacement. It’s a surgical tool for specific performance bottlenecks.

Use WebAssembly for CPU-intensive tasks: image processing, video encoding, compression, cryptography. Use it for AI/ML inference when you need 15ms response times on the client. Use it for real-time encryption or mathematical computation where 5-15x speedup justifies the added complexity. Use it when you need portable code that runs identically across web, server, and edge environments.

Don’t use WebAssembly for I/O-bound operations. Network calls and database queries spend most of their time waiting, not computing. WebAssembly won’t make them faster. Don’t use it for simple UI logic where JavaScript’s flexibility beats compilation complexity. Don’t use it for DOM manipulation—WebAssembly can’t access the DOM directly and must communicate through JavaScript, adding overhead.
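The division of labor described above can be sketched in TypeScript. This is a minimal sketch, not production code: it instantiates a hand-assembled, 41-byte Wasm module that exports a single `add` function, used here as an illustrative stand-in for a real CPU-bound kernel compiled from Rust or C++, and then calls it from JavaScript. The byte array and the names `addModuleBytes`, `wasmModule`, `wasmInstance`, and `add` are assumptions made for illustration.

```typescript
// Hand-assembled Wasm binary (illustrative): exports add(i32, i32) -> i32.
const addModuleBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // "\0asm" magic + version 1
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f, // type: (i32, i32) -> i32
  0x03, 0x02, 0x01, 0x00, // function section: one function of type 0
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00, // export "add" = func 0
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b, // body: local.get 0, local.get 1, i32.add, end
]);

// Synchronous compilation is acceptable for a tiny module like this one;
// larger modules in a browser would normally be loaded with
// WebAssembly.instantiateStreaming(fetch(url)).
const wasmModule = new WebAssembly.Module(addModuleBytes);
const wasmInstance = new WebAssembly.Instance(wasmModule, {});
const add = wasmInstance.exports.add as (a: number, b: number) => number;

// The arithmetic runs inside Wasm; anything that touches the DOM stays in JavaScript.
const sum = add(2, 40);
console.log(`add(2, 40) = ${sum}`); // add(2, 40) = 42
```

Every call such as `add(2, 40)` crosses the JavaScript/Wasm boundary, which is why chatty, DOM-heavy code gains little from being moved into a module, while a long-running kernel called once per frame or per file can gain a lot.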

The tradeoff is performance versus complexity. WebAssembly usually means working in Rust, C++, or another language with a Wasm compilation target. Debugging is harder than in JavaScript. Some languages produce multi-megabyte bundles that hurt load times. Mobile browsers can’t reliably allocate more than 300MB, which limits memory-intensive applications.
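One way to stay inside a memory budget like the one above is to cap linear memory when it is created. The sketch below assumes the roughly 300MB mobile ceiling as the budget; the helper names `pagesForBudget` and `tryGrow` are hypothetical, while `WebAssembly.Memory` and its `grow` method are part of the standard JavaScript API.

```typescript
const WASM_PAGE_BYTES = 64 * 1024; // Wasm linear memory grows in 64 KiB pages

// Convert a byte budget into a whole number of Wasm pages (rounded down).
function pagesForBudget(budgetBytes: number): number {
  return Math.floor(budgetBytes / WASM_PAGE_BYTES);
}

// Try to grow memory by `extraPages`; report failure instead of throwing.
function tryGrow(memory: WebAssembly.Memory, extraPages: number): boolean {
  try {
    memory.grow(extraPages);
    return true;
  } catch {
    return false; // RangeError: request exceeds `maximum` or the host refused
  }
}

// Assumed budget: the ~300MB mobile ceiling mentioned above.
const maxPages = pagesForBudget(300 * 1024 * 1024); // 4800 pages
const memory = new WebAssembly.Memory({ initial: 1, maximum: maxPages });

console.log(memory.buffer.byteLength); // 65536 (one page)
console.log(tryGrow(memory, 1)); // true: still far below the cap
console.log(tryGrow(memory, maxPages)); // false: would exceed `maximum`
```

Declaring a `maximum` turns an out-of-memory crash on a phone into a failed `grow` call that the application can handle, for example by processing a large image in tiles.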

Evaluate based on your bottleneck. If profiling shows CPU-bound computation as your slowest path, WebAssembly likely helps. If you’re waiting on network or disk, it won’t.
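This rule of thumb can be made concrete with Amdahl’s law, which estimates the overall speedup when only part of the runtime gets faster. The sketch below is a back-of-the-envelope estimate, not a benchmark: `overallSpeedup` is a hypothetical helper, and the 80% and 10% CPU shares are made-up profiles chosen for illustration.

```typescript
// Amdahl's law: overall speedup when only a fraction of the runtime gets faster.
// cpuFraction: share of total time spent in the CPU-bound code moved to Wasm (0..1)
// kernelSpeedup: how much faster that code runs in Wasm (e.g. 10 for 10x)
function overallSpeedup(cpuFraction: number, kernelSpeedup: number): number {
  if (cpuFraction < 0 || cpuFraction > 1 || kernelSpeedup <= 0) {
    throw new RangeError("cpuFraction must be in [0, 1] and kernelSpeedup > 0");
  }
  return 1 / ((1 - cpuFraction) + cpuFraction / kernelSpeedup);
}

// Hypothetical profiles, both assuming the hot code becomes 10x faster:
console.log(overallSpeedup(0.8, 10).toFixed(2)); // CPU-bound workload: "3.57"
console.log(overallSpeedup(0.1, 10).toFixed(2)); // I/O-bound workload: "1.10"
```

With the same 10x kernel speedup, the workload that spends 80% of its time computing gets about 3.6x faster overall, while the one that spends 90% of its time waiting on the network improves by only about 10%.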

The 2026 Ecosystem

WebAssembly matured significantly in 2026. WASI 0.3.0, scheduled for February, adds native async support to the Component Model, enabling high-performance streaming and language-integrated concurrency. This paves the way to WASI 1.0, expected in late 2026 or early 2027, which aims to make WebAssembly a viable replacement for containers in many workloads.

The Component Model is gaining traction, letting components written in different languages interoperate through shared interface definitions. Wasmtime leads implementation, with Wasmer and WasmEdge catching up throughout the year.

Use cases expanded beyond “specialized apps.” Video editing, client-side AI models, edge computing, and serverless functions all adopted WebAssembly in 2026. It’s no longer just Figma and Photoshop. It’s becoming part of the standard developer toolkit.

Limitations remain. Debugging is still the biggest adoption barrier. The “wall of complexity” for getting started frustrates developers who assume a compiler flag produces browser-ready code. Languages with garbage collection (Python, Ruby, Java) face challenges with WebAssembly’s linear memory model. Tooling has improved but still lags behind JavaScript’s mature ecosystem.

The Inflection Point

2026 marks WebAssembly’s transition from experimental to essential. Reaching 95% of native speed crosses the “good enough” threshold for production workloads. Adoption on 5.5% of websites represents critical mass. Use cases have expanded from niche applications to everyday development tools. Near-zero cold starts make serverless viable at scale.

WebAssembly gained adoption “behind the scenes” during 2024 and 2025. In 2026, it became explicit infrastructure, something developers choose deliberately for performance-critical paths. The standards roadmap shows maturity: WASI 0.3.0’s async support and the Component Model’s language interoperability address the ecosystem’s roughest edges.

The performance data points in one direction: 5-15x faster execution for CPU-bound work, 15ms AI inference, and 10-40x cold start improvements. Companies like Figma, Shopify, and Adobe bet production systems on these numbers and saw measurable results. That’s the signal developers should watch: not hype, but deployment at scale with quantified outcomes.

WebAssembly isn’t replacing JavaScript. It’s becoming the performance escape hatch when JavaScript isn’t fast enough. That shift defines 2026.