# PostgreSQL pgvector Delivers 28× Better Performance Than Pinecone

The vector database market is collapsing. Vectors have shifted from being a specialized database category to a standard data type, and specialized vendors like Pinecone, Qdrant, and Weaviate are watching PostgreSQL eat their lunch. Teams are dismantling multi-database architectures and consolidating onto PostgreSQL with pgvector and pgvectorscale extensions. The numbers tell the story: pgvectorscale delivers 28× lower latency and 16× higher throughput than Pinecone at 99% recall, while self-hosted PostgreSQL costs $1,500/month versus Pinecone’s $5,000-6,000/month for the same 100 million vector workload. That’s 75% savings with better performance.

This isn’t incremental improvement—it’s an architectural paradigm shift. If the default choice for vector workloads is moving to PostgreSQL, teams building AI applications need a data-driven framework for when consolidation works and when specialized databases still matter.

## The Database Consolidation Wave

Traditional databases added native vector support in 2025-2026, transforming vectors from specialized infrastructure into a standard feature. PostgreSQL (through the pgvector extension), Oracle, and MongoDB all offer vector types now. The market responded swiftly: engineering conversations shifted from “use Pinecone” to “we can build this on PostgreSQL” as teams questioned why they were managing a separate vector database.

Supabase reports hundreds of migrations via their vec2pg tool from Pinecone and Qdrant to PostgreSQL. The pattern mirrors the NoSQL exodus of the 2010s, when teams realized PostgreSQL’s JSON support eliminated the need for MongoDB in most cases. History repeats: specialized databases lose to consolidated platforms when the performance gap closes.

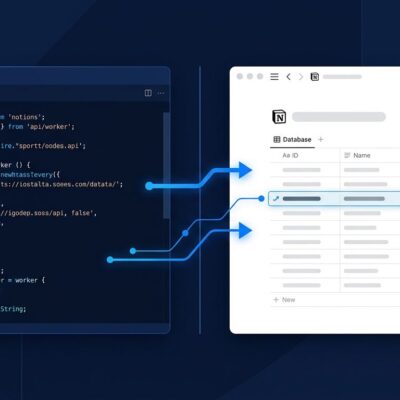

The consolidation makes architectural sense. Multi-database setups—application connected to PostgreSQL for relational data, Pinecone for vectors, Redis for caching—introduce sync complexity, operational overhead, and multiplied costs. Consolidated PostgreSQL with pgvector collapses this to two databases (PostgreSQL + cache), enabling hybrid queries that join structured data and vector similarity searches without cross-database gymnastics.

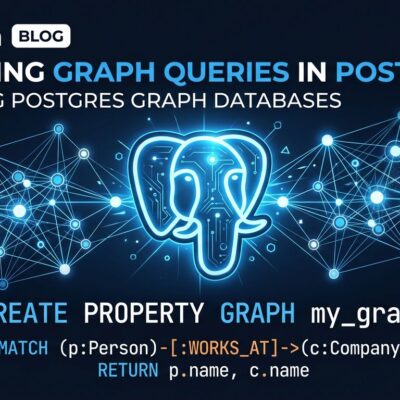

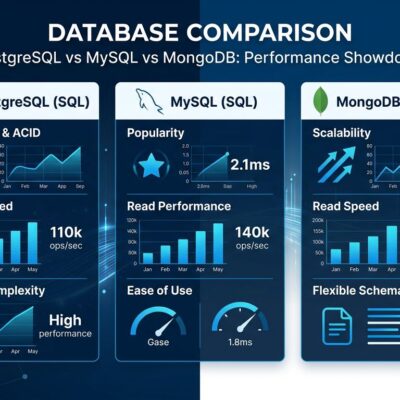

## pgvectorscale Performance Benchmarks: Beating Pinecone and Qdrant

pgvectorscale, Timescale’s DiskANN implementation for PostgreSQL, benchmarks at 471 queries per second on 50 million vectors at 99% recall. Qdrant manages 41 QPS under the same conditions—11.4× slower. Pinecone’s storage-optimized index shows 28× higher p95 latency than pgvectorscale at equivalent recall levels. The performance objection to PostgreSQL is dead at moderate scales.

The technical breakthrough comes from StreamingDiskANN, a disk-based graph indexing algorithm that stores compressed structures on disk rather than RAM. Traditional HNSW indexes consume 2-5× the vector data size in memory, creating RAM bottlenecks at scale. DiskANN reduces memory requirements by 10-20× while maintaining query performance through efficient disk I/O and graph compression techniques.
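To make the memory argument concrete, here is a rough back-of-the-envelope sketch. It assumes float32 embeddings (4 bytes per value) and reads the 10-20× reduction as relative to the HNSW footprint; both are assumptions for illustration, and the function names are hypothetical, not part of pgvector or pgvectorscale.

```python
def raw_vector_gb(num_vectors: int, dimensions: int, bytes_per_value: int = 4) -> float:
    """Size of the embeddings alone, in decimal GB (float32 assumed)."""
    return num_vectors * dimensions * bytes_per_value / 1e9


def hnsw_memory_gb(raw_gb: float) -> tuple[float, float]:
    """HNSW footprint at 2-5x the raw vector data, per the range quoted above."""
    return (2 * raw_gb, 5 * raw_gb)


def diskann_memory_gb(hnsw_range: tuple[float, float]) -> tuple[float, float]:
    """Assumption: the 10-20x reduction applies to the HNSW footprint."""
    low, high = hnsw_range
    return (low / 20, high / 10)


# Illustrative workload: 50 million vectors at 1024 dimensions.
raw = raw_vector_gb(50_000_000, 1024)
hnsw = hnsw_memory_gb(raw)
diskann = diskann_memory_gb(hnsw)

print(f"raw vectors: {raw:.1f} GB")
print(f"HNSW RAM:    {hnsw[0]:.1f} to {hnsw[1]:.1f} GB")
print(f"DiskANN RAM: {diskann[0]:.1f} to {diskann[1]:.1f} GB")
```

Under these assumptions the HNSW estimate runs from hundreds of gigabytes up to about a terabyte of RAM, while the disk-based estimate fits on a single large instance. Treat the output as an order-of-magnitude guide, not a sizing tool.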

This matters because it shifts the consolidation sweet spot upward. Where pgvector with basic HNSW indexing struggled beyond 1 million vectors, pgvectorscale pushes viable performance to 10-50 million vectors depending on latency requirements and hardware configuration. For most AI applications—RAG pipelines, semantic search, recommendation systems—this covers production needs without specialized infrastructure.

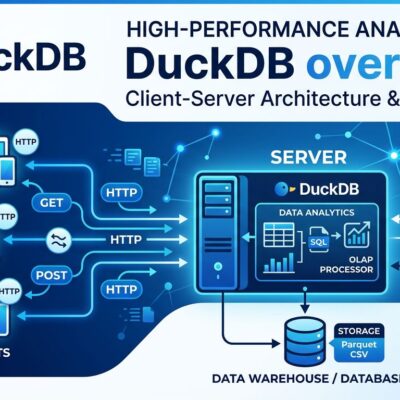

## The Cost Advantage: 75% Savings

Self-hosted PostgreSQL with pgvectorscale costs approximately $1,200-1,500/month on AWS EC2 for 100 million vectors (1024 dimensions) handling 150 million queries monthly. Pinecone charges $5,000-6,000/month for the same workload. Managed Qdrant runs $3,000-4,000/month. Weaviate lands at $2,500-3,500/month. The roughly 75% cost reduction versus Pinecone compounds over a year: $60,000-72,000 annually versus $14,400-18,000 for PostgreSQL.
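The annual figures follow directly from the monthly ranges. A short sketch of the arithmetic, using only the prices quoted above (the dictionary keys and helper names are illustrative):

```python
# Monthly cost ranges in USD for the 100M-vector workload, as quoted above.
MONTHLY_COST_USD = {
    "postgresql_self_hosted": (1200, 1500),
    "weaviate": (2500, 3500),
    "qdrant_managed": (3000, 4000),
    "pinecone": (5000, 6000),
}


def annual_range(monthly: tuple[int, int]) -> tuple[int, int]:
    """Scale a (low, high) monthly range to a yearly one."""
    low, high = monthly
    return (12 * low, 12 * high)


def savings_pct(ours: float, theirs: float) -> float:
    """Percentage saved by paying `ours` instead of `theirs`."""
    return 100 * (1 - ours / theirs)


for name, monthly in MONTHLY_COST_USD.items():
    low, high = annual_range(monthly)
    print(f"{name}: ${low:,} to ${high:,} per year")

pg = MONTHLY_COST_USD["postgresql_self_hosted"]
pinecone = MONTHLY_COST_USD["pinecone"]
print(f"savings vs Pinecone, high ends: {savings_pct(pg[1], pinecone[1]):.0f}%")
print(f"savings vs Pinecone, low ends:  {savings_pct(pg[0], pinecone[0]):.0f}%")
```

Comparing the high ends of each range, or the low ends, keeps the savings close to the 75% headline figure.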

Cost becomes a forcing function at this scale. Teams burning tens of thousands yearly on specialized vector databases need clear justification: massive scale beyond 50 million vectors, sub-20ms latency SLAs, or specific features (GPU acceleration, sparse vectors) that PostgreSQL lacks. Without these requirements, it’s wasteful spending that consolidation eliminates.

The managed service economics shift too. Cloud providers (AWS, Google Cloud, Azure) offer managed PostgreSQL with pgvector at commodity pricing, undercutting specialized vector database margins. The database consolidation playbook—familiar from the relational database wars—repeats: hyperscalers leverage existing infrastructure to price out specialized vendors at moderate scales.

## When PostgreSQL Wins (and When It Doesn’t)

Consolidation works for workloads under 10 million vectors with hybrid search needs (vectors plus metadata filters), moderate query rates below 500 QPS, and acceptable 50-100ms latency. These thresholds cover most production AI applications. RAG chatbots querying document embeddings, e-commerce recommendations filtering by inventory and pricing, semantic search with category constraints—PostgreSQL handles these efficiently.

Specialized databases win when vectors are the primary workload and exceed 50 million, when sub-20ms latency is non-negotiable, or when sustained throughput exceeds 1,000 QPS. Advanced features matter here: GPU-accelerated indexing, sparse vector support, real-time streaming ingestion. PostgreSQL’s general-purpose architecture hits limits that purpose-built databases navigate better. Between 10 and 50 million vectors the answer depends on latency requirements and hardware, so benchmark before committing either way.

The critical insight from practitioners: “pgvector was the right choice at 10,000 vectors but stopped being the right choice at 5 million.” This isn’t PostgreSQL advocacy—it’s acknowledging that vector search at massive scale has unique challenges general-purpose databases weren’t designed to handle. The decision framework comes down to specific thresholds: vector count, latency requirements, query throughput, and feature needs.
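The thresholds in this section can be written down as a small decision helper. This is a minimal sketch: the `Workload` class and `recommend` function are hypothetical names, the cutoffs are the ones stated above, and anything that falls between the two sets of thresholds is routed to "benchmark" rather than guessed at.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    vectors: int
    sustained_qps: float
    latency_budget_ms: float
    # GPU-accelerated indexing, sparse vectors, real-time streaming ingestion
    needs_specialized_features: bool = False


def recommend(w: Workload) -> str:
    """Return 'specialized', 'postgresql', or 'benchmark' for a workload."""
    # Any single hard requirement is enough to justify a purpose-built database.
    if (
        w.vectors > 50_000_000
        or w.latency_budget_ms < 20
        or w.sustained_qps > 1000
        or w.needs_specialized_features
    ):
        return "specialized"
    # Consolidation comfort zone: all three conditions must hold.
    if w.vectors < 10_000_000 and w.sustained_qps < 500 and w.latency_budget_ms >= 50:
        return "postgresql"
    # Gray zone between the two sets of thresholds: measure before deciding.
    return "benchmark"


print(recommend(Workload(vectors=2_000_000, sustained_qps=100, latency_budget_ms=80)))
print(recommend(Workload(vectors=80_000_000, sustained_qps=200, latency_budget_ms=100)))
print(recommend(Workload(vectors=20_000_000, sustained_qps=300, latency_budget_ms=60)))
```

The three sample workloads land in the consolidation zone, the specialized zone, and the gray zone respectively. Real decisions should rest on measurements against your own data, not on these cutoffs alone.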

```sql
-- PostgreSQL pgvectorscale: hybrid search example
CREATE EXTENSION vector;
CREATE EXTENSION vectorscale;

CREATE TABLE documents (
    id SERIAL PRIMARY KEY,
    content TEXT,
    category TEXT,
    embedding vector(1536) -- OpenAI ada-002
);

-- DiskANN index for scale
CREATE INDEX diskann_idx ON documents
USING diskann (embedding);

-- Hybrid query: metadata filter and vector search in one statement.
-- $1 is the query embedding, bound as a vector(1536) parameter;
-- <=> is pgvector's cosine distance operator.
SELECT id, content,
       1 - (embedding <=> $1) AS similarity
FROM documents
WHERE category = 'AI' -- Filter first, avoiding post-filtering trap
ORDER BY embedding <=> $1
LIMIT 10;
```

This SQL demonstrates why consolidation appeals: metadata filters and vector similarity sit in a single query, with no post-filtering anti-pattern where results get discarded after the nearest-neighbor search. Specialized vector databases require separate filtering logic or accept degraded performance.
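The post-filtering trap is easy to see with a toy example. The sketch below uses brute-force cosine distance over a handful of made-up rows in plain Python. It only illustrates why filter order matters and is not how pgvector or pgvectorscale execute a query; all names and data are hypothetical.

```python
import math


def cosine_distance(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1 - dot / (norm_a * norm_b)


def post_filter(rows, query, category, k):
    """Take the k nearest rows first, then discard those in the wrong category."""
    nearest = sorted(rows, key=lambda r: cosine_distance(r["embedding"], query))[:k]
    return [r for r in nearest if r["category"] == category]


def pre_filter(rows, query, category, k):
    """Restrict to the category first, then take the k nearest of what remains."""
    candidates = [r for r in rows if r["category"] == category]
    return sorted(candidates, key=lambda r: cosine_distance(r["embedding"], query))[:k]


# Made-up data: the two rows closest to the query are in the wrong category.
rows = [
    {"id": 1, "category": "DB", "embedding": [1.0, 0.0]},
    {"id": 2, "category": "DB", "embedding": [0.9, 0.1]},
    {"id": 3, "category": "AI", "embedding": [0.8, 0.6]},
    {"id": 4, "category": "AI", "embedding": [0.0, 1.0]},
    {"id": 5, "category": "AI", "embedding": [0.6, 0.8]},
]
query = [1.0, 0.0]

post_ids = [r["id"] for r in post_filter(rows, query, "AI", k=2)]
pre_ids = [r["id"] for r in pre_filter(rows, query, "AI", k=2)]
print("post-filter:", post_ids)
print("pre-filter: ", pre_ids)
```

With `k=2`, post-filtering returns nothing because both nearest neighbors belong to the other category, while pre-filtering returns the two closest matching rows.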

## How to Migrate from Pinecone to PostgreSQL

Supabase’s vec2pg CLI tool migrates Pinecone indexes at 700-1,100 records per second and Qdrant collections at 900-2,500 records per second. For a 10 million vector dataset, that works out to roughly 2.5-4 hours at Pinecone rates and 1-3 hours from Qdrant. The process is straightforward: pass API credentials, a PostgreSQL connection string, and a collection reference. vec2pg handles iteration and progress tracking.
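A quick way to size the migration window is to divide the record count by the quoted throughput range. A minimal sketch, assuming throughput stays constant for the whole run (the helper names are illustrative):

```python
def migration_hours(num_records: int, records_per_sec: float) -> float:
    """Wall-clock hours to move num_records at a steady rate."""
    return num_records / records_per_sec / 3600


def migration_window(num_records: int, slow_rate: float, fast_rate: float) -> tuple[float, float]:
    """(best case, worst case) in hours for a throughput range."""
    return (migration_hours(num_records, fast_rate), migration_hours(num_records, slow_rate))


pinecone = migration_window(10_000_000, slow_rate=700, fast_rate=1100)
qdrant = migration_window(10_000_000, slow_rate=900, fast_rate=2500)

print(f"Pinecone: {pinecone[0]:.1f} to {pinecone[1]:.1f} hours")
print(f"Qdrant:   {qdrant[0]:.1f} to {qdrant[1]:.1f} hours")
```

Network conditions, rate limits, and index builds on the PostgreSQL side are not modeled here, so treat the result as a lower bound when scheduling a cutover.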

The migration strategy should include cold storage. Store source embeddings in object storage such as S3 or Google Cloud Storage, for example as Parquet files, before indexing them into any database. Switching providers then means reloading from storage you control instead of exporting from the old vendor, which avoids the cloud egress fees that can make migrations prohibitively expensive. If no archived copy exists, regenerating vectors from raw data is another way to avoid those transfer costs. Cold storage first, then hydrate into PostgreSQL or alternative databases as the architecture evolves.

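```bash
# vec2pg migration example
# vec2pg is distributed as a Python package.
pip install vec2pg

# Flag names are reproduced as given here; run `vec2pg --help` to confirm the current arguments.
# PINECONE_API_KEY is a placeholder environment variable holding your key.
vec2pg pinecone \
  --api-key "$PINECONE_API_KEY" \
  --index-name production-index \
  --connection-string "postgresql://user:pass@host/db"

# Throughput: 700-1,100 records/sec
# 10M vectors = ~2.5-4 hours migration time
```

## Key Takeaways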

- Vector database consolidation onto PostgreSQL is reshaping AI infrastructure—vectors are becoming a data type, not a category, as traditional databases add native support

- pgvectorscale delivers performance parity or advantages versus specialized databases at moderate scales: 28× lower latency than Pinecone, 11.4× higher QPS than Qdrant at 99% recall on 50 million vectors

- Cost savings are substantial: 75% reduction ($1,500/month vs. $5,000-6,000/month) for self-hosted PostgreSQL handling 100 million vectors compared to managed Pinecone

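- The decision threshold is clear: consolidate onto PostgreSQL for workloads under 10M vectors with hybrid search needs; choose a specialized database for more than 50M vectors, sub-20ms latency requirements, or >1,000 QPS sustained throughput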

- Migration tooling exists with measurable throughput (vec2pg: 700-2,500 rec/sec), and cold storage strategies (S3/Parquet before indexing) prevent expensive cloud egress fees

The default choice for vector workloads is shifting to PostgreSQL consolidation unless specific scale or latency requirements justify specialized infrastructure. Teams evaluating architecture should start with pgvector, measure against thresholds, and migrate to purpose-built databases only when PostgreSQL hits documented limits.