DuckDB launched Quack on May 12, 2026: an HTTP-based client-server protocol that, in benchmarks run on AWS, moved bulk analytical data 32x faster than PostgreSQL. It is the first time DuckDB can run as a server with multiple concurrent writers, which addresses its biggest historical limitation. The protocol was designed from scratch on top of HTTP rather than a custom wire format, and that choice shapes most of what follows.

The numbers support the approach. In AWS benchmarks, Quack transferred 60 million TPC-H rows in 4.94 seconds versus PostgreSQL’s 158 seconds. It also beat Arrow Flight SQL by 3.5x. For developers who’ve been waiting for DuckDB to support team analytics workflows, this is the answer.

Built on HTTP: Familiar Infrastructure, Enterprise-Ready

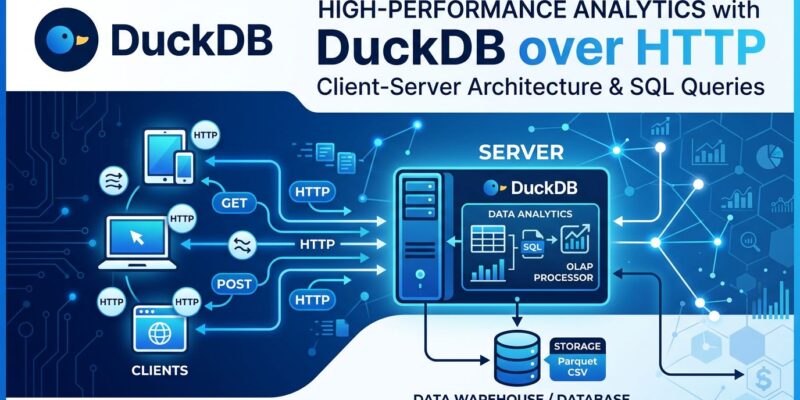

Quack runs on HTTP, not a custom binary protocol. The practical payoff is that the infrastructure tools you already know (nginx, Let’s Encrypt, load balancers, firewalls) work with Quack out of the box.

Consider the alternative. Arrow Flight SQL is built on gRPC, a binary RPC framework that calls for its own tooling and know-how. In contrast, Quack uses port 9494 (a nod to 1994, the year Netscape Navigator launched) and standard application/duckdb MIME types. Your ops team already knows how to proxy, load balance, and secure HTTP endpoints.

The HTTP foundation also enables browser-to-server connections: DuckDB-Wasm running in a browser can connect directly to a DuckDB instance on EC2. A custom binary protocol cannot do this directly. Browser security models understand HTTP; anything else requires workarounds that compromise either security or functionality.

Performance That Validates HTTP

The conventional wisdom says binary protocols are faster than HTTP. Quack’s results challenge that, at least for analytical workloads. The benchmarks were run on AWS m8g.2xlarge instances (8 vCPUs, 32 GB RAM, 0.280 ms latency), and the gaps are large.

For bulk transfers of 60 million TPC-H rows:

- Quack: 4.94 seconds

- Arrow Flight: 17.40 seconds

- PostgreSQL: 158.37 seconds

Quack is 32x faster than PostgreSQL and 3.5x faster than Arrow Flight. The speedup comes from DuckDB’s columnar storage and efficient internal serialization, not from avoiding HTTP overhead. In fact, Quack uses the same serialization primitives as DuckDB’s Write-Ahead Log—proven, battle-tested code.

For concurrent small writes, Quack handles 5,434 transactions per second across eight threads versus PostgreSQL’s 4,320, roughly 26% more. The current ceiling is DuckDB’s concurrent insertion bottleneck rather than the protocol itself, so future engine optimizations could push this higher.
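The ratios quoted in this section follow directly from the raw timings. As a quick sanity check, here is a minimal Python sketch that recomputes them from the figures above; the variable and function names are illustrative only.

```python
# Figures quoted in the article: seconds to transfer 60 million TPC-H rows.
ROWS = 60_000_000
bulk_seconds = {"Quack": 4.94, "Arrow Flight": 17.40, "PostgreSQL": 158.37}

# Small-write throughput, transactions per second across eight threads.
tps = {"Quack": 5434, "PostgreSQL": 4320}


def speedup(baseline: str, candidate: str = "Quack") -> float:
    """How many times faster `candidate` finished than `baseline`."""
    return bulk_seconds[baseline] / bulk_seconds[candidate]


def rows_per_second(system: str) -> float:
    """Average bulk transfer rate for one system."""
    return ROWS / bulk_seconds[system]


write_advantage = tps["Quack"] / tps["PostgreSQL"]

if __name__ == "__main__":
    for name in ("PostgreSQL", "Arrow Flight"):
        print(f"Quack vs {name}: {speedup(name):.1f}x")
    print(f"Quack bulk rate: {rows_per_second('Quack') / 1e6:.1f}M rows/s")
    print(f"Small-write advantage: {write_advantage:.2f}x")
```

The bulk ratios come out at about 32x and 3.5x, matching the headline numbers, and the timing implies roughly 12 million rows per second for Quack. The small-write lead is much narrower, at about 1.26x.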

Getting Started: Five SQL Commands

Setting up Quack takes a handful of SQL commands: three on the server and five on the client, counting the first query. This maintains DuckDB’s “it just works” philosophy while enabling multi-user architecture.

Server setup:

INSTALL quack FROM core_nightly;

LOAD quack;

CALL quack_serve('quack:localhost', token = 'super_secret');

Client connection:

INSTALL quack FROM core_nightly;

LOAD quack;

CREATE SECRET (TYPE quack, TOKEN 'super_secret');

ATTACH 'quack:localhost' AS remote;

FROM remote.hello;

No configuration files. No separate server binaries. No Docker containers required. Just SQL commands. Additionally, for queries that process large datasets remotely, use the remote.query() function to execute server-side:

FROM remote.query('SELECT s FROM hello');

This ships the entire query to the remote server, avoiding unnecessary data transfer for complex operations on large datasets.
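If you script this setup from an application, the endpoint and token usually arrive as variables rather than literals. The following is a minimal sketch, not part of Quack itself, that only builds the SQL strings shown above. The helper names (`sql_literal`, `client_setup`, `server_side_query`) are hypothetical, and it assumes the statements have exactly the shape used in this walkthrough.

```python
def sql_literal(value: str) -> str:
    """Quote a string as a SQL literal, doubling embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def client_setup(endpoint: str, token: str, alias: str = "remote") -> list[str]:
    """Client-side statements from the walkthrough, parameterised.

    `alias` is inserted verbatim, so it must be a trusted identifier.
    """
    return [
        "INSTALL quack FROM core_nightly;",
        "LOAD quack;",
        f"CREATE SECRET (TYPE quack, TOKEN {sql_literal(token)});",
        f"ATTACH {sql_literal(endpoint)} AS {alias};",
    ]


def server_side_query(inner_sql: str, alias: str = "remote") -> str:
    """Wrap a query so the whole statement is shipped to the server."""
    return f"FROM {alias}.query({sql_literal(inner_sql)});"


if __name__ == "__main__":
    for statement in client_setup("quack:localhost", "super_secret"):
        print(statement)
    print(server_side_query("SELECT s FROM hello"))
```

Quoting matters most for the inner query passed to `query()`, because any single quote inside it has to be doubled to survive as a string literal.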

Concurrent Writers Unlock New Applications

Before Quack, DuckDB couldn’t handle multiple processes writing simultaneously. This limitation ruled out entire application categories: real-time monitoring dashboards, collaborative analytics environments, and multi-process data pipelines.

Quack solves this. Multiple processes can now write to the same DuckDB server while dashboards query in real time. Data science teams can run concurrent analyses without file-locking issues, and browser-based tools can connect to production DuckDB servers for live data exploration.

Consider a telemetry collection system. Previously, you’d need separate DuckDB files per collector or accept single-process bottlenecks. With Quack, collectors write concurrently to one server while monitoring dashboards query the same data live. The architecture is simpler and performs better.

Production Security: Sensible Defaults

Quack defaults to secure local development. At startup, it generates a random authentication token and binds to localhost only. For production deployments, use nginx as a reverse proxy with Let’s Encrypt for SSL termination. Don’t expose Quack directly to the internet.
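To make those two defaults concrete, here is a minimal Python sketch of what "random token plus localhost-only binding" looks like in general. It is an illustration built on the standard library, not Quack's implementation, and every function name in it is hypothetical.

```python
import hmac
import ipaddress
import secrets


def generate_token(num_bytes: int = 32) -> str:
    """Create an unguessable, URL-safe token from the OS random source."""
    return secrets.token_urlsafe(num_bytes)


def token_matches(expected: str, presented: str) -> bool:
    """Compare tokens in constant time to avoid leaking timing information."""
    return hmac.compare_digest(expected.encode(), presented.encode())


def is_loopback(host: str) -> bool:
    """True if `host` only accepts connections from the same machine."""
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Not an IP literal; treat unknown hostnames as non-local.
        return False


if __name__ == "__main__":
    token = generate_token()
    print("token length:", len(token))
    print("127.0.0.1 loopback?", is_loopback("127.0.0.1"))
    print("0.0.0.0 loopback?", is_loopback("0.0.0.0"))
```

A check like `is_loopback` is a cheap guard in deployment scripts: if the bind address is anything other than loopback, the server should sit behind the reverse proxy rather than face the network directly.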

Authentication is extensible via user-supplied code—LDAP queries, file-based validation, or SQL macros. The default authorization function approves everything, but you can implement granular access control via callbacks. This matches the DuckDB philosophy: simple defaults that don’t get in your way, production-ready patterns when you need them.
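The callback pattern described here can be sketched in a few lines of Python. This is a conceptual illustration only: `Request`, `Authorizer`, `allow_all`, and `read_only_for` are hypothetical names and do not reflect Quack's actual callback signatures, which this article does not specify.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Request:
    """Hypothetical view of an incoming request: who is asking, and for what."""
    user: str
    statement: str


# An authorizer is any callable that approves or rejects a request.
Authorizer = Callable[[Request], bool]


def allow_all(request: Request) -> bool:
    """Mirrors the permissive default: every request is approved."""
    return True


def read_only_for(readers: set[str]) -> Authorizer:
    """Build an authorizer that limits the given users to read-style statements.

    The check looks only at the leading keyword, so it is deliberately naive:
    a real policy would need to parse the statement properly.
    """
    read_keywords = {"SELECT", "FROM", "DESCRIBE", "SHOW"}

    def authorize(request: Request) -> bool:
        if request.user not in readers:
            return True  # users outside the restricted set are not limited
        words = request.statement.split(None, 1)
        keyword = words[0].upper() if words else ""
        return keyword in read_keywords

    return authorize


if __name__ == "__main__":
    policy = read_only_for({"dashboard"})
    print(policy(Request("dashboard", "FROM hello")))
    print(policy(Request("dashboard", "INSERT INTO hello VALUES ('x')")))
```

Swapping `allow_all` for a stricter policy changes one function reference, which is the appeal of the callback design: the permissive default stays trivial while tighter rules remain possible.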

Key Takeaways

Quack transforms DuckDB from an in-process database into a viable client-server platform without sacrificing simplicity:

- HTTP foundation enables familiar tooling – nginx, load balancers, SSL termination all work out of the box

- Performance validates the approach – 32x faster than PostgreSQL for bulk analytics, 3.5x faster than Arrow Flight

- Concurrent writers unlock new use cases – real-time dashboards, team collaboration, browser-based analytics

- Five-command setup maintains DuckDB simplicity – no complex configuration or infrastructure overhead

- Production-ready with secure defaults – random tokens, localhost binding, extensible authentication

If you’ve been waiting for DuckDB to support your team’s analytics workflow, Quack delivers. It’s available now in DuckDB v1.5.2 via the core_nightly repository.