IBM announced on March 12 the industry’s first published quantum-centric supercomputing blueprint, claiming it will deliver “practical quantum advantage by the end of 2026,” a deadline just eight months away. The architecture integrates quantum processors with classical supercomputers to tackle scientific problems in chemistry and materials science that neither system can solve alone. However, while IBM touts real achievements like a 303-atom protein simulation and 10x faster error correction, industry prediction markets show near-zero expectations for meaningful quantum applications by year’s end. Is this the breakthrough quantum computing has been promising for decades, or another round of moved goalposts?

The Hybrid Approach: Quantum as Co-Processor, Not Replacement

IBM’s blueprint isn’t about standalone quantum computers. It describes quantum-centric supercomputing, in which quantum processors work alongside GPUs and CPUs in hybrid workflows. The architecture coordinates across on-premises systems, research centers, and cloud environments using open tools like Qiskit. This is IBM’s strategic admission that quantum won’t replace classical computing; it will augment it for narrow tasks.

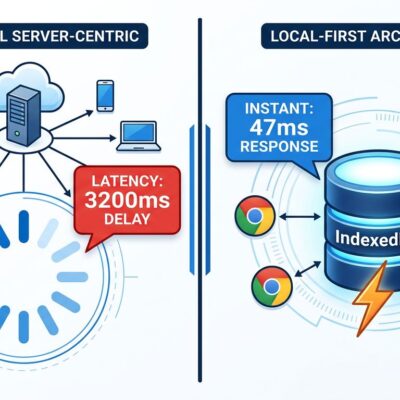

The proof comes from real deployments. RIKEN’s Fugaku supercomputer (152,064 classical nodes) exchanged data in real time with a co-located IBM Quantum Heron processor to simulate iron-sulfur clusters, molecules fundamental to biology and chemistry. Cleveland Clinic simulated a 303-atom tryptophan-cage protein using the same hybrid approach. IBM also says quantum-centric supercomputing was used to verify the creation of the first half-Möbius molecule, a result published in Science.

At the heart of this architecture sits IBM Quantum Nighthawk: a 120-qubit processor with 30% higher circuit complexity than previous systems, capable of handling up to 5,000 two-qubit gates. The co-processor framing is more honest than earlier “quantum will revolutionize everything” hype. Developers should think of quantum as a specialized co-processor for specific scientific workloads, not a general-purpose platform.
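
To make the co-processor idea concrete, here is a minimal sketch of a hybrid loop in which a classical optimizer repeatedly asks a "quantum" routine for an expectation value. Everything in it is an assumption for illustration: `quantum_expectation` is a hypothetical stand-in that simulates one qubit analytically, not a call to IBM hardware or to Qiskit, and the function names, learning rate, and step count are made up.

```python
import math

def quantum_expectation(theta):
    """Hypothetical stand-in for a QPU call.

    Simulates the expectation value <Z> after applying RY(theta) to |0>,
    which is cos(theta). A real workflow would submit a circuit instead.
    """
    return math.cos(theta)

def parameter_shift_gradient(theta):
    """Estimate d<Z>/d(theta) from two extra 'quantum' evaluations."""
    plus = quantum_expectation(theta + math.pi / 2)
    minus = quantum_expectation(theta - math.pi / 2)
    return 0.5 * (plus - minus)

def hybrid_minimize(theta=0.3, lr=0.4, steps=100):
    """Classical gradient descent; every energy evaluation is delegated."""
    for _ in range(steps):
        theta -= lr * parameter_shift_gradient(theta)
    return theta, quantum_expectation(theta)

theta_opt, energy = hybrid_minimize()
print(f"theta = {theta_opt:.4f}, energy = {energy:.6f}")
```

The classical side owns the loop, the parameters, and the update rule; the quantum side only answers narrow questions. In this toy case the loop settles near theta = pi, where the simulated energy reaches its minimum of -1.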

Bold Timeline Claims Meet Industry Skepticism

IBM claims it will achieve practical quantum advantage by the end of 2026 and fault-tolerant quantum computing by 2029. The confidence stems from a breakthrough: the company achieved a 10x speedup in quantum error correction decoding—one year ahead of schedule. Furthermore, by shifting from surface codes to quantum LDPC codes, IBM reduced physical qubit requirements by up to 90%. That’s significant technical progress.
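
A rough back-of-the-envelope calculation shows how a reduction of about 90% can arise. The figures below are assumptions, not numbers from IBM’s announcement: a qLDPC code with parameters [[144, 12, 12]] that uses 144 data qubits plus 144 check qubits, compared against rotated surface-code patches at an illustrative distance of 11. Matching code distance does not by itself guarantee matching logical error rates, so treat this as a sketch of the arithmetic only.

```python
def surface_code_qubits(distance, logical_qubits):
    """Physical qubits for rotated surface-code patches.

    Each patch uses d^2 data qubits plus d^2 - 1 measurement qubits.
    """
    return logical_qubits * (2 * distance**2 - 1)

# Assumed qLDPC comparison point (not from the article):
# a [[144, 12, 12]] code with 144 data + 144 check qubits.
QLDPC_PHYSICAL = 144 + 144
QLDPC_LOGICAL = 12

# Assumed surface-code distance, chosen for illustration.
surface = surface_code_qubits(distance=11, logical_qubits=QLDPC_LOGICAL)
reduction = 1 - QLDPC_PHYSICAL / surface

print(f"surface code: {surface} physical qubits")
print(f"qLDPC code:   {QLDPC_PHYSICAL} physical qubits")
print(f"reduction:    {reduction:.1%}")
```

Under these assumptions the surface code needs 2,892 physical qubits against 288 for the qLDPC code, which is roughly a tenfold saving.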

However, the industry isn’t buying the timeline. Prediction markets show near-unanimous skepticism that quantum will outperform classical systems in cryptography or complex simulation by year’s end. Hacker News discussions echo the doubt: “There isn’t really much practicality for quantum computers right now.” The tension is clear—vendor roadmaps skew optimistic while community consensus remains cautious.

IBM’s roadmap is detailed and milestone-driven: Nighthawk (delivered in 2025), Kookaburra (2026, first modular processor combining logic with qLDPC memory), Cockatoo (2027, multi-module entanglement), and Starling (2029, large-scale fault-tolerant system capable of 100 million quantum gates on 200 logical qubits). Analysts call this “the only realistic path” toward fault-tolerant quantum, noting IBM “appears to have solved the scientific obstacles to error correction.” Nevertheless, solving scientific obstacles doesn’t guarantee commercial viability at scale. IBM’s “quantum advantage by end of 2026” is likely aspirational marketing. In contrast, the 2029 fault tolerance goal is more credible.
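
The gap between Nighthawk’s 5,000 gates and Starling’s 100 million can be put in rough numbers. The sketch below assumes a deliberately simple model that is not from IBM: errors strike each gate independently, and a run counts as a success only if no gate fails. The 50% success target is hypothetical. Real pre-fault-tolerant workflows lean on error mitigation and do not need every run to be error-free, so this only illustrates scale.

```python
import math

def max_error_per_gate(num_gates, target_success):
    """Largest per-gate error p with (1 - p)**num_gates >= target_success.

    Uses expm1 for accuracy when p is very small.
    """
    return -math.expm1(math.log(target_success) / num_gates)

NIGHTHAWK_GATES = 5_000        # two-qubit gates, from the article
STARLING_GATES = 100_000_000   # gates on logical qubits, from the article
TARGET_SUCCESS = 0.5           # hypothetical success target

p_nighthawk = max_error_per_gate(NIGHTHAWK_GATES, TARGET_SUCCESS)
p_starling = max_error_per_gate(STARLING_GATES, TARGET_SUCCESS)

print(f"Nighthawk scale: error per gate <= {p_nighthawk:.2e}")
print(f"Starling scale:  error per gate <= {p_starling:.2e}")
print(f"gate-count ratio: {STARLING_GATES // NIGHTHAWK_GATES:,}x")
```

Under this model the tolerable error per gate falls from about 1.4e-4 at Nighthawk’s gate count to about 6.9e-9 at Starling’s. That is the kind of gap error correction is meant to close.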

What “Practical Quantum Advantage” Actually Means

Practical quantum advantage has evolved far beyond Google’s 2019 “quantum supremacy” claim, which solved a contrived benchmark with no real-world use case. By 2026 standards, quantum advantage must be programmable, scalable, verifiable, robust to classical attack, and economically relevant. That’s a high bar, and no one has cleared it yet.

Early definitions required hundreds of thousands to millions of qubits. However, recent research suggests optimized architectures could achieve advantage with as few as 11,495 atoms and roughly 15 hours of runtime. IBM’s bet: its 120-qubit Nighthawk can deliver advantage in narrow scientific applications—chemistry and materials—by year’s end.

Current results show narrow wins but nothing transformative. D-Wave’s quantum annealing beat classical optimization in early 2026, but only for specialized problems. Similarly, IonQ and Ansys ran a medical device simulation on a 36-qubit system that outperformed classical by 12%—a marginal speedup, not a revolution. The field is making progress, but “practical quantum advantage” remains debated because the goalposts keep shifting.

Google Willow vs IBM Quantum: No Consensus on Strategy

Google’s Willow chip (announced in December 2024) takes a different approach. With 105 qubits, it achieved exponential error reduction as qubits scale, solving a 30-year quantum error correction challenge. Its Quantum Echoes algorithm ran 13,000x faster than a classical supercomputer on molecular simulation, a benchmark that sounds impressive but remains narrow in scope.

IBM’s Nighthawk, with 120 qubits, prioritizes circuit complexity and connectivity. Its square lattice layout with 218 tunable couplers targets workflows requiring 5,000+ two-qubit gates (with a goal of 10,000 by 2027). Google focuses on quality—lower error rates per qubit. In contrast, IBM focuses on quantity—higher circuit depth and complexity.
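
The qubit and coupler counts are consistent with a plain rectangular grid, which a short calculation can check. The 10 x 12 shape below is an assumption chosen because it yields 120 qubits; the article does not state the grid dimensions.

```python
def square_lattice_couplers(rows, cols):
    """Count nearest-neighbor links in a rows x cols rectangular grid.

    Horizontal links: rows * (cols - 1); vertical links: (rows - 1) * cols.
    """
    return rows * (cols - 1) + (rows - 1) * cols

ROWS, COLS = 10, 12  # hypothetical grid shape giving 120 qubits

qubits = ROWS * COLS
couplers = square_lattice_couplers(ROWS, COLS)
print(f"{qubits} qubits, {couplers} couplers")  # prints "120 qubits, 218 couplers"
```

In such a grid each interior qubit has four neighbors, so fewer swap operations are needed to bring distant qubits together than in sparser layouts.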

Both companies are racing to prove quantum isn’t perpetual hype, but neither has demonstrated broad practical advantage. The quantum industry hasn’t converged on a “right” approach yet. It’s still experimental, and the winner remains unclear.

What Developers Should Actually Do

For 99% of developers, quantum computing is irrelevant in 2026. The only realistic near-term applications are quantum chemistry and materials science simulations—fields that require PhD-level expertise in quantum algorithms, not general software development skills. If you’re asking “should I learn Qiskit or Q#?” the answer is probably no.

The exceptions are narrow. If you work in pharmaceutical R&D, chemical engineering, or advanced materials science, exploring partnerships with IBM Quantum Network or AWS Braket makes sense. Current applications are limited to research collaborations like Cleveland Clinic’s protein simulations or RIKEN’s molecular modeling. These aren’t weekend projects—they’re PhD-level efforts with specialized domain expertise.

For everyone else, quantum remains a waiting game. Pre-fault-tolerant systems (2026-2028) are research tools, not production platforms. If IBM delivers its Starling fault-tolerant system in 2029, production applications might become viable in chemistry and materials. However, that’s three years away, and quantum timelines historically slip. Don’t invest time learning quantum programming unless you’re in a narrow scientific field. Instead, focus on classical distributed systems, machine learning, or domain expertise in chemistry if you want to position yourself for a quantum-adjacent role later.

The cost reality also matters. Quantum cloud services (IBM Quantum, AWS Braket) charge hundreds to thousands of dollars per job, and the ROI is unclear for most organizations. Taken together, the skills barrier is high, the use cases are narrow, and the timeline to broader applicability is murky. Quantum computing is advancing, but it’s not ready for general developer adoption.

The Verdict: Measurable Progress, Persistent Hype

Quantum computing in 2026 sits between real technical progress and exaggerated public expectations. IBM’s error correction breakthrough and Google’s Willow error reduction represent genuine advances. Moreover, the shift from pure quantum to quantum-centric supercomputing is a more honest framing—acknowledging quantum’s role as a specialized tool rather than a classical computing replacement.

Nevertheless, the field still lacks a killer application beyond narrow scientific niches. Billions have been invested, yet prediction markets show investors and the public are losing patience with perpetual hype. IBM’s 2029 fault tolerance roadmap is the most concrete in the industry, but watch for end-of-year results to see if “practical quantum advantage” materializes or becomes another moved goalpost.

For developers, the message is clear: quantum is progressing, but temper your expectations. Chemistry and materials applications might see traction in 2027-2029. Everything else is speculative. Don’t bet your career on quantum unless you’re already in computational chemistry or materials science. And if IBM’s “practical advantage by end of 2026” claim doesn’t pan out? It won’t be the first time quantum timelines slipped.