The 2026 LinearB benchmark report analyzed 8.1 million pull requests across 4,800 engineering teams and found something that contradicts the AI productivity hype: coding assistants made developers 30-40% faster at writing code but created a massive review bottleneck that slows teams down overall.

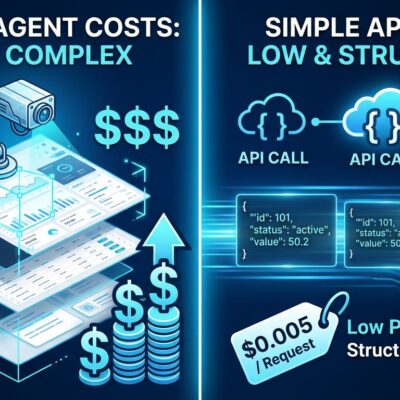

The uncomfortable truth: developers now spend 11.4 hours per week reviewing AI-generated code versus 9.8 hours writing new code. Code review has become more time-consuming than coding itself – a complete reversal from 2024 that exposes the hidden cost vendor demos conveniently ignore.

What 8.1 Million Pull Requests Reveal

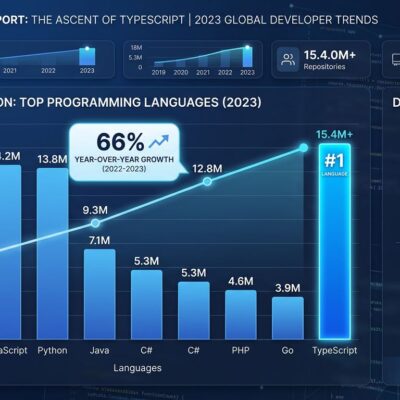

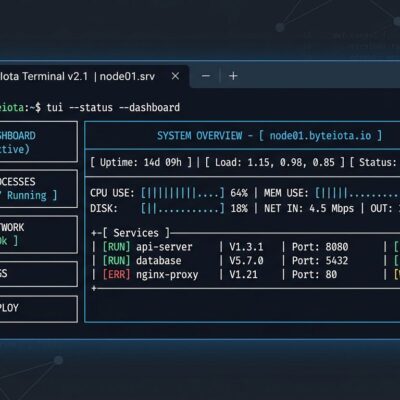

LinearB’s benchmarks analyzed pull requests from 42 countries and found clear patterns. AI tools improved throughput by 30-40%, which sounds impressive until you see what happens next. AI-generated PRs wait 4.6 times longer before review begins. When reviews finally happen, they move twice as fast – not because the code is better, but because issues are more obvious.
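To make the two stages concrete, here is a minimal Python sketch of how a team might separate "time waiting for review" from "time in review" using its own PR timestamps. The field names (`ai`, `opened`, `first_review`, `merged`) and the sample records are hypothetical and chosen for illustration. They are not LinearB's schema or data, and the report's exact metric definitions may differ.

```python
from datetime import datetime
from statistics import median


def hours_between(start, end):
    """Elapsed time between two datetimes, in hours."""
    return (end - start).total_seconds() / 3600


def median_stage_hours(prs):
    """Return (median pickup hours, median review hours) for a list of PR records.

    Pickup = opened -> first review; review = first review -> merged.
    """
    pickup = median([hours_between(pr["opened"], pr["first_review"]) for pr in prs])
    review = median([hours_between(pr["first_review"], pr["merged"]) for pr in prs])
    return pickup, review


# Hypothetical records, invented purely to exercise the functions.
sample_prs = [
    {"ai": True, "opened": datetime(2026, 1, 5, 9), "first_review": datetime(2026, 1, 6, 9),
     "merged": datetime(2026, 1, 6, 11)},
    {"ai": False, "opened": datetime(2026, 1, 5, 9), "first_review": datetime(2026, 1, 5, 15),
     "merged": datetime(2026, 1, 5, 19)},
]

ai_pickup, ai_review = median_stage_hours([pr for pr in sample_prs if pr["ai"]])
human_pickup, human_review = median_stage_hours([pr for pr in sample_prs if not pr["ai"]])

pickup_ratio = ai_pickup / human_pickup   # how much longer AI PRs wait for a reviewer
review_ratio = ai_review / human_review   # how long AI PRs spend in review, relative to human PRs
```

Medians are used here rather than means because a handful of stale PRs can otherwise dominate the result. That is a design choice for this sketch, not something the report specifies.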

The quality gap is stark. Only 32.7% of AI-generated pull requests get accepted compared to 84.4% for human-written code. That’s not a minor difference – it’s a fundamental trust problem that creates bottlenecks throughout the development pipeline.

Industry data shows AI PRs are 2.6 times larger than manual ones and take 5.3 times longer to complete. Teams report AI-assisted code makes up 15-25% of their codebase on average, with top performers hitting 40-60%. But more AI code doesn’t necessarily mean better productivity.

The Review Bottleneck Nobody Talks About

The time allocation shift is revealing. Developers spend 11.4 hours per week reviewing AI-generated code but only 9.8 hours writing new code. That’s a role reversal most teams didn’t plan for. The promise was AI would free developers for higher-level work. The reality is it created a verification job that consumes more time than the original coding work.

Ninety-five percent of developers now spend significant time reviewing, testing, and correcting AI output. This isn’t a minor workflow adjustment. Most engineering organizations aren’t equipped with the review infrastructure to handle it.

The quality issues explain why. Analysis shows AI-generated code has 1.7 times more issues than human code and 2.74 times more security vulnerabilities. That 32.7% acceptance rate isn’t arbitrary skepticism. It’s developers protecting code quality after seeing what happens when AI suggestions go unchecked.

There’s also a perception gap. Developers report feeling 20% faster with AI tools but measure 19% slower when productivity is actually tracked. That’s a 39-point perception gap between feeling productive and being productive. The average time saved is 3.6 hours per week – far below the productivity revolution vendors promised.

The 25-40% Sweet Spot

Research on safe AI productivity thresholds identified an optimal range: teams that keep AI-assisted code between 25% and 40% of their codebase see real productivity improvements of 10-15% while maintaining effective quality gates. Beyond 40%, the returns diminish and quality risks accelerate.
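A team that wants to track where it sits relative to that range could start with something like the sketch below. The function name `ai_share_band` and the use of line counts as the unit of measurement are assumptions for illustration. The article does not say how AI-assisted share is measured, and attributing lines to AI assistance is itself an approximation.

```python
def ai_share_band(ai_lines, total_lines, low=0.25, high=0.40):
    """Classify the AI-assisted share of a codebase against a target range.

    Returns "below", "within", or "above". The bounds default to the
    25-40% range discussed above and are inclusive on both ends.
    """
    if total_lines <= 0:
        raise ValueError("total_lines must be positive")
    if not 0 <= ai_lines <= total_lines:
        raise ValueError("ai_lines must be between 0 and total_lines")

    share = ai_lines / total_lines
    if share < low:
        return "below"
    if share <= high:
        return "within"
    return "above"
```

For example, `ai_share_band(30, 100)` falls in the target range, while `ai_share_band(50, 100)` is past the point where the article says returns diminish.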

GitHub Copilot shows a 46% code completion rate, but developers accept only about 30% of the code it suggests. An acceptance rate above 45% might sound positive, but it often indicates uncritical acceptance rather than tool quality – which means technical debt is being merged into production.

Tool performance varies significantly. Devin’s acceptance rates have been rising since April 2026, while Copilot’s have been slipping since May. The specifics matter less than the pattern: AI coding tools require constant evaluation and measurement.

What Elite Teams Do Differently

The gap between elite and average teams hasn’t closed – it’s widened. DORA metrics for 2026 show elite performers deploy 208 times more frequently than low performers and recover from failures 7,300 times faster. Their change failure rate stays below 0.5%, and mean time to recovery can be as low as seven minutes.

Elite teams don’t reject AI tools. They use them within a measurement framework. They track DORA metrics (deployment frequency, lead time, change failure rate, and MTTR) to ensure speed gains don’t sacrifice stability. They recognize that the fastest teams are also the most stable, which is the opposite of what happens when AI tools are adopted without proper gates.
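As a rough illustration of what tracking those four metrics involves, the following Python sketch computes them from a list of deployment records. The `Deployment` record, its field names, and the `dora_summary` helper are hypothetical. Real teams usually pull this data from their CI/CD and incident tooling, and definitions (for example, what counts as a failure) vary by organization.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                   # when the change was committed
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # did it cause a production failure?
    restored_at: Optional[datetime] = None   # when service was restored, if it failed


def dora_summary(deployments, window_days):
    """Compute the four DORA metrics over a reporting window of `window_days` days."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")

    lead_times = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    recoveries = [
        (d.restored_at - d.deployed_at).total_seconds() / 60
        for d in failures
        if d.restored_at is not None
    ]

    return {
        "deploys_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        # Mean time to recovery; None when no failure has been restored yet.
        "mttr_minutes": sum(recoveries) / len(recoveries) if recoveries else None,
    }


# Hypothetical two-day window with four deployments, one of which failed.
sample = [
    Deployment(datetime(2026, 3, 2, 8), datetime(2026, 3, 2, 10)),
    Deployment(datetime(2026, 3, 2, 9), datetime(2026, 3, 2, 13)),
    Deployment(datetime(2026, 3, 3, 8), datetime(2026, 3, 3, 14)),
    Deployment(datetime(2026, 3, 3, 8), datetime(2026, 3, 3, 16),
               failed=True, restored_at=datetime(2026, 3, 3, 16, 7)),
]
summary = dora_summary(sample, window_days=2)
```

Comparing this summary before and after an AI tool rollout is one way to check whether faster code writing is showing up as faster, stable delivery or only as a longer review queue.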

The SPACE framework adds another dimension: Satisfaction and well-being, Performance, Activity, Communication and collaboration, and Efficiency and flow. Teams using SPACE metrics have twice the engineer retention over three years. Happy developers are 13% more productive, which matters more than any tool optimization.

Elite teams invest in review infrastructure. They use AI-assisted code review tools, automated testing pipelines, and security scanning to handle the increased volume of AI-generated code. They don’t just throw AI at the writing phase and hope reviews scale naturally.

The Hybrid Future

AI won’t replace developers, but it’s changing what development work looks like. The shift from writing to reviewing is already happening. Cycle time has dropped from 11 days in 2020 to under seven days in 2026, driven by AI-assisted review practices and better asynchronous collaboration.

The developer role is evolving toward architecture, quality assurance, and strategic decision-making. The 32.7% acceptance rate shows why human judgment remains critical. AI generates code faster than it generates good code, and that gap requires skilled evaluation.

The takeaway isn’t that AI tools are bad. The data shows they provide real throughput gains when used within the 25-40% sweet spot and backed by proper measurement frameworks. The problem is treating AI as a silver bullet instead of a double-edged sword that requires sophisticated integration.

Teams that succeed with AI measure impact along several dimensions using the DORA and SPACE frameworks, invest in review infrastructure alongside writing tools, maintain quality gates even when they slow things down, and stay within the 25-40% range. They treat AI as one tool in a comprehensive engineering system, not as a replacement for engineering discipline.

The 8.1 million pull requests analyzed by LinearB tell a clear story. AI coding assistants work, but not the way most teams think. Speed gains are real. So are the hidden costs in review time, quality issues, and delivery instability. Understanding both sides of that equation is what separates elite teams from everyone else.