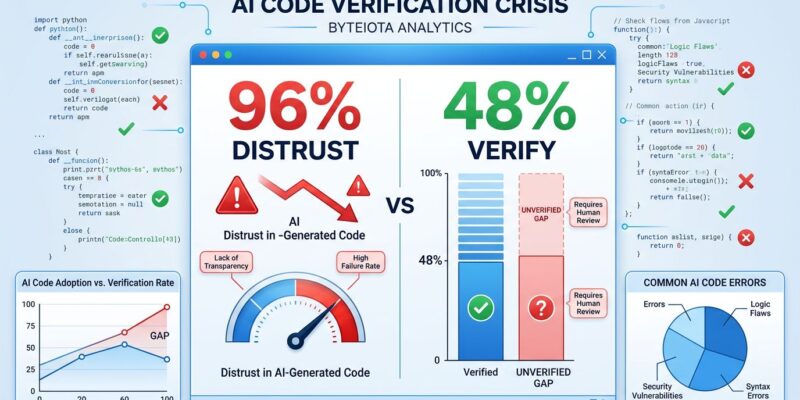

AI-generated code now represents 42% of committed code and is on track to hit 65% by 2027, but a critical AI code verification bottleneck has emerged this year. While 96% of developers don’t fully trust AI-generated code, only 48% say they always verify it before committing, according to the State of Code Developer Survey 2026, released by Sonar in January. The survey of 1,149 professional developers worldwide reveals a dangerous paradox: nearly everyone distrusts AI code, yet half skip the check at least some of the time. The consequence? 43% of AI-generated code changes require manual debugging in production, even after passing QA and staging tests.

The Verification Gap Is Real and Dangerous

The gap between distrust and action isn’t just a theoretical problem. Lightrun’s 2026 State of AI-Powered Engineering Report found that not a single engineering leader surveyed described themselves as “very confident” that AI-generated code would behave correctly when deployed. Yet developers keep committing unverified code, creating what Sonar calls the “verification bottleneck”—the phenomenon where AI generates code faster than humans can confidently review it.

The problem also runs deeper than trust metrics suggest. In Sonar’s survey, 61% of developers agree that AI tools often produce code that “looks correct but isn’t reliable.” That creates a particularly dangerous scenario: code passes visual inspection and may even pass automated tests, but subtle bugs surface only in production. When verification happens at all, it’s often cursory. The flip side of the 48% who always verify is that 52% sometimes skip verification entirely, gambling that the AI got it right.
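
To make “looks correct but isn’t reliable” concrete, here is a hypothetical illustration in Python, invented for this article rather than drawn from either survey. `paginate_ai` stands in for a plausible assistant suggestion and `paginate_fixed` for the reviewed version; both names are made up. The first version passes a happy-path test, and its bug only appears when the item count isn’t a multiple of the page size.

```python
def paginate_ai(items, page_size):
    # Hypothetical AI-suggested version: looks plausible, but the range stop
    # (len(items) - page_size + 1) silently drops a final partial page.
    return [items[i:i + page_size]
            for i in range(0, len(items) - page_size + 1, page_size)]


def paginate_fixed(items, page_size):
    # Reviewed version: stepping all the way to len(items) keeps the last partial page.
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    return [items[i:i + page_size] for i in range(0, len(items), page_size)]


# Happy-path test: 6 items split evenly into pages of 3. Both versions pass.
assert paginate_ai(list(range(6)), 3) == [[0, 1, 2], [3, 4, 5]]
assert paginate_fixed(list(range(6)), 3) == [[0, 1, 2], [3, 4, 5]]

# Edge case a careful reviewer would add: 7 items leaves one item for a last page.
print(paginate_ai(list(range(7)), 3))     # [[0, 1, 2], [3, 4, 5]]  (item 6 is lost)
print(paginate_fixed(list(range(7)), 3))  # [[0, 1, 2], [3, 4, 5], [6]]
```

A reviewer who only runs the evenly divisible case would approve the first version. The missing edge-case test is exactly the kind of check that disappears when verification is cursory.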

The quality impact is measurable. According to the Lightrun report, 88% of teams need two to three redeploy cycles to verify an AI-suggested fix, and 11% need four to six. None of the respondents reported verifying a fix on the first try. As a result, production has become the final testing ground, an expensive and risky place to catch AI mistakes.

Reviewing AI Code Takes More Effort Than Human Code

Here’s the productivity paradox AI vendors don’t talk about: 38% of developers report that reviewing AI-generated code takes more effort than reviewing human-written code. Despite AI’s promise of massive productivity gains, 59% rate their code review effort as “moderate” or “substantial,” and developers now spend an average of 38% of their week, nearly two full working days, on debugging, verification, and troubleshooting.

Sonar’s research captures the problem succinctly: “AI has shifted the center of gravity in software engineering. The hard part is no longer writing code, but validating it.” The verification burden neutralizes the speed advantage of AI code generation. Yes, you can generate code faster. But if that code takes longer to review and 43% of the resulting changes still need manual debugging in production, where is the net productivity gain?

The verification burden isn’t distributed evenly, either. Junior developers, who might benefit most from AI assistance, often lack the experience to spot subtle issues. Senior developers, who could catch those problems, are overwhelmed reviewing AI output instead of architecting systems. The result is a bottleneck that slows teams down instead of speeding them up.

The Volume Problem Is Accelerating

The volume of AI-generated code is growing rapidly. In 2023, AI accounted for just 6% of committed code. By 2026, that had jumped to 42%, a seven-fold increase in three years, and developers surveyed predict it will hit 65% by 2027. Meanwhile, 72% of developers who’ve tried AI coding tools now use them daily, and adoption spans all project types: 88% for prototypes, 83% for internal production systems, 73% for customer-facing applications, and even 58% for mission-critical services.
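
The growth figures can be checked with a few lines of arithmetic, using only the three percentages cited above (6% in 2023, 42% in 2026, and the 65% forecast for 2027). This sketch computes the compound annual rate behind the seven-fold jump, the relative rate the forecast implies, and the same growth in absolute percentage points; the variable names are illustrative.

```python
# Share of committed code that is AI-generated, as cited in the article.
share_2023 = 0.06
share_2026 = 0.42
forecast_2027 = 0.65

# Relative growth: the seven-fold jump and the compound annual rate behind it.
growth_factor = share_2026 / share_2023
annual_rate = growth_factor ** (1 / 3) - 1
forecast_rate = forecast_2027 / share_2026 - 1

# Absolute growth, in percentage points.
points_per_year_2023_2026 = (share_2026 - share_2023) / 3 * 100
points_2026_2027 = (forecast_2027 - share_2026) * 100

print(f"2023 -> 2026: {growth_factor:.1f}x, about {annual_rate:.0%} per year")
print(f"2026 -> 2027 forecast: about {forecast_rate:.0%} relative growth")
print(f"absolute gain: {points_per_year_2023_2026:.0f} points/year, then {points_2026_2027:.0f} points")
```

Measured in percentage points, the forecast implies acceleration (from about 12 points a year to 23), even though relative growth falls from roughly 91% a year to about 55%. A share capped at 100% cannot keep compounding at the earlier pace.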

This isn’t a temporary spike that will self-correct. Instead, the volume of AI-generated code is accelerating faster than verification practices can adapt. By 2027, nearly two-thirds of committed code will be AI-generated—if current trends hold—while the verification gap persists. Consequently, teams are committing AI code to production systems without adequate review infrastructure, hoping quality tools will catch up. They haven’t.

The Tools and Practices Haven’t Caught Up

The infrastructure to safely validate and monitor AI-generated code in production has not kept pace with AI adoption. Companies rushed to deploy AI coding assistants such as Copilot, Cursor, and Claude Code, but the ecosystem of verification, testing, and monitoring tools is still playing catch-up. Lightrun’s survey of 200 senior site-reliability and DevOps leaders underscores the point: not one respondent could verify an AI fix in a single redeploy cycle.

Some verification tools exist. SonarQube users, for example, report 44% fewer outages caused by AI-generated code, along with substantially better outcomes on code quality, rework costs, and defects. Adoption of such tools remains limited, however. Most teams rely on manual code review, the same process used for human-written code, to catch AI mistakes. That doesn’t scale when AI generates 42% of committed code and rising.

The lack of verification tooling creates a predictable pattern: developers generate code with AI, run basic tests, commit it, deploy it, and discover issues in production. Rinse and repeat across two to six redeploy cycles. Therefore, production becomes the de facto testing environment because pre-deployment verification is inadequate. This isn’t sustainable at 42% AI code volume, and it will be catastrophic at 65%.
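
One way to move verification ahead of deployment is an automated merge gate. The sketch below is hypothetical: the `Change` fields, the one-approval threshold, and the practice of flagging AI-assisted changes are assumptions for illustration, not features of SonarQube, Lightrun, or any assistant named above. It blocks any change that fails static analysis, plus AI-assisted changes that arrive without new tests or a human approval.

```python
from dataclasses import dataclass


@dataclass
class Change:
    # Hypothetical metadata a team might record for each pull request.
    ai_assisted: bool
    tests_added: int
    human_approvals: int
    static_analysis_passed: bool


def verification_gate(change: Change, min_approvals: int = 1) -> list[str]:
    """Return the reasons to block a merge; an empty list means it may proceed."""
    reasons = []
    if not change.static_analysis_passed:
        reasons.append("static analysis failed")
    if change.ai_assisted:
        # Stricter rules for AI-assisted changes (an assumed team policy).
        if change.tests_added == 0:
            reasons.append("AI-assisted change adds no tests")
        if change.human_approvals < min_approvals:
            reasons.append("AI-assisted change lacks human review")
    return reasons


if __name__ == "__main__":
    risky = Change(ai_assisted=True, tests_added=0, human_approvals=0,
                   static_analysis_passed=True)
    reviewed = Change(ai_assisted=True, tests_added=3, human_approvals=1,
                      static_analysis_passed=True)
    print(verification_gate(risky))     # two reasons: no tests, no human review
    print(verification_gate(reviewed))  # [] means the change may merge
```

A gate like this does not prove AI code correct. It only guarantees that someone wrote tests and looked at the change before it reaches production, which is the step the generate-commit-deploy loop skips.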

Verification Is Now the Most Valuable Developer Skill

The skill premium in software development has flipped. Asked to rank the most important skills in the AI era, 47% of developers put “reviewing and validating AI-generated code for quality and security” at number one. Not coding. Not architecture. Verification. In developers’ own estimation, the ability to spot what AI got wrong now outranks the ability to write code from scratch.

This represents a fundamental shift in what makes developers valuable to organizations. Routine implementation tasks, the kind AI handles well, are being commoditized, while the high-value skills are now system design, security analysis, code review, stakeholder communication, and prompt engineering. As one analysis put it, “The ability to articulate technical requirements in a way that produces optimal AI output is the skill that separates a 2x developer from a 10x developer in 2026.”

For teams, this means rethinking training and hiring. Verification expertise matters more than coding speed. Security analysis matters more than syntax knowledge. Moreover, the developers who thrive in an AI-assisted world won’t be the fastest at generating code—they’ll be the best at validating it, catching edge cases, and making architectural decisions AI can’t handle. Companies that don’t invest in upskilling their teams around AI code verification will find the verification bottleneck worsening, not improving.

The AI code verification bottleneck is real, it’s measurable, and it’s not going away. AI coding adoption has outpaced the development of verification practices, creating a dangerous gap between what we trust and what we check. The productivity narrative around AI coding is incomplete at best, misleading at worst. Until verification tools and practices catch up—or until AI code quality improves dramatically—the bottleneck will persist, and production will keep serving as the final testing ground for AI mistakes.