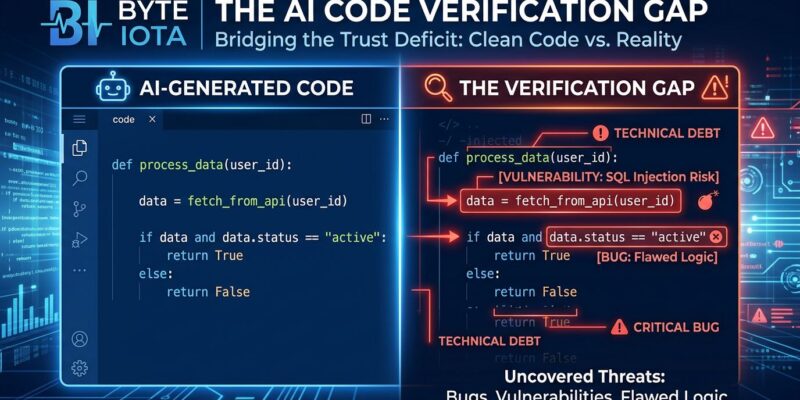

SonarSource’s 2026 State of Code survey just confirmed what developers won’t admit: 96% don’t trust AI-generated code, yet only 48% actually verify it before committing. This 48-point “verification gap” is creating a technical debt crisis as AI now generates 42% of all committed code—and developers expect that number to hit 65% by 2027. With 72% of developers using AI tools daily, the industry faces a mounting question: Why are we shipping code we don’t trust?

The Trust Paradox Behind the Numbers

SonarSource surveyed over 1,100 developers globally and released its findings in January 2026. The results expose a dangerous disconnect: nearly every developer questions AI code quality, but fewer than half take action. The gap isn’t technical; it’s behavioral.

Time pressure wins. AI-generated code looks professional: proper formatting, clean syntax, standard patterns. That visual polish masks functional problems. When deadlines loom and code passes a glance test, skepticism gives way to “ship it.” Amazon CTO Werner Vogels captured the problem at AWS re:Invent 2025: “When you write code yourself, comprehension comes with creation. When the machine writes it, you rebuild that comprehension during review.”

Under deadline pressure, that rebuilding often doesn’t happen. The verification gap widens every sprint.

The Great Toil Shift

Here’s the productivity paradox everyone misses: AI speeds up code generation but doesn’t accelerate shipping. Developers spend 23-25% of their time on toil tasks—that percentage stays constant whether they use AI daily or never. The toil doesn’t disappear. It migrates.

SonarSource found that 88% of developers report at least one negative impact from AI on technical debt. More than half (53%) say AI creates code that “looks correct but is unreliable.” The survey revealed what SonarSource calls “the great toil shift”: AI isn’t eliminating the frustrating, repetitive work that hinders productivity; it’s simply changing its shape.

Developers who use AI infrequently still spend much of their toil debugging poorly documented legacy systems. Heavy AI users trade that burden for new toil: managing AI-generated technical debt and rewriting code that passed initial review but fails in production. The kicker? 38% say reviewing AI code requires more effort than reviewing human code. Only 27% say it’s easier. That’s not a productivity gain. It’s a bottleneck relocation.

Verification Debt Compounds

Werner Vogels coined “verification debt” to describe this new category of technical debt. Developers write less code because generation is instant, but review more code because understanding takes time. The gap between AI’s speed and human comprehension creates unverified code that reaches production.

The survey data confirms Vogels’ thesis: 95% of developers spend effort reviewing, testing, and correcting AI output. Nearly 60% rate that effort as moderate or substantial. When 38% say AI code is harder to audit than human code, verification becomes the constraint. Code generation scales infinitely. Code comprehension doesn’t.

The Real-World Damage

This isn’t academic. AI-generated code contains about 1.7 times as many issues as human-written code: 10.83 per pull request versus 6.45. Organizations see technical debt increase 30-41% after adopting AI tools. Developers report feeling faster, but measurements show they’re 19% slower on end-to-end tasks.

Forrester predicts 75% of tech decision-makers will face moderate-to-severe technical debt by 2026. Gartner warns that prompt-to-app approaches will increase software defects by 2,500% by 2028. The financial costs are mounting: an estimated 8,000+ startups that built production applications with AI now need full or partial rebuilds, costing $50,000 to $500,000 each. That’s $400 million to $4 billion in cleanup costs.
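The cleanup range follows directly from the quoted figures. Here is a minimal sketch of the arithmetic, assuming exactly 8,000 startups (the source says “8,000+”, so both totals are lower bounds); the variable names are illustrative only.

```python
# Figures quoted in the paragraph above. The startup count is a floor ("8,000+"),
# so both totals should be read as lower bounds.
startups = 8_000
cost_low, cost_high = 50_000, 500_000  # rebuild cost per startup, in USD

total_low = startups * cost_low
total_high = startups * cost_high

print(f"${total_low / 1e6:,.0f} million to ${total_high / 1e9:,.0f} billion")
# prints "$400 million to $4 billion"
```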

Ox Security’s 2025 analysis identified ten anti-patterns present in 80-100% of AI-generated code. Incomplete error handling, weak concurrency management, inconsistent architecture, and security vulnerabilities top the list. By year two, unmanaged AI code drives maintenance costs to four times traditional levels as technical debt compounds.

What Actually Works

The answer isn’t less AI—it’s systematic verification. Organizations with verification frameworks see measurably better outcomes. SonarSource’s data shows that 70% of developers already use static code analysis tools, and SonarQube users report stronger results than non-users across code quality, technical debt management, rework costs, and vulnerability counts.

The recommended approach? “Vibe, then verify.” Let developers experiment boldly with AI tools, but enforce rigorous verification. That means pre-commit quality gates, automated security scanning, architectural compliance checks, and measurable verification coverage. Treat code verification like cloud cost management: measure it, track it, create accountability.
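What “measurable verification coverage” could look like in practice is sketched below. This is an illustration under stated assumptions, not SonarSource’s method or any tool’s API: the `Commit` record, the `verified` flag, and the 90% threshold are all hypothetical, and a real pipeline would populate them from its own review, test, and scan results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    """One commit as a hypothetical verification tracker might record it."""
    sha: str
    ai_assisted: bool
    verified: bool  # assumed to mean: reviewed, tested, and scanned before merge


def verification_coverage(commits: list[Commit]) -> float:
    """Share of AI-assisted commits that were verified (1.0 if there are none)."""
    ai_commits = [c for c in commits if c.ai_assisted]
    if not ai_commits:
        return 1.0
    return sum(c.verified for c in ai_commits) / len(ai_commits)


def gate_passes(commits: list[Commit], threshold: float = 0.9) -> bool:
    """Quality gate: pass only when coverage meets the (assumed) threshold."""
    return verification_coverage(commits) >= threshold


# Made-up history: three AI-assisted commits, two of them verified.
history = [
    Commit("a1", ai_assisted=True, verified=True),
    Commit("b2", ai_assisted=True, verified=False),
    Commit("c3", ai_assisted=False, verified=False),
    Commit("d4", ai_assisted=True, verified=True),
]

coverage = verification_coverage(history)
print(f"coverage: {coverage:.0%}, gate passes: {gate_passes(history)}")
```

Tracking a single number like this per team or per repository is what makes the cloud-cost analogy workable: it can be charted over time, and a gate can block merges when it drops.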

SonarSource put it plainly: “The risk isn’t obvious bugs—it’s plausible-looking code with concealed vulnerabilities that create false security confidence.” The verification gap exists because AI code looks correct. That appearance is the danger.

Speed Without Verification Is Just Debt

The survey reveals a broader truth: 93% of developers cite measurable benefits from AI tools even as 88% report negative impacts. The technology isn’t failing; the verification process is. AI generates 42% of committed code today, and developers expect that share to hit 65% by 2027. That volume makes systematic verification non-negotiable.

Developers know this. The 96% who don’t trust AI code aren’t wrong. The 52% who skip verification aren’t lazy. They’re operating in systems that reward speed over scrutiny. The verification gap won’t close with better AI; it’ll close with better processes. Every organization using AI coding tools needs verification frameworks that match the scale of code generation. Otherwise, that 48-point gap becomes a multi-billion-dollar crater.