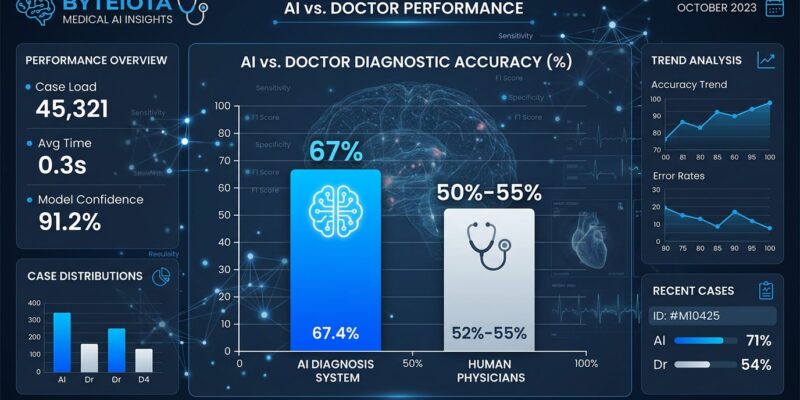

A Harvard study published this week shows OpenAI’s o1 model achieved 67% diagnostic accuracy in emergency room triage, beating two attending physicians who scored 50% and 55%. This is the first real-world evidence that AI can outperform doctors at diagnosis using actual patient cases from Beth Israel’s ER—and the gap isn’t small.

The results surprised researchers. “The performance of AI compared with human experts on these cases shocked many people,” said Prof. Arjun Manrai of Harvard Medical School. Across all six experiments in the study, AI came out ahead. The biggest gap appeared in management reasoning: deciding which tests to order, which antibiotics to prescribe, and how to discuss end-of-life care. AI scored 89% while doctors using Google and conventional resources managed just 34%. That’s a 55-percentage-point gap on clinical decisions that directly affect patient outcomes.

The Catch: Text Only, No Images or Physical Exams

Before we hand over stethoscopes to GPUs, there’s a critical limitation. The study was text-only. AI reviewed written patient histories, symptoms, vitals, and test results—but had zero access to X-rays, CT scans, physical examinations, or patient interactions. It was a retrospective review of existing cases, not real-time ER diagnosis with a scared patient bleeding on a gurney.

This explains both the AI advantage and its limits. AI excels at pattern recognition from structured text data, cross-referencing symptoms against millions of documented cases instantly. However, emergency medicine requires more than text parsing. A rash, a patient’s facial expression, or an abnormal heart sound can change everything. The study authors acknowledged AI “underperforms on most medical imaging benchmarks” and cannot assess the non-verbal cues doctors rely on constantly.

Harvard researcher Arya Rao noted that “model reasoning” isn’t the same as “clinical reasoning”—these models optimize for sequential thought, not the diagnostic training methodology taught in medical schools. The question isn’t whether AI can replace doctors (it can’t, yet). It’s whether AI can assist with the narrow but critical task of text-based triage and treatment planning (it can, apparently better than most doctors).

Who’s Liable When AI Gets It Wrong?

Here’s the uncomfortable question no one has answered: when AI diagnoses incorrectly and a patient dies, who’s responsible? The current legal framework is, according to medical liability experts, “inadequate and requires urgent intervention.” Doctors can’t blindly follow AI recommendations—that’s negligence. But if AI demonstrates superior diagnostic accuracy and a doctor ignores it, is that also negligence? We’re in a liability vacuum.

Over 25 states have introduced more than 35 bills regulating healthcare AI since early 2025, but there’s no consensus framework. At the federal level, the proposed “AI LEAD Act” from Senators Hawley and Durbin would classify AI systems as products, letting patients sue developers for harm. Meanwhile, malpractice insurers are watching nervously, taking what they call a “cautious but flexible” approach, which translates to: we don’t know either.

The data says AI is better at text-based diagnosis than the physicians it was tested against. The law says doctors are responsible for all clinical decisions. Something has to give, and soon.

FDA Clearing the Regulatory Path

While lawyers figure out liability, the FDA is accelerating AI deployment. Commissioner Martin Makary announced in January 2026 that the FDA is developing a “new regulatory framework for AI” designed to move “at Silicon Valley speed.” The agency has also softened oversight of clinical decision support software: AI diagnostic tools can now avoid device classification entirely if a clinician can review the basis for the output.

This is the FDA saying “we’re not standing in the way.” The emphasis has shifted from premarket approval to post-market monitoring. Translation: get AI tools into hospitals faster, watch for problems, adjust as needed. It’s a calculated bet that the benefits (fewer missed diagnoses, faster triage, better management decisions) outweigh the risks of premature deployment.

Given this Harvard study’s results—67% vs 50% accuracy, 89% vs 34% on treatment decisions—that bet looks increasingly rational. Every percentage point in diagnostic accuracy translates to lives saved and harm prevented.

What Happens Next

The study authors were clear: “There’s an urgent need for prospective trials to evaluate these technologies in real-world patient care settings.” This wasn’t a call for celebration; it was a warning. Results from a retrospective case review don’t equal real-world performance. AI needs testing in actual ER workflows with real-time decisions and all the chaos, time pressure, and incomplete information that entails.

The likely future isn’t AI replacing doctors. It’s AI as a mandatory second opinion tool embedded in hospital workflows. Doctor makes initial diagnosis, AI provides its assessment, discrepancies get flagged for review. The doctor still decides, but has access to a diagnostic assistant that demonstrably outperforms human pattern recognition on text-based cases.
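To make that second-opinion loop concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `CaseReview` record, the `flag_discrepancies` helper, and the naive string comparison are illustrative assumptions, not anything described in the study or used by any hospital system. A real deployment would need proper clinical terminology matching and would still leave the final call to the doctor.

```python
from dataclasses import dataclass


def _normalize(label: str) -> str:
    # Hypothetical normalization: lowercase and collapse whitespace only.
    # Real systems would map both labels to a shared clinical vocabulary.
    return " ".join(label.lower().split())


@dataclass
class CaseReview:
    """One case with the doctor's diagnosis and the AI's assessment (illustrative)."""
    case_id: str
    doctor_diagnosis: str
    ai_diagnosis: str

    @property
    def needs_review(self) -> bool:
        # A discrepancy is any difference after normalization.
        return _normalize(self.doctor_diagnosis) != _normalize(self.ai_diagnosis)


def flag_discrepancies(reviews):
    """Return the IDs of cases where the doctor and the AI disagree."""
    return [r.case_id for r in reviews if r.needs_review]


if __name__ == "__main__":
    reviews = [
        CaseReview("case-1", "Pulmonary embolism", "pulmonary  embolism"),
        CaseReview("case-2", "Pneumonia", "Pulmonary embolism"),
    ]
    # Only the disagreeing case is surfaced for a human to re-examine.
    print(flag_discrepancies(reviews))
```

The sketch only surfaces disagreements; it never overrides the doctor, which matches the article's point that the clinician still decides.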

Integration with electronic health record systems like Epic and Cerner remains a major barrier. Automation bias, the tendency for doctors to unconsciously defer to AI even when it’s wrong, poses another risk. Early research suggests clinicians lean on AI outputs rather than challenge them. We’re replacing one set of problems (missed diagnoses, cognitive fatigue, variability between providers) with another (over-reliance, edge case failures, liability confusion).

But the direction is clear. AI has proven it can beat doctors at diagnosis in controlled settings. The question has shifted from “can this work?” to “how do we deploy this safely?” The next 12 to 24 months will determine whether we get it right.