A new industry report puts a number on something developers have been sensing for months: AI coding tools are generating an enormous amount of invisible work that never shows up in the metrics used to justify them. The Harness State of Engineering Excellence 2026, published May 13 and based on surveys of 700 engineering practitioners across five countries, found that 31% of developer time is now consumed by work that doesn’t register on standard productivity dashboards — reviewing AI-generated code, fixing AI-introduced bugs, and context-switching between tools. That’s nearly a third of the working day absorbed by overhead that most engineering organizations aren’t tracking.

The Feedback Loop Is Broken

Here’s the part that should make every engineering manager uncomfortable. The same report found that 89% of engineering leaders say AI tools have improved developer productivity. That sounds like a success story — until you read the next finding: 94% of those same leaders admit their current metrics are missing critical factors like tech debt accumulation, validation time, and developer burnout.

Those two findings cannot both be taken at face value. If the metrics miss tech debt, validation time, and burnout, they cannot fully support the claim that productivity has improved. What the data actually describes is a broken feedback loop: leaders are confident in a measurement system they simultaneously know is incomplete. They’re declaring victory in a game they’ve stopped scoring correctly.

Code Review Has Become the Real Bottleneck

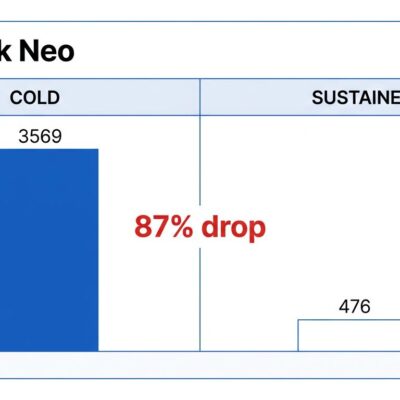

The invisible work breaks down into a few categories, but code review dominates. In the Harness survey, 81% of organizations reported that developers spend more time in code review since adopting AI tools, not less. Of those organizations, 28% say review time has increased by more than 30%.

The mechanics aren’t surprising once you think about it. AI tools dramatically accelerate code generation. But AI-written code surfaces 1.7x more issues than human-written code. So you get a higher volume of code that requires more scrutiny per line. The bottleneck hasn’t been eliminated; it has moved downstream, from writing code to reviewing it, and gotten more expensive. Code churn is projected to double in 2026, and delivery stability has already dropped 7.2% despite faster output metrics.
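The compounding effect is easier to see as arithmetic. The sketch below is a toy model, not anything taken from the Harness report: it assumes review effort scales with the number of issues reviewers have to catch, meaning lines of code multiplied by issues per line. The 1.7x issue multiplier is the figure cited above; the function name `relative_review_load` and the code-volume multipliers are hypothetical, chosen only to show the shape of the curve.

```python
def relative_review_load(volume_multiplier: float,
                         issue_rate_multiplier: float = 1.7) -> float:
    """Return review load relative to a pre-AI baseline of 1.0.

    Simplifying assumption: review effort is proportional to the number of
    issues to catch, i.e. (lines of code) x (issues per line).
    """
    if volume_multiplier < 0 or issue_rate_multiplier < 0:
        raise ValueError("multipliers must be non-negative")
    return volume_multiplier * issue_rate_multiplier


if __name__ == "__main__":
    # Hypothetical code-volume multipliers; real values vary by team.
    for volume in (1.0, 1.5, 2.0):
        load = relative_review_load(volume)
        print(f"{volume:.1f}x code volume -> {load:.2f}x review load")
```

Under these assumptions, a team that merely holds code volume constant still faces about 1.7x the review load, and a team that doubles its output faces roughly 3.4x. Real review effort is unlikely to be this linear, but the direction matches what the survey respondents describe.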

“Faster code generation” and “faster delivery” are not the same thing. Engineering teams are discovering this the hard way.

The Measurement Frameworks Were Built for a Different Era

DORA metrics — the industry’s go-to for measuring DevOps performance — cannot tell you whether a deployment frequency improvement came from AI tooling or a sustainable process change. The framework wasn’t designed to attribute outcomes to AI assistance. Neither was SPACE. Both predate AI coding tools operating at this scale.

AI now writes an estimated 41% of all code in organizations with high AI adoption. DORA metrics applied to that environment will surface whatever the AI’s output looks like — faster commits, more frequent deploys — without capturing the review overhead, the quality regression, or the burnout accumulating in the people doing the verification work.

Agentic Fatigue: The New Kind of Burnout

There’s a specific type of cognitive load emerging from agentic AI workflows that researchers are calling “agentic fatigue” — the overhead of constantly evaluating whether to trust AI output. Every AI-generated function requires a judgment call: Is this correct? Is this secure? Is this what I actually asked for? At scale, those micro-decisions accumulate into something that looks like exhaustion — and hits hardest the developers who adopted AI most aggressively.

The Pragmatic Engineer’s 2026 survey found 75% of engineers now use AI for at least half their work. Many report that organizations treat every minute saved as a minute available for more work. The result isn’t less burnout — it’s a different kind of burnout, happening to the same people.

What Actually Needs to Change

The Harness report frames this as a measurement crisis, not an AI crisis. The tools aren’t necessarily the problem — the accounting is. Engineering organizations that want an accurate picture of what AI is actually costing them need to start tracking what current frameworks ignore:

- Review time for AI-generated code as a separate line item in sprint planning

- Code churn rates — not just deployment frequency

- AI-attributed tech debt, tracked separately from human-written tech debt

- Developer-reported cognitive load, not just self-reported satisfaction scores
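As a rough illustration of the first two items, here is a minimal sketch of how a team might split review time and churn by whether a change was AI-assisted. Everything in it is an assumption rather than something the report prescribes: the `ChangeRecord` fields, the idea that changes carry an `ai_assisted` label (for example from a pull request checkbox), and the definition of churn as added lines that are rewritten or deleted within whatever window the team chooses.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ChangeRecord:
    """One merged change. All fields are hypothetical, for illustration."""
    ai_assisted: bool      # assumed label, e.g. set by the author on the PR
    review_minutes: float  # total reviewer time spent on this change
    lines_added: int
    lines_churned: int     # added lines later rewritten or deleted in the window


def summarize(records: Iterable[ChangeRecord]) -> dict:
    """Aggregate review time and churn separately for AI-assisted and human changes."""
    records = list(records)
    summary = {}
    for label, flag in (("ai_assisted", True), ("human", False)):
        group = [r for r in records if r.ai_assisted == flag]
        added = sum(r.lines_added for r in group)
        review = sum(r.review_minutes for r in group)
        churned = sum(r.lines_churned for r in group)
        per_100: Optional[float] = 100 * review / added if added else None
        churn_rate: Optional[float] = churned / added if added else None
        summary[label] = {
            "review_minutes": review,
            "review_minutes_per_100_lines": per_100,
            "churn_rate": churn_rate,
        }
    return summary


if __name__ == "__main__":
    # Made-up sample data; not drawn from any survey.
    sample = [
        ChangeRecord(ai_assisted=True, review_minutes=60, lines_added=400, lines_churned=100),
        ChangeRecord(ai_assisted=True, review_minutes=30, lines_added=200, lines_churned=20),
        ChangeRecord(ai_assisted=False, review_minutes=20, lines_added=200, lines_churned=10),
    ]
    for label, stats in summarize(sample).items():
        print(label, stats)
```

Normalizing review time per 100 lines matters here: raw totals will rise simply because more code is being produced, while the per-line figure shows whether each line is actually costing more to verify. The labeling step is the hard part in practice, and this sketch assumes it away.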

The 31% invisible work figure isn’t an argument against AI coding tools. It’s an argument for measuring them honestly. Most teams are operating with a productivity narrative built on incomplete data, and standard DORA metrics alone won’t close the gap. The cost is real. It’s just not in your dashboard yet.