Your AI agent failed in production. You know it made several LLM calls, invoked a few tools, and handed off to a subagent, and somewhere in that chain something went wrong. Your monitoring dashboard shows a 500 error and a latency spike. It tells you nothing about the decision that caused it. This is not an edge case. It is the default state of AI agent debugging right now, and it has been that way since developers started shipping agents to production.

Honeycomb shipped a direct answer to this problem on May 12. The company announced three new agent observability capabilities: Agent Timeline, a rebuilt Canvas Agent with auto-investigations, and Canvas Skills. Together, they represent the most substantive attempt yet to apply real observability principles to AI agent workflows — as opposed to the single-turn LLM call logging that most tools still pretend is enough.

Agent Timeline: The Thing That Was Actually Missing

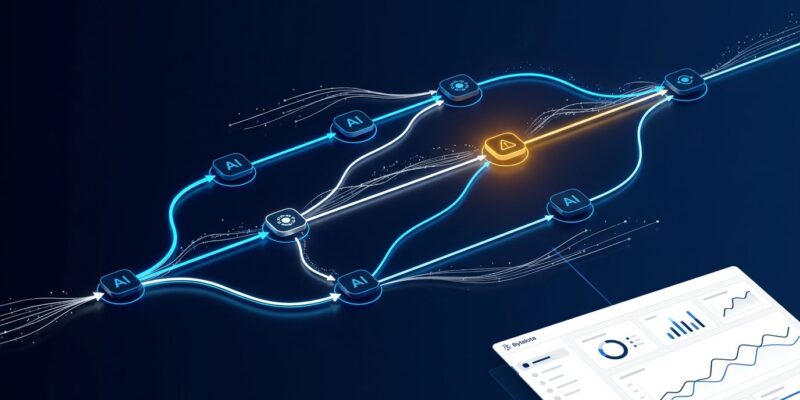

Agent Timeline does for multi-agent workflows what distributed tracing did for microservices: it stitches together a coherent picture from what would otherwise be a scattered mess of individual spans. Every LLM call, tool invocation, agent handoff, and downstream system impact gets rendered as a single view — in real time. Engineers can trace what an agent did, reconstruct the full decision path, and understand failures without switching tools or manually cross-referencing logs.

The capability is currently in Early Access, with general availability expected in June. The more important detail is what it does not require: no proprietary SDK, no framework lock-in, and no re-instrumentation if your agents already emit OpenTelemetry spans. Agent Timeline is built on OpenTelemetry’s gen_ai.* semantic conventions, so it should work with any framework that emits those spans, including LangChain, AutoGen, CrewAI, and MCP-based workflows. The OTel GenAI semantic conventions are still experimental in 2026, but major vendors, including Datadog, Elastic, New Relic, and Honeycomb, have already committed to them. That convergence matters more than the “experimental” label on the spec.

One practical note on instrumentation: each tool span should record the tool name, arguments, raw output, duration, retry count, and error state. That level of detail is what makes decision-path reconstruction possible. Without it, you have timestamps and status codes — which is not observability. It is logging wearing a costume.
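As a rough illustration of that level of detail, the sketch below wraps a tool call and returns a span-like dictionary. It uses only the Python standard library rather than a real OpenTelemetry SDK. The attribute names `gen_ai.operation.name`, `gen_ai.tool.name`, and `error.type` come from the OTel conventions; `gen_ai.tool.call.arguments` and `gen_ai.tool.call.result` reflect my reading of the experimental spec and should be checked against the current version; `app.tool.retry_count`, `app.tool.duration_ms`, and the function `run_tool_with_span` are hypothetical names invented for this example.

```python
import json
import time
from typing import Any, Callable


def run_tool_with_span(name: str, tool: Callable[..., Any], arguments: dict,
                       max_attempts: int = 3) -> dict:
    """Call tool(**arguments) with retries and return a span-like attribute dict."""
    start = time.perf_counter()
    attrs = {
        "gen_ai.operation.name": "execute_tool",
        "gen_ai.tool.name": name,
        # Assumed attribute name; the GenAI conventions are still experimental.
        "gen_ai.tool.call.arguments": json.dumps(arguments, sort_keys=True),
    }
    attempts = 0
    while attempts < max_attempts:
        attempts += 1
        try:
            output = tool(**arguments)
        except Exception as exc:
            # Keep the most recent error; cleared below if a retry succeeds.
            attrs["error.type"] = type(exc).__name__
        else:
            # Assumed attribute name for the raw tool output.
            attrs["gen_ai.tool.call.result"] = json.dumps(output, default=str)
            attrs.pop("error.type", None)
            break
    # Hypothetical application-level attributes, not part of any standard.
    attrs["app.tool.retry_count"] = attempts - 1
    attrs["app.tool.duration_ms"] = (time.perf_counter() - start) * 1000.0
    return attrs
```

In a real service these attributes would be set on an OpenTelemetry span instead of returned as a dictionary, and sensitive arguments or outputs would likely need redaction before export.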

Canvas Agent and the Auto-Investigation You Did Not Know You Needed

Canvas was previously a collaborative workspace for querying Honeycomb in plain English. It has been rebuilt to serve as a workspace, a chat interface, and an autonomous agent at once. The practical upgrade is auto-investigations: when an alert fires, an SLO burns, or an anomaly surfaces, Canvas Agent starts working immediately, gathering data, testing hypotheses, and proposing remediation before any engineer has opened their laptop.

Bubble’s engineering team provided a concrete example of what this looks like in practice. During a production investigation, Canvas found the cause of API slowness by comparing whole traces and identifying patterns within child spans that were not visible on the affected spans themselves. That is precisely the kind of multi-trace correlation that manual log analysis consistently misses, because humans tend to look at the obvious trace and stop.

Canvas Skills: Capturing What Your Best Engineer Knows

The most novel part of this release is Canvas Skills. The concept: encode your senior engineers’ debugging knowledge and service-specific best practices into reusable playbooks that run autonomously. Instead of paging your best infrastructure engineer at 2am for a Kafka consumer lag issue, a Kafka debugging playbook runs, surfaces the likely cause, and proposes a fix. The engineer reviews rather than investigates from scratch.

Honeycomb also offers pre-built skills for Claude Code and Cursor users via the honeycombio/agent-skill GitHub repository: a honeycomb-investigator for autonomous multi-step production debugging and an instrumentation-advisor for analyzing codebases and identifying OpenTelemetry instrumentation gaps. The latter is useful if you are migrating legacy telemetry to OTel and want an agent to tell you what you are missing.

Why Most LLM Observability Tools Still Fall Short

There are over 60 tools that claim to solve AI observability. Most were built for single-turn LLM call logging — a fundamentally different problem from agent-native observability. Single-turn logging tells you that a model call took 2.3 seconds and returned 400 tokens. It does not tell you why the agent called the same broken database tool three times in a row and then decided to hallucinate a response instead.
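To make the repeated-tool-call case concrete, here is a minimal sketch of the kind of check that becomes possible once tool calls are recorded as spans. It assumes each span is a plain dictionary, already ordered by start time, that carries `gen_ai.operation.name`, `gen_ai.tool.name`, and an `error.type` key on failure. The function name `repeated_failures` and the threshold are illustrative choices, not part of any Honeycomb or OpenTelemetry API.

```python
from itertools import groupby


def repeated_failures(spans, threshold=3):
    """Return (tool_name, run_length) for each run of at least `threshold`
    consecutive failed calls to the same tool.

    `spans` is assumed to be sorted by start time. Non-tool spans (for
    example model calls between retries) are ignored, so tool calls
    separated only by such spans still count as consecutive.
    """
    tool_spans = [
        s for s in spans if s.get("gen_ai.operation.name") == "execute_tool"
    ]

    def key(span):
        return (span.get("gen_ai.tool.name"), "error.type" in span)

    findings = []
    for (name, failed), run in groupby(tool_spans, key=key):
        count = sum(1 for _ in run)
        if failed and count >= threshold:
            findings.append((name, count))
    return findings
```

Single-turn logs record each of those calls as an unrelated event; grouping them by trace and order is what exposes the loop.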

Agent-native observability requires capturing causal dependencies between steps. LangSmith does this well inside the LangChain ecosystem, but switching orchestration frameworks means re-instrumenting your entire observability layer from scratch. Datadog has no native understanding of prompt chains or multi-step reasoning loops. Arize Phoenix has strong evaluation rigor and is worth considering if hallucination detection at scale is your priority. Honeycomb’s architectural advantage is its high-cardinality data store, purpose-built for unpredictable, high-dimensionality telemetry — exactly what AI agents generate.
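The causal structure in question is, at its simplest, the parent and child relationship between spans. The sketch below rebuilds an indented decision path from a flat list of spans. The field names `span_id`, `parent_id`, `name`, and `start` are simplified stand-ins chosen for this example, and `decision_path` is a hypothetical helper; this is not how Agent Timeline itself is implemented.

```python
from collections import defaultdict


def decision_path(spans):
    """Return indented lines showing each span under its parent, in start order."""
    children = defaultdict(list)
    for span in sorted(spans, key=lambda s: s["start"]):
        children[span.get("parent_id")].append(span)

    lines = []

    def walk(parent_id, depth):
        # Depth-first walk so each subtree stays under the step that caused it.
        for span in children.get(parent_id, []):
            lines.append("  " * depth + span["name"])
            walk(span["span_id"], depth + 1)

    walk(None, 0)  # root spans have no parent
    return lines
```

For a planner agent that makes a model call, runs a tool, and hands off to a second agent, the output lists the handoff as a child of the planner and the second agent's model call one level deeper, which is the shape a timeline view needs.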

What to Do Now

If you are shipping AI agents to production, two steps are worth taking immediately. First, instrument with OTel GenAI semantic conventions if you have not already — the standard is converging and retrofitting later is painful. Second, request access to Agent Timeline’s Early Access program through Honeycomb’s announcement. Canvas and Canvas Skills are already available to all Honeycomb customers as of this week.

The broader point: debugging AI agents requires a different mental model than debugging traditional applications. It is closer to distributed systems debugging, where you reconstruct a decision path across many spans, than to finding an exception in a stack trace. The tooling is finally catching up to that reality.