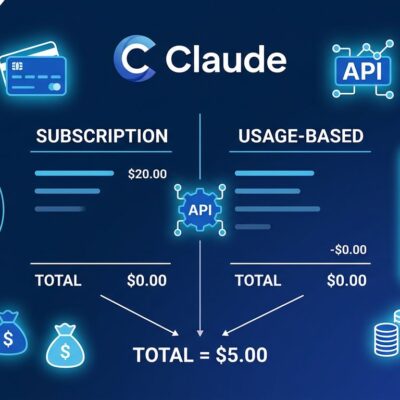

Apple is converting its 1.3 billion-device platform into an AI marketplace, and OpenAI is already weighing legal action over the original ChatGPT deal that this system effectively replaces. iOS 27's new Extensions system, expected to be announced at WWDC on June 8, will let users select the AI model powering Siri, Writing Tools, and Image Playground. Claude, Gemini, and ChatGPT are all on the list. For developers, this is either a significant distribution opportunity or a signal that the AI default wars just moved to iOS.

What the Extensions System Actually Does

Extensions is not a cosmetic change to Siri’s settings menu. It is a system-level API that routes AI queries from Siri, Writing Tools, and Image Playground to whichever third-party provider a user has selected. The mechanism follows the same model as existing iOS extensions — share sheets, widgets, keyboard replacements — with the same sandboxed, permission-gated architecture Apple has used since iOS 8.

In practice: users install a compatible AI provider’s App Store app (Claude, Gemini, ChatGPT, or others), then set their preferred provider in Settings. The choice applies system-wide. Siri remains the interface and orchestration layer. The third-party model operates as a permissioned backend, receives the query, and returns a response through the Extension interface.
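Apple has not published the Extensions API, so the routing model described above can only be sketched under stated assumptions. The Python below is a hypothetical illustration, not Apple's interface: `AIProvider`, `EchoProvider`, `ExtensionRouter`, and the `install`/`grant`/`select`/`route` methods are invented names. It shows the structure the reporting implies. There is one system-wide provider choice, every AI surface reads that same choice, the provider must be installed and permissioned, and the surface (Siri, Writing Tools, Image Playground) stays the interface while the model acts as the backend.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Protocol, Set

# Surfaces named in the reporting; the routing model itself is an assumption.
SURFACES = frozenset({"Siri", "Writing Tools", "Image Playground"})


class AIProvider(Protocol):
    """Hypothetical provider interface. This is NOT Apple's Extensions API."""

    name: str

    def respond(self, surface: str, query: str) -> str: ...


@dataclass
class EchoProvider:
    """Hypothetical stand-in for a third-party model backend."""

    name: str

    def respond(self, surface: str, query: str) -> str:
        # A real backend would call its model; this just echoes the query.
        return f"[{self.name}/{surface}] {query}"


@dataclass
class ExtensionRouter:
    """One system-wide provider choice shared by every AI surface (hypothetical)."""

    installed: Dict[str, AIProvider] = field(default_factory=dict)
    granted: Set[str] = field(default_factory=set)
    selected: Optional[str] = None

    def install(self, provider: AIProvider) -> None:
        # Models installing the provider's App Store app.
        self.installed[provider.name] = provider

    def grant(self, name: str) -> None:
        # Models the user approving the provider's permission prompt.
        self.granted.add(name)

    def select(self, name: str) -> None:
        # Models choosing a provider in Settings; it must be installed first.
        if name not in self.installed:
            raise KeyError(f"{name} is not installed")
        self.selected = name

    def route(self, surface: str, query: str) -> str:
        # The surface stays the interface; the selected provider is the backend.
        if surface not in SURFACES:
            raise ValueError(f"unknown surface: {surface}")
        if self.selected is None:
            raise LookupError("no provider selected")
        if self.selected not in self.granted:
            raise PermissionError(f"{self.selected} lacks permission")
        return self.installed[self.selected].respond(surface, query)


if __name__ == "__main__":
    router = ExtensionRouter()
    router.install(EchoProvider("Claude"))
    router.select("Claude")
    router.grant("Claude")
    print(router.route("Writing Tools", "Tighten this paragraph"))
```

Running the script prints `[Claude/Writing Tools] Tighten this paragraph`. The single `selected` field is what makes the choice system-wide in this sketch: switching it changes the backend for Siri, Writing Tools, and Image Playground at once. How Apple actually scopes permissions and selection will only be clear once the documentation ships.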

9to5Mac confirmed the feature inside iOS 27 test builds on May 5. Official API documentation and certification requirements are expected at WWDC. The public release is expected to ship with iOS 27 in fall 2026.

Why This Is Happening Now: The OpenAI Lawsuit

The timing is not coincidental. Bloomberg reported on May 14 that OpenAI is considering legal action against Apple over the ChatGPT-Siri integration that launched with iOS 18.2 in December 2024.

The deal structure: no direct payment between the companies, but Apple takes a cut of new ChatGPT subscriptions driven by the integration. OpenAI believed it could generate billions in annual subscription revenue. That figure has not materialized. OpenAI’s complaint is specific: Apple buried the integration. Users must explicitly say “ChatGPT” to trigger OpenAI’s model through Siri. Responses returned through the Siri interface are stripped-down compared to the standalone app. Internal OpenAI research found users preferred the standalone ChatGPT app over the built-in integration when they knew both existed.

OpenAI has enlisted outside counsel to review its options, which could include a formal breach-of-contract notice. MacRumors notes no final decisions have been made and OpenAI still prefers to resolve this without litigation.

Put plainly: Apple built a dependency on OpenAI, got the AI credibility it needed for iOS 18, then replaced the exclusive arrangement with a multi-provider marketplace. OpenAI goes from privileged partner to one option in a Settings menu. The lawsuit threat is the sound of leverage shifting.

Who Gets In — and the Distribution Math

Google Gemini and Anthropic Claude are both being tested internally. OpenAI ChatGPT transitions from exclusive integration to standard Extension. Others rumored to be evaluated: Grok, Perplexity, Microsoft Copilot.

For AI companies, the distribution logic is straightforward. Apple has 1.3 billion active devices. Most users on those devices will never deliberately download a standalone AI app. An Extensions listing means appearing as a selectable option in iOS Settings — functionally a new kind of App Store placement. Google already has a reported $1 billion per year deal with Apple for Gemini to power Siri’s backend intelligence. A user-facing Extension gives Gemini double presence on every iPhone: invisible infrastructure and explicit choice.

What Developers Should Do Before WWDC

The Extensions API will not be public until WWDC at the earliest. But there are concrete preparation steps available now:

- Learn the Foundation Models framework now. Its current on-device AI patterns are the most likely foundation for the Extensions API, so time spent there is unlikely to be wasted.

- Watch the WWDC keynote on June 8. Apple’s developer portal has registration details and session previews already posted.

- Audit your app’s AI integration surface. If your app involves text, image, or voice interaction, Extensions will create new user expectations. Understanding where your app stands before the API drops matters.

- Consider the certification requirements. Apple is expected to review and certify AI providers for Extension eligibility, with standards likely covering privacy, data handling, and response quality. Familiarity with Apple's existing App Review criteria for AI features is useful groundwork.

Visual Intelligence — Apple’s camera-level AI — is also expected to open as a developer API at WWDC, expanding the opportunity surface further for apps that work with visual content.

The Bigger Picture

Google pays Apple over $20 billion per year to be Safari’s default search engine. iOS 27 Extensions is the AI-era equivalent of that negotiation — except Apple is running a multi-provider auction rather than signing one exclusive deal. The providers who get in early, build quality integrations, and earn user preference will claim a distribution channel that took Google over a decade to secure in the browser era.

WWDC is three weeks away. The API documentation is coming. The certification process is coming. The question for every developer building AI-integrated products is whether they want to be waiting for the announcement or already positioned to move when it drops.