Cloudflare announced Agent Memory in private beta on April 17, 2026, marking the shift of persistent memory from experimental feature to production infrastructure for AI agents. Without memory, coding agents forget everything between sessions—coding conventions, architectural decisions, bug patterns, team knowledge—all lost when the conversation ends. The solution landscape matured fast in early 2026. agentmemory hit 5,880 GitHub stars with benchmark-validated confidence scoring. mem0 expanded to 21 framework integrations across Python and TypeScript. Supermemory shipped MCP plugins for Claude Code that work with zero configuration. Memory is now first-class architecture with its own benchmark suite, enabling agents to evolve from junior assistants to knowledgeable team members.

Why AI Coding Agents Forget Everything

AI coding agents reset to zero knowledge after every conversation by default. They’re stateless. Close the session, and everything learned—your coding conventions, that tricky bug you just debugged, the architectural decision you explained—vanishes. Start a new conversation, and you’re teaching the same lessons again.

The naive solution is cramming everything into the context window. However, even million-token contexts hit “context rot”: output quality degrades as context fills past 500,000 tokens, according to research cited in Cloudflare’s Agent Memory launch. Cost is the other problem. A 1-million-token context at $0.50 per million input tokens costs $0.50 per call, while memory systems with selective retrieval run $0.05-$0.15 per call. At those figures, retrieval is roughly 3-10x cheaper.
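The per-call arithmetic can be checked directly. The sketch below uses only the prices quoted above; the function and variable names are illustrative, and real pricing varies by model and provider.

```python
# Per-call cost comparison using the figures quoted in the text.
PRICE_PER_MILLION_INPUT_TOKENS = 0.50  # USD, from the text
FULL_CONTEXT_TOKENS = 1_000_000


def full_context_cost(tokens: int, price_per_million: float) -> float:
    """Cost in USD of sending `tokens` input tokens in a single call."""
    return tokens / 1_000_000 * price_per_million


full_cost = full_context_cost(FULL_CONTEXT_TOKENS, PRICE_PER_MILLION_INPUT_TOKENS)

# Quoted per-call range for memory systems with selective retrieval.
retrieval_low, retrieval_high = 0.05, 0.15

# Savings are largest when retrieval is cheapest, smallest when it is priciest.
best_case_ratio = round(full_cost / retrieval_low, 1)
worst_case_ratio = round(full_cost / retrieval_high, 1)

print(f"full context: ${full_cost:.2f} per call")
print(f"selective retrieval is {worst_case_ratio}x to {best_case_ratio}x cheaper")
```

The ratio depends entirely on how many tokens the retrieval step actually sends, so it is worth recomputing with your own token counts rather than treating any single multiplier as fixed.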

For teams, the impact compounds. New developer onboarding takes 4-6 weeks without shared agent memory—they’re learning conventions the hard way. With shared memory profiles, teams report 2-3x faster onboarding because the agent already knows team standards. Furthermore, one engineer’s corrections propagate to everyone’s agents automatically.

Agent Memory Frameworks in 2026: Which to Choose

The memory framework ecosystem split into three tiers in early 2026. Managed services: Cloudflare Agent Memory runs on edge infrastructure with zero deployment overhead, though it’s still in private beta. Self-hosted open source: agentmemory (5,880 stars, trending with +1,048 stars on the day of writing) offers benchmark-driven validation with confidence scoring and knowledge graphs, and mem0 provides 21 framework integrations spanning LangGraph, Mastra, and Vercel AI SDK across Python and TypeScript. Coding-specific plugins: Supermemory built MCP (Model Context Protocol) plugins for Claude Code, OpenCode, OpenClaw, and Hermes with automatic capture and zero-config setup.

Benchmarks matter. Zep/Graphiti scored 63.8% on LongMemEval’s temporal reasoning tests versus mem0’s 49.0%, driven by Zep’s temporal knowledge graph, which tracks how facts change over time. Letta (formerly MemGPT) takes a different approach and models memory like an operating system: main context as RAM, external storage as disk, with agents explicitly controlling paging. Cognee offers fully local deployment for air-gapped environments, with a GraphRAG implementation.
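To make "tracks how facts change over time" concrete, here is a toy illustration of the idea behind a temporal fact store. It is not the Zep or Graphiti API; the class names, fields, and example facts are all hypothetical. The key point is that a new fact closes the validity window of the old one instead of overwriting it, so the store can still answer "what was true then?"

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TemporalFact:
    subject: str
    predicate: str
    value: str
    valid_from: date
    valid_to: Optional[date] = None  # None means the fact is still current


class TemporalFactStore:
    """Toy store that keeps superseded facts with a closed validity window."""

    def __init__(self) -> None:
        self._facts: list[TemporalFact] = []

    def assert_fact(self, subject: str, predicate: str, value: str, on: date) -> None:
        # Close the currently open fact for this key rather than deleting it.
        for fact in self._facts:
            if (fact.subject, fact.predicate) == (subject, predicate) and fact.valid_to is None:
                fact.valid_to = on
        self._facts.append(TemporalFact(subject, predicate, value, on))

    def value_as_of(self, subject: str, predicate: str, when: date) -> Optional[str]:
        # Return the value whose validity window [valid_from, valid_to) contains `when`.
        for fact in self._facts:
            if (fact.subject, fact.predicate) != (subject, predicate):
                continue
            if fact.valid_from <= when and (fact.valid_to is None or when < fact.valid_to):
                return fact.value
        return None


store = TemporalFactStore()
store.assert_fact("api", "auth_scheme", "session cookies", date(2025, 3, 1))
store.assert_fact("api", "auth_scheme", "JWT", date(2026, 1, 15))

print(store.value_as_of("api", "auth_scheme", date(2025, 6, 1)))
print(store.value_as_of("api", "auth_scheme", date(2026, 2, 1)))
```

A store that simply overwrote the old value could answer the second query but not the first, which is the kind of question temporal reasoning benchmarks probe.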

Choose based on deployment model, not GitHub stars. Need managed infrastructure? Cloudflare when it exits beta. Self-hosting with team scale? mem0 or agentmemory. Coding agents only? Supermemory’s MCP plugins. Temporal reasoning required? Zep/Graphiti. Air-gapped deployment? Cognee.

Implementing Persistent Memory: Practical Setup

Setup for agentmemory via MCP takes three steps: start the memory server, install the plugin, and verify it’s running.

```
# Start memory server
npx @agentmemory/agentmemory

# In Claude Code or MCP client
/plugin marketplace add rohitg00/agentmemory
/plugin install agentmemory

# Verify setup
curl http://localhost:3111/agentmemory/health
```

The plugin registers 12 hooks, 4 skills, and 51 MCP tools automatically, including memory_smart_search, memory_save, memory_sessions, and memory_governance_delete. No manual configuration is needed. A real-time viewer also runs at localhost:3113 for debugging.

What to remember versus forget determines whether memory helps or pollutes retrieval. Remember architecture decisions with their rationale, such as why you chose microservices pattern X over Y. Remember coding conventions and standards, such as camelCase for variables and async/await for I/O. Remember bug patterns and solutions, like that subtle auth library issue that took three hours. Forget temporary debug variables, one-off script context, exploratory prototyping, and completed task details after implementation.

Modern frameworks handle forgetting automatically. agentmemory’s confidence scoring decays transient information naturally: memories whose confidence is below the 0.3 threshold after 7 days are archived. This prevents context pollution without manual pruning.
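A decay-and-archive rule of this kind might look like the sketch below. Only the 0.3 threshold and the 7-day window come from the text. The exponential decay curve, the 14-day half-life, and every name in the code are assumptions for illustration, not agentmemory's actual implementation.

```python
from dataclasses import dataclass

ARCHIVE_THRESHOLD = 0.3  # from the text
MIN_AGE_DAYS = 7         # from the text
HALF_LIFE_DAYS = 14.0    # assumption: confidence halves every two weeks


@dataclass
class Memory:
    text: str
    initial_confidence: float
    age_days: float


def decayed_confidence(memory: Memory, half_life_days: float = HALF_LIFE_DAYS) -> float:
    """Exponential decay: confidence halves every `half_life_days`."""
    return memory.initial_confidence * 0.5 ** (memory.age_days / half_life_days)


def should_archive(memory: Memory) -> bool:
    """Archive only memories that are old enough AND have decayed below the threshold."""
    return memory.age_days >= MIN_AGE_DAYS and decayed_confidence(memory) < ARCHIVE_THRESHOLD


memories = [
    Memory("team uses async/await for I/O", 0.9, 10),
    Memory("temp variable dbg_tmp holds the parsed token", 0.35, 10),
    Memory("scratch note from today's prototype", 0.2, 1),
]

archived = [m.text for m in memories if should_archive(m)]
print(archived)
```

The age check keeps brand-new low-confidence notes around for a grace period, so something captured today is not archived before it has had a chance to be reinforced.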

Team Shared Memory: The 2026 Breakthrough

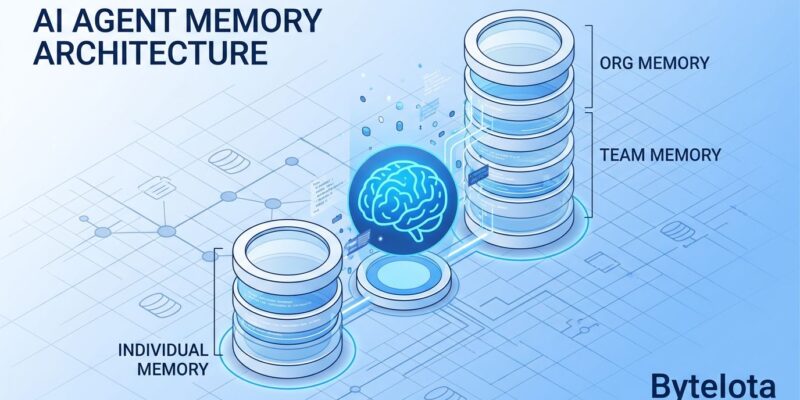

The killer feature in 2026 is team-scale shared memory. One developer’s agent learns a coding convention, and the entire team’s agents inherit it immediately. The implementation uses hierarchical profiles: an individual developer profile for personal preferences, a team profile for shared conventions, and an organization profile for company-wide standards. The agent queries all three with a fixed precedence: individual overrides team, which overrides org.

Three use cases show the value. Onboarding: a new developer’s agent inherits team knowledge instantly instead of spending weeks learning conventions. Consistency: style and pattern enforcement happens automatically across all agents. Tribal knowledge: architectural rationale that lives in senior engineers’ heads gets captured and shared. As one Reddit user on r/ClaudeCode put it: “Best feature is onboarding new devs—their agent already knows everything.”

The technical pattern is straightforward. Create three profiles: dev-alice (personal), team-backend (shared), and org-global (company). Configure the agent to query all three with documented precedence rules. When conflicts arise, the personal preference wins.
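The precedence rule can be sketched with plain dictionaries. This is a minimal illustration, not any framework's profile API: the profile contents and the `resolve` helper are hypothetical, and only the three profile names and the "personal wins" ordering come from the text.

```python
from collections import ChainMap
from typing import Optional

# Hypothetical profile contents; a real system would load these from the memory store.
org_global = {"indent": "4 spaces", "license_header": "required", "test_framework": "pytest"}
team_backend = {"test_framework": "pytest + hypothesis", "error_style": "typed exceptions"}
dev_alice = {"indent": "2 spaces"}

# Highest precedence first: individual, then team, then org.
PROFILES = [("dev-alice", dev_alice), ("team-backend", team_backend), ("org-global", org_global)]

# ChainMap searches its maps left to right, so earlier profiles shadow later ones.
effective = ChainMap(*(profile for _, profile in PROFILES))


def resolve(key: str) -> Optional[tuple[str, str]]:
    """Return (profile_name, value) for the highest-precedence profile that defines `key`."""
    for name, profile in PROFILES:
        if key in profile:
            return name, profile[key]
    return None


print(effective["indent"])        # personal preference shadows the org default
print(resolve("test_framework"))  # team setting shadows the org default
print(resolve("license_header"))  # only the org profile defines this
```

Returning the profile name alongside the value makes conflicts auditable: when an agent applies a surprising convention, you can see which layer it came from.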

Common Pitfalls and When Memory Isn’t Worth It

Three pitfalls sink memory implementations. Context pollution: storing too much low-value information degrades retrieval quality. One team stored every variable name they’d ever used, and agent performance collapsed because searches returned thousands of irrelevant results. The solution is importance scoring: only store information that would be useful weeks later, not minutes later.
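Importance scoring can start as a simple gate in front of the store. In the sketch below, the categories mirror the remember/forget guidance earlier in the article, but the weights, the 0.5 threshold, and the rationale bonus are made-up values you would need to tune, and none of the names correspond to a real framework API.

```python
from dataclasses import dataclass

# Hypothetical weights: durable knowledge scores high, transient detail scores low.
CATEGORY_WEIGHTS = {
    "architecture_decision": 0.9,
    "coding_convention": 0.8,
    "bug_pattern": 0.7,
    "debug_variable": 0.1,
    "scratch_note": 0.1,
}
DEFAULT_WEIGHT = 0.3   # assumption for unknown categories
STORE_THRESHOLD = 0.5  # assumption: below this, the item is not worth keeping


@dataclass
class Candidate:
    text: str
    category: str
    has_rationale: bool = False


def importance(candidate: Candidate) -> float:
    """Score a candidate memory; a recorded rationale earns a small bonus."""
    score = CATEGORY_WEIGHTS.get(candidate.category, DEFAULT_WEIGHT)
    if candidate.has_rationale:
        score += 0.1
    return min(score, 1.0)


def filter_for_storage(candidates: list[Candidate]) -> list[Candidate]:
    """Keep only candidates likely to be useful weeks from now."""
    return [c for c in candidates if importance(c) >= STORE_THRESHOLD]


candidates = [
    Candidate("chose event sourcing for the orders service", "architecture_decision", True),
    Candidate("loop counter named idx2 in migrate.py", "debug_variable"),
    Candidate("auth library silently drops expired refresh tokens", "bug_pattern"),
]
kept = [c.text for c in filter_for_storage(candidates)]
print(kept)
```

Gating at write time keeps the index small, which helps retrieval more than trying to rank around thousands of low-value entries at query time.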

Staleness without decay: the external world changes but memory doesn’t update. A team deprecates a pattern, but the agent keeps suggesting it because memory says “this is how we do it.” The solution is temporal decay, where information becomes less relevant over time, or explicit versioning that tracks when facts were learned.

Database mismatch: using a vector DB for all memory types fails at multi-hop reasoning. Use hybrid storage instead: vector for semantic search, graph for relationships, SQL for structured facts.
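To see why relationships want a graph, consider a two-hop question such as "which teams own the services that use this library?" The sketch below is a toy in-memory graph with hypothetical entity and relation names, not a real graph database client. Each hop follows one explicit relation, which similarity search over isolated text chunks does not do on its own.

```python
from collections import defaultdict


class RelationGraph:
    """Toy directed graph keyed by (subject, relation)."""

    def __init__(self) -> None:
        self._edges: dict[tuple[str, str], list[str]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self._edges[(subject, relation)].append(obj)

    def follow(self, start: str, relations: list[str]) -> list[str]:
        """Follow a chain of relations from `start`, fanning out at every hop."""
        frontier = [start]
        for relation in relations:
            frontier = [
                obj
                for node in frontier
                for obj in self._edges.get((node, relation), [])
            ]
        return frontier


graph = RelationGraph()
graph.add("auth-lib", "used_by", "checkout-service")
graph.add("auth-lib", "used_by", "billing-service")
graph.add("checkout-service", "owned_by", "team-backend")
graph.add("billing-service", "owned_by", "team-payments")

# Two hops: library -> services that use it -> teams that own those services.
owners = graph.follow("auth-lib", ["used_by", "owned_by"])
print(owners)
```

In a hybrid setup, vector search would typically locate the starting entity ("that auth library with the token bug"), and the graph would then answer the relational part of the question.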

Skip memory for one-off scripts, simple solo projects, and exploratory prototyping. Setup overhead exceeds the value when a project lives for three days. Context windows are sufficient for short-lived work. Don’t add infrastructure complexity when you don’t need it.

Key Takeaways

- Memory is now production infrastructure: It shifted from experimental to production in 2026, with managed services (Cloudflare’s April 17 launch), benchmark suites (LongMemEval), and mature frameworks (mem0’s 21 integrations, agentmemory’s 5,880 stars).

- Framework choice depends on deployment model: Coding-specific agents benefit from Supermemory’s MCP plugins. Self-hosted teams should evaluate mem0 or agentmemory. Enterprises wanting managed services should join Cloudflare’s waitlist.

- Team shared memory unlocks enterprise value: 2-3x faster onboarding, automatic knowledge transfer, consistent conventions enforced across all agents.

- Storage patterns matter more than technology: Remember architecture decisions and coding standards. Forget debug context and one-off scripts. Implement confidence scoring or temporal decay to prevent staleness.

- Memory costs several times less than context-only approaches: Selective retrieval from memory systems runs $0.05-$0.15 per call versus $0.50+ for million-token context windows, roughly 3-10x cheaper at those figures.

- Skip memory for short-lived projects: Setup overhead exceeds value for one-off scripts and exploratory work. Memory shines on long-lived codebases with team collaboration.

Try agentmemory if you use MCP-compatible tools (Claude Code, Cursor, Windsurf). Explore mem0 for production Python or TypeScript workflows with framework integrations. Request Cloudflare Agent Memory beta access for managed deployment with edge distribution.