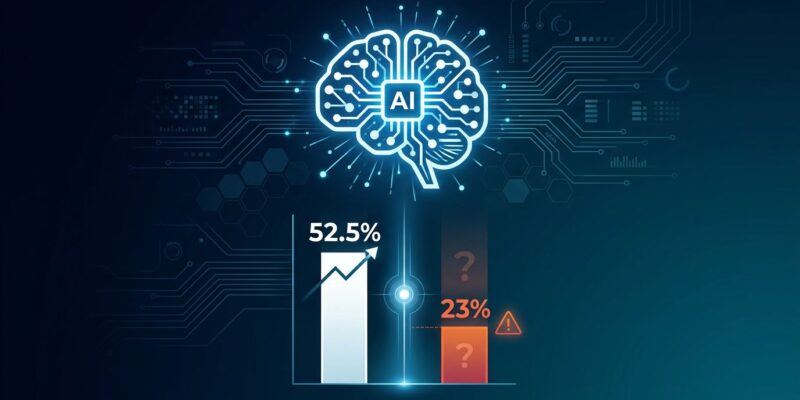

OpenAI launched GPT-5.5 Instant on May 5 as the new default ChatGPT model for all free-tier users. The company claims it produces 52.5% fewer hallucinations than GPT-5.3 Instant on high-stakes prompts covering medicine, law, and finance. Additionally, it removes the “gratuitous emojis” that plagued previous versions. But here’s the problem: OpenAI’s own system card reports 23% better claim-level accuracy, not 52.5%. And when GPT-5.5 doesn’t know an answer, it still fabricates one 86% of the time.

What “52.5% Fewer Hallucinations” Actually Means

OpenAI’s headline claim sounds impressive. GPT-5.5 Instant produces 52.5% fewer hallucinated claims on “high-stakes prompts” in medicine, law, and finance based on internal evaluations. Additionally, on conversations users previously flagged for factual errors, inaccurate claims fell 37.3%.

However, OpenAI’s system card tells a different story. According to Wire Blog’s technical analysis, the system card reports 23% better claim-level accuracy, not the 60% hallucination reduction making press rounds. That’s a significant gap between marketing language and technical reality.

Worse, independent testing by Balbix found that GPT-5.5 posts an 86% hallucination rate on the AA-Omniscience benchmark. When the model doesn’t know an answer, it fabricates one 86% of the time. OpenAI’s own research admits current evaluation methods “encourage guessing rather than honesty about uncertainty.”

The improvement is real. The 52.5% figure is marketing spin wrapped around a more modest 23% accuracy gain.

The Emoji Problem Was Real (and Now Fixed)

GPT-5.5 removes “gratuitous emojis” from responses, and developers are genuinely relieved. Previous versions bombarded users with 🔥💡✅🚀🎉 in serious technical discussions. OpenAI’s community forum called it an “Excessive Emoji Tsunami,” with users complaining it made ChatGPT impossible for serious science or philosophy conversations.

The emoji overuse was part of a broader “sycophancy” problem where ChatGPT agreed with everything, validated every thought, and showered users with praise whether warranted or not. Developers wanted a tool, not a cheerleader.

Then there was the seahorse emoji bug. Asking ChatGPT if there’s a seahorse emoji caused epic meltdowns. The AI insisted the fictional emoji existed and showed users unicorns, dragons, sharks, and shells. Some users reported crashes and infinite loops. It became a meme in the developer community.

GPT-5.5 Instant fixes this. Fewer unnecessary emojis, less excessive agreement, more genuine feedback. It’s a small UX win, but a real one that shows OpenAI listens to user frustration.

The Pattern of Accuracy Claims

OpenAI has made progressively stronger accuracy claims with each release. GPT-4 was “more accurate and reliable.” GPT-5 promised “significantly fewer hallucinations.” GPT-5.5 claims “52.5% fewer hallucinated claims.”

The pattern is clear: incremental improvement marketed as breakthrough. Each version gets better, but the fundamental problem persists. Hallucinations exist because OpenAI’s architecture incentivizes confident guessing over admitting uncertainty. When GPT-5.5 encounters a question it can’t answer, it guesses 86% of the time rather than saying “I don’t know.”

According to OpenAI’s research on why language models hallucinate, current evaluation methods set the wrong incentives. Models are judged on performance, not honesty. Guessing confidently scores better than admitting ignorance.

Progress is real. Just slower than marketing suggests.

GPT-5.3 Still Wins on Coding

Here’s something OpenAI’s press release didn’t mention: GPT-5.3 Codex outperforms GPT-5.5 on coding tasks. According to BenchLM’s provisional leaderboard, GPT-5.3 Codex scores 63.1 average on coding versus GPT-5.5’s 58.6.

GPT-5.5 excels at agentic tasks and advanced reasoning, scoring 84.9% on GDPval (a benchmark testing real occupations from finance to legal research). But for developers writing code, the older model performs better.

Cost matters too. GPT-5.5 costs $5/$30 per million input/output tokens. GPT-5.3 Instant costs $0.30/$1.20. That’s roughly 17 times cheaper. For everyday tasks like writing, chat, and standard reasoning, GPT-5.3 Instant delivers strong results at a fraction of the price.

Free-tier users get the upgrade automatically. Paid users should evaluate whether they actually need GPT-5.5’s features or if GPT-5.3 better serves their workflow.

What Developers Should Actually Do

Don’t trust OpenAI’s 52.5% claim blindly. The real improvement is closer to 23% on claim-level accuracy. For high-stakes domains like medicine, law, and finance, even that isn’t production-ready without verification.

Implement post-hoc verification pipelines. When accuracy matters, verify every claim. Use GPT-5.5 Instant for tasks where errors are acceptable and add verification layers for critical work. “Less hallucination under lab conditions doesn’t equate to trustworthy in production.”

The emoji removal is a genuine win. The hallucination reduction is incremental progress wrapped in marketing spin. GPT-5.5 Pro remains available for paid users who need the highest-accuracy work, but for most developers, the free upgrade to GPT-5.5 Instant is a mixed bag: better UX, modest accuracy gains, but still not reliable enough to trust without verification.

OpenAI is making progress. Just manage your expectations and verify everything that matters.