AI coding assistants now generate 41% of all code worldwide, but only 29% of developers trust the output. AI-generated pull requests contain 1.7 times more issues than human code, with silent failures that pass tests but break in production. React Doctor, a brand-new CLI tool from the Million.js team, addresses this quality crisis by scanning React codebases for 60+ anti-patterns and producing a 0-100 health score in milliseconds. With 8,793 GitHub stars in just 88 days and trending #6 on GitHub today, it’s becoming the missing quality gate between “AI writes code” and “code ships to production.”

The AI Code Quality Crisis Is Real

Ninety percent of developers now use AI coding tools regularly, but trust has plummeted from 40% in 2024 to 29% in 2026. The problem isn’t obvious bugs—it’s silent failures where code runs without syntax errors but fails in edge cases. IEEE Spectrum reports that models like GPT-5 generate code that “fails to perform as intended, but on the surface seems to run successfully, avoiding syntax errors or obvious crashes.” This is the worst kind of bug: one that makes it to production.

The statistics are alarming. According to CodeRabbit’s analysis, 45% of AI-generated code contains security flaws, and technical debt increases 30-41% after teams adopt AI tools. Addy Osmani from Google Chrome Engineering describes the pattern: “teams seeing speed gains at first, then spending months fixing contradictory patterns and mounting technical debt.” The industry has optimized for code generation speed while quality assurance remains manual.

What React Doctor Actually Does

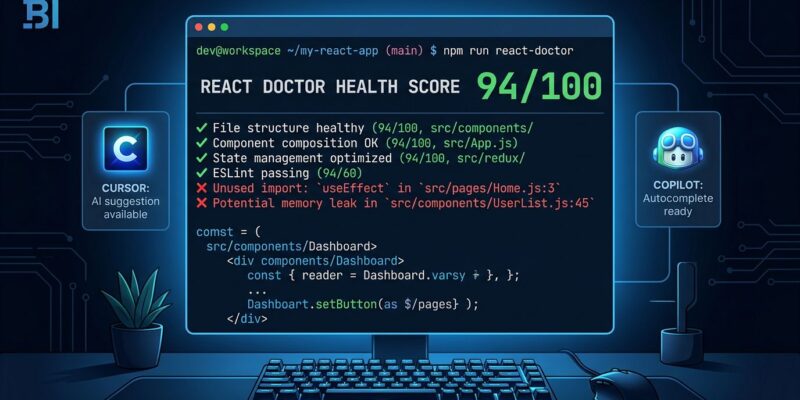

React Doctor scans React code for 60+ anti-patterns across security, performance, architecture, accessibility, and dead code. It outputs a 0-100 health score calculated using the formula: 100 - (unique_error_rules × 1.5) - (unique_warning_rules × 0.75). Scores of 75 and above are labeled “Great,” 50-74 means “Needs Work,” and below 50 is “Critical.”
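To make the arithmetic concrete, here is a minimal shell sketch of the formula and labels quoted above. It is an illustration only, not React Doctor's actual source: the function names `health_score` and `score_label` are hypothetical, and clamping the result at 0 is an assumption the formula itself does not state.

```shell
# Sketch of the scoring formula quoted in the article (not the tool's real code).

# health_score ERROR_RULES WARNING_RULES
# Prints 100 - (errors * 1.5) - (warnings * 0.75).
# Assumption: the result is clamped so it never drops below 0.
health_score() {
  awk -v e="$1" -v w="$2" 'BEGIN {
    s = 100 - (e * 1.5) - (w * 0.75)
    if (s < 0) s = 0
    print s
  }'
}

# score_label SCORE
# Maps a score to the article's labels; scores of 75 and up are treated as "Great" here.
score_label() {
  awk -v s="$1" 'BEGIN {
    if (s >= 75) print "Great"
    else if (s >= 50) print "Needs Work"
    else print "Critical"
  }'
}
```

For instance, a project that triggers 2 distinct error rules and 4 distinct warning rules would lose 3 points for each group, so `health_score 2 4` prints 94. Because the formula counts unique rules rather than individual violations, fixing the last remaining instance of a rule is what moves the score.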

The tool runs on Rust-based Oxlint, processing tens of thousands of lines in milliseconds—fast enough to run on every pull request without slowing down pipelines. Categories include state management issues (inefficient hooks, unnecessary re-renders), security problems (hardcoded secrets, unsafe eval), architectural violations (giant components, nesting violations), and unused code detection. The tool auto-detects your framework (Next.js, Vite, React Native) and applies relevant rules without configuration.

React Doctor maintains a public leaderboard showing top-scoring projects: executor scored 94, nodejs.org hit 86, and tldraw reached 70. These benchmarks provide context for what “good” looks like in real-world codebases.

Quick Start: Zero-Config React Doctor Scanning

Getting started with React Doctor requires one command:

    npx -y react-doctor@latest .

Run this at your project root and React Doctor returns a health score, categorized issues sorted by severity, and actionable fix suggestions. The output includes ASCII art and colored diagnostics that make it immediately clear where problems exist. Framework detection happens automatically: if you’re using Next.js, you get Next.js-specific rules; Vite projects get Vite rules.

The zero-config approach matters. Tools that require extensive setup don’t get adopted. React Doctor works out of the box, which means developers actually use it.

CI/CD Integration: Automated Quality Gates

React Doctor’s killer feature is GitHub Actions integration with diff mode scanning. Instead of analyzing your entire codebase, diff mode scans only files changed versus the base branch—keeping scans under a second even in large repositories. The github-token option enables inline PR comments showing issues exactly where they occur.
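Conceptually, a diff-mode scan starts from the list of files that differ from the base branch. The sketch below shows one way to build such a list with plain git. It is not how React Doctor implements diff mode; the helper names `changed_files` and `react_sources` and the extension filter are assumptions made for illustration.

```shell
# Conceptual sketch only: building a "changed vs. base branch" file list with git.

# changed_files BASE
# Lists files added, copied, modified, or renamed between the merge base
# of BASE and HEAD, and HEAD itself (deleted files are skipped).
changed_files() {
  git diff --name-only --diff-filter=ACMR "$1"...HEAD
}

# react_sources
# Reads paths on stdin and keeps only .js, .jsx, .ts, and .tsx files.
# Assumption: these extensions are the ones worth scanning.
react_sources() {
  grep -E '\.(jsx?|tsx?)$' || true
}

# Usage, inside a checkout that has the base branch history available:
#   changed_files origin/main | react_sources
```

The three-dot range compares against the merge base, which requires the base branch's history to be present locally. That is the usual reason a CI checkout for this kind of comparison fetches full history rather than a shallow clone.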

Here’s a production-ready workflow that blocks PRs below a quality threshold:

    steps:
      - uses: actions/checkout@v5
        with:
          fetch-depth: 0
      - uses: millionco/react-doctor@main
        with:
          diff: main
          github-token: ${{ secrets.GITHUB_TOKEN }}
      # Fail if score below threshold
      - name: Check Score
        run: |
          SCORE=$(npx react-doctor@latest . --score)
          if [ "$SCORE" -lt 70 ]; then
            echo "Quality score too low"
            exit 1
          fi

This approach prevents quality decay by enforcing minimum standards at the PR level. The numeric score output means you can adjust thresholds based on your baseline: start at 60, gradually tighten to 70, then 75 as code quality improves. Diff mode solves the “CI slowdown” objection that kills most linting tool adoptions.
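If you plan to tighten the threshold over time, it can help to pull the comparison into a small function that takes the threshold as a parameter and rejects output it cannot parse. The following is a sketch under stated assumptions: `check_score` is a hypothetical helper, and it assumes the score arrives as a bare number, as the workflow above expects from `--score`.

```shell
# Sketch of a reusable score gate; assumes the score is a bare number.

# check_score SCORE [THRESHOLD]
# Returns 0 when SCORE >= THRESHOLD (default 70),
#         1 when SCORE is below THRESHOLD,
#         2 when SCORE is empty or contains non-numeric characters.
check_score() {
  case "$1" in
    ''|*[!0-9.]*)
      echo "Could not parse score: '$1'" >&2
      return 2
      ;;
  esac
  # awk handles decimal scores, which the shell's integer -lt test cannot.
  if awk -v s="$1" -v t="${2:-70}" 'BEGIN { exit !(s < t) }'; then
    echo "Quality score $1 is below threshold ${2:-70}" >&2
    return 1
  fi
  echo "Quality score $1 meets threshold ${2:-70}"
}
```

A CI step could then call `check_score "$SCORE" 60 || exit 1` and raise the second argument as the baseline improves. Treating unparseable output as a failure is a deliberate choice here: a gate that passes when the tool prints nothing would hide exactly the breakage it is meant to catch.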

AI Agent Self-Auditing: Teaching Agents to Catch Their Own Bugs

React Doctor can be installed as a skill for 50+ coding agents including Cursor, Claude Code, GitHub Copilot, and Windsurf:

    npx -y react-doctor@latest install

This configures your agent to run React Doctor automatically after generating code, review diagnostics, and apply fixes before committing. The agent audits its own work, sees the severity levels, and iterates until the code passes. This closes the AI trust gap: instead of hoping the agent wrote good code, you verify it programmatically.

Agent self-auditing is React Doctor’s unique differentiator. As 90% of developers use AI tools, this becomes critical infrastructure. The tool directly addresses the problem stated at the top: only 29% of developers trust AI output. Automated verification makes AI-generated code trustworthy.

When to Use React Doctor: Complementary, Not Replacement

React Doctor complements ESLint rather than replacing it. Use ESLint for general JavaScript linting and its vast plugin ecosystem; use React Doctor for React-specific anti-patterns, AI code validation, and CI/CD speed. Unlike SonarQube or CodeClimate, React Doctor is lightweight, self-hosted, and optimized for millisecond scans.

The best practice is a three-tool stack: ESLint handles general JavaScript rules, React Doctor catches React anti-patterns and AI quality issues, and Prettier manages formatting. Each tool does one thing well. React Doctor’s Rust-based architecture (versus ESLint’s JavaScript) justifies the addition—it’s 20-35x faster in typical codebases.

Skip React Doctor if your team doesn’t use AI coding assistants and already has strong manual code review. However, if you’re using Cursor, Copilot, or Claude Code, automated quality gates aren’t optional anymore. They’re essential infrastructure for AI-assisted development.

Key Takeaways

- AI-generated code has a quality crisis: 45% contains security flaws, AI-generated pull requests contain 1.7x more issues than human code, and technical debt increases 30-41% after AI tool adoption

- React Doctor scans React codebases for 60+ anti-patterns in milliseconds using Rust-based Oxlint, producing a 0-100 health score with actionable diagnostics

- CI/CD integration with diff mode enables sub-second scans on pull requests, letting teams enforce quality thresholds without slowing pipelines

- AI agent skills teach Cursor, Claude Code, and GitHub Copilot to audit their own code and apply fixes automatically—closing the AI trust gap

- React Doctor complements ESLint rather than replacing it: use ESLint for general JavaScript, React Doctor for React anti-patterns and AI quality gates, and Prettier for formatting

The tool is brand new (88 days old), but adoption is accelerating: 8,793 GitHub stars and a #6 trending spot today signal strong developer demand. As AI code generation approaches 50% of all code worldwide, automated quality verification transitions from nice-to-have to critical infrastructure. React Doctor is positioned as the missing layer between “AI writes code” and “code ships to production.”