A developer’s Hacker News post titled “I’m Going Back to Writing Code by Hand” sparked a 311-comment debate today, exposing a sharp divide in the developer community. While 93% of developers use AI coding tools and 51% of GitHub code is now AI-generated, a study by Model Evaluation & Threat Research (METR) revealed something surprising: experienced developers using AI tools were 19% slower, not faster. Those developers had forecast that AI would cut their completion time by 24%, but objective testing showed the opposite.

The developer who went back to manual coding isn’t an outlier—he’s onto something the industry doesn’t want to admit.

The Productivity Paradox

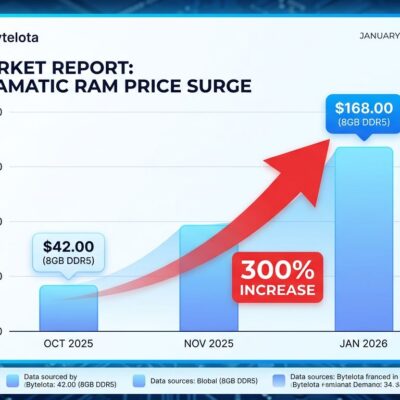

METR’s randomized controlled trial tested 16 experienced open-source developers across 246 tasks in mature projects. The result wasn’t close: AI tools added 19% to completion time. What makes this finding particularly striking is the perception gap. Before starting tasks, the same developers believed AI would reduce their completion time by 24%. Reality landed 43 percentage points away from that forecast, in the wrong direction.
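The arithmetic behind that perception gap can be sketched in a few lines. This is only an illustration: the function names and the 100-minute baseline task are hypothetical, and the only figures taken from the study are the 24% forecast reduction and the 19% measured increase.

```python
def perception_gap(forecast_change_pct: float, measured_change_pct: float) -> float:
    """Gap, in percentage points, between measured and forecast change in completion time."""
    return measured_change_pct - forecast_change_pct


def projected_minutes(baseline_minutes: float, change_pct: float) -> float:
    """Completion time after applying a percentage change to a baseline."""
    return baseline_minutes * (100 + change_pct) / 100


forecast = -24.0  # developers expected completion time to drop by 24%
measured = 19.0   # measured completion time rose by 19%
baseline = 100.0  # hypothetical task length in minutes, for illustration only

print(f"Perception gap: {perception_gap(forecast, measured):.0f} percentage points")
print(f"Expected with AI: {projected_minutes(baseline, forecast):.0f} min")
print(f"Measured with AI: {projected_minutes(baseline, measured):.0f} min")
```

Under these assumptions, a task that takes 100 minutes by hand was expected to take 76 minutes with AI and instead took 119.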

Why did experienced developers slow down? The study identified extra cognitive load and context-switching as primary factors. When you already know your codebase intimately—the architecture decisions, the edge cases, the quirks that aren’t in any documentation—AI suggestions often miss crucial context. You spend mental energy evaluating whether the AI’s output fits, then more energy fixing what it got wrong. The developers with the highest repository familiarity slowed down the most.

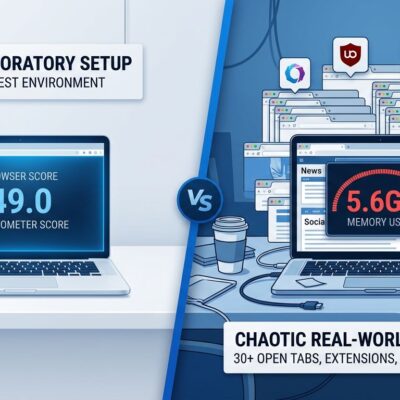

That tracks with what development teams report in practice. According to recent surveys, 64% of teams say manually verifying AI-generated code takes as long or longer than writing the code from scratch. Even when AI writes faster, the bottleneck just shifts from writing to reviewing. McKinsey’s February 2026 study found teams with high AI adoption complete 21% more tasks and merge 98% more pull requests—but PR review time increased 91%. You’re not saving time; you’re moving it.

The Technical Debt Nightmare

The speed problem is obvious when you measure it. The maintenance problem is insidious because it compounds over months.

GitClear analyzed 211 million lines of code and found AI-driven development degrading code quality in measurable ways. AI coding assistants don’t reuse existing patterns efficiently—they flood repositories with redundant code that will eventually demand extensive refactoring. A developer shared an experience from a greenfield Java project where a teammate used GitHub Copilot to generate boilerplate code. The AI introduced outdated patterns that made the codebase harder to maintain long-term. The code worked on day one. Six months later, it’s a liability.

The data backs this up. AI-assisted code increases issue counts by roughly 1.7 times. Security findings rise when organizations adopt AI without proper governance. Stack Overflow’s analysis concluded that AI can 10x developers—in creating technical debt. About 40% of developers now spend two to five working days per month debugging, refactoring, and maintaining code generated by AI tools. You get the feature shipped faster this sprint. You pay for it every sprint after.

The Community Is Split

The Hacker News thread isn’t just popular: 599 points and 311 comments signal that it struck a nerve. The debate breaks along philosophical lines that don’t reconcile easily.

One camp describes AI coding as “initially exciting, then soul-crushing.” Developers in this group report that they’re no longer solving mentally stimulating problems; they’re copy-pasting code from an AI assistant. The work no longer feels rewarding or like an accomplishment. Programming as craft matters to them. They enjoy carefully designing elegant solutions.

The opposing camp sees this as nostalgia disguised as principle. A 52-year-old senior programmer noted that “purely manual” coding made him work 12-hour days. With AI handling boilerplate, he spends time on architecture and testing, the parts he actually enjoys. Programming as a means to an end matters to this group. What they value is code that is complete, well structured, and functional.

Fred Brooks’ “No Silver Bullet” argument applies here: the core challenge of software development is conceptual, not syntactic. AI helps with syntax—the typing part. It doesn’t help with the thinking part. Whether that tradeoff works for you depends on which part of programming you value and which part causes you friction.

When Manual Actually Wins

The answer isn’t binary. The right question is: when does each approach work better?

Manual coding wins when quality, reliability, or control is the priority. Complex architecture and system design decisions require understanding tradeoffs AI can’t evaluate. Debugging interconnected issues in a mature codebase demands context AI doesn’t have. Mission-critical or security-sensitive code can’t afford the 1.7x issue multiplier. Learning a new codebase by reading and modifying code by hand teaches you things AI-generated shortcuts skip.

AI coding wins on boilerplate and CRUD operations. Standard implementations in well-established frameworks. Prototyping when you need five features in 84 minutes and polish later. Repetitive patterns where the risk of subtle bugs is low and the time savings are high. Frameworks you don’t know well enough to write fluently from memory.

The best developers in 2026 use an average of 2.3 AI tools, treating each as specialized for different workflows. The real skill isn’t choosing AI or choosing manual—it’s developing judgment about when to use which approach.
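As a rough illustration, the rules of thumb above could be written down as a small checklist function. Everything here is a hypothetical sketch: the `Task` fields and the `suggest_approach` name are invented for this example, and the choice to let the manual criteria take precedence over the AI criteria is an assumption, not something the surveys or the study prescribe.

```python
from dataclasses import dataclass


@dataclass
class Task:
    # Traits where the article says manual coding wins
    architectural: bool = False
    mature_codebase_debugging: bool = False
    security_sensitive: bool = False
    # Traits where the article says AI coding wins
    boilerplate: bool = False
    prototype: bool = False
    unfamiliar_framework: bool = False


def suggest_approach(task: Task) -> str:
    """Return a starting suggestion; the developer's own judgment still decides."""
    # Assumption: any high-stakes trait outweighs the convenience traits.
    if task.architectural or task.mature_codebase_debugging or task.security_sensitive:
        return "manual"
    if task.boilerplate or task.prototype or task.unfamiliar_framework:
        return "ai-assisted"
    return "judgment call"


print(suggest_approach(Task(boilerplate=True)))
print(suggest_approach(Task(boilerplate=True, security_sensitive=True)))
print(suggest_approach(Task()))
```

A checklist like this is no substitute for judgment, but writing the criteria down makes it easier for a team to notice when it reaches for AI out of habit rather than fit.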

The Developer Isn’t Wrong

The developer who went back to writing code by hand made a rational decision based on his experience. The industry narrative that “AI makes everyone faster” is oversimplified and, for experienced developers working in familiar codebases, demonstrably false. The METR study isn’t an outlier—it’s confirmation of what many senior developers already felt but couldn’t quantify.

Blind adoption is hurting some developers more than helping them. If you’re experienced, know your codebase well, and AI tools feel slower, you’re not imagining it. You’re probably right. The cognitive overhead of evaluating AI suggestions, the context it misses, and the verification time afterward can exceed the benefit of faster initial code generation.

That doesn’t mean abandoning AI entirely. It means developing the judgment to know when manual coding is the better tool. Use AI for boilerplate, prototyping, and unfamiliar frameworks. Write by hand for architecture, complex logic, and code you’ll maintain for years. Set aside time in each sprint to review AI-generated code before technical debt accumulates. Trust your instincts when something feels off.

Even GitHub’s CEO acknowledges that manual coding remains key despite the AI boom. The 311-comment debate on Hacker News isn’t going to be resolved, because the two camps value different things. But the data is clear: AI isn’t a universal productivity multiplier. It’s a tool that works brilliantly in some contexts and poorly in others. The sooner developers, and the industry, accept that nuance, the better decisions we’ll all make about when to type and when to let AI type for us.