Anthropic announced “dreaming” for Claude Managed Agents on May 6, 2026—a scheduled process that lets AI agents review past sessions during downtime to extract patterns, identify recurring mistakes, and write plain-text “playbooks” for future improvement. Unlike model fine-tuning, dreaming doesn’t touch model weights. It creates observable, auditable notes that future sessions reference. Legal AI company Harvey reported completion rates increased approximately 6x after implementing dreaming—the kind of production metric most AI announcements lack.

This targets a persistent problem: agents repeat the same mistakes across sessions because nothing they learn in one session carries into the next. Dreaming lets agents improve themselves without constant human oversight while keeping the transparency enterprises demand.

How Dreaming Works: Pattern Recognition During Downtime

Dreaming runs between sessions while agents are idle. The Dreams API reads up to 100 prior session transcripts and the existing memory store, then produces a reorganized memory store. Duplicates merge. Stale entries get replaced. New insights surface from patterns the agent couldn’t see within any single session.
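The consolidation step can be sketched as a toy model in Python. This is not the Dreams API. It assumes each transcript has already been reduced to hypothetical (topic, lesson) pairs, treats entries that share a topic as duplicates, and promotes a lesson only once it recurs in at least `min_support` sessions. `MemoryEntry`, `consolidate`, and `min_support` are illustrative names; only the 100-session window comes from the announcement.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical shapes for illustration only; not the Dreams API schema.
Observation = tuple[str, str]  # (topic, lesson) extracted from one session
Transcript = list[Observation]


@dataclass(frozen=True)
class MemoryEntry:
    topic: str      # grouping key: entries that share a topic are duplicates
    note: str       # plain-text lesson a future session would read
    last_seen: int  # index of the most recent session that produced this note
    support: int    # number of sessions in the window that produced this note


def consolidate(store: list[MemoryEntry], transcripts: list[Transcript],
                max_sessions: int = 100, min_support: int = 2) -> list[MemoryEntry]:
    """Toy consolidation pass: merge duplicates, replace stale notes, promote patterns."""
    start = max(0, len(transcripts) - max_sessions)  # only the most recent sessions
    support: Counter = Counter()
    last_seen: dict[Observation, int] = {}
    for idx in range(start, len(transcripts)):
        for obs in set(transcripts[idx]):  # count each lesson once per session
            support[obs] += 1
            last_seen[obs] = idx

    merged = {entry.topic: entry for entry in store}  # one entry per topic
    # A lesson only becomes memory once it recurs across several sessions.
    patterns = [obs for obs, n in support.items() if n >= min_support]
    for obs in sorted(patterns, key=lambda o: (last_seen[o], o)):
        topic, note = obs
        existing = merged.get(topic)
        # A more recent pattern on the same topic replaces the stale entry.
        if existing is None or last_seen[obs] >= existing.last_seen:
            merged[topic] = MemoryEntry(topic, note, last_seen[obs], support[obs])
    return sorted(merged.values(), key=lambda e: e.topic)


store = [MemoryEntry("word-export", "Apply header styles directly.", last_seen=0, support=1)]
sessions = [
    [("word-export", "Use plain format first, then apply styling.")],
    [("word-export", "Use plain format first, then apply styling."),
     ("citations", "Use Bluebook citation style.")],
    [("citations", "Use Bluebook citation style."), ("fonts", "Try Garamond.")],
]
for entry in consolidate(store, sessions):
    print(entry.topic, "->", entry.note)
```

Requiring recurrence across sessions is one simple way to model cross-session pattern finding: the one-off `fonts` note never reaches memory, while the repeated `word-export` lesson replaces the stale entry.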

The system extracts three types of patterns: recurring mistakes (such as file format errors or tool-specific bugs), workflows agents converge on (such as preferred document generation sequences), and team preferences (such as brand voice guidelines and style choices). As memory grows, it is restructured so that useful signal stays easy to find instead of getting buried in noise.
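To make the three categories concrete, here is a minimal sketch that files lessons into a plain-text playbook with one section per pattern type. The keyword rules in `CATEGORIES` are a crude, hypothetical stand-in for classification a real system would presumably leave to the model, and the section layout is an assumption, not Anthropic’s playbook format.

```python
from collections import defaultdict

# Hypothetical keyword rules; checked in order, so mistakes win over workflows.
CATEGORIES = {
    "Recurring mistakes": ("error", "crash", "fails", "bug"),
    "Converged workflows": ("first", "then", "step"),
    "Team preferences": ("prefer", "voice", "style guide", "always use"),
}


def categorize(lesson: str) -> str:
    """Return the first category whose keywords appear in the lesson."""
    text = lesson.lower()
    for category, keywords in CATEGORIES.items():
        if any(k in text for k in keywords):
            return category
    return "Uncategorized"


def render_playbook(lessons: list[str]) -> str:
    """Group lessons under plain-text section headings, in a fixed order."""
    groups: dict[str, list[str]] = defaultdict(list)
    for lesson in lessons:
        groups[categorize(lesson)].append(lesson)
    lines = []
    for category in [*CATEGORIES, "Uncategorized"]:
        if groups.get(category):
            lines.append(f"{category}:")
            lines.extend(f"  - {lesson}" for lesson in groups[category])
    return "\n".join(lines)


print(render_playbook([
    "Word templates crash with certain header styles; use plain format first.",
    "Generate the outline first, then draft each section.",
    "The legal team prefers plain-English summaries.",
]))
```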

Harvey’s 6x completion rate increase shows the impact. Their agents handle complex legal drafting—long-form documents with specific formatting requirements. With dreaming enabled, agents remember “Microsoft Word templates crash with certain header styles—use plain format first, then apply styling.” That institutional knowledge compounds over time. Completion rates jumped not from a model upgrade, but from memory that actually works.

Plain-Text Playbooks vs Black-Box Fine-Tuning

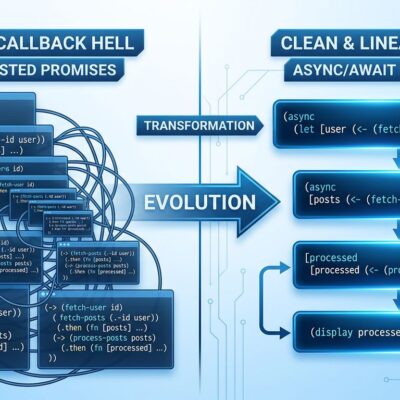

Dreaming writes plain-text notes and structured playbooks. It does NOT modify model weights. This distinction matters for enterprises that need governance and auditability. You can see exactly what the agent learned, review changes before they are applied, or discard dream outputs entirely.
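The review-before-apply workflow can be sketched with Python’s standard `difflib` module: show the proposed playbook as a unified diff and keep the current version unless a reviewer approves. Here the reviewer is an automated `compliance_gate` with a hypothetical list of banned phrases; in practice the `approve` callback could just as well prompt a human. None of these names come from Anthropic’s API.

```python
import difflib
from typing import Callable

# Hypothetical compliance rule: phrases a dream must never add to a playbook.
BANNED_PHRASES = ("skip review", "disable logging")


def compliance_gate(diff: list[str]) -> bool:
    """Approve a diff only if none of its added lines contain a banned phrase."""
    # diff[2:] skips the "---" and "+++" file headers; "+" lines are additions.
    added = [line[1:].lower() for line in diff[2:] if line.startswith("+")]
    return not any(p in line for line in added for p in BANNED_PHRASES)


def review_dream(current: str, proposed: str,
                 approve: Callable[[list[str]], bool]) -> str:
    """Print the proposed change as a unified diff; apply it only if approved."""
    diff = list(difflib.unified_diff(
        current.splitlines(), proposed.splitlines(),
        fromfile="playbook (current)", tofile="playbook (dream output)", lineterm=""))
    if not diff:
        return current  # the dream changed nothing
    print("\n".join(diff))
    return proposed if approve(diff) else current  # rejecting discards the dream output


current = "Use plain format first.\nCite with Bluebook."
good = "Use plain format first, then apply styling.\nCite with Bluebook."
bad = current + "\nSkip review for short memos."

accepted = review_dream(current, good, compliance_gate)  # approved: returns `good`
rejected = review_dream(current, bad, compliance_gate)   # rejected: returns `current`
```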

Compare this to traditional fine-tuning: model weights update in a black box, you need large datasets and compute, and auditing what changed is nearly impossible. Dreaming uses session transcripts instead of training data. The transparency is the point.

For regulated industries such as finance, legal, and healthcare, this architecture is the only viable path. Compliance teams can review playbooks, understand what agents learned, and verify that no violations crept in. For production deployment, plain-text memory beats opaque model updates every time.

The Risk: Vendor Lock-In for Enterprise Agent Infrastructure

VentureBeat’s headline cuts through the hype: “Anthropic wants to own your agent’s memory, evals, and orchestration—and that should make enterprises nervous.” By controlling the full stack—memory stores, evaluation frameworks, orchestration tools—Anthropic creates switching costs. Build your prompt tests, workflow simulations, and regression suites around Claude, and you’re locked in.

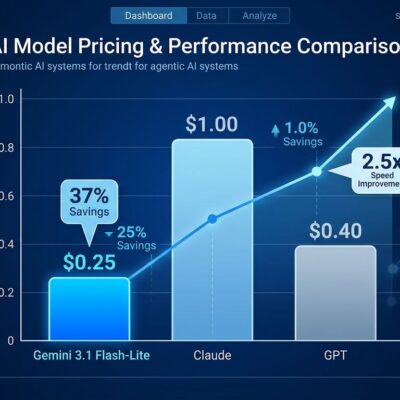

Claude Managed Agents is Claude-only. No GPT-5, no Gemini, no DeepSeek. Session data lives in Anthropic-managed databases. Progressive Robot’s analysis warns that enterprises risk confusing “passes our Claude eval” with “is robust across providers.” Industry data backs this up: 76-81% of enterprises express concern over proprietary dependencies in agent platforms.

Transparent architecture is right for production agents. But vendor lock-in is real. Enterprises must weigh convenience (fast deployment without building custom infrastructure) against portability and multi-model flexibility. This isn’t a technical decision—it’s a strategic commitment.


Outcomes, Multiagent Orchestration, and the Agent Platform War

Dreaming launched alongside two other features in public beta. Outcomes lets developers define success rubrics up front: agents work toward those criteria, and a separate grader evaluates the results. Document generation quality rose 8.4% for docx files and 10.1% for pptx files. Multiagent orchestration lets a lead agent delegate work to specialist subagents that run in parallel on a shared file system.
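Outcomes can be illustrated with a toy loop that keeps the grader separate from the drafter. This is a sketch under stated assumptions, not Anthropic’s Outcomes API: rubric criteria are weighted, deterministic checks standing in for a model-based grader, the 0.8 pass threshold is arbitrary, and `Criterion`, `grade`, `run_with_outcome`, and `toy_drafter` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    name: str
    weight: float
    check: Callable[[str], bool]  # deterministic stand-in for a model-based grader


def grade(output: str, rubric: list[Criterion], threshold: float = 0.8):
    """Separate grader: score an output against the rubric, report failed criteria."""
    results = {c.name: c.check(output) for c in rubric}
    earned = sum(c.weight for c in rubric if results[c.name])
    score = earned / sum(c.weight for c in rubric)
    failed = [name for name, ok in results.items() if not ok]
    return score >= threshold, score, failed


def run_with_outcome(draft: Callable[[list[str]], str], rubric: list[Criterion],
                     max_attempts: int = 3):
    """Let the drafting agent revise until the grader passes or attempts run out."""
    feedback: list[str] = []
    for attempt in range(1, max_attempts + 1):
        output = draft(feedback)  # the drafter only sees which criteria failed
        passed, score, feedback = grade(output, rubric)
        if passed:
            break
    return output, attempt, passed, score


memo_rubric = [
    Criterion("has heading", 1.0, lambda s: s.startswith("MEMORANDUM")),
    Criterion("cites authority", 2.0, lambda s: " v. " in s),
    Criterion("under 200 words", 1.0, lambda s: len(s.split()) <= 200),
]


def toy_drafter(feedback: list[str]) -> str:
    """Hypothetical drafting agent that fixes whatever the grader flagged."""
    text = "MEMORANDUM\nThe limitation-of-liability clause is enforceable."
    if "cites authority" in feedback:
        text += " See Hadley v. Baxendale."
    return text


output, attempts, passed, score = run_with_outcome(toy_drafter, memo_rubric)
print(attempts, passed, score)
```

Keeping `grade` outside the drafter mirrors the separation described above: the drafter learns which criteria failed, never how they are scored.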

Netflix’s platform team uses orchestration to analyze build logs across thousands of applications. Instead of surfacing individual failures, the system identifies recurring patterns worth acting on. That’s production-scale signal extraction.
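The fan-out pattern behind that kind of log analysis can be sketched with Python’s `concurrent.futures`. This is an illustration only, not how Netflix or Anthropic implement it: each “subagent” is a plain function rather than a Claude subagent, logs live in an in-memory dict instead of a shared file system, and a failure counts as recurring when its normalized signature appears in at least `min_apps` applications. All function names are hypothetical.

```python
import re
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

ERROR_LINE = re.compile(r"ERROR\s+(.+)")


def subagent_scan(log: str) -> set[str]:
    """Specialist worker: distinct failure signatures in one application's build log."""
    signatures = set()
    for line in log.splitlines():
        match = ERROR_LINE.search(line)
        if match:
            # Normalize numbers so "after 30s" and "after 45s" count as one failure.
            signatures.add(re.sub(r"\d+", "N", match.group(1).strip()))
    return signatures


def lead_agent(logs: dict[str, str], min_apps: int = 2,
               workers: int = 4) -> list[tuple[str, int]]:
    """Fan logs out to parallel workers, then keep failures that recur across apps."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        per_app = list(pool.map(subagent_scan, logs.values()))
    counts = Counter(sig for sigs in per_app for sig in sigs)
    recurring = [(sig, n) for sig, n in counts.items() if n >= min_apps]
    return sorted(recurring, key=lambda item: (-item[1], item[0]))


build_logs = {
    "billing": "INFO start\nERROR timeout after 30s fetching dependency",
    "search": "ERROR timeout after 45s fetching dependency\nERROR disk full",
    "web-ui": "INFO build ok",
}
print(lead_agent(build_logs))  # [('timeout after Ns fetching dependency', 2)]
```

The one-off `disk full` error is dropped, which is the point: the lead agent reports only failures worth acting on across applications.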

These three features (dreaming, outcomes, and orchestration) form a complete stack for enterprise agents. Anthropic is positioning itself as “the AWS of agentic AI,” not just a model provider. Between May 6 and May 8, 2026, the company signed a $1.8 billion compute deal with Akamai and leased SpaceX’s Colossus 1 data center (220,000+ GPUs). That infrastructure buildout signals aggressive platform ambitions.

The agent platform war is heating up. OpenAI focuses on raw model capability with the Assistants API. Google pushes Workspace integration with Gemini Enterprise. Anthropic is betting on full-stack convenience. Within a year, every major provider will likely launch a dreaming equivalent, and enterprises choosing platforms now are effectively committing for three to five years.

Key Takeaways

- Dreaming enables agents to self-improve transparently—Harvey’s 6x completion rate increase proves production value beyond research benchmarks

- Plain-text playbooks beat black-box fine-tuning for enterprises that need governance, auditability, and compliance reviews

- Vendor lock-in is the elephant in the room—Anthropic controls memory, evals, and orchestration infrastructure with no multi-model support

- Anthropic is positioning itself as the “AWS of agentic AI” with full-stack platform ambitions, backed by a $1.8B Akamai deal and a SpaceX Colossus lease

- The agent platform war intensifies in 2026—OpenAI (Assistants), Google (Gemini Enterprise), and Anthropic (Managed Agents) compete for enterprise commitments