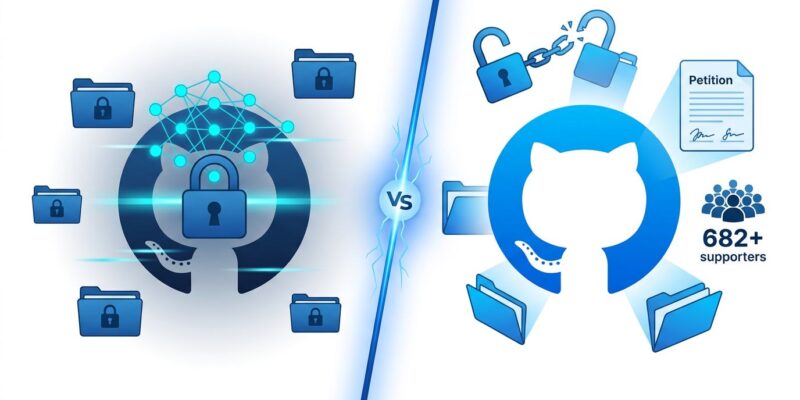

On May 5, NHS England ordered all technology leaders to switch hundreds of public GitHub repositories to private by May 11. The six-day deadline accompanies a reversal of years of open source commitment. The reason: Anthropic’s unreleased Mythos AI model can scan code at scale and find vulnerabilities that survived decades of human security review, including a 27-year-old bug in OpenBSD. But critics, including former NHS technology head Terence Eden and the at least 682 signatories of an open letter, call this “security theater” that won’t stop real threats like phishing and supply chain attacks.

What Mythos Can Actually Do

Anthropic’s Claude Mythos Preview represents a genuine leap in automated vulnerability discovery. In testing, it found thousands of zero-day vulnerabilities across every major operating system and web browser. It developed working exploits on the first attempt 83% of the time. Most impressively, it uncovered a 27-year-old vulnerability in OpenBSD—an operating system famous for security hardening.

The UK AI Safety Institute independently validated that these capabilities exceed what forecasts of AI development had anticipated. Mythos can even reverse engineer stripped binaries back to plausible source code. This isn’t hype: it’s validated, frightening, and real. The model is part of Anthropic’s Project Glasswing and isn’t publicly available precisely because its capabilities are so advanced.

Why Critics Call This Security Theater

Terence Eden, former head of open technology at NHSX, argues that closing repositories is “not a meaningful defence.” His reasoning is straightforward: the code was already public and was ingested by AI training systems years ago. “If Mythos really is the ultimate hacker, hiding the code now does nothing. It has likely already retained copies of the repositories,” Eden writes on his blog.

Eden also contends that the NHS should focus on actual threats (phishing, supply chain vulnerabilities, and weak password practices) rather than hiding code that has already been archived and scraped. He points to precedent: the COVID Contact Tracing app was fully open sourced and used by millions, with zero security incidents attributed to its being open source. Most NHS repositories, moreover, contain documentation, UI components, and internal tools, not sensitive infrastructure. An open letter opposing the decision has grown from 74 signatures to at least 682, including former UK Health Secretary Matt Hancock, who called it a “huge mistake.”

The Dramatic Policy Reversal

From 2021 to 2025, the NHS championed open source with clear commitments. The NHS Data Strategy stated: “Public services are built with public money, so unless there’s a good reason not to, the code should be made available.” NHS Service Standard Point 12 required making new source code open. The COVID app itself was fully open sourced.

However, in December 2025, the NHS quietly removed its open source policy pages, a red flag that went largely unnoticed. Now the policy flips 180 degrees: “All source code repositories must be private by default” with public access only under “explicit and exceptional need” approved by the Engineering Board. The timeline is just as jarring: a May 5 announcement with a May 11 deadline. Six days. No public consultation, no gradual transition, just a leaked internal memo.

Why This Sets a Dangerous Precedent

If the NHS succeeds with this approach, finance, defense, and other critical infrastructure sectors will likely follow, citing the same AI security concerns. That could end the “public money = public code” principle for sensitive sectors. Security experts are divided: Saif Abed, a cybersecurity advisor, calls it “a sensible temporary step considering the rapidly changing threat landscape,” while critics argue it erodes public accountability.

The irony is hard to miss: Mythos can reverse engineer closed-source binaries, which makes obscurity ineffective. A smarter path exists: use AI for defense. Anthropic’s Claude Code Security tool, using a less advanced model than Mythos, found over 500 vulnerabilities in production open-source codebases that had existed for decades. Fight AI with AI. Keep code open, scan it aggressively, and fix bugs faster than attackers can exploit them.

The real question isn’t whether AI can find bugs; it demonstrably can. The question is whether hiding code is the right response when AI can analyze closed-source systems just as effectively. The NHS is right to worry about AI security threats, but it is wrong to think closing repos solves the problem. The code is already out there, already scraped, already trained on. This is security theater.

Key Takeaways

- Mythos AI is real: Found 27-year-old OpenBSD bug, 83% exploit success rate—validated by UK AI Safety Institute

- Hiding code doesn’t help: Code already public, scraped, and archived—closing repos now provides zero additional protection

- Wrong threat focus: Real NHS risks are phishing, supply chain attacks, and weak passwords—not subtle code vulnerabilities

- Smarter path exists: Use AI-powered security tools for defense instead of obscurity—fight AI with AI

- Dangerous precedent: If the NHS succeeds, finance and defense sectors will likely follow, ending the “public money = public code” principle