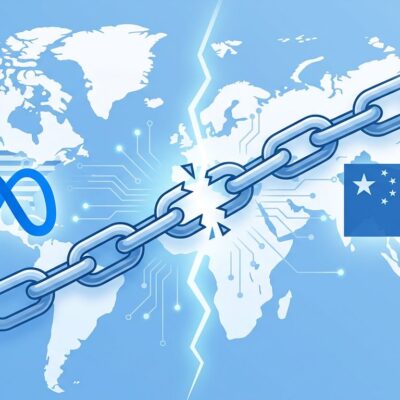

The Pentagon awarded classified AI contracts to seven tech giants on May 1: OpenAI, Google, Microsoft, Amazon, Nvidia, SpaceX, and Oracle. Missing: Anthropic, blacklisted as a “supply chain risk.” Yet Pentagon CTO Emil Michael admits the Department of Defense desperately needs Anthropic’s Mythos AI model for cybersecurity. Anthropic had refused Pentagon terms permitting fully autonomous weapons and mass surveillance, while every competitor signed without those restrictions.

What “Lawful Use” Actually Means

The Pentagon demanded “all lawful purposes” agreements. Anthropic refused, drawing red lines against fully autonomous lethal weapons and domestic mass surveillance. The seven competitors accepted the terms without such limits.

The contract terms also require companies to remove model safeguards if those safeguards prevent military use. Google’s contract explicitly requires it to disable technical limitations at the government’s request.

Here’s the problem with “lawful.” After 9/11, the Patriot Act gave mass surveillance legal cover. Drone strikes in Pakistan were deemed lawful under executive-branch interpretation. “Lawful” is a moving target shaped by lawyers, not ethics. The Pentagon’s standard, “not explicitly illegal,” is a low bar for AI making life-or-death decisions.

The Mythos Paradox

Anthropic received the “supply chain risk” label, previously reserved for companies tied to foreign adversaries, such as China’s Huawei. The Defense Secretary barred defense contractors from working with Anthropic.

Yet Pentagon CTO Emil Michael calls Mythos a “separate national security moment.” Mythos, Anthropic’s AI model for finding cybersecurity vulnerabilities, is exactly what the Pentagon needs to harden its classified networks. Anthropic is therefore simultaneously too dangerous to work with and essential to national security.

On April 17, White House Chief of Staff Susie Wiles met with Anthropic CEO Dario Amodei about Mythos access. When asked about the meeting, Trump responded: “Who?”

March: blacklisted. April: White House begs for Mythos. May: still blacklisted, but Pentagon admits needing the tech. This is punishment as negotiation leverage.

The Industry Split

Nine hundred fifty Google employees petitioned the company to refuse the Pentagon’s terms. Thirty OpenAI and Google DeepMind employees, including Google Chief Scientist Jeff Dean, filed an amicus brief supporting Anthropic. Both companies signed anyway. Employee power has waned since 2018, when roughly 4,000 Google employees signed a petition that pushed the company to drop Project Maven.

Dario Amodei called OpenAI’s approach “safety theater” and Sam Altman’s statements “straight up lies.” Altman countered that it’s “bad for society when companies abandon democratic norms because they dislike who’s in power.” The implication: Anthropic is partisan, not principled.

Two narratives emerge: either Anthropic takes an ethical stand even when it’s costly, or it is virtue signaling while competitors collect billions.

Did Anthropic Win by Losing?

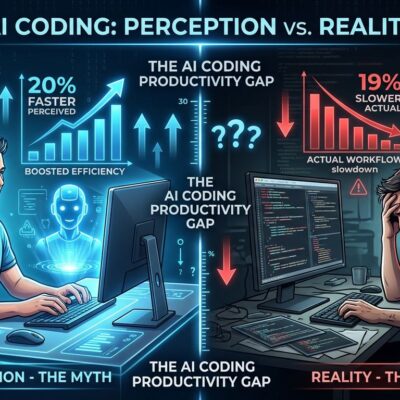

Anthropic lost billions in contracts and is fighting an expensive lawsuit. Meanwhile, competitors deploy on classified networks and train on government data Anthropic can’t access.

However, Anthropic gained brand differentiation as the only major AI lab refusing to enable autonomous weapons and mass surveillance. If these systems contribute to a disaster, Anthropic won’t be complicit. OpenAI, Google, and Microsoft will be.

The Mythos paradox reveals leverage. The Pentagon blacklisted Anthropic yet desperately needs its cybersecurity model. Anthropic held firm, and the government is negotiating anyway. Trump said a deal is “possible.”

The bet: Is “ethical AI company” branding worth more than Pentagon contracts? For enterprises wary of surveillance capitalism, maybe. For revenue-focused investors, maybe not.

What Happens Next

Anthropic’s lawsuit continues after the company won in district court in March and lost on appeal in April. Seven competitors are cleared to deploy on classified networks. Notably, Reflection AI, a two-year-old startup with no public model, won a contract just months after raising two billion dollars.

Anthropic faces a choice: cave to win contracts, or hold its position while competitors monetize military use. The April meeting suggests Trump wants a compromise. What would Anthropic accept? Mythos access without Claude deployment? A ban on autonomous weapons but not on surveillance?

The Pentagon will use competitors’ AI for whatever the law permits. Anthropic’s refusal didn’t stop development. It only means someone else’s models do the work instead.

The industry is now split between companies that work with the government on any lawful application and those that impose ethical restrictions. Developers choosing between Claude, GPT, and Gemini now have a third axis beyond capability and cost: which company’s ethics align with theirs. Anthropic is betting that this matters.