Uber exhausted its $3.4 billion AI budget in four months. Meta ranked 85,000 employees on a leaderboard by token consumption, crowning a “Token Legend” who burned 281 billion tokens in 30 days. Engineering teams across the industry are spending billions on AI coding tools while code churn explodes 861% and developers achieve 2x output at 10x cost. This is tokenmaxxing—the 2026 version of measuring developer productivity by lines of code.

The software industry rejected lines of code (LOC) as a productivity metric 40 years ago because it measured input instead of output. Tokenmaxxing makes the same mistake, and it’s costing companies billions while creating a technical debt crisis.

Tokenmaxxing: The New Lines of Code

Tokenmaxxing is the practice of treating AI token consumption as a productivity proxy. More tokens burned equals more productive, or so the thinking goes. Companies gamify this with leaderboards, badges, and performance metrics tied to AI tool adoption.

Meta’s “Claudeonomics” dashboard tracked token usage across 85,000 employees, awarding titles like “Token Legend” and “Session Immortal” to top consumers. The company burned through 60 trillion tokens in 30 days—an estimated $9 billion—with leadership declaring “AI-driven impact” a core expectation for 2026. The leaderboard was shut down two days after it leaked to the press.

Uber’s crisis is even starker. The company rolled out Claude Code in December 2025 and watched usage double by February. By April 15, CTO Praveen Neppalli Naga revealed the company had exhausted its entire annual AI budget—$3.4 billion gone in four months. Per-engineer costs hit $500 to $2,000 monthly as 95% of developers adopted AI tools and 70% of committed code became AI-generated.

This is perverse incentive design at scale. Developers game the system by running idle AI agents, generating code outside project scope, and avoiding refactoring that would reduce LOC and make them look “less productive.” Token consumption becomes the target, and working code becomes secondary.

The Numbers Don’t Lie: 10x Cost for 2x Output

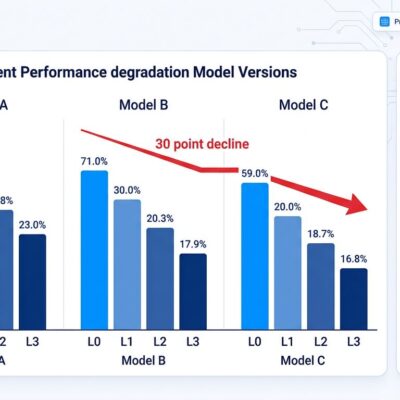

Research from GitClear, Faros AI, and Jellyfish demolishes tokenmaxxing’s productivity claims. GitClear found AI-assisted developers generate 9.4x higher code churn than non-AI developers. Faros AI measured an 861% churn increase in high AI adoption environments. Jellyfish discovered engineers with 10x token budgets achieve only 2x throughput, which works out to five times the cost per unit of sustained output.
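These figures mix multiples ("10x", "2x") with percentage increases ("861%"), which makes them easy to misread. The arithmetic can be checked with a rough sketch like the one below. The function names are illustrative and do not come from any of the cited reports.

```python
def cost_per_unit(budget_multiple: float, throughput_multiple: float) -> float:
    """Cost per unit of output relative to a baseline engineer (baseline = 1.0)."""
    if throughput_multiple <= 0:
        raise ValueError("throughput_multiple must be positive")
    return budget_multiple / throughput_multiple


def increase_to_multiple(percent_increase: float) -> float:
    """Convert a percentage increase into a multiple of the baseline."""
    return 1 + percent_increase / 100


# Jellyfish figure: 10x the token budget buys 2x the throughput.
print(cost_per_unit(10, 2))  # 5.0

# Faros AI figure: an 861% increase is roughly 9.61 times the baseline,
# not 8.61 times, because the baseline itself counts as 1x.
print(round(increase_to_multiple(861), 2))  # 9.61
```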

The problem is code acceptance rates. Engineering managers see 80-90% acceptance at merge time and celebrate productivity gains. However, they miss the churn that happens in weeks two through four. Real acceptance rates—code that survives revision cycles—drop to 10-30%. Companies are paying for output that gets thrown away.

Meanwhile, code duplication jumped 48% (from 8.3% to 12.3%), refactoring declined 60% (from 25% to 10% of changes), bugs per developer increased 54%, and median code review time exploded 5x. The AI-generated code compiles and passes tests, but lacks edge case handling and fails under production load. Multiple revision cycles become mandatory. Technical debt compounds at four times traditional rates.
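The percentages in this paragraph are relative changes, not percentage-point changes, and the two are easy to confuse. A small sketch shows the difference using the duplication and refactoring figures quoted above. The helper name `relative_change` is an assumption made for illustration.

```python
def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (0.48 means +48%)."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


# Duplication share of code: 8.3% -> 12.3%
duplication = relative_change(8.3, 12.3)
# Refactoring share of changes: 25% -> 10%
refactoring = relative_change(25, 10)

# Each line shows the relative change next to the percentage-point change.
print(f"duplication: {duplication:+.0%} relative, {12.3 - 8.3:+.1f} points")
print(f"refactoring: {refactoring:+.0%} relative, {10 - 25:+d} points")
```

Duplication rose by 4 percentage points, which is a 48% relative increase. Refactoring fell by 15 points, which is a 60% relative decline.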

We Already Rejected This Metric (In the 1980s)

The software industry fought this battle in the 1970s and 1980s. Lines of code was the dominant productivity metric until everyone realized it measured the wrong thing. LOC incentivized verbose code, discouraged refactoring, and rewarded copy-pasting over abstraction. Furthermore, a task accomplished in 100 lines of C++ might take 10 lines of Python—making LOC meaningless for cross-language comparisons.

The fundamental flaw: measuring input (code written) rather than output (working features). Goodhart’s Law applies perfectly: “When a measure becomes a target, it ceases to be a good measure.” If you tell developers their productivity will be judged by lines written, they’ll write more lines. Likewise, if you judge by tokens consumed, they’ll consume more tokens. Neither correlates with delivered business value.

By the mid-1980s, the industry had begun moving toward function points. DORA metrics (deployment frequency, lead time for changes, change failure rate, time to restore service) followed in the 2010s, and the SPACE framework (Satisfaction, Performance, Activity, Communication, Efficiency) arrived in 2021. These multi-dimensional approaches recognize that no single metric captures productivity. Tokenmaxxing ignores four decades of hard-won wisdom.

The Bill Is Coming Due

Tokenmaxxing drives three catastrophic outcomes. First, budget explosions. Uber’s $3.4 billion crisis demonstrates how variable costs spiral when token consumption becomes a target rather than a tool. GitHub is transitioning Copilot to usage-based billing in June 2026 specifically because token consumption varies so wildly that per-seat pricing became unsustainable. Companies that can’t forecast AI spending face mid-year tool cutbacks and CFO panic.

Second, technical debt accumulation. Maintenance costs for AI-generated code hit four times traditional levels by year two. Refactoring declined 60% as developers avoid deleting AI-generated code, since deletions look bad on productivity reports. Codebases bloat with duplicated patterns that AI copy-pasted instead of abstracting. Moreover, storage costs increase as repositories fill with code that never ships.

Third, developer burnout. When tokenmaxxing becomes a performance metric, engineers face pressure to maximize consumption regardless of need. The leaderboard mentality transforms AI tools from productivity aids into sources of stress. Salesforce is pushing back by developing outcome-based metrics tied to business results, explicitly rejecting token consumption as a vanity metric.

Measure Output, Not Input

The alternative frameworks already exist. DORA metrics focus on delivery speed and quality: deployment frequency, lead time for changes, time to restore service, and change failure rate. These measure outcomes—code that ships and stays shipped—rather than inputs.
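As a minimal sketch of how the four DORA metrics might be computed, assume a list of deployment records exported from CI/CD and incident tooling. The `Deployment` record, its field names, and `dora_summary` are hypothetical, and real definitions (for example, what counts as a failure) vary by team.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # caused an incident or rollback
    restored_at: Optional[datetime] = None  # service restored, if it failed


def hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def dora_summary(deployments: list[Deployment], period_days: int) -> dict:
    """Summarize the four DORA metrics over an observation period."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")
    failures = [d for d in deployments if d.failed]
    restores = [hours(d.deployed_at, d.restored_at)
                for d in failures if d.restored_at is not None]
    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(
            hours(d.committed_at, d.deployed_at) for d in deployments),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore_hours": median(restores) if restores else None,
    }


# Made-up sample week: four deployments, one of which failed.
week = [
    Deployment(datetime(2026, 1, 1, 9), datetime(2026, 1, 1, 13)),
    Deployment(datetime(2026, 1, 2, 9), datetime(2026, 1, 3, 9),
               failed=True, restored_at=datetime(2026, 1, 3, 11)),
    Deployment(datetime(2026, 1, 4, 10), datetime(2026, 1, 4, 18)),
    Deployment(datetime(2026, 1, 5, 10), datetime(2026, 1, 5, 22)),
]
summary = dora_summary(week, period_days=7)
print(summary)
```

None of these inputs involve token counts. Every figure is derived from what shipped and how it behaved afterward.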

The SPACE framework provides a holistic view across five dimensions: Satisfaction (developer wellbeing), Performance (outcomes and impact), Activity (work outputs), Communication (collaboration quality), and Efficiency (workflow smoothness). Nicole Forsgren, lead author of the SPACE framework, emphasizes the multidimensional nature of productivity: “there really is no one measure of productivity.”

Business outcome metrics deliver the clearest signal: features delivered per sprint, customer satisfaction scores, revenue impact of features, user adoption rates. These tie engineering work directly to business value. It also pays to track code survival beyond the initial merge: measure what percentage of merged code is still in place after two, four, and eight weeks. That’s your real productivity signal.
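Code survival can be sketched as follows, assuming you can export, for each merged line, the date it was merged and the date it was later removed or rewritten (for instance from blame history). The `MergedLine` record and `survival_rate` function are hypothetical names, and the sample data is made up purely to exercise the calculation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class MergedLine:
    merged_on: date
    removed_on: Optional[date] = None  # None means the line is still present


def survival_rate(lines: list[MergedLine], window_days: int,
                  as_of: date) -> Optional[float]:
    """Share of merged lines still in place `window_days` after merge.

    Lines merged too recently to have been observed for the full window
    are excluded. Returns None when no line is old enough to judge.
    """
    window = timedelta(days=window_days)
    eligible = [ln for ln in lines if as_of - ln.merged_on >= window]
    if not eligible:
        return None
    survived = sum(
        1 for ln in eligible
        if ln.removed_on is None or ln.removed_on - ln.merged_on >= window
    )
    return survived / len(eligible)


# Made-up sample: ten lines merged on the same day, removed at various times.
merged = date(2026, 1, 1)
sample = (
    [MergedLine(merged, merged + timedelta(days=7)) for _ in range(3)]
    + [MergedLine(merged, merged + timedelta(days=21)) for _ in range(2)]
    + [MergedLine(merged, merged + timedelta(days=42))]
    + [MergedLine(merged) for _ in range(4)]
)
today = date(2026, 3, 15)
for days in (14, 28, 56):
    print(days, survival_rate(sample, days, today))
```

In this sample, the merge-time acceptance rate is 100%, yet only 40% of the lines survive eight weeks. That gap is the one described above between acceptance at merge and real acceptance.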

Token consumption might be one input among many in a comprehensive productivity dashboard. However, it should never be the primary KPI. The industry learned this lesson with LOC. Time to stop repeating history.

Key Takeaways

- Tokenmaxxing measures input (tokens consumed) rather than output (working code), the same fundamental flaw that killed lines of code as a metric 40 years ago

- Research shows engineers with 10x token budgets achieve only 2x throughput, with real code acceptance rates (10-30%) far below initial merge rates (80-90%) due to revision churn

- Companies like Uber ($3.4B budget exhausted) and Meta ($9B in 30 days) demonstrate the budget crisis tokenmaxxing creates, with GitHub transitioning to usage-based billing to address the problem

- Technical debt from AI-generated code compounds at 4x traditional rates, with refactoring down 60%, code duplication up 48%, and maintenance costs exploding by year two

- Better alternatives exist: DORA metrics (deployment frequency, lead time, change failure rate), SPACE framework (multi-dimensional productivity), and business outcome metrics (features delivered, customer impact) measure what actually matters